The AMD Threadripper 2 CPU Review: The 24-Core 2970WX and 12-Core 2920X Tested

by Ian Cutress on October 29, 2018 9:00 AM EST

This year AMD launched its second generation high-end desktop Ryzen Threadripper processors. The benefits of the new parts include better performance, higher frequencies, and parts with up to 32 cores. We tested the first two processors back in August, the 32-core and the 16-core, and today AMD is launching the next two parts: the 24-core 2970WX and the 12-core 2920X. We have a full review ready for you to sink your teeth into.

Building out the HEDT Platform

When AMD first launched Threadripper in the summer of 2017, many considered it a breath of fresh air in the high-end desktop space. After several generations of +2 cores per year, with PCIe lane counts staying the same and pricing hitting $1721 for a 10-core part, here was a fully fledged 16-core processor for $999 with even more PCIe lanes. While it didn’t win medals for single-core performance, it was competitive in prosumer workloads and opened the floodgates to high core-count processors in the months that followed. Fast forward twelve months, and AMD doubled its core count with the Threadripper 2990WX, a second generation processor with 32 upgraded 12nm Zen+ cores inside, picking off some of the low-hanging fruit on performance.

While that first generation was AMD’s first crack at the HEDT market for a few years, it was really the stepping stone for the second generation that allows AMD to stretch its legs. In the first generation, users were offered three parts, with 8, 12, and 16 cores, each using two Zeppelin dies of eight cores apiece, cut down for the parts with fewer than 16 cores. For the second generation of Threadripper, the 8-core is dropped, but the stack is pushed higher at the top end, with AMD now offering new 24-core and 32-core parts.

| AMD SKUs | Cores/Threads | Base/Turbo (GHz) | L3 | DRAM 1DPC | PCIe | TDP | SRP |
|---|---|---|---|---|---|---|---|
| TR 2990WX | 32/64 | 3.0/4.2 | 64 MB | 4x2933 | 60 | 250 W | $1799 |
| TR 2970WX | 24/48 | 3.0/4.2 | 64 MB | 4x2933 | 60 | 250 W | $1299 |
| TR 2950X | 16/32 | 3.5/4.4 | 32 MB | 4x2933 | 60 | 180 W | $899 |
| TR 2920X | 12/24 | 3.5/4.3 | 32 MB | 4x2933 | 60 | 180 W | $649 |
| Ryzen 7 2700X | 8/16 | 3.7/4.3 | 16 MB | 2x2933 | 16 | 105 W | $329 |

AMD launched the first two processors, the 16-core 2950X and the 32-core 2990WX, back in August. We reviewed both thoroughly at the time, and the new features led to some interesting conclusions. Today AMD is lifting the embargo on the other two processors, the 12-core 2920X and the 24-core 2970WX, which should also be on shelves today.

Comparing what AMD brought to the table in 2017 to 2018 gives us the following:

| 2017 | Price | Price | 2018 |
|---|---|---|---|
| - | - | $1799 | TR 2990WX |
| - | - | $1299 | TR 2970WX |
| TR 1950X | $999 | $899 | TR 2950X |
| TR 1920X | $799 | $649 | TR 2920X |
| TR 1900X | $549 | - | - |

There is no direct replacement for the 1900X - in fact AMD never sampled it to reviewers, thus I kind of assume it didn't sell that well.

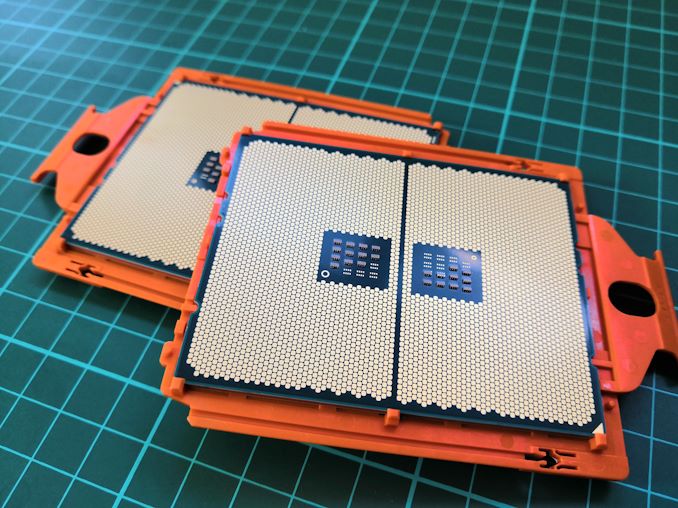

Constructing Threadripper 2

Rather than building up from Ryzen, AMD took its enterprise grade design in its EPYC platform and has filtered it down into the high-end desktop.

EPYC, with its large 4094-pin socket, was built on four of the eight-core Zeppelin dies, with each die offering two memory channels and 32 PCIe lanes, for a peak configuration of 32 cores, 64 threads, 128 PCIe lanes, and eight memory channels.

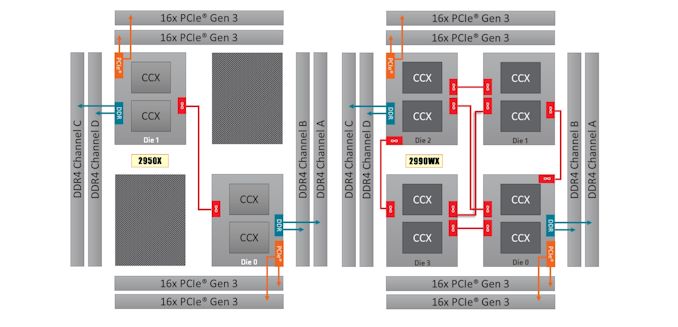

The first generation Threadripper took the same hardware and halved it: two active Zeppelin dies for 16 cores / 32 threads, quad channel memory, and 64 PCIe lanes (or 60 + chipset). For Threadripper 2, some of those EPYC features come back to the consumer market: there are no additional memory channels or PCIe lanes, but the two inactive dies are reactivated to give up to 32 cores again for the WX series. The X series doesn’t get that, but both series get generational improvements, such as Zen+ and 12nm.
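The die arithmetic above can be checked with a short sketch. The per-die figures (eight cores, two memory channels, 32 PCIe lanes) come from the text; the `ZeppelinDie` class, the `package_totals` helper, and the split between dies with active cores and dies whose memory and PCIe are wired out are illustrative assumptions, not anything AMD publishes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ZeppelinDie:
    """Per-die resources as quoted in the article."""
    cores: int = 8
    memory_channels: int = 2
    pcie_lanes: int = 32


def package_totals(active_dies, io_dies, smt=2, die=ZeppelinDie()):
    """Sum resources for a package.

    active_dies: dies with cores enabled.
    io_dies: dies whose memory channels and PCIe lanes reach the socket
             (a hypothetical way of modelling the layout).
    """
    cores = active_dies * die.cores
    return {
        "cores": cores,
        "threads": cores * smt,
        "memory_channels": io_dies * die.memory_channels,
        "pcie_lanes": io_dies * die.pcie_lanes,
    }


epyc = package_totals(active_dies=4, io_dies=4)
threadripper_1 = package_totals(active_dies=2, io_dies=2)
threadripper_2_wx = package_totals(active_dies=4, io_dies=2)

for name, totals in [("EPYC", epyc),
                     ("Threadripper 1", threadripper_1),
                     ("Threadripper 2 WX", threadripper_2_wx)]:
    print(name, totals)
```

Under these assumptions the WX parts match EPYC on cores and threads while keeping the memory channel and PCIe lane counts of the first generation Threadripper.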

The new Threadripper 2 platform takes advantage of AMD’s newest Zen+ microarchitecture which is good for +3% performance at the same clock speed, but also the 12nm manufacturing node, which improved frequencies for an overall 10% improvement. We found AMD’s claims to be accurate on Zen+ and 12nm in our Ryzen 2000-series review.

Due to the way TR2 is laid out, we now have one Zeppelin die connected to two memory channels, another Zeppelin die connected to the other two memory channels, and the two newly active dies not connected to any memory. To access memory, cores on those two dies have to perform an additional hop through a die that is connected. In memory bound testing, this can have obvious implications.

So when we tested the 32-core 2990WX and the 16-core 2950X, this is pretty much what we saw. The 2950X performed similarly to the 1950X, but with better per-core performance and higher frequencies. The 2990WX, however, was a mixed bag: for non-memory limited workloads it was a beast, ripping through benchmarks like no other. For applications that compete for memory, however, it regressed somewhat, sometimes coming in behind the 2950X. Ultimately our suggestion was that the 2990WX is a monster processor if you can use it; otherwise the 2950X was the smart purchase.

So with the new 2970WX and 2920X in this review, the 24-core and 12-core parts, AMD has taken the 2990WX and 2950X processors and disabled two cores per Zeppelin die, giving 6+6+6+6 and 6+6 configurations. This has some pros and cons: having fewer cores per die means that larger threaded workloads will get additional latency between core-to-core communications, but the plus side is that the program moves onto a second die sooner, allowing the power budget to rise faster to get a better frequency.
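To make the 6+6+6+6 trade-off concrete, here is a minimal sketch. It assumes one busy thread per core and a scheduler that fills one die before spilling onto the next; both are simplifications for illustration, and `core_layout` and `dies_spanned` are hypothetical helper names.

```python
FULL_DIE_CORES = 8  # cores on a full Zeppelin die, per the article


def core_layout(dies, disabled_per_die):
    """Active cores on each die after disabling some cores per die."""
    return [FULL_DIE_CORES - disabled_per_die] * dies


def dies_spanned(busy_cores, layout):
    """Count dies touched when cores are filled one die at a time (assumed policy)."""
    remaining = busy_cores
    used = 0
    for cores_on_die in layout:
        if remaining <= 0:
            break
        used += 1
        remaining -= cores_on_die
    return used


tr_2970wx = core_layout(dies=4, disabled_per_die=2)  # 6+6+6+6
tr_2990wx = core_layout(dies=4, disabled_per_die=0)  # 8+8+8+8

for busy in (6, 7, 8, 12, 13):
    print(busy, dies_spanned(busy, tr_2970wx), dies_spanned(busy, tr_2990wx))
```

With seven busy cores, the six-core-per-die layout already spans two dies while the eight-core-per-die layout still fits on one, which is the point at which both the extra cross-die latency and the extra power headroom described above would appear.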

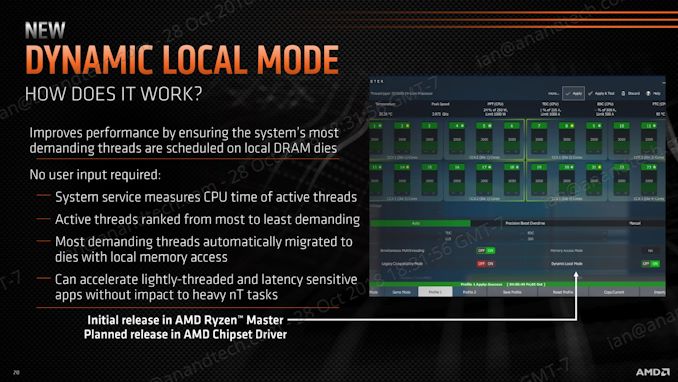

New Features: Dynamic Local Mode

For this second set of CPUs, AMD is also releasing a new mode for the 24-core 2970WX and the 32-core 2990WX called ‘Dynamic Local Mode’. This new mode will be selectable initially from the Ryzen Master software, but will eventually be made available through the chipset driver. The goal of this new mode is to improve performance.

When Threadripper first launched, it was the first mainstream single socket processor to have a non-uniform memory architecture. As each eight core Zeppelin die inside had direct access to two memory channels and extended access to the other two, it made the system unequal, and users had to decide between high bandwidth by enabling all four memory channels (default) or low latency by having each thread focus on the two memory channels closest to it. With Threadripper 2, especially with the four die variants, this problem takes another turn, as the two dies not connected to memory will always have to hop through another die in order to perform a memory access.

Dynamic Local Mode makes a higher-level adjustment to where programs are located on the chip. Through a system service, it measures the CPU time of active threads and ranks them from most demanding to least demanding, and then places the most demanding threads onto the Zeppelin dies with local memory access. The idea here is that by fixing single-threaded or lightly threaded programs to the primary silicon dies with the best performance, overall system performance will increase. For fully multithreaded workloads, it won’t make much difference.

AMD is claiming that it offers around 10% better performance in some games at 1080p, and up to 20% in specific SPECwpc workloads. We plan to test this for a future article; however, it will be more important when it becomes part of the chipset driver.
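The ranking-and-placement idea behind Dynamic Local Mode can be sketched in a few lines. This is not AMD's service: the `Core` and `ThreadSample` types, the choice of dies 0 and 1 as the memory-attached dies, and the one-thread-per-core placement are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Core:
    core_id: int
    die: int
    has_local_memory: bool


@dataclass(frozen=True)
class ThreadSample:
    thread_id: str
    cpu_time_ms: float


def build_topology(dies=4, cores_per_die=8, memory_dies=(0, 1)):
    """Four-die layout; which dies own memory channels is an assumed numbering."""
    return [
        Core(die * cores_per_die + i, die, die in memory_dies)
        for die in range(dies)
        for i in range(cores_per_die)
    ]


def place_threads(threads, cores):
    """Rank threads by CPU time and hand the busiest ones the memory-local cores.

    Threads beyond the number of cores are left unplaced in this sketch.
    """
    ranked = sorted(threads, key=lambda t: t.cpu_time_ms, reverse=True)
    preferred = sorted(cores, key=lambda c: (not c.has_local_memory, c.core_id))
    return {t.thread_id: c for t, c in zip(ranked, preferred)}


samples = [ThreadSample(f"t{i}", float(i)) for i in range(20)]
placement = place_threads(samples, build_topology())
for thread_id, core in placement.items():
    print(thread_id, "die", core.die, "local" if core.has_local_memory else "remote")
```

With 20 threads and 16 memory-local cores, only the four least demanding threads end up on the dies without memory. Once every core is busy there is nothing left to reorder, which is consistent with the mode making little difference for fully multithreaded workloads.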

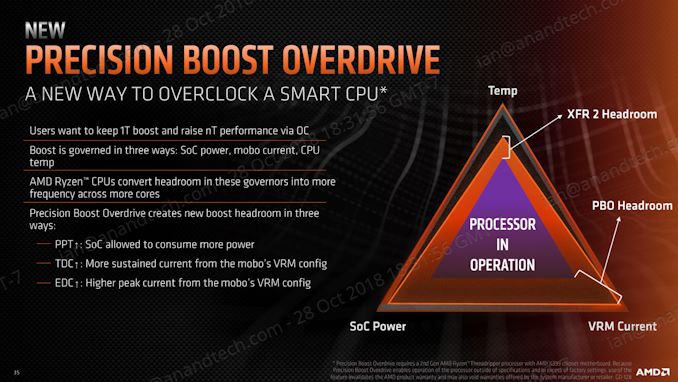

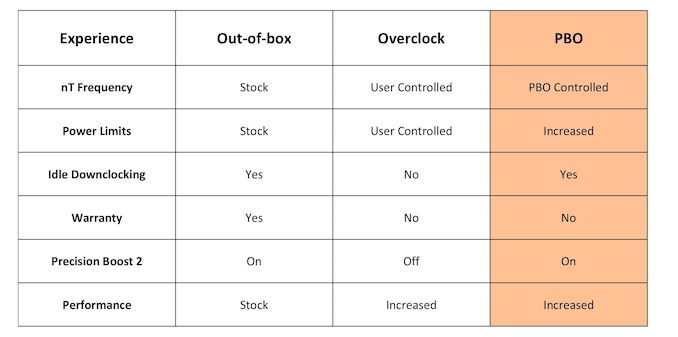

Old Features: Precision Boost Overdrive (PBO)

One feature not to forget is Precision Boost Overdrive.

Because AMD uses its Precision Boost 2 model for determining turbo frequency, it is not bound to the older ‘cores loaded = set frequency’ model, and instead attempts to boost as far as it can within power and current limits. Combined with the 25 MHz granularity of the frequency divider, it usually allows the CPU to make the best of the environment it is in.

What PBO does is increase the limits on power and current, still within safe temperature limits, in order to increase performance over a wide range of scenarios. This applies especially to heavily multithreaded scenarios, provided there is headroom in the motherboard power delivery and in the cooling. AMD claims a benefit of around 13% in tasks that can take advantage of it. PBO is enabled through the Ryzen Master software.

The three key areas are defined by AMD as follows:

- Package (CPU) Power, or PPT: Allowed socket power consumption permitted across the voltage rails supplying the socket

- Thermal Design Current, or TDC: The maximum current that can be delivered by the motherboard voltage regulator after warming to a steady-state temperature

- Electrical Design Current, or EDC: The maximum current that can be delivered by the motherboard voltage regulator in a peak/spike condition

By extending these limits, PBO gives PB2 more headroom, letting it push the system harder and further.

AMD also clarifies that PBO pushes the processor beyond its rated specifications and is an overclock, and thus any damage incurred will not be covered by warranty.
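As a rough illustration of how the three limits interact with 25 MHz boost steps, the toy model below climbs in 25 MHz increments until PPT, TDC, or EDC would be exceeded. Everything numeric here is invented: the load model in `estimate_draw`, the stock and raised limit values, and the frequency range are placeholders rather than AMD figures, and temperature is left out entirely.

```python
from dataclasses import dataclass

STEP_MHZ = 25  # frequency granularity quoted in the article


@dataclass(frozen=True)
class Limits:
    ppt_w: float  # package power limit (PPT)
    tdc_a: float  # sustained current limit (TDC)
    edc_a: float  # peak current limit (EDC)


def estimate_draw(freq_mhz, active_cores):
    """Hypothetical load model with made-up coefficients, not AMD's."""
    power_w = active_cores * 0.8 * (freq_mhz / 1000.0) ** 2
    sustained_a = power_w / 1.2
    peak_a = sustained_a * 1.4
    return power_w, sustained_a, peak_a


def highest_allowed_frequency(limits, active_cores, base_mhz, max_mhz):
    """Step up from base in 25 MHz increments until any limit would be exceeded."""
    freq = base_mhz
    while freq + STEP_MHZ <= max_mhz:
        power_w, sustained_a, peak_a = estimate_draw(freq + STEP_MHZ, active_cores)
        if (power_w > limits.ppt_w
                or sustained_a > limits.tdc_a
                or peak_a > limits.edc_a):
            break
        freq += STEP_MHZ
    return freq


stock = Limits(ppt_w=250, tdc_a=215, edc_a=300)   # placeholder values
raised = Limits(ppt_w=400, tdc_a=350, edc_a=500)  # placeholder "PBO" values

print(highest_allowed_frequency(stock, 24, base_mhz=3000, max_mhz=4200))
print(highest_allowed_frequency(raised, 24, base_mhz=3000, max_mhz=4200))
```

In this toy setup the stock limits stop the climb at the package power limit, while the raised limits let the same 24-core load reach the maximum frequency. It shows only the mechanism of extending the limits, and says nothing about the gains a real system would see.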

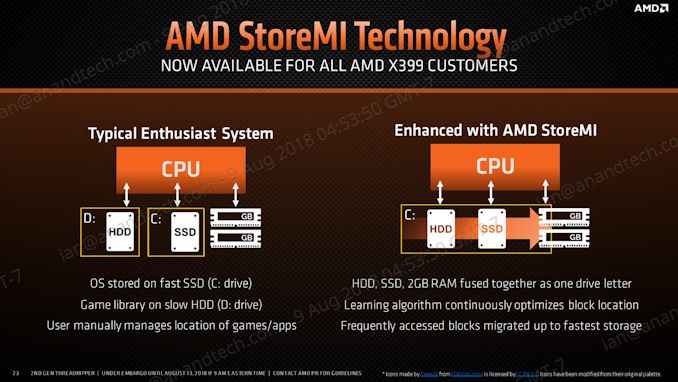

Old Features: StoreMI

AMD’s solution to caching technology is StoreMI, which allows users to combine a spinning rust HDD, up to a 256GB SSD, and up to 2GB of DRAM into a single unified storage space. The software deals with moving the data around to keep access times small, with the goal of increasing performance.

This is done after installing Windows, and can be disabled or adjusted at any time. One downside is if one drive fails, the whole chain is lost. However, AMD claims that in a best case scenario, StoreMI can improve loading times up to 90% over a large hard drive.

Motherboard Support

As promised by AMD, these new processors will fit straight into existing X399 motherboards with a BIOS update. Motherboards already updated to support the 2990WX and 2950X will also support the 24-core and 12-core parts.

Some users will express concern that some of the motherboards might not be suitable for the 250W TDP parts. That may be true for some of the cheaper motherboards when users are overclocking, but users investing in this platform should also be prepared to invest in good cooling and making sure all the power circuitry is also actively cooled. We have seen MSI release the X399 MEG Creation as a new motherboard for these processors, GIGABYTE now has the X399 Aorus Extreme, and ASUS has released a cooling pack for its ROG Zenith Extreme.

We’ve reviewed over half the X399 motherboards currently on the market. Feel free to read our reviews:

| X399 Reviews | | | |
|---|---|---|---|
| ASRock X399 Taichi | MSI MEG X399 Creation | Threadripper 2 2990WX Review | Best CPUs |
| ASUS X399 ROG Zenith Extreme | ASRock X399 Pro Gaming | GIGABYTE X399 Designare EX | X399 Overview |

For our testing, we’ve been using the ASUS X399 ROG Zenith Extreme, and it hasn’t missed a beat. I am a sucker for good budget boards, and that MSI X399 SLI Plus looks pretty handy too.

Competition and Market

Combating AMD’s march on the high-end desktop market is the blue team. Intel’s Skylake-X is still holding station, topping out with the Core i9-7980XE down to the Core i7-7800X. For this review we’ve actually got every single one of that family tested for comparison.

Intel is set to launch an update to Skylake-X sometime in Q4, as was previously announced, with updates from the i7-9800X up to the i9-9980XE. On paper the major differences are the increased frequencies, increased L3 victim cache sizes, and increased power, as Intel is using HCC silicon across the board. We’ll test those when we get them in, but it will still be AMD’s 32-core vs Intel’s 18-core at the high end.

If you want to be insane, Intel will also be launching an overclockable 28-core Xeon W-3175X this year, although we expect it to be very expensive.

Pages In This Review

- Analysis and Competition

- Power Consumption and Uncore Update: Every TR2 CPU Re-tested

- Test Bed and Setup

- 2018 and 2019 Benchmark Suite: Spectre and Meltdown Hardened

- HEDT Performance: System Tests

- HEDT Performance: Rendering Tests

- HEDT Performance: Office Tests

- HEDT Performance: Encoding Tests

- HEDT Performance: Web and Legacy Tests

- Gaming: World of Tanks enCore

- Gaming: Final Fantasy XV

- Gaming: Shadow of War

- Gaming: Civilization 6

- Gaming: Ashes Classic

- Gaming: Strange Brigade

- Gaming: Grand Theft Auto V

- Gaming: Far Cry 5

- Gaming: Shadow of the Tomb Raider

- Gaming: F1 2018

- Conclusions and Final Words

69 Comments


snowranger13 - Monday, October 29, 2018

On the AMD SKUs slide you show Ryzen 7 2700X has 16 PCI-E lanes. It actually has 20 (16 to PCI-E slots + 4 to 1x M.2)

Ian Cutress - Monday, October 29, 2018

Only 16 for graphics use. We've had this discussion many times before. Technically the silicon has 32.

Nioktefe - Monday, October 29, 2018

Many motherboards can use that 4 additionnal lanes as classic pci-e

https://www.asrock.com/mb/AMD/B450%20Pro4/index.as...

mapesdhs - Monday, October 29, 2018

Sure, but not for SLI. It's best for clarity's sake to exclude chipset PCIe in the lane count, otherwise we'll have no end of PR spin madness.

Ratman6161 - Monday, October 29, 2018

Ummm...there are lots of uses for more PCIe besides SLI ! Remember that while people do play games on these platforms, it would not make any sense to buy one of these for the purpose of playing games. You buy it for work and if it happens to game OK then great.

TheinsanegamerN - Tuesday, October 30, 2018

Is it guaranteed to be wired up to a physical slot?

No?

then it is optional, and advertising it as being guaranteed available for expansion would be false advertising.

TechnicallyLogic - Thursday, February 28, 2019

By that logic, Intel CPUs have no PCIE slots, as there are LGA 1151 Mini STX motherboards with no x16 slot at all. I think a good compromise would be to list the CPU as having 16+4 PCIE slots.

Yorgos - Friday, November 2, 2018

for clarity's sake they should report the 9900k at 250Watt TDP.

selective clarity is purch media's approach, though.

2700x has 20 pcie lanes, period. if some motherboard manufacturers use it for nvme or as an extra x4 pcie slot, it's not up to debate for a "journalist" to include it or exclude it, it's fucking there.

unless the money are good ofc... everyone has their price.

TheGiantRat - Monday, October 29, 2018

Technically the silicon of each die has total of 128 PCI-E lanes. Each die on Ryzen Threadripper and Epyc has 64 lanes for external buses and 64 lanes for IF. Therefore, the total is 128 lanes. They just have it limited to 20 lanes for consumer grade CPUs.

atragorn - Monday, October 29, 2018

Why are the epyc scores so low across the board? I dont expect it to game well but it was at the bottom or close to it for everything it seemed