A Timely Discovery: Examining Our AMD 2nd Gen Ryzen Results

by Ian Cutress & Ryan Smith on April 25, 2018 11:15 AM EST

A Timely Re-Discovery

Most users have no need to worry about the internals of a computer: point, click, run, play games, and spend money if they want something faster. However, one of the important features in a system relates to how it measures time. A modern system relies on a series of both hardware and software timers, both internal and external, in order to maintain a linear relation between requests, commands, execution, and interrupts.

The timers have different uses, such as following instructions, maintaining video coherency, tracking real time, or managing the flow of data. Timers can (but do not always) use external references to ensure their own consistency, because damage, unexpected behavior, and even thermal environments can cause timers to lose their accuracy.

Timers are highly relevant for benchmarking. Most benchmark results are a measure of work performed per unit time, or in a given time. This means that both the numerator and the denominator need to be accurate: the system has to be able to measure how much work has been processed, and how long it took to do it. Ideally there is no uncertainty in either of those values, giving an accurate result.

With the advent of Windows 8, between Intel and Microsoft, the way that timers were used in the OS was changed. Windows 8 had the mantra that it had to ‘support all devices’, all the way from the high-cost systems down to the embedded platforms. Most of these platforms use what is called an RTC, a ‘real time clock’, to maintain the real-world time; this is typically a hardware circuit found in almost all devices that need to keep track of time and the processing of data. However, compared to previous versions of Windows, Microsoft changed the way the OS uses timers so that it was compatible with systems that did not have a hardware-based RTC, such as low-cost and embedded devices. The RTC was an extra cost that could be saved if the software was built to do so.

Ultimately, any benchmark software in play has to probe the OS for the current time during the benchmark so that it can give an accurate result at the end. However, the concept of time, without an external verifying source, is an arbitrarily defined constant: without external synchronization, there is no guarantee that ‘one second’ on the system equals ‘one second’ in the real world. For the most part, all of us rely on the reporting from the OS and the hardware that this equality is true, and there are a lot of hardware/software engineers ensuring that this is the case.
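As a minimal sketch of that dependency, the Python snippet below times a stand-in workload with the clock the OS reports and derives a score as work per unit time. The `busy_work` and `run_benchmark` names are hypothetical and exist only for illustration; `time.perf_counter_ns` and `time.get_clock_info` are standard library calls, and which underlying timer backs them is decided by the OS, not by this code.

```python
import time


def busy_work(iterations: int) -> int:
    """Stand-in workload (hypothetical): a sum of squares."""
    total = 0
    for i in range(iterations):
        total += i * i
    return total


def run_benchmark(iterations: int) -> dict:
    """Time the workload with the OS-reported clock and compute work per second."""
    start_ns = time.perf_counter_ns()
    busy_work(iterations)
    elapsed_ns = time.perf_counter_ns() - start_ns
    # Guard against a zero reading on a coarse clock.
    elapsed_s = max(elapsed_ns, 1) / 1e9
    return {
        "iterations": iterations,
        "elapsed_s": elapsed_s,
        "score": iterations / elapsed_s,  # work (numerator) per unit time (denominator)
    }


if __name__ == "__main__":
    info = time.get_clock_info("perf_counter")
    print("clock implementation:", info.implementation)
    print("reported resolution (s):", info.resolution)
    print(run_benchmark(200_000))
```

The score is only as trustworthy as `elapsed_s`: if the clock behind `perf_counter_ns` runs fast or slow relative to real time, the score moves with it even though the work done is identical.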

However, back in 2013, it was discovered that it was fairly easy to 'distort time' on a Windows 8 machine. After loading into the operating system, any adjustment in the base frequency of the processor, which is usually 100 MHz, can cause the ‘system time’ to desynchronise with ‘real time’. This was a serious issue in the extreme overclocking community, where world records require the best system tuning: when comparing two systems at the same frequency but with different base clock adjustments, up to a 7% difference in results was observed when there should have been a sub-1% difference. This was down to how Windows was managing its timers, and was observed on most modern systems.
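To put rough numbers on this, here is a deliberately simplified model, not a description of how Windows actually derives time. It assumes the tick source scales with the real base clock while the OS keeps converting ticks using the nominal 100 MHz figure. The function names and the example frequencies are hypothetical.

```python
NOMINAL_BCLK_MHZ = 100.0  # usual base frequency quoted in the text


def reported_seconds(real_seconds: float, actual_bclk_mhz: float,
                     assumed_bclk_mhz: float = NOMINAL_BCLK_MHZ) -> float:
    """Time the OS would report under this simplified model.

    Ticks accumulate at the actual base clock, but are converted to
    seconds as if the base clock were still the assumed (nominal) value.
    """
    return real_seconds * actual_bclk_mhz / assumed_bclk_mhz


def score_error_percent(actual_bclk_mhz: float,
                        assumed_bclk_mhz: float = NOMINAL_BCLK_MHZ) -> float:
    """Percentage by which a work-per-second score is over- or under-stated."""
    return (assumed_bclk_mhz / actual_bclk_mhz - 1.0) * 100.0


if __name__ == "__main__":
    for bclk in (95.0, 100.0, 105.0):
        print(f"BCLK {bclk:.1f} MHz: 10 s of real time is reported as "
              f"{reported_seconds(10.0, bclk):.2f} s, "
              f"score error {score_error_percent(bclk):+.2f}%")
```

Under these assumptions, lowering the base clock after boot makes the system believe less time has passed, which inflates any score expressed as work per second; raising it does the opposite.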

For home users, most would suspect that this is not an issue. Most users tend not to adjust the base frequencies of their systems manually. For the most part that is true. However, as shown in some of our motherboard testing over the years, frequency response due to default BIOS settings can provide an observable clock drift around a specified value, something which can be exacerbated by the thermal performance. Having a system with observable clock drift, and subsequent timing drift, is not a good thing. It relies on the accuracy and quality of the motherboard components, as well as the state of the firmware. This issue has formally been classified as ‘RTC Bias’.

The extreme overclocking community, after analysing the issue, found a solution: forcing the use of the High Precision Event Timer, known as HPET, found in the chipset. Some of our readers will have heard of HPET before; however, our analysis shows the topic is more interesting than it first appears.

Why A PC Has Multiple Timers

Aside from the RTC, a modern system makes use of many timers. All modern x86 processors have a Time Stamp Counter (TSC), for example, that counts the number of cycles from a given core, which was seen back in the day as a high-resolution, low-overhead way to get CPU timing information. There is also the Query Performance Counter (QPC), a Windows implementation that relies on processor performance metrics to get a higher-resolution version of the TSC, and which was developed with the advent of multi-core systems where the TSC was not applicable. There is also a timer function provided by the Advanced Configuration and Power Interface (ACPI), which is typically used for power management (which means turbo related functionality). Legacy timing methodologies, such as the Programmable Interval Timer (PIT), are also in use on modern systems. Along with the High Precision Event Timer (HPET), depending on the system in play, these timers will run at different frequencies.

The timers will be used for different parts of the system as described above. Generally, the high performance timers are the ones used for work that is more time sensitive, such as video streaming and playback. HPET, for example, was previously referred to by its old name, the Multimedia Timer. HPET is also the preferred timer for a number of monitoring and overclocking tools, which becomes important in a bit.

With the HPET running at a minimum of 10 MHz as per the specification, any code that requires it is likely to be more in sync with the real-world time (the ‘one-second in the machine’ actually equals ‘one-second in reality’) than code using any other timer.
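The link between a timer's frequency and its granularity is simple arithmetic, sketched below in Python. Only the 10 MHz HPET floor comes from the text; the ACPI and PIT figures are commonly cited nominal values added here for comparison, and the function names are hypothetical.

```python
def tick_period_ns(frequency_hz: float) -> float:
    """Length of one timer tick in nanoseconds."""
    return 1e9 / frequency_hz


def ticks_to_seconds(ticks: int, frequency_hz: float) -> float:
    """Convert a raw tick count into seconds, given the timer frequency."""
    return ticks / frequency_hz


# Frequencies in Hz. The HPET entry is the specification minimum from the text;
# the other two are commonly cited nominal values, not figures from this article.
EXAMPLE_TIMERS = {
    "HPET (minimum)": 10_000_000,
    "ACPI PM timer": 3_579_545,
    "PIT": 1_193_182,
}

if __name__ == "__main__":
    for name, hz in EXAMPLE_TIMERS.items():
        print(f"{name}: one tick is about {tick_period_ns(hz):.1f} ns")
```

A higher frequency means a shorter tick, so a timestamp taken from that timer can land closer to the moment the event really happened.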

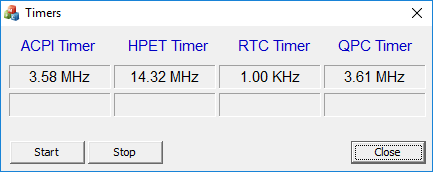

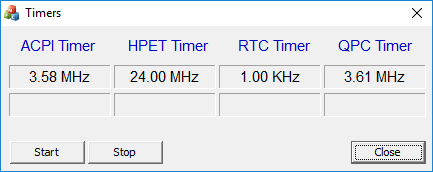

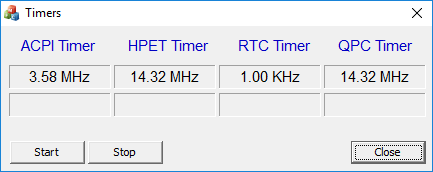

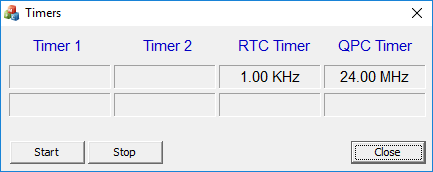

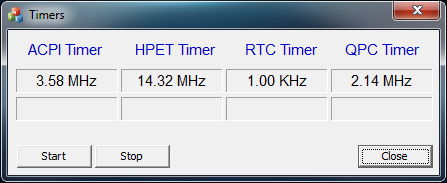

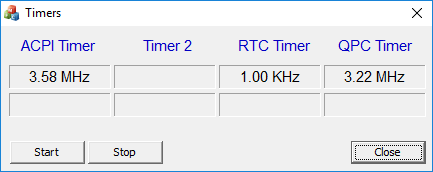

In a standard Windows installation, the operating system has access to all the timers available. The software used above is a custom tool developed to show if a system has any of those four timers (but the system can have more). For the most part, depending on the software instructions in play, the operating system will determine which timer is to be used – from a software perspective, it is fundamentally difficult to determine which timers will be available, so the software is often timer agnostic. There is not much of a way to force an algorithm to use one timer or another without invoking specific hardware or instructions that rely on a given timer, although the timers can be probed in software like the tool above.

HPET is slightly different, in that it can be forced to be the only timer. This is a two stage process:

The first stage is that it needs to be enabled in the BIOS. Depending on the motherboard and the chipset, there may or may not be an option for this. The options are usually for enable/disable, however this is not a simple on/off switch. When disabled, HPET is truly disabled. However, when enabled, this only means that the HPET is added to the pool of potential timers that the OS can use.

The second stage is in the operating system. In order to force HPET as the only timer to be used for the OS, it has to be explicitly mentioned in the system Boot Configuration Data (BCD). In standard operation, HPET is not in the BCD, so it remains in the pool of timers for the OS to use. However, for software to guarantee that the HPET is the only timer running, the software will typically request to make a change and make an accompanying system reboot to ensure the software works as planned. Ever wondered why some overclocking software requests a reboot *before* starting the overclock? One of the reasons is sometimes to force HPET to be enabled.

This leads to four potential configuration implementations:

- BIOS enabled, OS default: HPET is in the list of potential timers

- BIOS enabled, OS forced: HPET is used in all situations

- BIOS disabled, OS default: HPET is not available

- BIOS disabled, OS forced: HPET is not available
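The four combinations above reduce to a small decision function. The Python sketch below only restates the list; `HpetState` and `hpet_state` are hypothetical names, and nothing here queries the BIOS or the Boot Configuration Data.

```python
from enum import Enum


class HpetState(Enum):
    UNAVAILABLE = "HPET is not available"
    IN_POOL = "HPET is in the list of potential timers"
    FORCED = "HPET is used in all situations"


def hpet_state(bios_enabled: bool, os_forced: bool) -> HpetState:
    """Map the BIOS setting and the OS (BCD) setting to the resulting behavior."""
    if not bios_enabled:
        # With HPET disabled in the BIOS, the OS setting has no effect.
        return HpetState.UNAVAILABLE
    return HpetState.FORCED if os_forced else HpetState.IN_POOL


if __name__ == "__main__":
    for bios in (True, False):
        for forced in (False, True):
            label = (f"BIOS {'enabled' if bios else 'disabled'}, "
                     f"OS {'forced' if forced else 'default'}")
            print(f"{label}: {hpet_state(bios, forced).value}")
```

The BIOS switch acts as a gate and the OS setting only matters once that gate is open, which is why two of the four cases collapse into the same outcome.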

Again, for extreme overclockers relying on benchmark results to be equal on Windows 8/10, HPET has to be forced to ensure benchmark consistency. Without it, the results are invalid.

The Effect of a High Performance Timer

With a high performance timer, the system is able to accurately determine clock speeds for monitoring software, or to process video streams so that everything hits in the right order for audio and video. It can also come into play when gaming, especially when overclocking, ensuring data and frames are delivered in an orderly fashion, and has been shown to reduce stutter on overclocked systems. And perhaps most importantly, it avoids any timing issues caused by clock drift.

However, there are issues fundamental to the HPET design that mean it is not always the best timer to use. HPET is a continually upward counting timer, which relies on register recall or comparison metrics rather than a ‘set at x and count-down’ type of timer. The speed of the timer can, at times, cause a comparison to fail: by the time the compared value has been written to the register, the counter may already have passed it. Using HPET for very granular timing requires a lot of register reads/writes, adding to the system load and power draw, and in a workload that requires explicit linearity, can actually introduce additional latency. Usually one of the biggest benefits to disabling HPET on some systems is the reduction in DPC Latency, for example.
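The failed-comparison case can be illustrated with a toy model in Python. It assumes a free-running up-counter that wraps at 32 bits and a comparator that fires only on an exact match; the 32-bit width, the function names, and the tick values are assumptions for illustration, not details taken from the text or measured on hardware.

```python
COUNTER_BITS = 32  # assumed counter width for this sketch
COUNTER_MASK = (1 << COUNTER_BITS) - 1


def ticks_until_match(counter_at_read: int, delta_ticks: int,
                      write_latency_ticks: int) -> int:
    """Ticks from the moment the comparator is armed until counter == target.

    Software reads the counter, computes target = read value + delta, and the
    write of that target lands write_latency_ticks later.
    """
    target = (counter_at_read + delta_ticks) & COUNTER_MASK
    counter_at_arm = (counter_at_read + write_latency_ticks) & COUNTER_MASK
    # Modular distance: if the counter has already passed the target,
    # the next match only happens after the counter wraps around.
    return (target - counter_at_arm) & COUNTER_MASK


def comparison_missed(delta_ticks: int, write_latency_ticks: int) -> bool:
    """True when the counter has already passed the target at arming time."""
    return write_latency_ticks > delta_ticks


if __name__ == "__main__":
    for latency in (3, 15):
        wait = ticks_until_match(1000, 10, latency)
        print(f"write latency {latency} ticks: missed={comparison_missed(10, latency)}, "
              f"ticks until match={wait}")
```

A count-down timer avoids this race because it starts from the written value, whereas an up-counter with a comparator depends on the write arriving before the counter reaches the target.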

242 Comments

Dr. Swag - Wednesday, April 25, 2018 - link

It looks like you guys are re running all the benchmarks in the original review then, right? I see that the results look to be changed and less CPUs are on the lists (since you haven't rerun them all, I assume)

Ryan Smith - Wednesday, April 25, 2018 - link

Correct. We knew at the start of the Ryzen 2 review what benchmarks and what products we wanted to include; this timer issue hasn't changed that.

freaqiedude - Wednesday, April 25, 2018 - link

So would it be fair to say that Intel’s HPET implementation is potentially buggy? It seems to cause a disproportionate performance hit.

chrcoluk - Wednesday, April 25, 2018 - link

no its just that TSC + lapic is the way to go, There is a reason thats the default in windows and other modern OS's.

DanNeely - Wednesday, April 25, 2018 - link

It suggests that their implementation could probably be made less impactful than it currently is; but that high precision timers have had a performance impact has been known for a long time. In its guise as the multi-media timer in Windows over a decade ago the official MS docs recommended using lesser timing sources in lieu of it whenever possible because it would affect your system.

What's new to the general tech site reading public is that there are apparently significant differences in the size of the impact between different CPU families.

Tamz_msc - Wednesday, April 25, 2018 - link

But is there a 'real' performance impact or does default HPET behavior simply introduce a fudge factor that alters how the tools report the numbers? Is there a way to verify the results externally?

eddman - Wednesday, April 25, 2018 - link

I'm wondering about the same thing. Do the games' frame rate really change (they get smoother or vice versa) or the timer just messes up the numbers reported by benchmarks and the games' actual frame rate that reaches the display doesn't change?

rahvin - Wednesday, April 25, 2018 - link

I'd be more concerned that Intel has found a way to make the timer report false benchmarks that are higher than they actually are. I'd also be curious if the graphics card/cpu combination is potentially at fault.

Nvidia has been shown to cheat in the past on benchmarks by turning off features in certain games that are used for benchmarking to boost the score. Is Intel doing something similar?

Rob_T - Wednesday, April 25, 2018 - link

I came across a similar issue on VMware, where a virtual machine's clock would drift out of time synchronisation. The cause of this was that VMWare uses a software based clock and when a host was under heavy CPU load the VM's clock wouldn't get enough CPU resource to keep it updated accurately. This resulted in time running 'slowly' on the virtual machine.

Under normal circumstances this kind of time drift issue would be handled by the Network Time Protocol daemon slewing the time back to accuracy; the problem is the maximum slew rate possible is limited to 500 parts-per-million (PPM). Under peak loads we were observing the VM's clock running slow by anywhere up to a third. This far outweighed the ability of the NTP slew mechanism to bring the time back to accuracy.

If this issue has the same root cause, the software based timers would start to run slowly when the system is under heavy load. Therefore more work could be completed in a 'second' due to it's increased duration. It would be interesting to know if the highest discrepancy were also the ones with the largest CPU loads? Looking at the gaming graphs on page 4 the biggest differences are at 1080p which suggests this might be the case.

oleyska - Wednesday, April 25, 2018 - link

You also had Idle issue with windows servers where time would drift., the high load I've never heard of in our company we have thousands upon thousands of vm's using vmware though.