The NVIDIA GeForce GTX 980 Ti Review

by Ryan Smith on May 31, 2015 6:00 PM EST

NVIDIA's Computex Announcements & The Test

Alongside the launch of the GTX 980 Ti, NVIDIA is also taking advantage of Computex to make a couple of other major technology announcements. Given the scope of these announcements we’re covering these in separate articles, but we’ll quickly go over the high points here as they pertain to the GTX 980 Ti.

G-Sync Variable Overdrive & Windowed Mode G-Sync

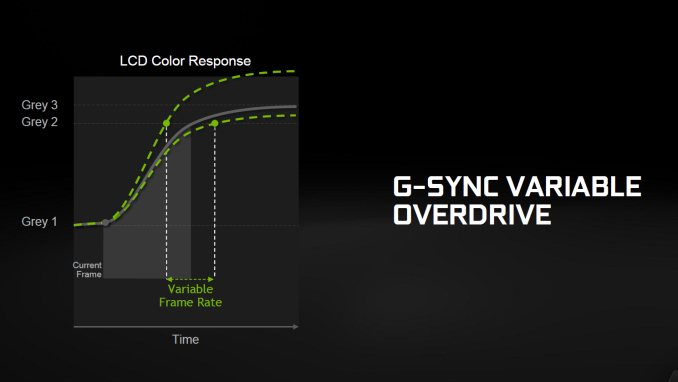

NVIDIA is announcing a slew of G-Sync products/technologies today, the most important of which is Mobile G-Sync for laptops. However, as part of that launch, NVIDIA is also finally confirming that all G-Sync products, including existing desktop G-Sync products, support G-Sync variable overdrive. As the name implies, this is the ability to vary the amount of overdrive applied to a pixel based on a best-effort guess of when the next frame will arrive. This allows NVIDIA to continue to use pixel overdrive on G-Sync monitors to improve pixel response times and reduce ghosting, at a slight cost to color accuracy in motion whenever the frame time prediction is off.

Variable overdrive has been in G-Sync since the start, but until now NVIDIA has never confirmed its existence, presumably keeping quiet about it for trade secret purposes. However, now that displays supporting AMD’s FreeSync implementation of DisplayPort Adaptive-Sync are out, NVIDIA is further clarifying how G-Sync works.
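To make the idea concrete, here is a minimal sketch of how variable overdrive could work in principle. NVIDIA has not disclosed its actual algorithm, so everything here is an assumption for illustration: the moving-average predictor, the 30Hz to 144Hz range, the gain values, and the names `OverdrivePredictor` and `overdriven_level` are all hypothetical.

```python
from collections import deque


class OverdrivePredictor:
    """Toy model: guess the next frame interval, then pick an overdrive gain.

    Hypothetical illustration only; not NVIDIA's implementation.
    """

    def __init__(self, history=4, min_ms=1000 / 144, max_ms=1000 / 30):
        self.intervals = deque(maxlen=history)  # recent frame intervals (ms)
        self.min_ms = min_ms  # shortest interval the panel supports (assumed)
        self.max_ms = max_ms  # longest interval the panel supports (assumed)

    def record(self, interval_ms):
        """Store the measured time between the last two frames."""
        self.intervals.append(interval_ms)

    def predicted_interval_ms(self):
        """Best-effort guess: average of the recent intervals."""
        if not self.intervals:
            return self.min_ms
        return sum(self.intervals) / len(self.intervals)

    def gain(self, strong=1.5, weak=1.1):
        """Assume short intervals need stronger overdrive than long ones."""
        t = min(max(self.predicted_interval_ms(), self.min_ms), self.max_ms)
        frac = (t - self.min_ms) / (self.max_ms - self.min_ms)
        return strong + frac * (weak - strong)


def overdriven_level(current, target, gain):
    """Push the pixel past its target by `gain`, clamped to the 8-bit range."""
    driven = current + gain * (target - current)
    return min(max(driven, 0.0), 255.0)
```

If the predicted interval turns out to be wrong, the chosen gain overshoots or undershoots the target, which is the source of the color accuracy cost described above.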

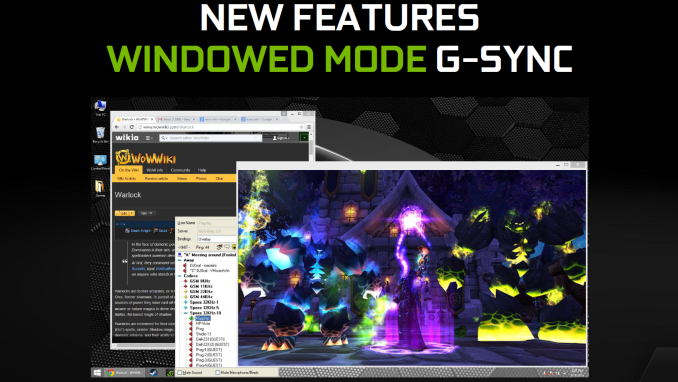

Meanwhile, freshly rolled out in NVIDIA’s latest drivers is support for Windowed Mode G-Sync. Before now, running a game in windowed mode could cause stutters and tearing, because in windowed mode the image being output is composited by the Desktop Window Manager (DWM) in Windows. Even though a game might be outputting 200 frames per second, DWM will only refresh the image with its own timings. The off-screen buffer for applications can be updated many times before DWM updates the actual image on the display.

NVIDIA will now change this using their display driver, and when Windowed G-Sync is enabled, whichever window is the current active window will be the one that determines the refresh rate. That means if you have a game open, G-Sync can be leveraged to reduce screen tearing and stuttering, but if you then click on your email application, the refresh rate will switch back to whatever rate that application is using. Since this is not always going to be a perfect solution - without a fixed refresh rate, it's impossible to make every application perfectly line up with every other application - Windowed G-Sync can be enabled or disabled on a per-application basis, or just globally turned on or off.
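The behavior described above can be modeled roughly as follows. This is a sketch under stated assumptions rather than a description of NVIDIA's driver: the class `WindowedGSyncSettings`, the function `refresh_interval_ms`, the 60Hz fallback, and the 30Hz to 144Hz variable refresh range are all hypothetical, as is the choice to let a per-application setting override the global one.

```python
from dataclasses import dataclass, field


@dataclass
class WindowedGSyncSettings:
    """Hypothetical settings model: a global switch plus per-app overrides."""

    globally_enabled: bool = True
    per_app: dict = field(default_factory=dict)  # app name -> bool override

    def enabled_for(self, app):
        # Assumption: a per-application setting wins over the global one.
        return self.per_app.get(app, self.globally_enabled)


def refresh_interval_ms(settings, active_app, frame_time_ms,
                        fixed_hz=60.0, min_hz=30.0, max_hz=144.0):
    """Return the display refresh interval driven by the active window."""
    if not settings.enabled_for(active_app):
        # G-Sync off for this app: fall back to a fixed refresh rate.
        return 1000.0 / fixed_hz
    # G-Sync on: follow the active app's frame time within the panel's range.
    return min(max(frame_time_ms, 1000.0 / max_hz), 1000.0 / min_hz)
```

In this model, clicking from a game to an email client with G-Sync disabled simply changes `active_app`, and the interval snaps back to the fixed rate.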

GameWorks VR & Multi-Res Shading

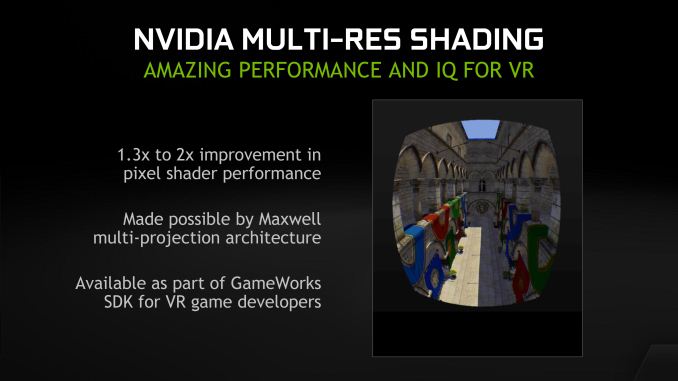

Also being announced at Computex is a combination of new functionality and an overall rebranding for NVIDIA’s suite of VR technologies. First introduced alongside the GeForce GTX 980 in September as VR Direct, these technologies are now being brought in under the GameWorks umbrella of developer tools. The collection will now be called GameWorks VR, adding to the already significant collection of GameWorks tools and libraries.

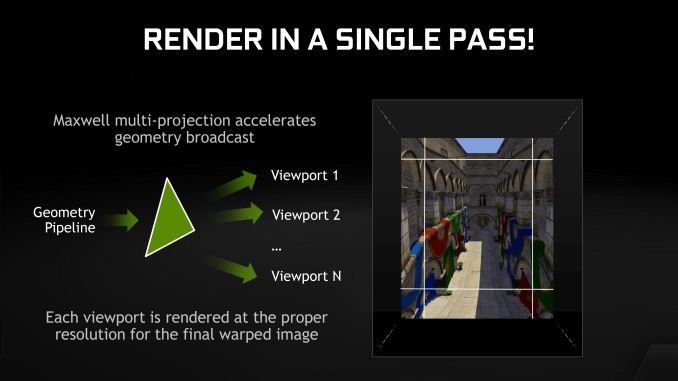

On the feature front, the newly minted GameWorks VR will be getting a new feature dubbed Multi-Resolution Shading, or Multi-Res Shading for short. With multi-res shading, NVIDIA is looking to leverage the Maxwell 2 architecture’s Multi-Projection Acceleration in order to increase rendering efficiency and ultimately the overall performance of their GPUs in VR situations.

By reducing the resolution of video frames at the edges, where there is already the most optical distortion/compression and the human eye is less sensitive, NVIDIA says that multi-res shading can deliver a 1.3x to 2x increase in pixel shader performance without noticeably compromising image quality. Like many of the other technologies in the GameWorks VR toolkit, this is an implementation of a suggested VR practice. In NVIDIA’s case, however, the company believes it has a significant technological advantage in implementing it thanks to multi-projection acceleration. With MPA bringing down the rendering cost of this feature, NVIDIA’s hardware can better exploit the performance benefits of this rendering approach, essentially making it an even more efficient method of VR rendering.
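A quick back-of-the-envelope calculation shows where savings of this magnitude can come from. The sketch below assumes a simple layout that is our own illustration, not NVIDIA's published configuration: the frame is split into a 3x3 grid, the center region spans a fraction `center` of each axis at full resolution, and the surrounding regions are scaled by `edge_scale` along each axis on which they lie outside the center. The function names are hypothetical.

```python
def shaded_fraction(center, edge_scale):
    """Fraction of the original pixels still shaded under the assumed layout.

    center:     fraction of each axis covered by the full-resolution center
    edge_scale: per-axis resolution scale applied outside the center
    """
    if not (0.0 <= center <= 1.0 and 0.0 < edge_scale <= 1.0):
        raise ValueError("center must be in [0, 1] and edge_scale in (0, 1]")
    # Along one axis: the center keeps its pixels, the remainder is scaled.
    per_axis = center + (1.0 - center) * edge_scale
    # The same reduction applies independently to width and height.
    return per_axis ** 2


def pixel_speedup(center, edge_scale):
    """Ideal pixel shading speedup if cost scales with shaded pixel count."""
    return 1.0 / shaded_fraction(center, edge_scale)
```

For example, `pixel_speedup(0.6, 0.5)` shades 64% of the original pixels, for an ideal speedup of about 1.56x, which falls inside the 1.3x to 2x range NVIDIA quotes. Real gains depend on how much of the frame time is actually pixel shader bound.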

Getting Behind DirectX Feature Level 12_1

Finally, though not an outright announcement per se, from a marketing perspective we should expect to see NVIDIA further promote their current technological lead in rendering features. The Maxwell 2 architecture is currently the only architecture to support DirectX feature level 12_1, and with DirectX 12 games due a bit later this year, NVIDIA sees that as an advantage to press.

For promotional purposes NVIDIA has put together a chart listing the different tiers of feature levels for DirectX 12, and to their credit this is a simple but elegant layout of the current feature level situation. The bulk of the advanced DirectX 12 features we saw Microsoft present at the GTX 980 launch are part of feature level 12_1, while the rest, along with other functionality not fully exploited under DirectX 11, are part of the 12_0 feature level. The one exception to this is volume tiled resources, which is not part of either feature level and instead is part of a separate feature list for tiled resources that can be implemented at either feature level.

The Test

The press drivers for the launch of the GTX 980 Ti are release 352.90, which, other than formally adding support for the new card, are identical to the standing 352.86 drivers.

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 295X2, AMD Radeon R9 290X, AMD Radeon HD 7970, NVIDIA GeForce GTX Titan X, NVIDIA GeForce GTX 980 Ti, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 780 Ti, NVIDIA GeForce GTX 780, NVIDIA GeForce GTX 680, NVIDIA GeForce GTX 580 |
| Video Drivers: | NVIDIA Release 352.90 Beta, AMD Catalyst Cat 15.5 Beta |
| OS: | Windows 8.1 Pro |

290 Comments

Yojimbo - Monday, June 1, 2015

After some research, I posted a long and detailed reply to such a statement before, I believe it was in these forums. Basically, the offending NVIDIA rebrands fell into three categories: One category was that NVIDIA introduced a new architecture and DIDN'T change the name from the previous one, then later, 6 months if I remember, when issuing more cards on the new architecture, decided to change to a new brand (a higher numbered series). That happened once, that I found. The second category is where NVIDIA let a previously released GPU cascade down to a lower segment of a newly updated lineup. So the high end of one generation becomes the middle of the next generation, and in the process gets a new name to be uniform with the entire lineup. The third category is where NVIDIA is targeting low-end OEM segments where they are probably fulfilling specific requests from the OEMs. This is probably the GF108 which you say has "plagued the low end for too long now", as if you are the arbiter of OEM's product offerings and what sort of GPU their customers need or want. I'm sorry I don't want to go looking for specific citations of all the various rebrands, because I did it before in a previous message in another thread.

The rumors of the upcoming retail 300 series rebrand (and the already released OEM 300 series rebrand) is a completely different beast. It is an across-the-board rebrand where the newly-named cards seem to take up the exact same segment as the "old" cards they replace. Of course in the competitive landscape, that place has naturally shifted downward over the last two years, as NVIDIA has introduced a new line up of cards. But all AMD seems to be doing is introducing 1 or 2 new cards in the ultra-enthusiast segment, still based on their ~2 year old architecture, and renaming the entire line up. If they had done that 6 months after the lineup was originally released, it would look like indecision. But being that it's being done almost 2 years since the original cards came out, it looks like a desperate attempt at staying relevant.

Oxford Guy - Monday, June 1, 2015

Nice spin. The bottom line is that both companies are guilty of deceptive naming practices, and that includes OEM nonsense.

Yojimbo - Monday, June 1, 2015

In for a penny, in for a pound, eh? I too could say "nice spin" in turn. But I prefer to weigh facts.

Oxford Guy - Monday, June 1, 2015

"I too could say 'nice spin' in turn. But I prefer to weigh facts."

Like the fact that both companies are guilty of deceptive naming practices or the fact that your post was a lot of spin?

FlushedBubblyJock - Wednesday, June 10, 2015

AMD is guilty of going on a massive PR offensive, bending the weak minds of its fanboys and swearing they would never rebrand as it is an unethical business practice. Then they launched their now completely laughable Gamer's Manifesto, which is one big fat lie.

They broke every rule they ever laid out for their corpo pig PR halo, and as we can see, their fanboys to this very day cannot face reality.

AMD is dirtier than black box radiation

chizow - Monday, June 1, 2015

Nice spin, no one is saying either company has clean hands here, but the level to which AMD has rebranded GCN is certainly unprecedented.

Oxford Guy - Monday, June 1, 2015

Hear that sound? It's Orwell applauding.

Klimax - Tuesday, June 2, 2015

I see only rhetoric. But facts and counter points are missing. Fail...

Yojimbo - Tuesday, June 2, 2015

Because I already posted them in another thread and I believe they were in reply to the same guy.

Yojimbo - Tuesday, June 2, 2015

Orwell said that severity doesn't matter, everything is binary?