The NVIDIA GeForce GTX 980 Ti Review

by Ryan Smith on May 31, 2015 6:00 PM EST

NVIDIA's Computex Announcements & The Test

Alongside the launch of the GTX 980 Ti, NVIDIA is also taking advantage of Computex to make a couple of other major technology announcements. Given the scope of these announcements we’re covering these in separate articles, but we’ll quickly go over the high points here as they pertain to the GTX 980 Ti.

G-Sync Variable Overdrive & Windowed Mode G-Sync

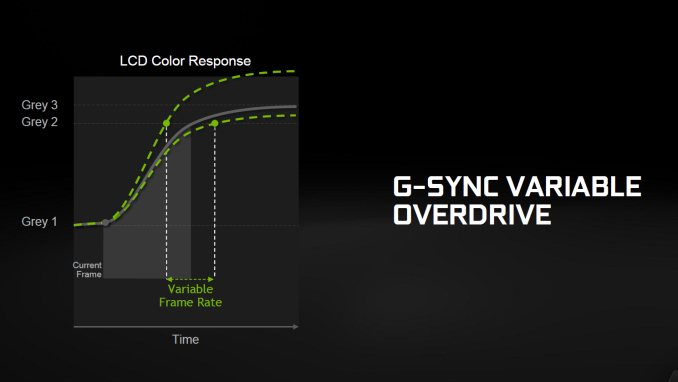

NVIDIA is announcing a slew of G-Sync products and technologies today, the most important of which is Mobile G-Sync for laptops. As part of that launch, however, NVIDIA is also finally confirming that all G-Sync products, including existing desktop G-Sync products, support G-Sync variable overdrive. As the name implies, this is the ability to vary the amount of overdrive applied to a pixel based on a best-effort guess of when the next frame will arrive. This allows NVIDIA to continue to use pixel overdrive on G-Sync monitors to improve pixel response times and reduce ghosting, at a slight cost to color accuracy in motion when the frame time prediction is wrong.

Variable overdrive has been in G-Sync since the start; however, until now NVIDIA has never confirmed its existence, presumably keeping quiet about it for trade secret purposes. Now that displays supporting AMD’s FreeSync implementation of DisplayPort Adaptive-Sync are out, NVIDIA is further clarifying how G-Sync works.
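To make the trade-off concrete, here is a toy model of the idea, not NVIDIA's actual algorithm. It assumes a pixel settles toward its drive level along a first-order exponential with a hypothetical time constant `tau_ms`; the function names and all numeric values are illustrative assumptions. The drive level is chosen so the pixel lands on its target exactly when the predicted frame time elapses, and a wrong prediction shows up as an overshoot or undershoot.

```python
import math


def overdrive_level(current, target, predicted_frame_ms, tau_ms=5.0):
    """Drive level that lets a first-order pixel reach `target`
    after `predicted_frame_ms` (toy model; tau_ms is an assumed constant)."""
    settle = 1.0 - math.exp(-predicted_frame_ms / tau_ms)
    return current + (target - current) / settle


def reached_level(current, drive, actual_frame_ms, tau_ms=5.0):
    """Level the pixel actually reaches if the frame lasts `actual_frame_ms`."""
    settle = 1.0 - math.exp(-actual_frame_ms / tau_ms)
    return current + (drive - current) * settle


# Pixel transitions from 0.2 to 0.8; the next frame is predicted in 10 ms.
drive = overdrive_level(0.2, 0.8, predicted_frame_ms=10.0)

on_time = reached_level(0.2, drive, actual_frame_ms=10.0)  # prediction correct
late = reached_level(0.2, drive, actual_frame_ms=16.0)     # frame arrived late
early = reached_level(0.2, drive, actual_frame_ms=6.0)     # frame arrived early

print(f"drive={drive:.3f} on_time={on_time:.3f} late={late:.3f} early={early:.3f}")
```

In this model a frame that arrives later than predicted overshoots the target and one that arrives earlier undershoots it, which is the kind of in-motion color error described above. A real panel would also clamp the drive level to its valid range, which this sketch leaves out.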

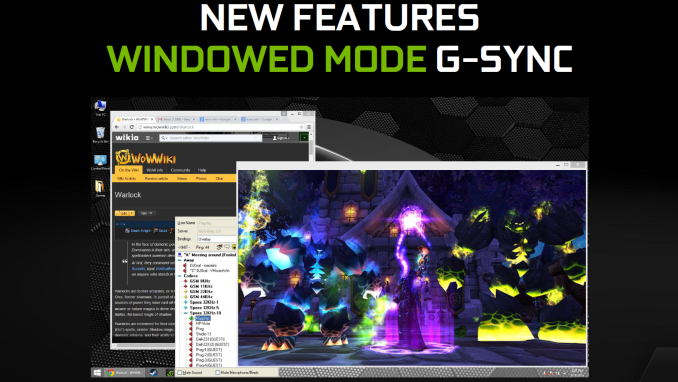

Meanwhile, freshly rolled out in NVIDIA’s latest drivers is support for Windowed Mode G-Sync. Before now, running a game in windowed mode could cause stutters and tearing, because in windowed mode the image being output is composited by the Desktop Window Manager (DWM) in Windows. Even though a game might be outputting 200 frames per second, DWM will only refresh the image with its own timings. The off-screen buffer for an application can be updated many times before DWM updates the actual image on the display.

NVIDIA will now change this using their display driver, and when Windowed G-Sync is enabled, whichever window is the current active window will be the one that determines the refresh rate. That means if you have a game open, G-Sync can be leveraged to reduce screen tearing and stuttering, but if you then click on your email application, the refresh rate will switch back to whatever rate that application is using. Since this is not always going to be a perfect solution - without a fixed refresh rate, it's impossible to make every application perfectly line up with every other application - Windowed G-Sync can be enabled or disabled on a per-application basis, or just globally turned on or off.
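The compositing problem described above can be illustrated with a small simulation. This is a simplified sketch that assumes the compositor samples the most recently completed frame at a fixed rate; the function name and the 60 Hz composition rate are assumptions chosen for illustration, not details of how DWM is implemented.

```python
def composited_frames(render_fps: int, compose_hz: int, seconds: int = 1) -> list[int]:
    """Index of the latest completed game frame at each compositor tick.

    Integer arithmetic is used so tick boundaries are exact.
    """
    shown = []
    for tick in range(1, compose_hz * seconds + 1):
        latest_frame = (tick * render_fps) // compose_hz
        shown.append(latest_frame)
    return shown


# A game rendering 200 frames per second under an assumed 60 Hz compositor.
shown = composited_frames(render_fps=200, compose_hz=60)
displayed = len(set(shown))   # distinct game frames that reach the screen
skipped = 200 - displayed     # frames overwritten before they were composited

print(f"displayed={displayed} skipped={skipped}")
```

Under these assumptions most rendered frames never reach the display, and the ones that do are picked at uneven intervals relative to the game's own frame pacing. That mismatch is what letting the active window drive the refresh rate is meant to avoid.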

GameWorks VR & Multi-Res Shading

Also being announced at Computex is a combination of new functionality and an overall rebranding for NVIDIA’s suite of VR technologies. First introduced alongside the GeForce GTX 980 in September as VR Direct, NVIDIA will be bringing their VR technologies in under the GameWorks umbrella of developer tools. The collection of technologies will now be called GameWorks VR, adding to the already significant collection of GameWorks tools and libraries.

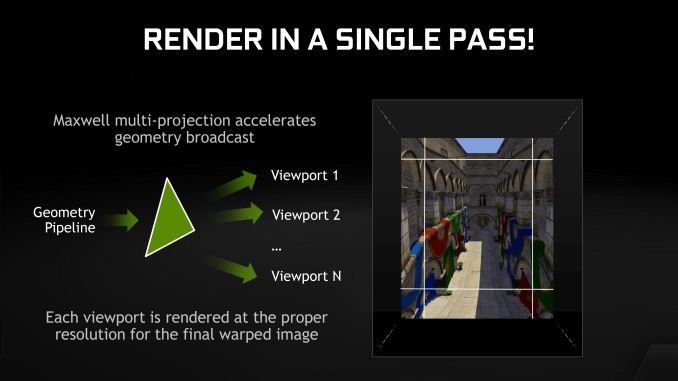

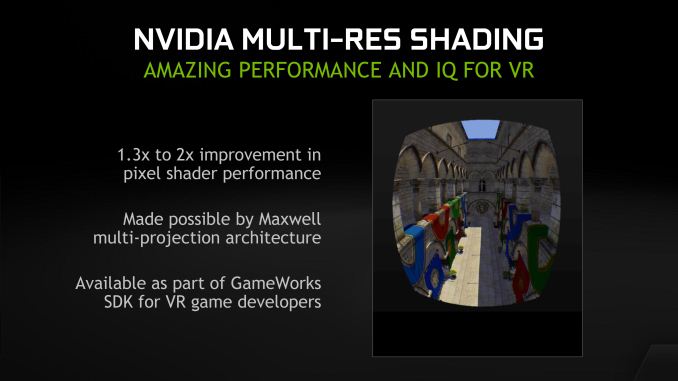

On the feature front, the newly minted GameWorks VR will be getting a new feature dubbed Multi-Resolution Shading, or Multi-Res Shading for short. With multi-res shading, NVIDIA is looking to leverage the Maxwell 2 architecture’s Multi-Projection Acceleration in order to increase rendering efficiency and ultimately the overall performance of their GPUs in VR situations.

By reducing the resolution of video frames at the edges, where there is already the most optical distortion/compression and the human eye is less sensitive, NVIDIA says that multi-res shading can deliver a 1.3x to 2x increase in pixel shader performance without noticeably compromising image quality. Like many of the other technologies in the GameWorks VR toolkit, this is an implementation of a suggested VR practice; in NVIDIA’s case, however, the company believes it has a significant technological advantage in implementing it thanks to multi-projection acceleration. With MPA bringing down the rendering cost of this feature, NVIDIA’s hardware can better exploit the performance benefits of this rendering approach, essentially making it an even more efficient method of VR rendering.
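As a rough way to see where a figure in that range could come from, the sketch below estimates the pixel shading work saved when only the center of the frame is rendered at full resolution. It assumes each axis is split into a full-resolution center span and reduced-resolution edge spans; the split `center_frac` and the scale `edge_scale` are hypothetical values chosen for illustration, not NVIDIA's published settings.

```python
def shaded_fraction(center_frac: float, edge_scale: float) -> float:
    """Fraction of the original pixels still shaded.

    center_frac: share of each axis kept at full resolution (assumed).
    edge_scale:  resolution scale applied to the remaining edge spans (assumed).
    """
    per_axis = center_frac + (1.0 - center_frac) * edge_scale
    return per_axis ** 2


def shading_speedup(center_frac: float, edge_scale: float) -> float:
    """Ideal pixel shading speedup, ignoring every other rendering cost."""
    return 1.0 / shaded_fraction(center_frac, edge_scale)


# Center 60% of each axis at full resolution, edges at half resolution.
fraction = shaded_fraction(0.6, 0.5)
speedup = shading_speedup(0.6, 0.5)

print(f"pixels shaded: {fraction:.0%}, ideal speedup: {speedup:.2f}x")
```

This counts shaded pixels only, so it is an upper bound on the benefit; geometry work and the cost of rendering to multiple regions are not modeled.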

Getting Behind DirectX Feature Level 12_1

Finally, though not an outright announcement per se, from a marketing perspective we should expect to see NVIDIA further promote their current technological lead in rendering features. The Maxwell 2 architecture is currently the only architecture to support DirectX feature level 12_1, and with DirectX 12 games due a bit later this year, NVIDIA sees that as an advantage to press.

For promotional purposes NVIDIA has put together a chart listing the different tiers of feature levels for DirectX 12, and to their credit this is a simple but elegant layout of the current feature level situation. The bulk of the advanced DirectX 12 features we saw Microsoft present at the GTX 980 launch are part of feature level 12_1, while the rest, along with other functionality not fully exploited under DirectX 11, are part of the 12_0 feature level. The one exception to this is volume tiled resources, which is not part of either feature level and instead is part of a separate feature list for tiled resources that can be implemented at either feature level.

The Test

The press drivers for the launch of the GTX 980 Ti are release 352.90, which apart from formally adding support for the new card are identical to the standing 352.86 drivers.

| Component | Configuration |
|---|---|
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 295X2, AMD Radeon R9 290X, AMD Radeon HD 7970, NVIDIA GeForce GTX Titan X, NVIDIA GeForce GTX 980 Ti, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 780 Ti, NVIDIA GeForce GTX 780, NVIDIA GeForce GTX 680, NVIDIA GeForce GTX 580 |
| Video Drivers: | NVIDIA Release 352.90 Beta, AMD Catalyst Cat 15.5 Beta |
| OS: | Windows 8.1 Pro |

290 Comments


Klimax - Tuesday, June 2, 2015 - link

Just small bug in your article:

Page "GRID Autosport" has one paragraph from previous page.

"Switching out to another strategy game, even given Attila’s significant GPU requirements at higher settings, GTX 980 Ti still doesn’t falter. It trails GTX Titan X by just 2% at all settings."

As for theoretical pixel test with anomalous 15% drop from Titan X, there is ready explanation:

Under specific conditions there won't be enough power to push those two Raster engines with cut down blocks. (also only three paths instead of four)

Ryan Smith - Wednesday, June 3, 2015 - link

Fixed. Thanks for pointing that out.

bdiddytampa - Tuesday, June 2, 2015 - link

Really great and thorough review as usual :-) Thanks Ryan!

Hrobertgar - Tuesday, June 2, 2015 - link

Today, Alienware is offering 15" laptops with an option for an R9-390x. Their spec sheet isn't updated, nor could I find updated specs for anything other than R9-370 on AMD's own website. Are you going to review some of these R9-300 series cards anytime soon?

Hrobertgar - Tuesday, June 2, 2015 - link

When I went to checkout (didn't actually buy - just checking schedule) it indicated 6-8 day shipping with the R9-390X.

3DJF - Tuesday, June 2, 2015 - link

Ummm....$599 for R9 295X2?......where exactly? every search i have done for that card over the last 4 months up to today shows a LOWEST price of $619.

Ryan Smith - Wednesday, June 3, 2015 - link

It is currently $599 after rebate over at Newegg.

Casecutter - Tuesday, June 2, 2015 - link

From most results the 980Ti offers 20% more @1440p than a 980 (GM204), and given the 980Ti costs like 18-19% more than the original MSRP of the 980 ($550), it's really not any big thing.

Given GM200 has a 38% larger die and 38% more SU's over a GM204, and you get a 20% increase? It's worse when a full TitanX is considered, which has 50% more SU's, and the TitanX gets perhaps 4% more in FpS over the 980Ti. This points to the fact that Maxwell doesn't scale. Looking at power, the 980Ti needs approx. 28% more power, which is not the worst but is starting to indicate there are losses as Nvidia scaled it up.

chizow - Tuesday, June 2, 2015 - link

Well, I guess its a good thing 980Ti isn't just 20% faster than the 980 then lol.

CiccioB - Thursday, June 4, 2015 - link

This is obviously a comment by a frustrated AMD fan.

Maxwell scales perfectly as you didn't consider the frequency it runs.

GM200 is 50% more than a GM204 in all resources. But those GPUs run at about 0.86x the GM204 frequency (1250 vs 1075). If you can do simple math, you'll see that for any 980 result, if you multiply it by 1.5 and then by 0.86 (or directly by 1.3, which means 30% more) you'll find almost exactly the numbers the 980Ti bench shows.

Now take the new $500 price of the 980, do the same and... yes, it is $650 for the 980Ti.

Oh, the die size... let's see... 398mm^2 of GM204 * 1.5 = 597mm^2, which compares almost exactly with the calculated 601mm^2 of GM200.

Pretty simple. It shows everything scales perfectly in nvidia house. Since custom cards are coming, we'll see GM200 going to 50% more than GM204 at the same frequency. Yet these cards will consume a bit more, as expected.

You cannot say the same for AMD architecture though, as with smaller chips GCN is somewhat on par or even better with respect to nvidia for perf/mm^2, but as soon as real crunching power is requested GCN becomes extremely inefficient under the point of both perf/Watt or perf/mm^2.

If you tried to plant a doubt about the quality of this GM200 or Maxwell architecture in general, sorry, you chose the wrong architecture/chip/method. You simply failed.