Exploring DirectX 12: 3DMark API Overhead Feature Test

by Ryan Smith & Ian Cutress on March 27, 2015 8:00 AM EST

Posted in: GPUs, Radeon, Futuremark, GeForce, 3DMark, DirectX 12

Other Notes

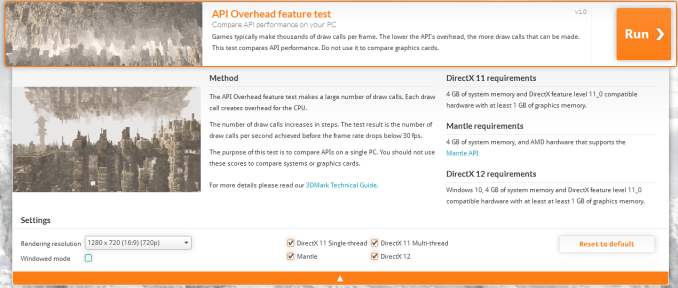

Before jumping into our results, let’s quickly talk about testing.

For our test we are using the latest version of the Windows 10 Technical Preview (build 10041) and the latest drivers from AMD, Intel, and NVIDIA. In fact, for testing DirectX 12 these latest packages are the minimum versions that the test supports. 3DMark does of course also run on Windows Vista and later, but on Windows Vista/7/8 only the DirectX 11 and Mantle tests are available, since those are the only APIs those operating systems offer.

From a test reliability standpoint, the API Overhead Feature Test (or as we’ll call it from now on, AOFT) is generally reliable under DirectX 12 and Mantle, but we have found it to be somewhat unreliable under DirectX 11. DirectX 11 scores have varied widely at times, and we’ve seen one configuration flip between 1.4 million draw calls per second and 1.9 million draw calls per second for reasons we could not determine.

Our best guess right now is that the variability comes from the much greater overhead of DirectX 11, and with it all of the work that the API, video drivers, and OS are doing in the background. The DirectX 11 results are still good enough for what the AOFT sets out to do, which is to showcase just how much faster DX12 and Mantle are, but they have a much higher degree of variability than our standard tests and should be treated accordingly.
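To put the DirectX 11 swing in perspective, here is a minimal sketch that turns the two quoted figures (1.4 million and 1.9 million draw calls per second) into a relative spread. The function name is our own, and the choice of baseline (lower run versus midpoint) is an assumption about how one might want to express the variation.

```python
def relative_spread(low, high):
    """Return the gap between two runs as a fraction of the lower run and of the midpoint."""
    gap = high - low
    midpoint = (low + high) / 2
    return gap / low, gap / midpoint

# The two DX11 results quoted in the text, in draw calls per second.
low, high = 1.4e6, 1.9e6
vs_low, vs_mid = relative_spread(low, high)
print(f"{vs_low:.1%} above the lower run, {vs_mid:.1%} of the midpoint")
# prints "35.7% above the lower run, 30.3% of the midpoint"
```

A swing of roughly a third between runs on the same configuration is why the DX11 numbers are best read as ballpark figures.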

Meanwhile Futuremark for their part is looking to make it clear that this is first and foremost a test to showcase API differences, and is not a hardware test designed to showcase how different components perform:

> The purpose of the test is to compare API performance on a single system. It should not be used to compare component performance across different systems. Specifically, this test should not be used to compare graphics cards, since the benefit of reducing API overhead is greatest in situations where the CPU is the limiting factor.

We have of course gone and benchmarked a number of configurations to showcase how they benefit from DirectX 12 and/or Mantle, but as per Futuremark’s guidelines we are not looking to directly compare video cards, especially since we’re often hitting the throughput limits of the command processor, something a real-world task would not suffer from.
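One way to see why cross-card comparisons are misleading here is a toy bottleneck model: the sustained draw call rate is capped by whichever stage is slower, the CPU/API submission path or the GPU's command processor. The sketch below is only an illustration under that assumption. Every rate in it is a hypothetical placeholder, not a measured result, and the API and card names are made up.

```python
def achieved_draw_rate(cpu_submit_rate, gpu_command_rate):
    """Draw calls per second the whole pipeline can sustain: the slower stage wins."""
    return min(cpu_submit_rate, gpu_command_rate)

# All rates below are hypothetical placeholders, not measured results.
cpu_rate = {"high-overhead API": 2e6, "low-overhead API": 20e6}
gpu_rate = {"card A": 15e6, "card B": 10e6}

for api, cpu in cpu_rate.items():
    for card, gpu in gpu_rate.items():
        rate = achieved_draw_rate(cpu, gpu)
        limiter = "CPU/API" if cpu < gpu else "GPU command processor"
        print(f"{api:18s} {card}: {rate / 1e6:4.1f}M draws/s, limited by {limiter}")
```

In this model both cards score identically under the high-overhead API, and under the low-overhead API the score reflects command processor throughput rather than rendering performance, which is why the result says little about how the cards compare in games.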

The Test

Moving on, we also want to quickly point out the clearly beta state of the current WDDM 2.0 drivers. In particular, the DX11 results with NVIDIA’s 349.90 driver are notably lower than the results with their WDDM 1.3 driver and show much greater variability. Meanwhile AMD’s drivers have stability issues, with our dGPU testbed locking up a couple of different times. So these drivers are clearly not at production status.

DirectX 12 Support Status

| GPU Architecture | Current Status | Supported At Launch |
|---|---|---|
| AMD GCN 1.2 (285) | Working | Yes |
| AMD GCN 1.1 (290/260 Series) | Working | Yes |
| AMD GCN 1.0 (7000/200 Series) | Working | Yes |
| NVIDIA Maxwell 2 (900 Series) | Working | Yes |
| NVIDIA Maxwell 1 (750 Series) | Working | Yes |
| NVIDIA Kepler (600/700 Series) | Working | Yes |
| NVIDIA Fermi (400/500 Series) | Not Active | Yes |
| Intel Gen 7.5 (Haswell) | Working | Yes |
| Intel Gen 8 (Broadwell) | Working | Yes |

On that note, the OS and drivers are all still in development, so performance results are subject to change as Windows 10 and the WDDM 2.0 drivers get closer to finalization.

One bit of good news is that DirectX 12 support on AMD GCN 1.0 cards is up and running here, as opposed to the issues we ran into last month with Star Swarm. So other than NVIDIA’s Fermi cards, which aren’t turned on in beta drivers, we have the ability to test all of the major x86-paired GPU architectures that support DirectX 12.

For our actual testing, we’ve split the work into dGPU and iGPU runs. Given the vast performance difference between the two, and the fact that the CPU and GPU are bound together in the latter, this helps to better control for relative performance.

On the dGPU side we are largely reusing our Star Swarm test configuration, meaning we’re testing the full range of working DX12-capable GPU architectures across a range of CPU configurations.

DirectX 12 Preview dGPU Testing CPU Configurations (i7-4960X)

| Configuration | Emulating |
|---|---|
| 6C/12T @ 4.2GHz | Overclocked Core i7 |
| 4C/4T @ 3.8GHz | Core i5-4670K |
| 2C/4T @ 3.8GHz | Core i3-4370 |
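The configuration labels above follow a cores/threads @ clock pattern. For readers who want to tabulate their own results against these configurations, the following is a small sketch of how the labels could be parsed into structured data. The `CpuConfig` class and `parse_config` function are hypothetical names introduced here for illustration, and the regular expression assumes labels are written exactly as in the table.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class CpuConfig:
    cores: int
    threads: int
    clock_ghz: float

    @property
    def hyper_threading(self) -> bool:
        # More hardware threads than cores implies Hyper-Threading is enabled.
        return self.threads > self.cores

# Matches labels such as "6C/12T @ 4.2GHz".
_PATTERN = re.compile(r"(\d+)C/(\d+)T @ ([\d.]+)GHz")

def parse_config(label: str) -> CpuConfig:
    """Parse a 'NC/MT @ X.YGHz' label into a CpuConfig, or raise ValueError."""
    match = _PATTERN.fullmatch(label.strip())
    if match is None:
        raise ValueError(f"unrecognized configuration label: {label!r}")
    cores, threads, clock = match.groups()
    return CpuConfig(int(cores), int(threads), float(clock))

configs = [parse_config(s) for s in ("6C/12T @ 4.2GHz", "4C/4T @ 3.8GHz", "2C/4T @ 3.8GHz")]
for cfg in configs:
    print(cfg, "HT on" if cfg.hyper_threading else "HT off")
```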

Meanwhile on the iGPU side we have a range of Haswell and Kaveri processors from Intel and AMD respectively.

| Component | dGPU Testbed |
|---|---|
| CPU | Intel Core i7-4960X @ 4.2GHz |
| Motherboard | ASRock Fatal1ty X79 Professional |
| Power Supply | Corsair AX1200i |
| Hard Disk | Samsung SSD 840 EVO (750GB) |
| Memory | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case | NZXT Phantom 630 Windowed Edition |
| Monitor | Asus PQ321 |
| Video Cards | AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon HD 7970, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 750 Ti, NVIDIA GeForce GTX 680 |
| Video Drivers | NVIDIA Release 349.90 Beta, AMD Catalyst 15.200.1012.2 Beta |
| OS | Windows 10 Technical Preview (Build 10041) |

| Component | iGPU Testbeds |
|---|---|
| CPU | AMD A10-7850K, AMD A10-7700K, AMD A8-7600, AMD A6-7400L, Intel Core i7-4790K, Intel Core i5-4690, Intel Core i3-4360, Intel Core i3-4130T, Pentium G3258 |
| Motherboard | GIGABYTE F2A88X-UP4 for AMD; ASUS Maximus VII Impact for Intel LGA-1150; Zotac ZBOX EI750 Plus for Intel BGA |
| Power Supply | Rosewill Silent Night 500W Platinum |
| Hard Disk | OCZ Vertex 3 256GB OS SSD |
| Memory | G.Skill 2x4GB DDR3-2133 9-11-10 for AMD; G.Skill 2x4GB DDR3-1866 9-10-9 at 1600 for Intel |
| Video Cards | AMD APU Integrated, Intel CPU Integrated |
| Video Drivers | AMD Catalyst 15.200.1012.2 Beta, Intel Driver Version 10.18.15.4124 |
| OS | Windows 10 Technical Preview (Build 10041) |

113 Comments


Ryan Smith - Sunday, March 29, 2015

DX12 brings two benefits in this context:

1) Much, much less CPU overhead in submitting draw calls

2) Better scaling out with core count

Even though we can't take advantage of #2, we take advantage of #1. DX11ST means you have 1 relatively inefficient thread going, whereas DX12 means you have 2 (or 4 depending on HT) highly efficient threads going.
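The second benefit can be pictured structurally: under a DX12-style model each worker thread records draw commands into its own command list, and the lists are then handed over at a single submission point. The sketch below imitates only that structure in plain Python. It does not call any Direct3D API, all function names are hypothetical, and because of Python's global interpreter lock it demonstrates the shape of the work split rather than any real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def record_command_list(object_ids):
    """Record one draw command per object into a private list (no shared state)."""
    return [("draw", obj_id) for obj_id in object_ids]

def split_evenly(items, parts):
    """Split items into `parts` contiguous chunks of near-equal size."""
    size, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks

def build_frame(object_ids, worker_threads):
    """Record command lists on worker threads, then submit them in order."""
    chunks = split_evenly(list(object_ids), worker_threads)
    with ThreadPoolExecutor(max_workers=worker_threads) as pool:
        # pool.map returns results in input order, so submission order is stable.
        command_lists = list(pool.map(record_command_list, chunks))
    # Single submission point: concatenate the per-thread lists.
    return [cmd for command_list in command_lists for cmd in command_list]

frame_single = build_frame(range(1000), worker_threads=1)
frame_multi = build_frame(range(1000), worker_threads=4)
print(len(frame_single), len(frame_multi), frame_single == frame_multi)
```

Recording into private lists means the workers never contend on shared state, which is the property that lets draw call submission scale with core count.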

LoccOtHaN - Saturday, March 28, 2015

Hmm, Where are FX 8350 + and FX x4 x6 or Phenom x4 & x6 Tests?

Lot of people have those CPU's, and i mean LOT of People ;-)

flabber - Saturday, March 28, 2015

It's too bad that AMD is at the end of the road. They were putting out some good technology. Or at least, pushing for technology to improve.

Michael Bay - Sunday, March 29, 2015

Intel will never let them die.

deruberhanyok - Saturday, March 28, 2015

Does this mean we could see games developed to similar levels of graphical fidelity as current ones, but performance significantly higher?

In which case, current graphics hardware could, in theory, run a game in a 4k resolution at much higher framerates today, all other things being equal? Or run at a lower resolution at much higher sustained framerates (making a 120hz display suddenly a useful thing to have)?

Or, put another way: does the reduced CPU overhead, which allows for significantly more draw calls, mean that developers will only see a benefit with more detail/objects on the screen, or could someone, for instance, take a current game with a D3D11 renderer, add a D3D12 renderer to it, and get huge performance increases? I don't think we've seen that with Mantle, so I'm assuming it isn't the case?

Michael Bay - Sunday, March 29, 2015

You probably won't get 4K out of middle to low-end cards of today, as it is also a memory size and bandwidth issue, but framerates could improve I think.

Gigaplex - Monday, March 30, 2015

4k performance is generally ROP limited, not draw call limited. This won't help a whole lot.

Uplink10 - Saturday, March 28, 2015

Too bad publishers won't issue developers to "remaster" older video games with DX12. Only new games will benefit from this.

lukeiscool10 - Saturday, March 28, 2015

Why do AMD and Nvidia fanboys continue to bitch at each other. Take a moment to realise we are going to both be getting great looking games but one thing holds us back: consoles. So take your hate towards them as they are holding pc back

jabber - Monday, March 30, 2015

Maybe because we are entering into a new age when cards are not worth measuring on FPS alone in most cases and that's going to take a lot of fun out of the fanboy wars.

To be honest unless you are running multi monitor/ultra high res just save up $200 and choose the card that looks best in your case.