GeForce GTX 970: Correcting The Specs & Exploring Memory Allocation

by Ryan Smith on January 26, 2015 1:00 PM EST

Segmented Memory Allocation in Software

So far we’ve talked about the hardware. Having finally explained the hardware basis of segmented memory, we can begin to understand the role software plays and how it allocates memory between the two segments.

From a low-level perspective, video memory management under Windows is handled jointly by the operating system and the video drivers. Strictly speaking, Windows controls video memory management – this being one of the big changes of Windows Vista and the Windows Display Driver Model – while the video drivers get a significant amount of input by hinting at how things should be laid out.

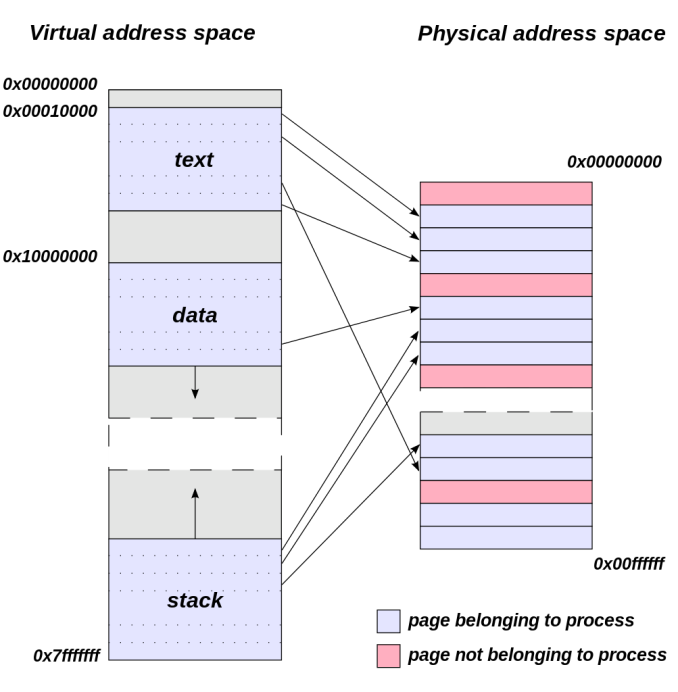

Meanwhile, from an application’s perspective all video memory and its address space is virtual. This means that applications are writing to their own private space, blissfully unaware of what else is in video memory and where it may be, or for that matter where in memory (or even which memory) they are writing. As a result of this memory virtualization, it falls to the OS and video drivers to decide where in physical VRAM to place each memory request, and for the GTX 970 in particular, whether to put a request in the 3.5GB segment, the 512MB segment, or, in the worst-case scenario, system memory over PCIe.

Virtual Address Space (Image Courtesy Dysprosia)

Without going quite so far as to rehash the entire theory of memory management and caching, the goal of memory management in the case of the GTX 970 is to allocate resources over the entire 4GB of VRAM such that high-priority items end up in the fast segment and low-priority items end up in the slow segment. To do this, NVIDIA directs the first 3.5GB of memory allocations to the faster 3.5GB segment, and only for allocations beyond 3.5GB does it turn to the 512MB segment, as there’s no benefit to using the slower segment so long as there’s available space in the faster segment.
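To make the fill order concrete, here is a minimal Python sketch of a fast-segment-first policy. It is not NVIDIA's driver logic: the `Segment` class, the `place` function, and the simple first-fit rule are all assumptions made for illustration. Only the 3.5GB and 512MB segment sizes and the PCIe fallback come from the description above.

```python
from dataclasses import dataclass

MB = 1024 * 1024


@dataclass
class Segment:
    """One physical pool of memory (hypothetical model, not a driver structure)."""
    name: str
    capacity: int
    used: int = 0

    def free(self) -> int:
        return self.capacity - self.used


def make_gtx970_segments():
    # Segment sizes as described in the article; list order encodes preference.
    return [
        Segment("fast_3.5GB", 3584 * MB),
        Segment("slow_512MB", 512 * MB),
    ]


def place(segments, size):
    """First-fit placement: try the fast segment, then the slow one.

    Returns the name of the segment used, or "system_memory" when neither
    segment has room (the worst case: spilling over PCIe).
    """
    for seg in segments:
        if seg.free() >= size:
            seg.used += size
            return seg.name
    return "system_memory"


if __name__ == "__main__":
    segments = make_gtx970_segments()
    for i in range(17):
        print(i, place(segments, 256 * MB))
```

Feeding this sketch seventeen 256MB requests puts the first fourteen in the fast segment, the next two in the slow segment, and the last one in system memory.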

The complex part of this process occurs once both memory segments are in use, at which point NVIDIA’s heuristics come into play to determine which resources to allocate to which segment. How NVIDIA does this is very much a “secret sauce” scenario for the company, but from a high level, identifying the type of resource and when it was last used are good ways to figure out where to send it. Frame buffers, render targets, UAVs, and other intermediate buffers, for example, are the last things you want to send to the slow segment; meanwhile textures, resources not in active use (e.g. cached), and resources belonging to inactive applications would be great candidates to send off to the slower segment. From the way NVIDIA describes the process, we suspect there are even per-application optimizations in use, though NVIDIA can clearly handle generic cases as well.
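Since NVIDIA's actual heuristics are not public, the following Python sketch only illustrates the general idea of ranking resources by type and recency. Everything in it is hypothetical: the `SENSITIVITY` weights, the `priority` formula, and the `assign_segments` helper are invented for this example and should not be read as the driver's real behavior.

```python
from dataclasses import dataclass

# Hypothetical weights: higher means "keep this in the fast segment".
SENSITIVITY = {
    "frame_buffer": 3,
    "render_target": 3,
    "uav": 3,
    "texture": 1,
    "cached": 0,
}


@dataclass
class Resource:
    name: str
    kind: str
    size_mb: int
    last_used_frame: int
    app_active: bool = True


def priority(res, current_frame):
    """Score a resource by type and recency (an assumed formula)."""
    if not res.app_active:
        return -1.0  # resources of inactive applications rank last
    age = current_frame - res.last_used_frame
    return SENSITIVITY.get(res.kind, 1) + 1.0 / (1 + age)


def assign_segments(resources, current_frame, fast_mb=3584, slow_mb=512):
    """Place the highest-priority resources in the fast segment first."""
    placement = {}
    fast_free, slow_free = fast_mb, slow_mb
    ranked = sorted(resources, key=lambda r: priority(r, current_frame), reverse=True)
    for res in ranked:
        if res.size_mb <= fast_free:
            placement[res.name] = "fast"
            fast_free -= res.size_mb
        elif res.size_mb <= slow_free:
            placement[res.name] = "slow"
            slow_free -= res.size_mb
        else:
            placement[res.name] = "system"
    return placement
```

With a 512MB render target and 3072MB of recently used textures filling the fast segment, a stale 256MB texture and a 256MB buffer from an inactive application would both land in the slow segment under this scoring.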

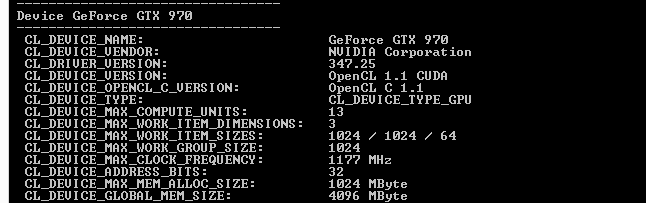

From an API perspective this applies to both graphics and compute, though it’s a safe bet that graphics is the more easily and accurately handled of the two thanks to the rigid nature of graphics rendering. Direct3D, OpenGL, CUDA, and OpenCL all see and have access to the full 4GB of memory available on the GTX 970, and from the perspective of the applications using these APIs the 4GB of memory is identical, the segments being abstracted. This is also why applications attempting to benchmark the memory in a piecemeal fashion will not find slow memory areas until the end of their run: their earlier allocations land in the fast segment and only spill over to the slow segment once the fast segment is full.
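The benchmark observation can be illustrated with a small Python model. It assumes a strictly sequential fill in which chunks occupy the fast segment first and nothing else is resident in VRAM; the `chunk_map` function and its labels are hypothetical, and no real GPU is queried.

```python
FAST_MB = 3584  # 3.5GB segment
SLOW_MB = 512   # 512MB segment


def chunk_map(chunk_mb, total_mb=FAST_MB + SLOW_MB):
    """Label each sequentially allocated chunk with the segment it would occupy.

    Assumes chunks are placed back to back, fast segment first, which is a
    simplification of the fill order described above.
    """
    labels = []
    for start in range(0, total_mb, chunk_mb):
        end = start + chunk_mb
        if end <= FAST_MB:
            labels.append("fast")
        elif start >= FAST_MB:
            labels.append("slow")
        else:
            labels.append("mixed")  # chunk straddles the segment boundary
    return labels


if __name__ == "__main__":
    labels = chunk_map(128)
    first_slow = labels.index("slow")
    print(f"{len(labels)} chunks, first slow chunk at index {first_slow}")
    # prints "32 chunks, first slow chunk at index 28"
```

Under these assumptions, a tool that walks memory in 128MB chunks sees 28 fast chunks before the final 4 slow ones, which matches the "slow only at the end" pattern described above.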

GeForce GTX 970 Addressable VRAM

| API | Memory |
| --- | --- |
| Direct3D | 4GB |
| OpenGL | 4GB |
| CUDA | 4GB |
| OpenCL | 4GB |

The one remaining unknown element here (and something NVIDIA is still investigating) is why some users have been seeing total VRAM allocation top out at 3.5GB on a GTX 970, but go to 4GB on a GTX 980. Again from a high-level perspective all of this segmentation is abstracted, so games should not be aware of what’s going on under the hood.

Overall, then, the role of software in memory allocation is relatively straightforward since it’s layered on top of the segments. Applications have access to the full 4GB, and because application memory space is virtualized, the existence and usage of the memory segments is abstracted from the application, with the physical memory allocation handled by the OS and driver. Only after 3.5GB is requested – enough to fill the entire 3.5GB segment – does the 512MB segment get used, at which point NVIDIA attempts to place the least sensitive/important data in the slower segment.

398 Comments

Orange213 - Monday, February 2, 2015 - link

I've got EVGA GTX970 SSC and I am totally fine with the card's performance. However, this is an issue that nVidia should take more seriously, but obviously they don't care too much about it which is sad.... Anyway I am not switching to AMD, but what they are doing is pretty low.

Ballist1x - Tuesday, February 3, 2015 - link

So no follow up from anandtech in probably the biggest scandal to hit the GPU market in the last couple of years? No opinion or viewpoint on how Nvidia have handled the fiasco or continued testing to understand the memory implications?

I am losing my faith in anand...Why not take a stance? Or are they afraid to bite the hand that feeds.

Ballist1x - Tuesday, February 3, 2015 - link

Is anandtech now deleting my posts for asking where the follow up is and what Anand's stance is on this misinformation?

Ranger101 - Wednesday, February 4, 2015 - link

Following their astonishingly quick (and completely erroneous) absolution of Nvidia Anandtech is no doubt keen to sweep this under the carpet as soon as possible, especially as legal action is now pending...Lol Anandtech.

paulemannsen - Wednesday, February 4, 2015 - link

its a 3.5 gb card. end of story. and this WILL become a problem in the future for some. while i expected that kind of behaviour from firms like nvidia or amd i didnt know anandtech would chime in so blatantly and take their readers for fools. im deeply disappointed.

matcarfer - Thursday, February 5, 2015 - link

I will post an analogy that everyone should understand and we all are going to arrive at the same conclusion:

You buy a car that's supposed to reach 224km/h, no matter how many people sit in it (the car has only 4 seats). Problem is, this car can peak at 224km/h only if 3 adults and one child are in it. If it has 4 adults, it will have problems, behaving like a slower car.

See the problem? Nvidia should've told us, they didn't. If we had known this (and review sites), people planning to max out RAM wouldn't buy this. It's false marketing, plain and simple, and everyone who got a 970 should have the opportunity to take the card back and/or receive compensation for this.

wolfman3k5 - Thursday, February 5, 2015 - link

You guys really should check this out: https://sqz.io/gtx970

Azix - Friday, February 6, 2015 - link

I am wondering how this will play out later on when driver support for the card lags behind. Really disappointed in both nvidia and AMD. If AMD's cards weren't so power hungry I'd never have gotten cheated by nvidia, because I'd rather have AMD's more solid feature offerings than nvidia's "hey, we got a different way of doing ambient occlusion!" software features.

Stas - Saturday, February 7, 2015 - link

That was the first thing to come to mind. 8800GTX doesn't work well for me with drivers that were released in the past 9 months. I feel like 970 will be a dead card by the time next models have settled.

I'm quite skeptical of the yields being the reason behind this. There is a very small chance that one section of L2 or ROP is bad but the rest is good. I bet nVidia purposely castrated 970 to create favorable market for the 980.

LazloPanaflex - Sunday, February 8, 2015 - link

Damn dude, still rocking an 8800GTX? Um, anything you buy now is gonna be a massive upgrade, LOL