GeForce GTX 970: Correcting The Specs & Exploring Memory Allocation

by Ryan Smith on January 26, 2015 1:00 PM EST

Segmented Memory Allocation in Software

So far we’ve talked about the hardware, and having finally explained the hardware basis of segmented memory we can begin to understand the role software plays, and how software allocates memory among the two segments.

From a low-level perspective, video memory management under Windows is handled jointly by the operating system and the video drivers. Strictly speaking Windows controls video memory management (this being one of the big changes of Windows Vista and the Windows Display Driver Model), while the video drivers get a significant amount of input in hinting at how things should be laid out.

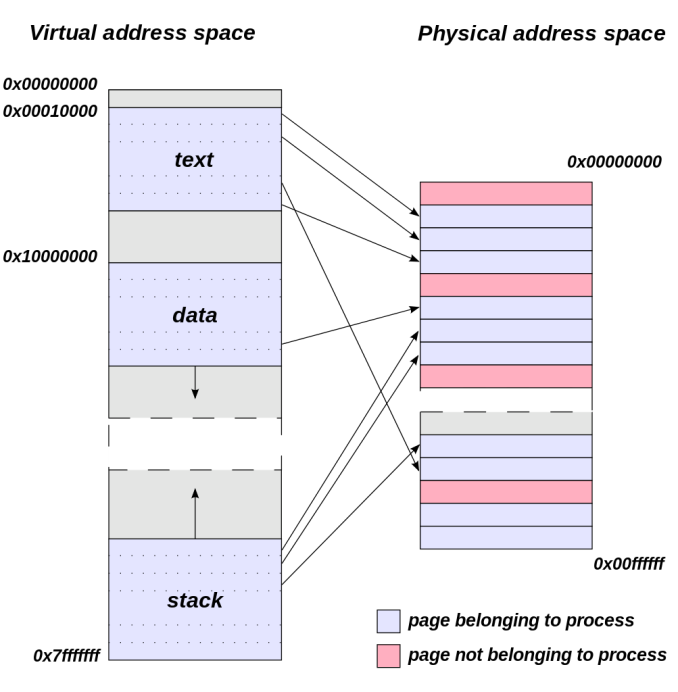

Meanwhile from an application’s perspective all video memory and its address space is virtual. This means that applications are writing to their own private space, blissfully unaware of what else is in video memory and where it may be, or for that matter where in memory (or even which memory) they are writing. As a result of this memory virtualization it falls to the OS and video drivers to decide where in physical VRAM to allocate memory requests, and for the GTX 970 in particular, whether to put a request in the 3.5GB segment, the 512MB segment, or in the worst case scenario system memory over PCIe.

Virtual Address Space (Image Courtesy Dysprosia)

Without going so far as to rehash the entire theory of memory management and caching, the goal of memory management in the case of the GTX 970 is to allocate resources over the entire 4GB of VRAM such that high-priority items end up in the fast segment and low-priority items end up in the slow segment. To do this NVIDIA directs the first 3.5GB of memory allocations to the faster 3.5GB segment, and only for memory allocations beyond 3.5GB does it turn to the 512MB segment, as there’s no benefit to using the slower segment so long as there’s available space in the faster segment.
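To make the fill order concrete, here is a minimal sketch of a fast-first placement policy. It is a toy model of the behavior described above, not NVIDIA's driver logic: the function name `place_allocations`, the MB-granularity bookkeeping, and the rule that a request is never split across segments are all our own assumptions for illustration.

```python
FAST_MB = 3584  # the 3.5GB segment
SLOW_MB = 512   # the 512MB segment


def place_allocations(requests_mb, fast_mb=FAST_MB, slow_mb=SLOW_MB):
    """Place each request in the fast segment while it fits, then in the
    slow segment, and finally in system memory (over PCIe).

    Returns a list of (size_mb, location) tuples in request order.
    Hypothetical helper: requests are never split across segments here.
    """
    free = {"fast": fast_mb, "slow": slow_mb}
    placements = []
    for size in requests_mb:
        for segment in ("fast", "slow"):
            if size <= free[segment]:
                free[segment] -= size
                placements.append((size, segment))
                break
        else:
            # Neither VRAM segment has room: worst case, spill to system memory.
            placements.append((size, "system"))
    return placements


if __name__ == "__main__":
    for size, where in place_allocations([2048, 1536, 256, 256, 128]):
        print(f"{size:>5} MB -> {where}")
```

In this model the slow segment is untouched until the fast segment can no longer satisfy a request, which matches the "no benefit to using the slower segment" reasoning above.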

The complex part of this process occurs once both memory segments are in use, at which point NVIDIA’s heuristics come into play to try to best determine which resources to allocate to which segments. How NVIDIA does this is very much a “secret sauce” scenario for the company, but from a high level identifying the type of resource and when it was last used are good ways to figure out where to send a resource. Frame buffers, render targets, UAVs, and other intermediate buffers for example are the last thing you want to send to the slow segment; meanwhile textures, resources not in active use (e.g. cached), and resources belonging to inactive applications would be great candidates to send off to the slower segment. The way NVIDIA describes the process we suspect there are even per-application optimizations in use, though NVIDIA can clearly handle generic cases as well.
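Since NVIDIA's actual heuristics are not public, the following is only a sketch of what "resource type plus last use" could look like as a ranking rule. Everything in it is hypothetical: the `Resource` fields, the resource kind names, the score weights, and the greedy packing into 512MB are our own illustrative choices, not anything NVIDIA has described.

```python
from dataclasses import dataclass

# Kinds the article singles out as the last thing to send to the slow segment.
LATENCY_SENSITIVE = {"frame_buffer", "render_target", "uav", "intermediate_buffer"}


@dataclass
class Resource:
    name: str
    kind: str               # hypothetical label, e.g. "texture" or "render_target"
    size_mb: int
    frames_since_use: int   # how long ago the resource was last touched
    app_active: bool = True


def slow_segment_score(res):
    """Higher score = better candidate for the slow segment (made-up weights)."""
    if res.kind in LATENCY_SENSITIVE:
        return -1  # never voluntarily demoted in this sketch
    score = res.frames_since_use
    if not res.app_active:
        score += 1000  # resources of inactive applications go first
    return score


def choose_for_slow_segment(resources, slow_capacity_mb=512):
    """Greedily pick the best-scoring resources that fit in the slow segment."""
    candidates = sorted(
        (r for r in resources if slow_segment_score(r) >= 0),
        key=slow_segment_score,
        reverse=True,
    )
    chosen, used = [], 0
    for res in candidates:
        if used + res.size_mb <= slow_capacity_mb:
            chosen.append(res.name)
            used += res.size_mb
    return chosen
```

A real driver would presumably weigh far more signals than this, but the sketch captures the two inputs named above: what a resource is, and how recently it was used.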

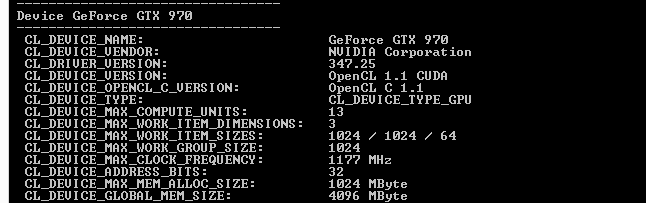

From an API perspective this applies to both graphics and compute, though it’s a safe bet that graphics is the more easily and accurately handled of the two thanks to the rigid nature of graphics rendering. Direct3D, OpenGL, CUDA, and OpenCL all see and have access to the full 4GB of memory available on the GTX 970, and from the perspective of the applications using these APIs the 4GB of memory is identical, the segments being abstracted. This is also why applications attempting to benchmark the memory in a piecemeal fashion will not find slow memory areas until the end of their run, as their earlier allocations will be in the fast segment and only finally spill over to the slow segment once the fast segment is full.

GeForce GTX 970 Addressable VRAM

| API | Memory |
| --- | --- |
| Direct3D | 4GB |
| OpenGL | 4GB |
| CUDA | 4GB |
| OpenCL | 4GB |
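The piecemeal benchmark behavior described earlier can be illustrated with a little arithmetic. The sketch below assumes a benchmark that allocates fixed-size chunks one after another on an otherwise empty card, and that the chunks land in the fast segment first; the 128MB chunk size and the function names are our own assumptions, and a chunk that straddles the boundary is simply counted as slow.

```python
def segment_of_chunk(index, chunk_mb=128, fast_mb=3584, total_mb=4096):
    """Segment that the index-th sequentially allocated chunk falls in,
    assuming allocations fill the fast segment before the slow one."""
    end = (index + 1) * chunk_mb
    if end > total_mb:
        return "system"  # past the 4GB of VRAM entirely
    return "fast" if end <= fast_mb else "slow"


def slow_chunks(chunk_mb=128, fast_mb=3584, total_mb=4096):
    """Indices of the chunks that end up in the slow segment."""
    count = total_mb // chunk_mb
    return [
        i for i in range(count)
        if segment_of_chunk(i, chunk_mb, fast_mb, total_mb) == "slow"
    ]


if __name__ == "__main__":
    print(slow_chunks())  # the last four of 32 chunks under these assumptions
```

With 128MB chunks, 28 of the 32 chunks fit in the 3.5GB segment, so only the final four would show reduced performance, and only at the very end of the run.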

The one remaining unknown element here (and something NVIDIA is still investigating) is why some users have been seeing total VRAM allocation top out at 3.5GB on a GTX 970, but go to 4GB on a GTX 980. Again from a high-level perspective all of this segmentation is abstracted, so games should not be aware of what’s going on under the hood.

Overall then the role of software in memory allocation is relatively straightforward since it’s layered on top of the segments. Applications have access to the full 4GB, and due to the fact that application memory space is virtualized the existence and usage of the memory segments is abstracted from the application, with the physical memory allocation handled by the OS and driver. Only after 3.5GB is requested – enough to fill the entire 3.5GB segment – does the 512MB segment get used, at which point NVIDIA attempts to place the least sensitive/important data in the slower segment.

398 Comments

piiman - Saturday, January 31, 2015 - link

" if anything this will only affect users who are maxing out VRAM and thats probably only a few of most owners who are probably on 1080p or 1440p without cranking modded textures/effects on games."

Oh well if that's all it hurts.....oh wait that would be me. I bought 2 970s to do just that but guess what?

But some how since you don't' think it effects you it's ok? VRAM use is going up and will go higher I didn't buy these cards to play last years games but the next gen games.

Nfarce - Saturday, January 31, 2015 - link

Thank you piiman. I failed to mention that while I'm happy TODAY, I would not be affected TOMORROW. And this is WAY beyond a PR issue as Dal claims. WAY BEYOND. It's a complete misrepresentation of the card's stats whether by accident or intentional. And it is extremely hard to believe that all the engineering, marketing, and management teams collectively completely MISSED the wrong specs officially released by Nvidia for the card. REAL hard.

So while I'm happy *currently* in my usage, there is a good chance I may have problems with games released this year and next year, something I did not anticipate as someone who skips at least one and sometimes two refreshes or generations of GPUs. That's not a contradiction in my beliefs as Dal also wrongly claimed.

Nfarce - Saturday, January 31, 2015 - link

Let me restate: while I likely would have bought the 970 over the 980 even with reduced stats, I would definitely have rethought the purchase decision for long term usage.

Dal Makhani - Tuesday, February 3, 2015 - link

You guys are overreacting, you will be fine in next gen games, especially if piiman has 2 of them. So what you have to reduce settings a bit so you dont hit the frame buffer limit, its not a big deal. While I am upset to hear that Nvidia flat out lied, we are all people and if marketing and engineering misrepresent something, its just business as usual because all companies face situations like this.

HOWEVER, I think Nvidia should somehow make this up with game codes or some sort of step up program where they pay part of the bill for users to upgrade to a 980 if they are unhappy with their purchase. Loyalty should be kept through a response, i am in no way saying they should just let this go. I would bet a fair bit of money to say your 970's will still last as long as you thought they would.

inolvidable - Friday, January 30, 2015 - link

I leave you here an interview with an engenieer from NVIDIA explaining everything: http://youtu.be/spZJrsssPA0

piiman - Saturday, January 31, 2015 - link

LOL But what would Hitler say?

peevee - Saturday, January 31, 2015 - link

Admit it, really used memory bus width is 224 bits, not 256 bits.

InsidiousTechnology - Saturday, January 31, 2015 - link

There are times when a Class Action law suit are prudent and this is one of them. All those people who mindlessly cry about law suits fail to realize they help prevent clearly deceptive practices...and with Nvidia this is not the first time.

FlushedBubblyJock - Saturday, January 31, 2015 - link

Can we sue AMD for worse at the same time ? http://www.anandtech.com/show/5176/amd-revises-bul...

aliciakr - Monday, February 2, 2015 - link

Verry nice