The Impact of Spare Area on SandForce, More Capacity At No Performance Loss?

by Anand Lal Shimpi on May 3, 2010 2:08 AM EST

No, it’s not the new Indilinx JetStream controller - that’ll be in the second half of the year at the earliest. And it’s definitely not Intel’s 3rd generation X25-M; we won’t see that until Q4. The SSD I posted a teaser of last week is a modified version of OCZ’s Agility 2.

The modification? Instead of around 28% of the drive’s NAND set aside as spare area, this version of the Agility 2 has 13%. You get more capacity to store data, at the expense of potentially lower performance. How much lower? That’s exactly what I’ve spent the past several days trying to find out.

The drive looks just like a standard Agility 2. OCZ often makes special runs of drives for testing with no official labels or markings; in fact, that's how my first SandForce drive arrived late last year. Internally the drive looks identical to the Agility 2 we reviewed not too long ago.

OCZ lists the firmware as 1.01 compared to the standard 1.0 firmware on the shipping Agility 2. The only difference I'm aware of is the amount of NAND set aside as spare area.

SandForce and Spare Area

When you write data to a SandForce drive the controller attempts to represent the data you’re writing with fewer bits. What’s stored isn’t your exact data, but a smaller representation of it plus a hash or index so that you can recover the original data. This results in potentially lower write amplification, but greater reliance on the controller and firmware.
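To make the link between "fewer bits" and write amplification concrete, here is a minimal sketch. SandForce has not disclosed how its data reduction works, so `zlib` is used purely as a stand-in, and the function name `write_amplification` is hypothetical. The sketch also ignores garbage collection and metadata overhead; it only counts the data-reduction step.

```python
import os
import zlib


def write_amplification(host_data: bytes) -> float:
    """Ratio of bytes that reach the flash to bytes the host asked to write."""
    # Assumption: zlib stands in for the controller's undisclosed data reduction.
    stored = zlib.compress(host_data)
    return len(stored) / len(host_data)


if __name__ == "__main__":
    repetitive = b"AnandTech " * 4096   # highly compressible payload
    random_like = os.urandom(40960)     # resembles compressed or encrypted data
    print(f"repetitive data: {write_amplification(repetitive):.3f}")
    print(f"random data:     {write_amplification(random_like):.3f}")
```

Compressible data yields a ratio well below 1, while data that already looks random gains nothing, which is why results on a drive like this depend on what you write to it.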

SandForce stores some amount of redundant information in order to deal with decreasing reliability of smaller geometry NAND. The redundant data and index/hash of the actual data being written are stored in the drive’s spare area.

While most consumer SSDs dedicate around 7% of their total capacity to spare area, SandForce’s drives have required ~28% until now. As I mentioned at the end of last year, however, SandForce would be bringing a more consumer-focused firmware to market after the SF-1200 with only 13% overprovisioning. That’s what’s loaded on the drive OCZ sent me late last week.

SandForce Overprovisioning Comparison

| Advertised Capacity | Total Flash | Formatted Capacity (28% OP) | Formatted Capacity (13% OP) |
| --- | --- | --- | --- |
| 50GB | 64GB | 46.6GB | 55.9GB |
| 100GB | 128GB | 93.1GB | 111.8GB |
| 200GB | 256GB | 186.3GB | 223.5GB |
| 400GB | 512GB | 372.5GB | 447.0GB |
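The overprovisioning percentages can be checked directly against the table. The sketch below assumes the Total Flash and Formatted Capacity columns are expressed in the same unit; the names `spare_fraction` and `capacity_gain` are made up for illustration.

```python
# (total flash, formatted capacity at ~28% OP, formatted capacity at ~13% OP)
# Figures are copied from the table; all are assumed to share one unit.
TABLE = [
    (64, 46.6, 55.9),
    (128, 93.1, 111.8),
    (256, 186.3, 223.5),
    (512, 372.5, 447.0),
]


def spare_fraction(total_flash: float, formatted: float) -> float:
    """Fraction of the NAND that is not exposed to the user."""
    return 1.0 - formatted / total_flash


def capacity_gain(formatted_old: float, formatted_new: float) -> float:
    """Relative increase in user-visible capacity."""
    return formatted_new / formatted_old - 1.0


if __name__ == "__main__":
    for total, big_op, small_op in TABLE:
        print(
            f"{total:>3}GB flash: "
            f"{spare_fraction(total, big_op):.1%} spare vs "
            f"{spare_fraction(total, small_op):.1%} spare, "
            f"{capacity_gain(big_op, small_op):.1%} more user capacity"
        )
```

Under that assumption every row works out to roughly 27% versus 13% of the flash held back, in line with the "around 28%" and 13% figures, and to about 20% more usable space on the new firmware.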

As always, if you want to know more about SandForce read this, and if you want to know more about how SSDs work read this.

When Does Spare Area Matter?

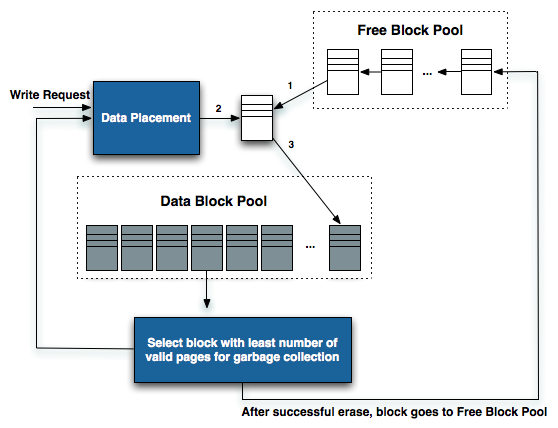

In addition to the SandForce-specific uses of spare area, all SSDs use it for three purposes: 1) read-modify-writes, 2) wear leveling and 3) bad block replacement.

If an SSD is running out of open pages and a block full of invalid data needs to be cleaned, its valid contents are copied to a new block allocated from the spare area and the two blocks swap positions. The old block is cleaned and tossed into the spare area pool, and the formerly spare block is now put into regular use.

Recreated from diagram originally produced by IBM's Zurich Research Lab
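The block-cleaning step described above can be sketched as a toy model. This is an illustration under simplifying assumptions, not how any particular controller is implemented: `Block`, `recycle`, `INVALID`, and the four-page block size are all hypothetical.

```python
from dataclasses import dataclass, field

PAGES_PER_BLOCK = 4   # hypothetical; real blocks hold far more pages
INVALID = object()    # marker for a page whose data is stale


@dataclass(eq=False)  # compare blocks by identity, not by contents
class Block:
    # Each slot holds page data, None for an erased page, or INVALID.
    pages: list = field(default_factory=lambda: [None] * PAGES_PER_BLOCK)
    erase_count: int = 0


def recycle(victim: Block, in_use: list, spare_pool: list) -> Block:
    """Copy the victim's valid pages into a spare block, then swap the two."""
    fresh = spare_pool.pop(0)                  # erased block from the spare area
    valid = [p for p in victim.pages if p is not None and p is not INVALID]
    fresh.pages[:len(valid)] = valid           # valid data moves to the new block
    in_use[in_use.index(victim)] = fresh       # formerly spare block enters regular use
    victim.pages = [None] * PAGES_PER_BLOCK    # erase the old block...
    victim.erase_count += 1
    spare_pool.append(victim)                  # ...and return it to the spare pool
    return fresh


if __name__ == "__main__":
    victim = Block(pages=["a", INVALID, "b", INVALID])
    in_use, spare_pool = [victim], [Block()]
    fresh = recycle(victim, in_use, spare_pool)
    print(fresh.pages)   # two valid pages followed by two open pages
```

The fewer spare blocks there are, the more often the model has to run this copy-and-erase cycle before it can accept new writes, which is the performance risk of shrinking the spare area.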

The spare area is also used for wear leveling. NAND blocks in the spare area are constantly being moved in and out of user space to make sure that all parts of the drive wear evenly.

And finally, if a block does wear out (either expectedly or unexpectedly), its replacement comes from the spare area.
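Wear leveling and bad block replacement can be sketched the same way. This is a deliberately simple policy (always hand out the least-erased spare block) chosen for illustration; real controllers use their own, undisclosed heuristics, and the names `Block`, `pick_least_worn`, and `replace_bad_block` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(eq=False)  # compare blocks by identity
class Block:
    name: str
    erase_count: int = 0


def pick_least_worn(spare_pool: list) -> Block:
    """Wear leveling: hand out the spare block with the fewest erases."""
    block = min(spare_pool, key=lambda b: b.erase_count)
    spare_pool.remove(block)
    return block


def replace_bad_block(bad: Block, in_use: list, spare_pool: list) -> Block:
    """Retire a worn-out block and promote a spare block in its place."""
    replacement = pick_least_worn(spare_pool)
    in_use[in_use.index(bad)] = replacement
    # The bad block is in neither list now: the spare area shrank by one block.
    return replacement


if __name__ == "__main__":
    spare_pool = [Block("s0", 9), Block("s1", 2), Block("s2", 5)]
    bad = Block("u0", 3000)
    in_use = [bad, Block("u1", 10)]
    print(replace_bad_block(bad, in_use, spare_pool).name)
```

Each retired block permanently reduces the spare pool in this model, so a drive that starts with less spare area has less headroom for failures over its life.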

30 Comments

StormyParis - Monday, May 3, 2010 - link

it seems SSD perf is the new dick size?

Belard - Tuesday, May 4, 2010 - link

No, it has nothing to do with "mine is bigger than yours"...

They are still somewhat expensive. But when you use one in a notebook or desktop, it makes your computer SO much faster. It's about SPEED.

Applications like Word or Photoshop are ready to go by the time your finger leaves the mouse button. Windows7 - fully configured (not just some clean / bare install) boots in about 10 seconds (7~14sec depending on the CPU/Mobo & software used)... compared to a 7200RPM drive in 20~55seconds.

And that is with today's X25-G2 drives using SATA 2.0. Imagine in about 2 years, $100 should get you 128GB that can READ upwards of 450+ MB/s.

Back in the OLD days, my old Amiga 1000 would boot the OS off a floppy in about 45 seconds, with the HD installed, closer to 10 seconds.

I'll admit that Win7's sleep mode works very well, with a wake up time on a HDD of about 3~5 seconds.

neoflux - Friday, June 11, 2010 - link

Wow, someone's an internet douche.

Maybe you need to look at the overall system benchmarks, like PCMark Vantage or the AnandTech Storage Bench, where your precious Intels are destroyed by the SF-based drives.

And even if you look at the random reads/writes, the Intels are again destroyed on the writes and the same speed for the reads. I'm of course looking at the aligned benchmarks (aligned read not in this article, but shown here: http://www.anandtech.com/bench/SSD/83 ), because I'm actually being objective and realistic about how SSDs are used rather than trying to justify my purchases/recommendations.

buggyfunbunny - Monday, May 3, 2010 - link

What I'd like to see added to the TestBench, for all drive types (not just SSD), is a Real World, highly (or fully) normalized relational database. Selects, inserts, updates, deletes. The main reason for SSDs will turn out to be such join intensive databases; massive file systems, awash in redundant data, will always out pace silicon storage. In Real World size, tens of gigabytes running transactions. The TPC suite would be nice, but AT may not want to spend the money to join. Any consistent test is fine, but should implement joins over flat-file structures.

Zan Lynx - Monday, May 3, 2010 - link

Big databases don't bother with SATA SSDs. They go straight to PCI-e direct-connected SSD, like Fusion-IO and others.

If you want fast, you have to get rid of the overhead associated with SATA. SATA protocol overhead is really a big waste of time.

FunBunny - Monday, May 3, 2010 - link

Of course they do. PCIe "drives" aren't drives, and are limited to the number of slots. This approach works OK if you're taking the Google way: one server one drive. That's not the point. One wants a centralized, controlled datastore. That's what BCNF databases are all about. (Massive flatfiles aren't databases, even if they're stored in a crippled engine like MySQL.) Such databases talk to some sort of storage array; an SSD one running BCNF data will be faster than some kiddie koders java talking to files (kiddie koders don't know that what they're building is just like their grandfathers' COBOL messes; but that's another episode).

In any case, the point is to subject all drives to join intensive datastores, to see which ones do best. PCIe will likely be faster in pure I/O (but not necessarily in retrieving the joined data) than SSD or HDD, but that's OK; some databases can work that way, most won't. Last I checked, the Fusion (which, if you've looked, now expressly say their parts AREN'T SSD) parts are substantially more expensive than the "consumer" parts that AT has been looking at. That said, storage vendors have been using commodity HDD (suitably QA'd) for years. In due time, the same will be true for SSD array vending.

Zan Lynx - Monday, May 3, 2010 - link

It is solid state. It stores data and looks like a drive to the OS. That makes it a SSD by my definition.

I wonder why Fusion-IO wants to claim their devices aren't SSDs? My guess is that they just don't want people thinking their devices are the same as SATA SSDs.

jimhsu - Monday, May 3, 2010 - link

Hey Anand,

I'm not doubting your Intel performance figures, but I wonder why your benchmarks only show about a 20% performance decrease, when threads like this (http://forums.anandtech.com/showthread.php?t=20699...) show that it is possibly quite a bit more? (20% is just on the edge of human perception, and I can tell you that a completely full X25-M does really feel much slower than that). Is the decrease in performance different for read vs. write, sequential vs. random? Are you using the drive in an OS context (where the drive is constantly being hit with small read/writes while the benchmark is running)?

Anand Lal Shimpi - Monday, May 3, 2010 - link

20% is a pretty decent sized hit. Also note that it's not really a matter of how full your drive is, but how you fill the drive. If you look back at the SSD Relapse article I did you'll see that SSDs like to be written to in a nice sequential manner. A lot of small random writes all over the drive and you run into performance problems much faster. This is one reason I've been very interested in looking at how resilient the SF drives are; they seem to be much better than Intel at this point.

Take care,

Anand

FunBunnyBuggyBuggy - Tuesday, May 4, 2010 - link

(first: two issues; the login refuses to retain so I've had to create a new each time I comment which is a pain, and I wanted to re-read the Relapse to refresh my memory but it gets flagged as an Attack Site on FF 3.0.X)

-- If you look back at the SSD Relapse article I did you'll see that SSDs like to be written to in a nice sequential manner.

I'm still not convinced that this is strictly true. Controllers are wont to maximize performance and wear-leveling. The assumption is that what is a sequential operation from the point of view of application/OS is so on the "disc"; which is strictly true for a HDD, and measurements bear this out. For SSD, the reality is murkier. As you've pointed out, spare area is not a sequestered group of NAND cells, but NAND cells with a Flag; thus spare blocks move around, presumably in erase block size. A sequential write may not only appear in non-contiguous blocks, but also in non-pure blocks, that is, blocks with data unrelated to the sequential write request from the application/OS. Whichever approach maximizes the controller writer's notion of the trade off between performance requirement and wear level requirement will determine the physical write.