The Intel Core i3-12300 Review: Quad-Core Alder Lake Shines

by Gavin Bonshor on March 3, 2022 8:30 AM EST

Intel Core i3-12300 Performance: DDR5 vs DDR4

Intel’s 12th generation processors, from the top of the stack (including the flagship Core i9-12900K) down to the more affordable entry-level offerings such as the Core i3-12300, allow users to build a new system with the latest technologies available. One of the main elements that makes Intel’s Alder Lake processors flexible for users building a new system is their support for both DDR5 and DDR4 memory. It’s no secret that DDR5 memory costs (far) more than its already established DDR4 counterparts. Part of this is an early adopter’s fee: having the latest and greatest technology comes at a price premium.

We have opted to test the difference in performance between DDR5 and DDR4 memory with the Core i3-12300 simply because of its price point. While users will most likely be looking to use DDR5 with performance SKUs such as the Core i9-12900K, Core i7-12700K, and Core i5-12600K, users building a new system with the Core i3-12300 are more likely to go down a more affordable route. This includes using DDR4 memory, which is inherently cheaper than DDR5, and opting for a cheaper motherboard such as an H670, B660, or H610 option. Such systems do give up some performance versus what the i3-12300 can do at its peak, but in return they can bring costs down significantly.

Traditionally we test our memory settings at JEDEC specifications. JEDEC is the standards body that determines the requirements for each memory standard. In the case of Intel's Alder Lake, the Core i3 supports both DDR5 and DDR4 memory. Below are the memory settings we used for our DDR5 versus DDR4 testing:

- DDR4-3200 CL22

- DDR5-4800(B) CL40
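To put the two JEDEC settings above in perspective, the following minimal Python sketch converts each one into an approximate first-word CAS latency and a theoretical peak bandwidth. It is an illustration rather than part of the benchmark suite: the function names are hypothetical, and it assumes a dual-channel configuration with 64 data bits per channel (for DDR5, two 32-bit subchannels per DIMM). Measured latency and bandwidth will differ from these theoretical figures.

```python
def cas_latency_ns(data_rate_mts: int, cas_cycles: int) -> float:
    """Approximate first-word CAS latency in nanoseconds.

    DDR transfers twice per clock, so the memory clock in MHz is half
    the data rate in MT/s, and one cycle lasts 2000 / data_rate ns.
    """
    return cas_cycles * 2000.0 / data_rate_mts


def peak_bandwidth_gbs(data_rate_mts: int,
                       bus_width_bits: int = 64,
                       channels: int = 2) -> float:
    """Theoretical peak bandwidth in GB/s.

    Assumption: 64 data bits per channel and two channels populated.
    """
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mts * bytes_per_transfer * channels / 1000.0


# The two JEDEC settings used in this comparison: (data rate in MT/s, CL)
kits = {
    "DDR4-3200 CL22": (3200, 22),
    "DDR5-4800 CL40": (4800, 40),
}

for name, (rate, cl) in kits.items():
    latency = cas_latency_ns(rate, cl)
    bandwidth = peak_bandwidth_gbs(rate)
    print(f"{name}: {latency:.2f} ns CAS latency, {bandwidth:.1f} GB/s peak")
```

Under these assumptions, DDR5-4800 CL40 offers 50% more theoretical bandwidth than DDR4-3200 CL22 (76.8 GB/s versus 51.2 GB/s), but a somewhat higher absolute CAS latency (about 16.7 ns versus 13.75 ns). Any gains from DDR5 are therefore most plausible in bandwidth-bound workloads.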

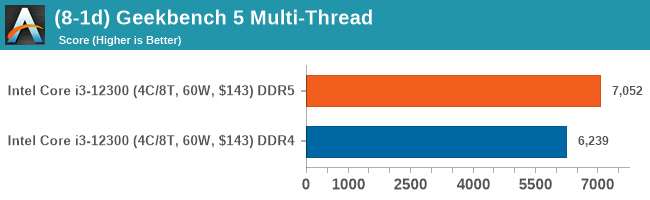

CPU Performance: DDR5 versus DDR4

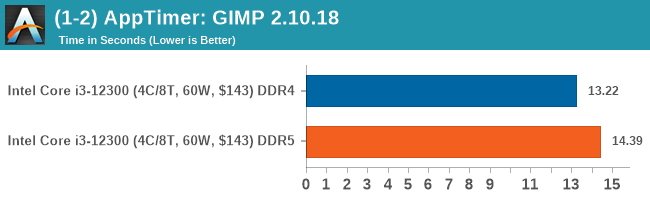

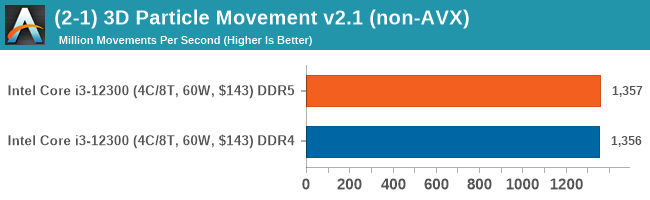

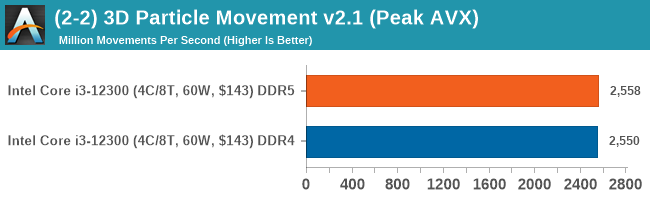

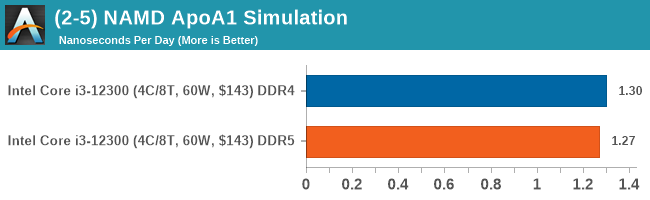

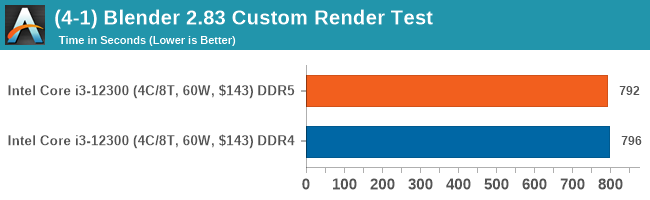

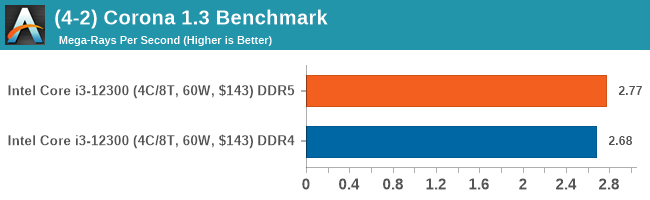

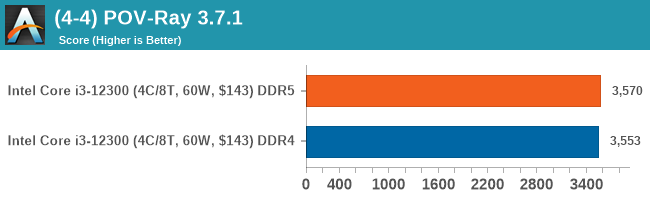

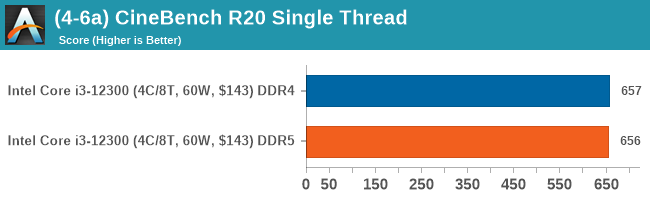

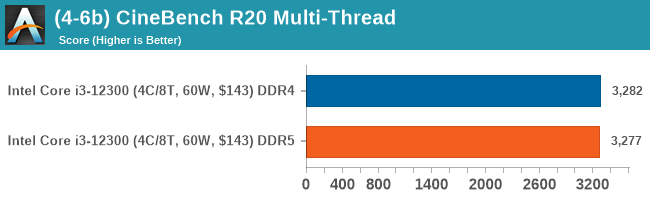

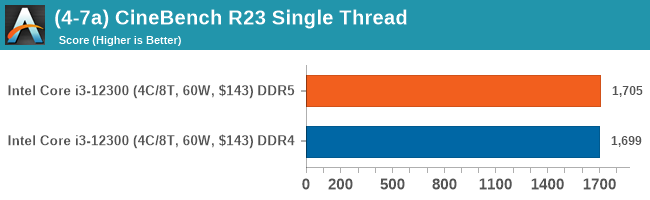

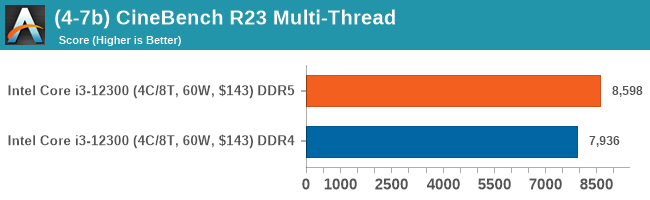

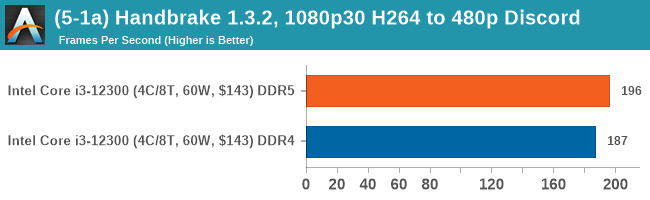

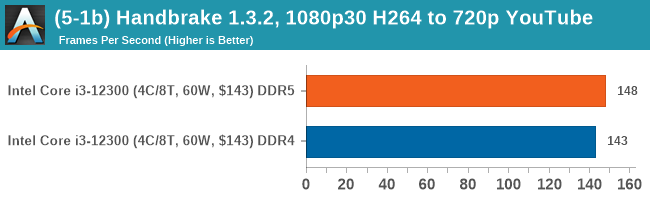

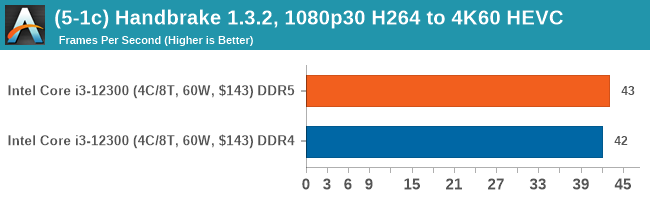

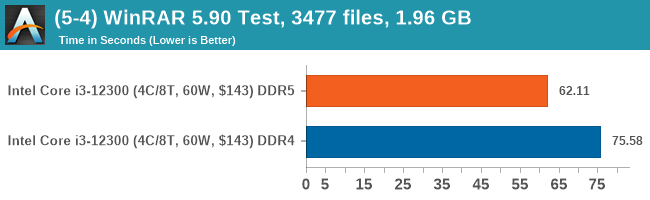

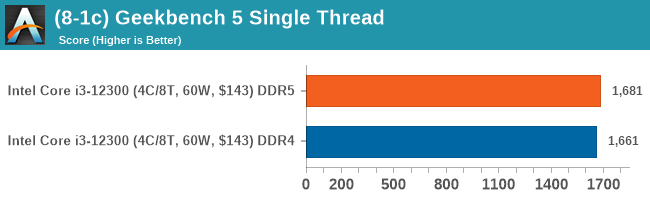

In our computational benchmarks, there wasn't much difference between DDR5-4800 CL40 and DDR4-3200 CL22 when using the Core i3-12300. The biggest difference came in our WinRAR benchmark, which relies heavily on memory performance; DDR5 performed around 21% better than DDR4 in this scenario.

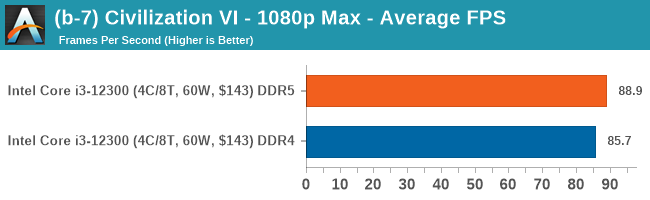

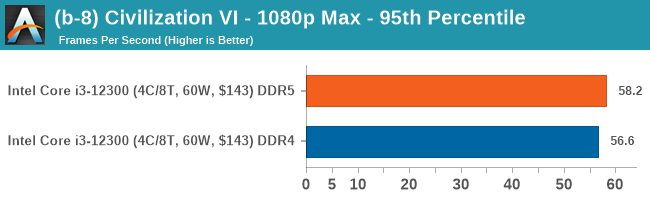

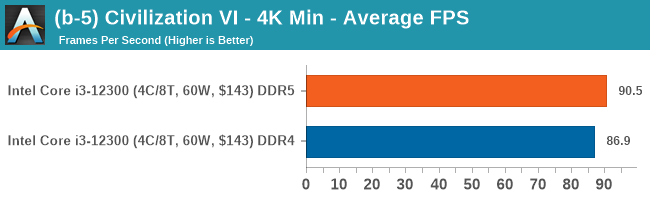

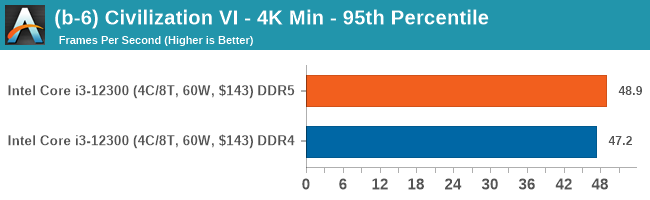

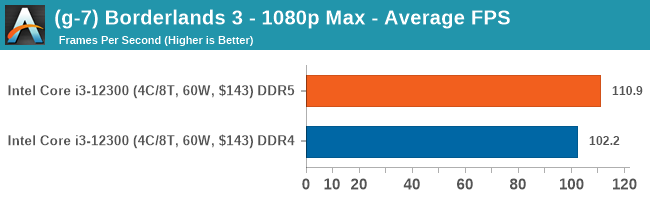

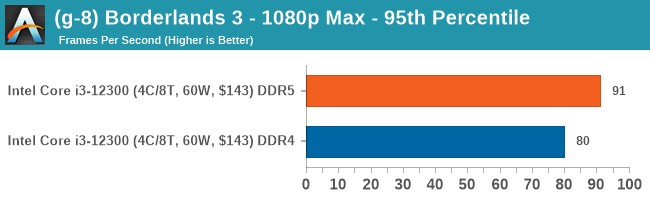

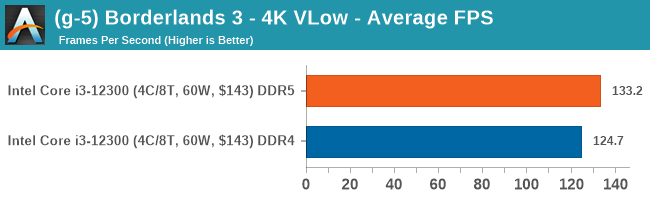

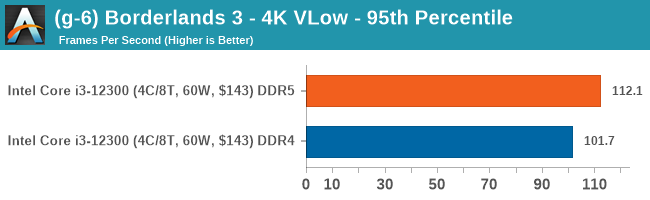

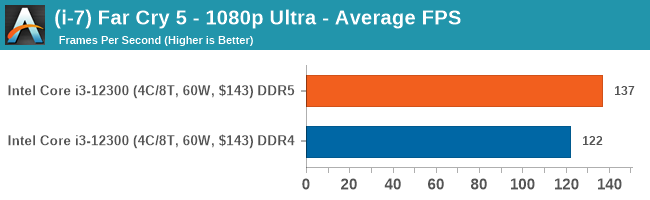

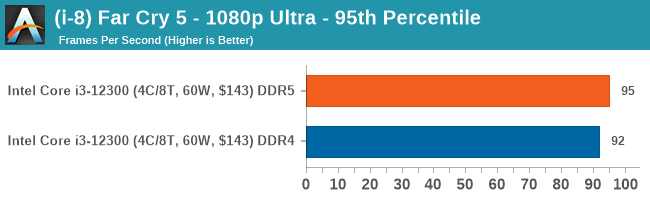

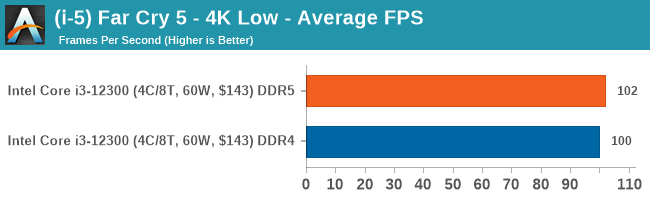

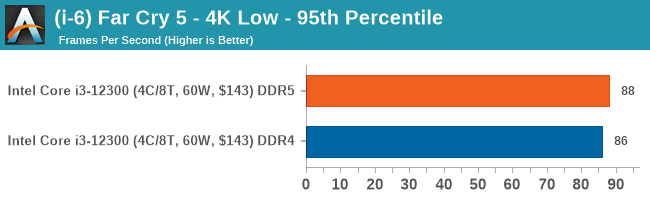

Gaming Performance: DDR5 versus DDR4

On the whole, DDR5 does perform better in our gaming tests, but not enough to make it a 'must have' in comparison to DDR4 memory. The gains overall are marginal for the most part, with DDR5 offering around 3-7 more frames per second than DDR4 memory, depending on each title's game engine optimization.

140 Comments

mode_13h - Friday, March 11, 2022 - link

Always glad to share!

I know about laziness. I probably should be working on programming puzzles, since I hear job interviews tend to be big on those. The last time I did anything like that was Google's "foobar", which was pretty fun. I did well enough to get an interview, but I didn't pursue it.

GeoffreyA - Monday, March 14, 2022 - link

I really wish I had known about these things, or that they had existed, 10-15 years ago. I had a thirst for programming back then and, if I may say so, would've done well. I've let go of thinking of myself as a programmer any more, but still hope to keep up an acquaintance with code.

mode_13h - Monday, March 14, 2022 - link

Anything to keep your mind active is good!

Especially learning *new* things. The first time I tried to learn another spoken language, as an adult, I could feel the blood rushing to my brain as I struggled to remember and pronounce new words and phrases.

GeoffreyA - Monday, March 14, 2022 - link

Absolutely!

I am learning francaise, the beautiful language itself these days (and incidentally, it makes me so disappointed in English as always). There was a great article on Quanta last week that really got my brain working too.

https://www.quantamagazine.org/crisis-in-particle-...

mode_13h - Tuesday, March 15, 2022 - link

OMG, I stay away from any cutting-edge theoretical physics. I'm not investing that much effort into trying to understand something that will likely turn out to be wrong. It'd be different if I had a stake in the matter, but there's more than enough more practical stuff I should be learning.

But, if you enjoy it, and trying to wrap your head around it, then it's far from the worst way you could spend your time!

GeoffreyA - Friday, March 18, 2022 - link

I enjoy it, and wish I was a physicist, but it does drain out the mind considerably, and afterwards one feels it's all rather meaningless, and it's the practical business of life that really counts: love, family, work, etc.

mode_13h - Friday, March 18, 2022 - link

A lot of physicists don't have a career doing physics!

It's not a bad field of study, but it doesn't pay the bills for many.

That said, I'm sure all the big quantum computing projects are staffed by some of the best physicists.

GeoffreyA - Saturday, March 19, 2022 - link

Yep, there's big money to be made in this field right now. Intel should jump on the quantum bandwagon any day now, if they haven't already done so.

Silver5urfer - Thursday, March 3, 2022 - link

High respect to AT team, for mentioning the fundamental design flaw of this 12th generation. Intel really screwed up hard. Nobody cares, esp those Youtubers.

They messed up the ILM hard. And the AVX512 fuse off is another gigantic kick in the face, latest update they are saying Intel will fuse them off from factory. If we see the silicon area on the ADL processors for P cores it occupies a good chunk of space plus it allows the P core to fully unleash it's performance (no more E-Cores hampering the Uncore and Powerplane and overall CPU performance)

Prime reason to skip this entire 12th gen, esp with the new rumors saying RPL LGA1700 Z700 chipset might be DDR5 only, so you get this haphazardly designed ILM which requires end user to perform a Socket mod (I would not do it despite love tinkering because the torque and all the fitting is not public, Igor clearly mentioned this) for DDR4 or buy the uber expensive DDR5 kits which have 2 flaws on their own - Price to performance, Dual Rank kits mandatory for ADL IMC to maximize the quad channel speed and performance, a.k.a needing APEX or Unify X or Tachyon all top end boards HWBot grade.

Intel really messed this, a solid CPU Arch but from an enthusiast point of view who wants to use a PC for decades going forth. AMD also, AM4 has it's own share of issues - AGESA1.2.0.5 is busted, they still did not fix the IOD USB issues through firmware, it has been plaguing the platform. And now no more X3D CPUs refresh which would have perfectly fixed all the firmware problems and IOD and DRAM plus the WHEA. Sad, still Ryzen is best if you do not want to OC or tweak just enable PBO2 and that's it. Let it do it's job, and do not touch DRAM past 3600MHz. For Intel LGA1200 10th gen is best for SMT performance and all workloads for those who want PCIe4.0 (still not a big deal because not much use) a 11900K is fine but the absolute worst class of binning, poor IMC is a strict no no. The biggest loss going with LGA1200 Z590 is DMI speed is very low. But since majority will not saturate the NVMe on a constant load it is okay, a shame if 10900K had DMI of 11th gen it would have been solid since both X570 and Z590 are practically same in PCH link lanes, only on the CPU side Ryzen has extra USB but with the crapping out it doesn't make sense, at-least to me.

AM5 needs maturity as well, first customers will always end up being guinea pigs. Still I would like to see how AMD plays their game, and I'm looking forward since it won't have BS E-Core crap. Full fast fat cores.

Makaveli - Thursday, March 3, 2022 - link

No USB issues here on AGESA 1.2.0.3 Patch C or WHEA.