The Intel Core i3-12300 Review: Quad-Core Alder Lake Shines

by Gavin Bonshor on March 3, 2022 8:30 AM EST

Intel Core i3-12300 Performance: DDR5 vs DDR4

Intel’s 12th generation processors, from the flagship Core i9-12900K at the top of the stack down to more affordable entry-level offerings such as the Core i3-12300, allow users to build a new system with the latest technologies available. One of the main elements that makes Intel’s Alder Lake processors flexible for users building a new system is that they support both DDR5 and DDR4 memory. It’s no secret that DDR5 memory costs (far) more than its already established DDR4 counterpart. Part of this is an early adopter’s fee: having the latest and greatest technology comes at a price premium.

The reason we have opted to test the difference in performance between DDR5 and DDR4 memory with the Core i3-12300 is simply down to the price point. While users will most likely be looking to use DDR5 with the performance SKUs such as the Core i9-12900K, Core i7-12700K, and Core i5-12600K, users building a new system with the Core i3-12300 are more likely to go down a more affordable route. This includes using DDR4 memory, which is inherently cheaper than DDR5, and opting for a cheaper motherboard such as an H670, B660, or H610 option. Such systems do give up some performance versus what the i3-12300 can do at its peak, but in return they can bring costs down significantly.

Traditionally we test our memory settings at JEDEC specifications. JEDEC is the standards body that determines the requirements for each memory standard. In the case of Intel's Alder Lake, the Core i3 supports both DDR5 and DDR4 memory. Below are the memory settings we used for our DDR5 versus DDR4 testing:

- DDR4-3200 CL22

- DDR5-4800(B) CL40
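To put the two settings above in context, here is a minimal sketch that converts them into first-word CAS latency and theoretical peak bandwidth. The helper names are hypothetical, and the 64-bit data bus per channel and the dual-channel configuration are assumptions for illustration; the figures are theoretical ceilings, not measured results from this review.

```python
def cas_latency_ns(data_rate_mts: int, cas_cycles: int) -> float:
    """First-word CAS latency in nanoseconds.

    The memory clock runs at half the data rate (double data rate),
    so one clock cycle lasts 2000 / data_rate_mts nanoseconds.
    """
    return cas_cycles * 2000.0 / data_rate_mts


def peak_bandwidth_gbs(data_rate_mts: int,
                       bus_width_bits: int = 64,
                       channels: int = 2) -> float:
    """Theoretical peak bandwidth in GB/s.

    Assumes a 64-bit data bus per channel and two populated channels.
    """
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mts * bytes_per_transfer * channels / 1000.0


# (data rate in MT/s, CAS latency in clock cycles), as listed above
configs = {
    "DDR4-3200 CL22": (3200, 22),
    "DDR5-4800 CL40": (4800, 40),
}

for name, (rate, cl) in configs.items():
    print(f"{name}: {cas_latency_ns(rate, cl):.2f} ns CAS latency, "
          f"{peak_bandwidth_gbs(rate):.1f} GB/s peak (dual channel)")
```

Under these assumptions, DDR5-4800 CL40 offers roughly 50% more theoretical bandwidth than DDR4-3200 CL22, but its CAS latency is higher in absolute terms (about 16.7 ns versus 13.75 ns). This suggests bandwidth-bound workloads have the most to gain from DDR5, while latency-sensitive ones may see little change.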

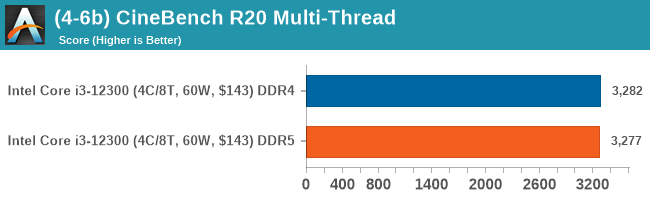

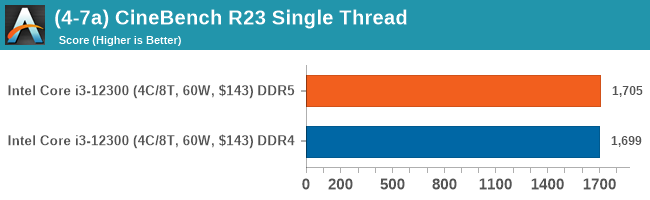

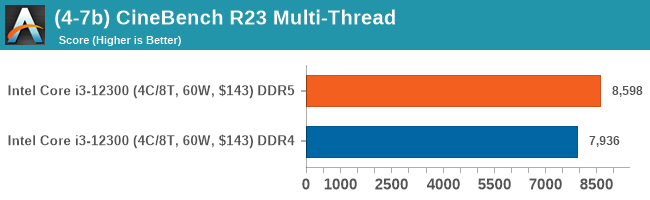

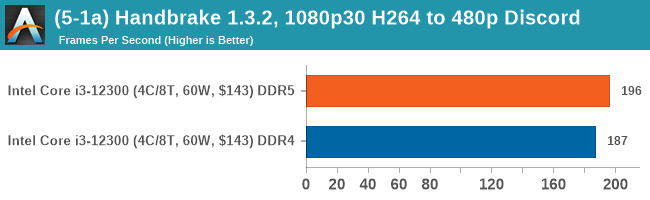

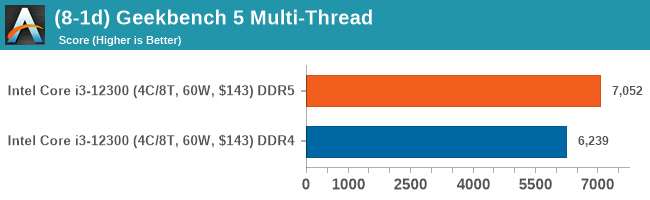

CPU Performance: DDR5 versus DDR4

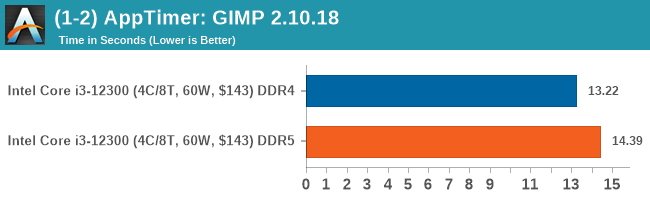

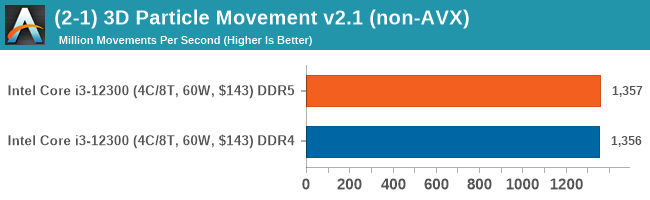

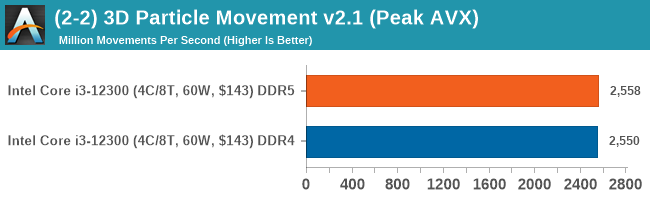

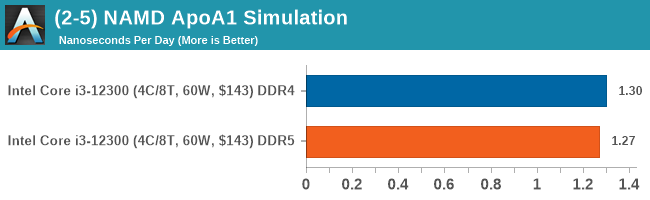

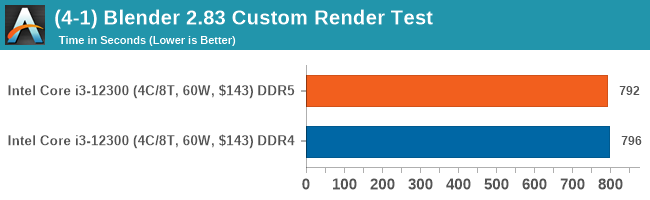

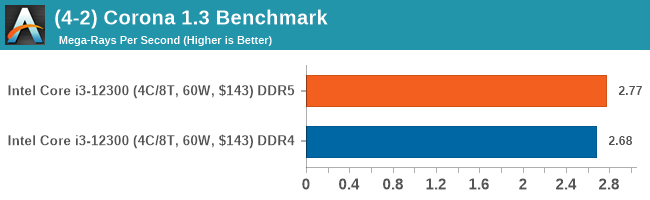

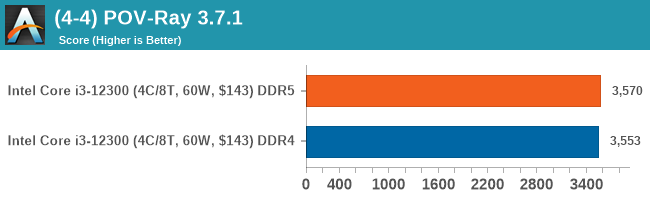

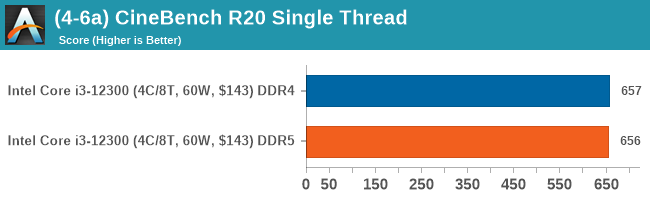

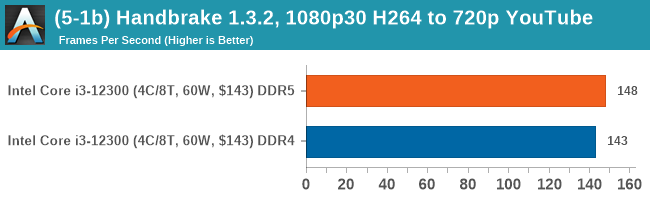

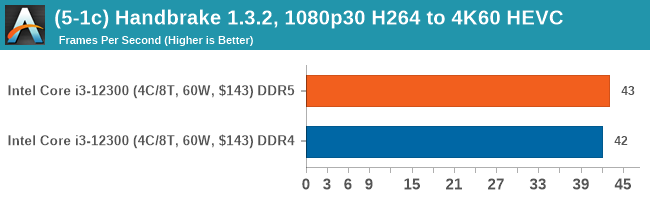

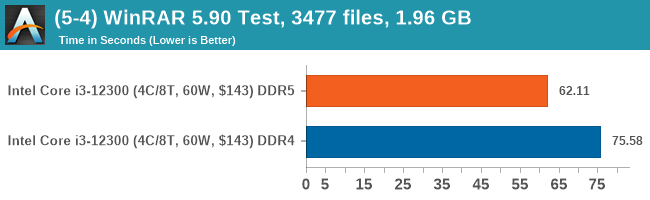

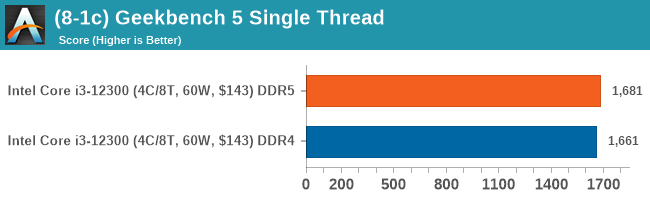

In our computational benchmarks, there wasn't much difference between DDR5-4800 CL40 and DDR4-3200 CL22 when using the Core i3-12300. The biggest difference came in our WinRAR benchmark, which relies heavily on memory performance; DDR5 performed around 21% better than DDR4 in this scenario.

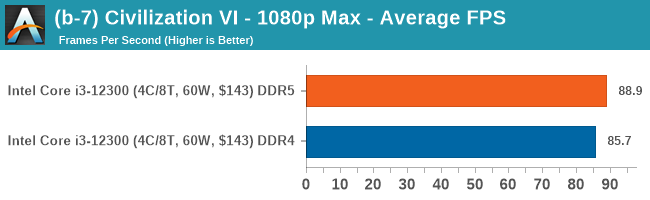

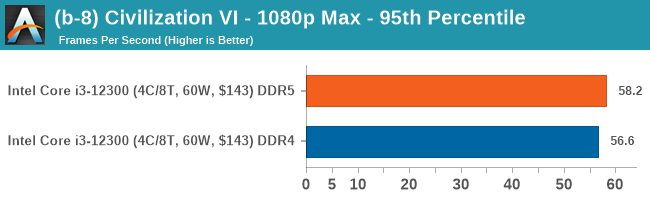

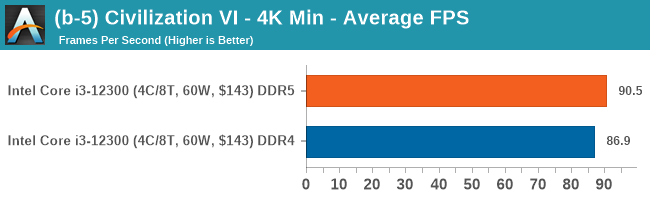

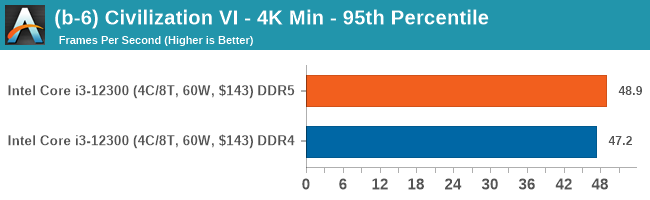

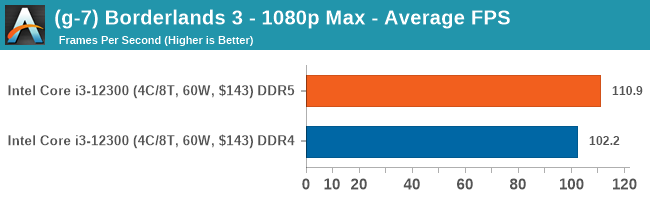

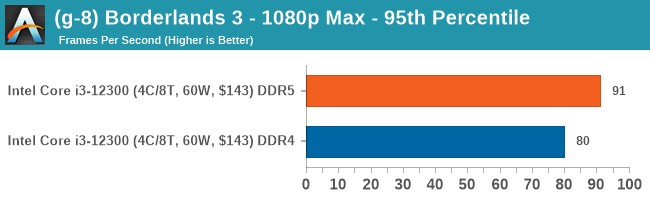

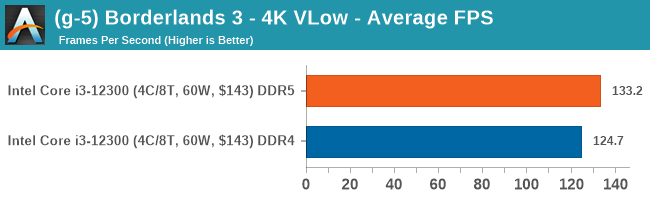

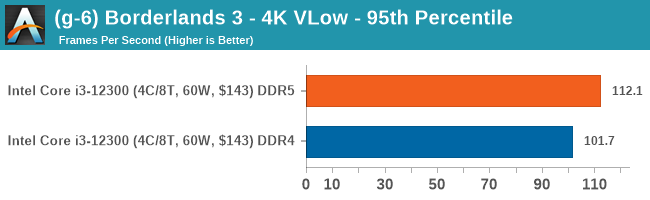

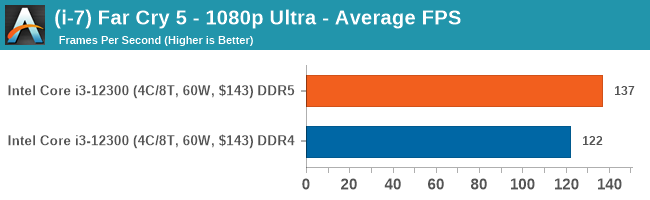

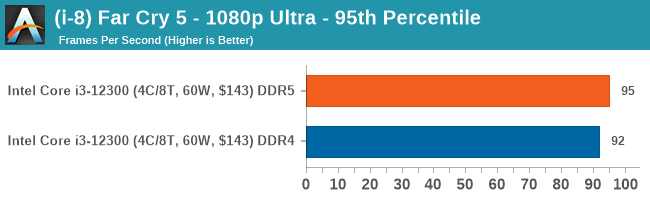

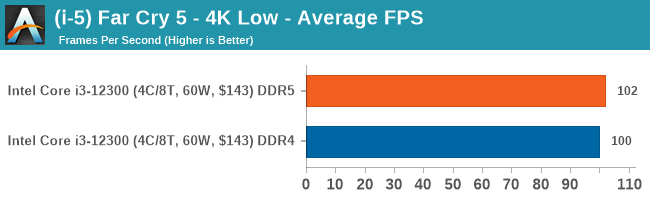

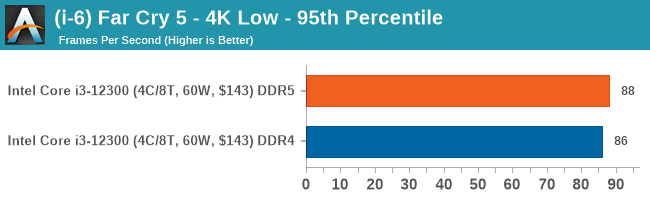

Gaming Performance: DDR5 versus DDR4

On the whole, DDR5 does perform better in our gaming tests, but not enough to make it a 'must have' in comparison to DDR4 memory. The gains overall are marginal for the most part, with DDR5 offering around 3-7 more frames per second over DDR4 memory, depending on the title's game engine optimization.

140 Comments


TheinsanegamerN - Thursday, March 3, 2022 - link

You could read the review and look at the benchmarks, that may help

SunMaster - Thursday, March 3, 2022 - link

Todays games are nowhere near single threaded, even though there is a main thread. The reason alder lake does well is because it has several/many cores/threads clocked high performing well. both xbox and playstation have had multicore amds for a decade (since 2006 for PS, 2013 for xbox), which forced developers to focus on weaker threads rather than the old fashioned monolithic design.

SunMaster - Thursday, March 3, 2022 - link

Can't edit my post it seems, but by multicore AMDS I meant 8 core.

GeoffreyA - Tuesday, March 8, 2022 - link

I would say, ST is really the building block of multi-threading. Get that single brick strong, and the entire wall will be strong.

mode_13h - Wednesday, March 9, 2022 - link

Ah, but it's not that simple. You need a good interconnect, cache, memory system, and clock/power-management.

For instance, just look at Ampere Altra. Even though its single-thread performance is somewhat lacking, it shines at MT.

GeoffreyA - Wednesday, March 9, 2022 - link

Indeed, the mortar and bond style are just as important as the brick.

mirancar - Thursday, March 3, 2022 - link

most games have some sort of single thread bottleneck

also web browser performance is highly affected by the single thread performance

this is what most people do 99% of time on their computers, almost nobody is "rendering"

more cores help up to a certain point, then it becomes useless. anything above 6C12T is usually completely useless. single thread perf matters much more than 16 core 32 thread benchmark

Calin - Thursday, March 3, 2022 - link

Office applications. Legacy software. Interpreted code (as was the Visual Basic for Applications). Compilation (C or C++) of a single file.

There are many places where "single-core" performance counts, as - if your typical operation lasts only a few seconds you might not really care to optimize (and going parallel might not be easy, and might not even be possible for some problems).

jcb2121 - Thursday, March 3, 2022 - link

Zwift

Wereweeb - Thursday, March 3, 2022 - link

Virtually every application. Google "Amdahl's Law".