Intel to Build Silicon for Fully Homomorphic Encryption: This is Important

by Dr. Ian Cutress on March 8, 2021 11:00 AM EST

When considering data privacy and protection, there is no data more important than personal data, whether that’s medical, financial, or even social. The discussion around access to our data, or even our metadata, becomes about who knows what, and whether my personal data is safe. Today’s announcement from Intel, Microsoft, and DARPA is a program designed around keeping information safe and encrypted, while still using that data to build better models or provide better statistical analysis without disclosing the actual data. The technique is called Fully Homomorphic Encryption, but it is so computationally intense that the concept is almost useless in practice. This program between the three organizations is a driver to provide IP and silicon to accelerate the compute, enabling a more secure environment for collaborative data analysis.

Mind Your Data

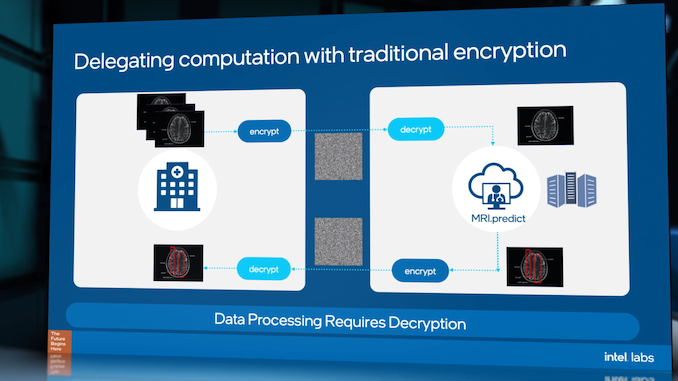

Data protection is one of the most important aspects to the future of computing. The volume of personal data is continually growing, as well as the value of that data, and the number of legal protections required. This makes any processing of personal, private, and confidential data difficult, often resulting in dedicated data silos, because any processing requires data transfer coupled with encryption/decryption, involving trust that isn’t always possible. All it takes is for one key in the chain to be lost or leaked, and the dataset is compromised.

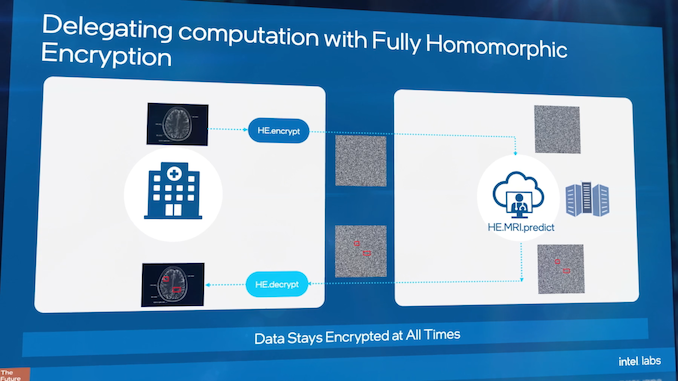

There is a way around this, known as Fully Homomorphic Encryption (FHE). FHE makes it possible to take encrypted data, transfer it to where it needs to go, perform calculations on it, and get results without ever knowing the exact underlying dataset.
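The idea of computing on ciphertexts can be illustrated with a toy example. The sketch below is not FHE and is not what Intel or Microsoft use: it is a minimal Paillier cryptosystem, a well-known scheme that is only additively homomorphic (a partial scheme). The primes are deliberately tiny and insecure, and all function names are hypothetical, chosen for illustration.

```python
import math
import secrets

# Toy Paillier cryptosystem: additively homomorphic only (partial, NOT fully
# homomorphic). The primes are far too small to be secure; illustration only.
P, Q = 104_729, 1_299_709  # the 10,000th and 100,000th primes (toy sizes)


def keygen(p=P, q=Q):
    """Return (public_key, private_key) for the toy scheme."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1
    # mu = (L(g^lam mod n^2))^-1 mod n, with L(x) = (x - 1) // n
    mu = pow((pow(g, lam, n * n) - 1) // n, -1, n)  # needs Python 3.8+
    return (n, g), (lam, mu)


def encrypt(pub, m):
    """Encrypt integer m (0 <= m < n) with fresh randomness."""
    n, g = pub
    while True:
        r = secrets.randbelow(n - 1) + 1  # random blinding factor in [1, n-1]
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n * n) * pow(r, n, n * n)) % (n * n)


def decrypt(pub, priv, c):
    """Recover the plaintext integer from ciphertext c."""
    n, _ = pub
    lam, mu = priv
    return ((pow(c, lam, n * n) - 1) // n * mu) % n


def add_encrypted(pub, c1, c2):
    """Multiplying ciphertexts adds the hidden plaintexts (mod n)."""
    n, _ = pub
    return (c1 * c2) % (n * n)


def scale_encrypted(pub, c, k):
    """Raising a ciphertext to k multiplies the hidden plaintext by k (mod n)."""
    n, _ = pub
    return pow(c, k, n * n)


pub, priv = keygen()
c_a, c_b = encrypt(pub, 120), encrypt(pub, 85)

# The party doing the arithmetic only ever sees c_a and c_b, never 120 or 85.
total = decrypt(pub, priv, add_encrypted(pub, c_a, c_b))
tripled = decrypt(pub, priv, scale_encrypted(pub, c_a, 3))
print(total, tripled)  # prints: 205 360
```

A fully homomorphic scheme extends this idea to support both addition and multiplication of hidden values, which is what allows arbitrary computation and also what makes it so much more expensive.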

Take, for example, the analysis of medical records: if a researcher needs to process a specific dataset for some analysis, the traditional method would be to encrypt the data, send the data, decrypt the data, and process it. However, giving the researcher access to the specifics in the records might not be legal, or might face regulatory challenges. With FHE, that researcher can take the encrypted data, perform the analysis, and get a result, without ever knowing any specifics of the dataset. This might involve combined statistical analysis of a population over multiple encrypted datasets, or taking those encrypted datasets and using them as additional inputs to train machine learning algorithms, enhancing the accuracy through having more data. Of course, the researcher has to trust that the data given is complete and genuine, although that is arguably a different topic from enabling compute on encrypted data.

One of the reasons this matters is that the best insights from data come from the largest datasets. This includes being able to train a neural network: the best neural networks are running into the issue of not having enough data, or are facing regulatory hurdles because of the sensitive nature of that data. This is why Fully Homomorphic Encryption, the ability to analyze data without knowing its contents, matters.

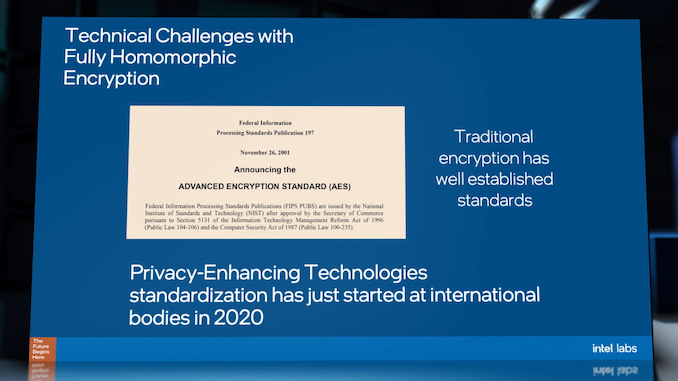

Fully Homomorphic Encryption, as a concept, has been around for several decades, but it has only been realized in the last 20 years or so. A number of partial homomorphic encryption (PHE) schemes were presented in that initial timeframe, and since 2010 several PHE/FHE designs able to perform basic operations on encrypted data, or ciphertexts, have been developed, along with a number of libraries built around industry standards. Some of these are open source. Many of these methods are computationally complex, as working on encrypted data adds substantial overhead, although efforts are being made with SIMD-like packing and other features to accelerate processing. Even as FHE schemes are accelerated, the math never decrypts the data. Because the data is always in an encrypted state, it can (arguably) be used by untrusted third parties, as the underlying information is never exposed. (One might argue that a sufficiently large dataset could reveal more than intended despite being encrypted.)

Today’s Announcement: Custom Silicon for FHE

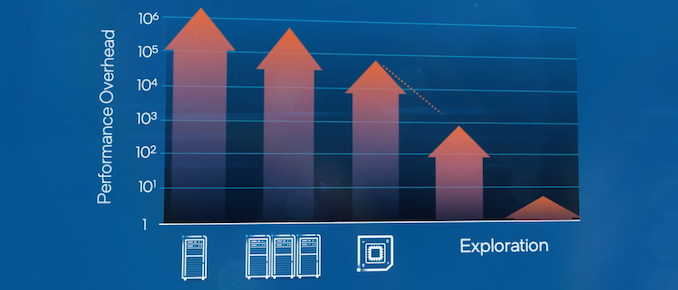

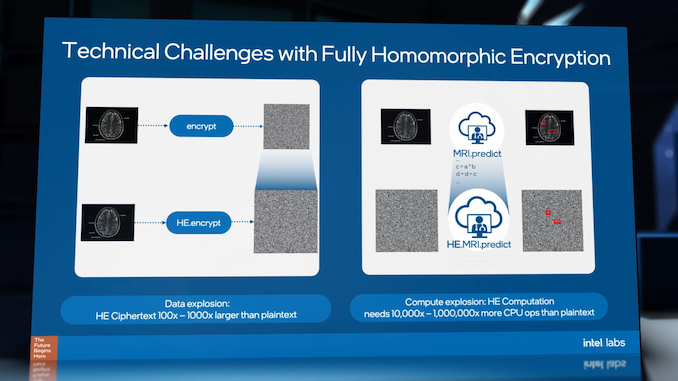

When measuring the performance of FHE compute, the result is compared to the same analysis run against the plaintext version of the data. Because of the computational complexity of FHE, current compute methods are substantially slower. Encryption methods that enable FHE can increase the size of the data by 100-1000x, and compute on that data is then 10,000x to 1 million times slower than conventional compute. That means that one second of compute on the raw data can take from 3 hours to 12 days.
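The "3 hours to 12 days" figure follows directly from the quoted slowdown range. A minimal sketch of the arithmetic, using a hypothetical helper name and assuming the slowdown factor applies uniformly to the whole workload:

```python
from datetime import timedelta


def fhe_runtime(plaintext_seconds, slowdown):
    """Estimated FHE runtime, assuming a uniform slowdown factor."""
    return timedelta(seconds=plaintext_seconds * slowdown)


# Slowdown range quoted in the article: 10,000x to 1,000,000x.
for slowdown in (10_000, 1_000_000):
    seconds = fhe_runtime(1, slowdown).total_seconds()
    print(f"{slowdown:>9,}x slower: {seconds / 3600:.1f} hours "
          f"({seconds / 86400:.2f} days)")
```

One second of plaintext compute works out to roughly 2.8 hours at the low end and about 11.6 days at the high end, which the article rounds to 3 hours and 12 days.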

So whether that means combining hospital medical records over a state, or customizing a personal service using personal metadata gathered on a user’s smartphone, FHE at that scale is no longer a viable solution. Enter the DARPA DPRIVE program.

- DARPA: Defense Advanced Research Projects Agency

- DPRIVE: Data Protection in Virtual Environments

Intel has announced that as part of the DPRIVE program, it has signed an agreement with DARPA to develop custom IP leading to silicon to enable faster FHE in the cloud, specifically with Microsoft on both Azure and JEDI cloud, initially with the US government. As part of this multi-year project, expertise from Intel Labs, Intel’s Design Engineering, and Intel’s Data Platforms Group will come together to create a dedicated ASIC to reduce the computational overhead of FHE over existing CPU-based methods. The press release states that the target is to reduce processing time by five orders of magnitude from current methods, reducing compute times from days to minutes.
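As a rough sanity check on what "five orders of magnitude" would mean, the sketch below applies a 100,000x speedup to the 10,000x to 1,000,000x slowdown range quoted earlier. This is an assumption for illustration only: the press release does not say that its baseline matches those figures, and the helper name is hypothetical.

```python
SPEEDUP_TARGET = 10 ** 5  # "five orders of magnitude", per the press release


def residual_slowdown(current_slowdown, speedup=SPEEDUP_TARGET):
    """Slowdown versus plaintext that would remain after the target speedup."""
    return current_slowdown / speedup


# Slowdown range quoted earlier in the article (assumed to be the baseline).
for current in (10_000, 1_000_000):
    print(f"{current:>9,}x today -> {residual_slowdown(current):g}x after speedup")
```

Under that assumption, the worst case would land at around 10x slower than plaintext compute. The low end comes out below 1x, which suggests the program's baseline is not identical to the range above.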

Intel already has a foot in the door when it comes to FHE, having a research team inside Intel Labs dedicated to the issue. This has primarily been on the side of software, standards, and regulatory hurdles, but will now also move into hardware design, cloud software stacks, and collaborative deployment within Azure and JEDI cloud for the US government. Other highlighted target markets include healthcare, insurance, and finance.

During the Intel Labs day in December 2020, Intel detailed some of the direction it was already going in with this work, along with standards and development to parallel traditional encryption but at an international scale given the additional regulatory hurdles. Microsoft will now become part of that discussion with the DPRIVE program, along with Intel’s continued investments at the academic level.

Aside from the ‘five orders of magnitude’ target, today’s announcement doesn’t set any other definitive goals, nor does it present a time-frame, instead saying this is a ‘multi-year’ agreement. It will be interesting to see how much Intel or its academic affiliates discuss the topic beyond today, other than the standardization work.

Related Reading

- Federated Learning and Fully Homomorphic Encryption at Intel Labs Day 2020

- Microsemi Announces SmartRAID Cards With On-Board Supercapacitors And Encryption

- Synaptics Discusses Fingerprint Security and the Need For End-to-End Encryption

31 Comments

WaltC - Monday, March 8, 2021 - link

It will be a milestone when Intel finally develops a new x86 architecture as fast and as secure as AMD's zen2/3. Until then....yawn...

dwbogardus - Monday, March 8, 2021 - link

The fact that Intel can even remain in a close second place, using a 14 nm process is impressive. Imagine what they could do with TSMC's 7 nm process! It would almost certainly outperform AMD by a significant margin.

WaltC - Monday, March 8, 2021 - link

Heh...;) The only problem with your argument is that Zen2/3 CPUs are already much faster than Intel *per clock*...so not even 7nm will help them. By the time Intel gets to 7nm AMD will be on Zen4 (or 5?) @ 5nm and another new architecture that will lengthen AMD's already substantial performance lead. Intel doesn't need to "imagine" anything--it needs solid products--desperately. Imaginary CPUs just don't seem to sell all that well for some reason...;)

BedfordTim - Tuesday, March 9, 2021 - link

They are available on Intel's 10nm which is much better than 14nm, but to really go faster they need to improve the IPC. Shrinking the process node will just help power consumption.

Wereweeb - Tuesday, March 9, 2021 - link

Zen was already more efficient per Watt using 14nm, Intel was only better at gaming. Now AMD is at Zen 3, and readying up Zen 4, which improved the latency of the overall design and reworked the guts of the architecture to be even more efficient.

Intel had the benefit of refining their process node and architecture for each other. Now they'll have to use TSMC to catch up, and adapt their architecture for TSMC's node.

I.e. don't kid yourself, Intel's architecture is behind AMD's. Alder Lake will only allow them to catch up to AMD, in part thanks to Intel's vast R&D resources allowing them to work on small and big cores at the same time.

JeffJoshua - Tuesday, March 9, 2021 - link

That's exactly what I was thinking. Plus AMD has less leeway before things would need to become subatomic. I skipped past the gaming benchmarks on the AnandT review of the 11700K, where I understand the performance increase is abysmal, but for everything else they're not that far behind. And employers don't care if your PC can't run Crysis; in fact they'd probably be happy if there was a way to disable running games.

PleaseMindTheGap - Tuesday, March 9, 2021 - link

Why hate so much Intel and love AMD! AMD will do exactly what Intel does in terms of price "if one day AMD reaches the Intel´s position"! This remembers me a kind of country that is trying to get rid of a dictator, once it leaves the place other will occupy the position and will make sometimes worst than the previous. Intel is much more than a processor nano technology! Do not forget that!

grant3 - Tuesday, March 9, 2021 - link

I hope you do realize that Intel is a large company with many different teams, and the people working on FHE are barely related to the people working on x86 architectures.

If you're going to yawn about FHE, and only care about x86 architectures, then why are you reading this article?

It's like reading an article about Honda prototyping a levitating lawnmower and you're saying "yawn, who cares, it's not a self-driving car"

brucethemoose - Monday, March 8, 2021 - link

I have trouble wrapping my head around this, as data can be structured in so many different ways, and there are many unique operations on different architectures to perform on each structure.

Can an ASIC handle so many possibilities? This seems more like FPGA+accelerator territory, which is a field Microsoft and Intel (and perhaps DARPA?) happen to be experienced in.

WaltC - Monday, March 8, 2021 - link

IMO, announcements without products are so much vapor. It's always been that way...no matter what companies are involved--applies to AMD just as much as to anyone else. Such announcements are only important when they lead to real products--the world is littered with the carcasses of announcements that never went anywhere. I'm cynical that way--I've seen too many "announcements" that turned out to be vaporware, so these days only shipping products impress me and are "important."