Intel to Offer Socketed 56-core Cooper Lake Xeon Scalable in new Socket Compatible with Ice Lake

by Dr. Ian Cutress on August 6, 2019 8:01 AM EST- Posted in

- CPUs

- Intel

- Xeon

- 14nm

- 10nm

- Xeon Platinum

- Ice Lake

- Xeon Scalable

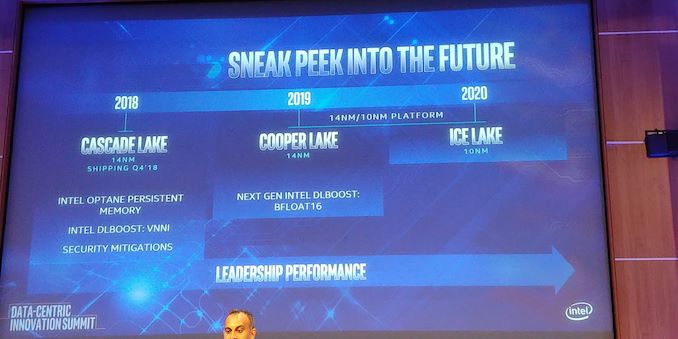

- Cooper Lake

Today Intel is announcing some of its plans for its future Xeon Scalable platform. The company has already announced that after the Cascade Lake series of processors launched this year that it will bring forth another generation of 14nm products, called Cooper Lake, followed by its first generation of 10nm on Xeon, Ice Lake. Today’s announcement relates to the core count of Cooper Lake, the form factor, and the platform.

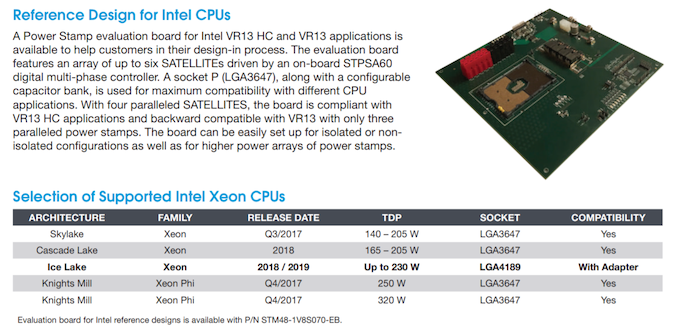

Today Intel is confirming that it will be bringing its 56-core Xeon Platinum 9200 family to Cooper Lake, so developers can take advantage of its new bfloat16 instructions with a high core count. On top of this, Intel is also stating that the new CPUs will be socketed, unlike the 56-core Cascade Lake CPUs which are BGA only. In order to necessitate the socketing of the product, this means that a new socket is required, which Intel has confirmed will also support Ice Lake in the same socket. According to one of our sources, this will be an LGA4189 product.

Based on our research, it should be noted that we expect bfloat16 support to only be present in Cooper Lake and not Ice Lake. Intel has stated that the 56-core version of Cooper Lake will be in a similar format to its 56-core Cascade Lake, which we take to mean that it is two dies on the same chip and limited to 2S deployments, however based on our expectations for Ice Lake Xeon parts, we have come to understand that there will be eight memory channels in the single chip design, and perhaps up to 16 memory channels with the dual-die 56-core version. (It will be interesting to see 16 channels at 2DPC on a 2S motherboard, given that 12 channels * 2DPC * 2S barely fits into a standard 19-inch chassis.)

Intel’s Lisa Spelman, VP of the Data Center Group and GM of Xeon, stated in an interview with AnandTech last year that Cooper Lake will be launched in 2019, with Ice Lake as a ‘fast follow-on’, expected in the middle of 2020. That’s not a confirmation that the 56-core version of Cooper will be in 2019, but this is the general cadence for both families that Intel is expected to run to.

At Intel’s Architecture Day in December 2018, Sailesh Kottapalli showed off an early sample of Ice Lake Xeon silicon. At the time I was skeptical, given that Intel’s 10+ process still looked like it was having yield issues with small quad-core chips, let alone large Xeon-like designs. Cooper Lake on 14nm should easily be able to be rolled into a dual-die design, like Cascade Lake, so it will be interesting to see where 10nm Ice Lake Xeon will end up.

Intel states that 56-core based Cascade Lake-AP Xeon Scalable systems are currently available as part of pre-built systems from major OEMs such as Atos, HPE, Lenovo, Penguin Computing, Megware, and authorized resellers. Given that Cooper Lake 56-core will be socketed, I would imagine that the design should ultimately be more widely available.

Related Reading

- Intel Xeon Update: Ice Lake and Cooper Lake Sampling, Faster Future Updates

- Power Stamp Alliance Exposes Ice Lake Xeon Details: LGA4189 and 8-Channel Memory

- Intel's Xeon Cascade Lake vs. NVIDIA Turing: An Analysis in AI

- Intel Architecture Manual Updates: bfloat16 for Cooper Lake Xeon Scalable Only?

- Some Cascade Lake Xeon Scalable Processor Specifications Exposed in SI Documents

- Cisco Documents Shed Light on Cascade Lake, Cooper Lake, and Ice Lake for Servers

- Intel's Architecture Day 2018: The Future of Core, Intel GPUs, 10nm, and Hybrid x86

42 Comments

View All Comments

lefty2 - Tuesday, August 6, 2019 - link

Paper launched in summer 2019. Actual launch in Q4 2019HStewart - Tuesday, August 6, 2019 - link

We are still in middle of Q3, so it still possible that it be launch Q3, It sounds like Intel is making refinements - because original Dell mailing has new XPS 13 2in1 coming in July.HStewart - Tuesday, August 6, 2019 - link

Not sure it matters what + it means - but Ice Lake's 10nm is new revised version of failed 10nm Cannon Lake. So I not sure it consider 10nm or 10nm+, but instead 10nm take 2/ as for the timing it actually not end of 2020 but in 2020 framed. Technically we are not that far away now, we are in 2nd half of 2019 and Ice Lake mobiles should be out in this quarter and my guess higher core versions and clock speed version in 1st half of 2020 follow by Xeon's in 2nd half.But the key is Intel is over or shortly over with Skylake version and all the backlash with Spectre/Meltodon stuff which I have yes send some real virus using it.

Santoval - Thursday, August 8, 2019 - link

No, it's not. That Intel slide you saw is "temporally arbitrary", since Intel would prefer to not highlight that their original 10nm node was basically a mere beta node. In December 2017 they "released" (in very low volume) a barely functional Cannon Lake dual core i3 CPU fabbed on that node, with a disabled iGPU, horrible clocks and thermals, and targeted exclusively at Chinese schools.Why? In order to report a nominal 2017 release of CPUs fabbed on their 10nm node to their shareholders, hoping at least the gullible ones will buy it. There was no other reason for that "release", since Cannon Lake / 1st gen 10nm node's yields were truly *atrocious* (apparently Ice Lake / 2nd gen 10nm+'s yields are "barely tolerable", but Intel can no longer afford to delay the launch of Ice Lake).

Ice Lake is to be released on their 2nd gen 10nm+ node, while Tiger Lake will be released on their 3rd gen 10nm++ node. They avoid explicitly calling Ice Lake's node "second gen" though, because they don't want to remind people of Canned Lake. Due to Intel's still poor yields Ice Lake U/Y will be co-released with Comet Lake U/Y, and based on leaks there will be no mid power (-H) and full power (-S) version of Ice Lake. It's still unknown if Intel will manage to release Ice Lake Xeon with acceptable yields (and thus acceptable profit). Cooper Lake's job is to buy time for Intel to fix their continuing 10nm+ issues.

Teckk - Tuesday, August 6, 2019 - link

Gotta give credit to all you folks publishing articles - how do you not get drowned in the Lakes already !?Please make it easy for everyone Intel !

OTOH, will be good to see this go up against Rome HCC server part. TDP?

liquidaim - Tuesday, August 6, 2019 - link

having trouble getting exclusive sneak peeks from Intel's competitor? sad.At least take the time to list the TDP of the parts advertised on this page rather than selectively showing information about parts which *may* get released 14 months from now.

AshlayW - Tuesday, August 6, 2019 - link

Don't see how these will be competitive with 64C ROME likely offering more performance at lower power use, or the same performance at half the wattage. The only advantage these parts will have, is in heavy AVX512 workloads where they will likely beat the ROME CPUs due to higher through-put per core (wider SIMD pipes). Other than that, essentially DOA.quorm - Tuesday, August 6, 2019 - link

Is there any workload that runs better on AVX512 than a gpu?dullard - Tuesday, August 6, 2019 - link

Yes. Financial simulations for example (see the MC Libor Swaption Portfolio): https://www.xcelerit.com/computing-benchmarks/insi...Of course the GPU wins by a big margin in other software. You need to know what you are going to do and use the appropriate hardware for it. Computer simulations tend to run poorly on GPUs, but can benefit greatly by AVX 512: https://www.simutechgroup.com/images/easyblog_arti...

HollyDOL - Wednesday, August 7, 2019 - link

Huh, Adapter with this pin count sounds a bit scary... and expensive