Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST. Posted in CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

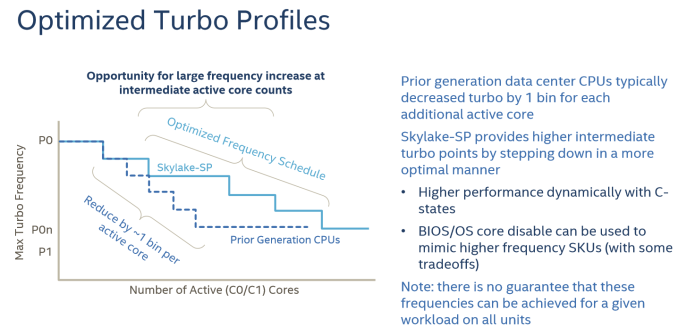

Intel's Optimized Turbo Profiles

Also new to Skylake-SP, Intel has further enhanced turbo boosting.

There are also some security and virtualization enhancements (MBE, PPK, MPX), but these are beyond the scope of this article, as we don't test them.

Summing It All Up: How Skylake-SP and Zen Compare

The table below shows you the differences in a nutshell.

| | AMD EPYC 7000 | Intel Skylake-SP | Intel Broadwell-EP |
|---|---|---|---|
| Package & Dies | Four dies in one MCM | Monolithic | Monolithic |
| Die size | 4x 195 mm² | 677 mm² | 456 mm² |
| On-Chip Topology | Infinity Fabric (1-Hop Max) | Mesh | Dual Ring |
| Socket configuration | 1-2S | 1-8S ("Platinum") | 1-2S |
| Interconnect (max.) | 4x16 (64) PCIe lanes | 3x UPI (20 lanes) | 2x QPI (20 lanes) |
| Interconnect bandwidth (max.) (*) | 4x 37.9 GB/s | 3x 41.6 GB/s | 2x 38.4 GB/s |
| TDP | 120-180W | 70-205W | 55-145W |
| Cores | 8-32 | 4-28 | 4-22 |
| LLC (max.) | 64 MB (8x 8 MB) | 38.5 MB | 55 MB |
| Max. Memory | 2 TB | 1.5 TB | 1.5 TB |
| Memory subsystem | 8 channels | 6 channels | 4 channels |
| Fastest supported DRAM | DDR4-2666 | DDR4-2666 | DDR4-2400 |
| PCIe per CPU in a 2P | 64 PCIe (available) | 48 PCIe 3.0 | 40 PCIe 3.0 |

(*) total bandwidth (bidirectional)
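As a rough cross-check of the memory rows, theoretical peak DRAM bandwidth per socket is the number of channels times the transfer rate times 8 bytes, since each DDR4 channel is 64 bits wide. The Python sketch below assumes every channel is populated and runs at the fastest DDR4 speed listed for that platform; the `PLATFORMS` dictionary and `peak_bandwidth_gbs` helper are illustrative names, not anything from the article.

```python
# Theoretical peak DRAM bandwidth per socket, assuming all channels are
# populated and run at the fastest DDR4 speed listed in the comparison table.
BYTES_PER_TRANSFER = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer

PLATFORMS = {
    # name: (memory channels per socket, transfer rate in MT/s)
    "AMD EPYC 7000": (8, 2666),
    "Intel Skylake-SP": (6, 2666),
    "Intel Broadwell-EP": (4, 2400),
}


def peak_bandwidth_gbs(channels: int, mts: int) -> float:
    """Return theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    return channels * mts * 1e6 * BYTES_PER_TRANSFER / 1e9


if __name__ == "__main__":
    for name, (channels, mts) in PLATFORMS.items():
        print(f"{name}: {peak_bandwidth_gbs(channels, mts):.1f} GB/s")
```

With these inputs the sketch gives about 170.6 GB/s per socket for EPYC, 128.0 GB/s for Skylake-SP and 76.8 GB/s for Broadwell-EP. Sustained bandwidth measured on real systems will be lower than these theoretical peaks.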

At a high level, I would argue that Intel has the most advanced multi-core topology, as it can integrate up to 28 cores in a mesh. The mesh topology will allow Intel to add more cores in future generations while scaling consistently in most applications. The last level cache has decent latency and can accommodate applications with a massive memory footprint. The latency difference between accessing a local L3 cache slice and one further away is negligible on average, which allows the L3 cache to serve as central storage for fast data synchronization between the L2 caches. However, the highest-performing Xeons are huge dies (677 mm² for the 28-core part), and thus expensive to manufacture.
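To make the scaling argument concrete, the sketch below compares the average hop distance between two stops on a single bidirectional ring with that on a 2D mesh holding the same number of cores. It is a simplified model, assuming uniform random traffic, one core per stop, and hypothetical grid shapes (for example 4 x 7 for 28 cores); the real Skylake-SP die also places memory controllers and I/O on mesh stops, and Broadwell-EP uses two connected rings rather than one.

```python
from itertools import product


def ring_avg_hops(n: int) -> float:
    """Average shortest-path hops between two stops on a bidirectional ring
    of n stops (pairs chosen uniformly, including a stop with itself)."""
    # By symmetry, the distances from stop 0 are representative of every stop.
    total = sum(min(d, n - d) for d in range(n))
    return total / n


def mesh_avg_hops(rows: int, cols: int) -> float:
    """Average Manhattan (X-then-Y routing) hops between two stops on a
    rows x cols mesh (pairs chosen uniformly, including a stop with itself)."""
    stops = list(product(range(rows), range(cols)))
    total = sum(abs(r1 - r2) + abs(c1 - c2)
                for (r1, c1), (r2, c2) in product(stops, repeat=2))
    return total / len(stops) ** 2


if __name__ == "__main__":
    # Grid shapes are hypothetical; 48 cores is an illustrative future size.
    for cores, (rows, cols) in [(12, (3, 4)), (28, (4, 7)), (48, (6, 8))]:
        print(f"{cores} cores: ring {ring_avg_hops(cores):.2f} hops, "
              f"{rows}x{cols} mesh {mesh_avg_hops(rows, cols):.2f} hops")
```

Under this model the ring's average distance grows linearly with the core count (about n/4 hops), while a near-square mesh grows roughly with the square root of the core count, which is the intuition behind the claim that a mesh scales more gracefully.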

AMD's MCM approach is much cheaper to manufacture. Peak memory bandwidth and capacity are quite a bit higher, with four dies and two memory channels per die (eight channels per socket). However, there is no central last level cache that can perform low-latency data coordination between the L2 caches of different cores (except inside one CCX). The eight 8 MB L3 caches, each shared by the four cores of one CCX, act as relatively low-latency spill-over caches for the 32 L2 caches on one chip.
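The spill-over (victim) behaviour described above can be illustrated with a toy model: the L3 receives lines only when they are evicted from L2, and a hit in L3 moves the line back up into L2. This is a minimal sketch assuming fully associative LRU caches sized in a handful of lines; the class names `LRU` and `VictimHierarchy` are purely illustrative. Real Zen caches are set-associative, their exact fill and replacement policies are not modelled, and the sketch covers a single CCX, so it says nothing about the cross-die latency discussed here.

```python
from collections import OrderedDict


class LRU:
    """Fully associative LRU store of cache-line addresses (a simplification)."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.lines = OrderedDict()

    def __contains__(self, addr: int) -> bool:
        return addr in self.lines

    def touch(self, addr: int) -> None:
        self.lines.move_to_end(addr)  # mark as most recently used

    def insert(self, addr: int):
        """Insert addr as most recently used; return the evicted line, or None."""
        self.lines[addr] = True
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            victim, _ = self.lines.popitem(last=False)  # least recently used
            return victim
        return None

    def remove(self, addr: int) -> None:
        del self.lines[addr]


class VictimHierarchy:
    """L2 backed by a spill-over L3 that is filled only by L2 evictions."""

    def __init__(self, l2_lines: int, l3_lines: int):
        self.l2 = LRU(l2_lines)
        self.l3 = LRU(l3_lines)

    def access(self, addr: int) -> str:
        if addr in self.l2:
            self.l2.touch(addr)
            return "L2 hit"
        if addr in self.l3:
            self.l3.remove(addr)  # exclusive: the line moves back up into L2
            result = "L3 hit"
        else:
            result = "miss"  # filled into L2 from memory, bypassing L3
        victim = self.l2.insert(addr)
        if victim is not None:
            self.l3.insert(victim)  # L2 evictions spill over into L3
        return result


if __name__ == "__main__":
    h = VictimHierarchy(l2_lines=2, l3_lines=4)
    print([h.access(a) for a in (1, 2, 3, 1, 2, 1)])
```

In the usage example, line 1 is pushed out of the two-line L2 by line 3, lands in L3, and is found there on the next access instead of going back to memory.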

219 Comments


alpha754293 - Tuesday, July 11, 2017

Pity that OpenFOAM failed to run on Ubuntu 16.04.2 LTS. I would have been very interested in those results.

farmergann - Tuesday, July 11, 2017

Are you trying to hide the fact that AMD's performance per watt absolutely dominates Intel's, or have you simply overlooked one of the most important aspects of server processors, if not the single most important one?

Ryan Smith - Tuesday, July 11, 2017

Neither. We just had very little time to look at power consumption. It's also the metric we're the least confident in right now, as we'd like to have a better understanding of the quirks of the platform (which again takes more time).

Carl Bicknell - Wednesday, July 12, 2017

Ryan / Ian,

Just to let you know, there are better chess benchmarks than the one you've chosen. Stockfish is an example of a newer program that makes better use of modern CPU architectures.

NixZero - Tuesday, July 11, 2017

"AMD's MCM approach is much cheaper to manufacture. Peak memory bandwidth and capacity is quite a bit higher with 4 dies and 2 memory channels per die. However, there is no central last level cache that can perform low latency data coordination between the L2-caches of the different cores (except inside one CCX). The eight 8 MB L3-caches acts like - relatively low latency - spill over caches for the 32 L2-caches on one chip."

Isn't Skylake-X's L3 a victim cache too? And divided into roughly 1.3 MB slices per core, not a monolithic one?

Ian Cutress - Tuesday, July 11, 2017

That's what a 'spill-over' cache is: it accepts evicted cache lines.

NixZero - Wednesday, July 12, 2017

So why is it presented as an advantage for Intel's cache, which is a spill-over cache too?

JonathanWoodruff - Wednesday, July 12, 2017

Since the Intel one is all on one die, a miss in one "slice" of cache can be filled from another slice without DRAM-like latencies. Since AMD has its last level caches spread across dies, going to another cache looks to be equivalent, latency-wise, to going to DRAM. It wouldn't necessarily have to be quite that bad, and I would expect some improvement here with Zen 2.

Martin_Schou - Tuesday, July 11, 2017

This has to be wrong:

CPU: Two EPYC 7601 (2.2 GHz, 32c, 8x8MB L3, 180W)

RAM: 512 GB (12x32 GB) Samsung DDR4-2666 @2400

12 x 32 GB is 384 GB, and 12 sticks don't fit nicely into 8 channels. In all likelihood that's supposed to be 16x32 GB, as we see with the E5-2690.
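The arithmetic in this comment is easy to check: capacity is DIMM count times DIMM size, and an evenly populated two-socket EPYC system needs a DIMM count that is a multiple of its 16 channels (two sockets with eight channels each). A minimal Python sketch, assuming "evenly populated" simply means every channel holds the same number of DIMMs; `dimm_config` is a hypothetical helper name.

```python
def dimm_config(dimms: int, gb_per_dimm: int, sockets: int, channels_per_socket: int):
    """Return (total capacity in GB, True if every channel gets the same DIMM count)."""
    total_channels = sockets * channels_per_socket
    evenly_populated = dimms > 0 and dimms % total_channels == 0
    return dimms * gb_per_dimm, evenly_populated


if __name__ == "__main__":
    for dimms in (12, 16):
        capacity, balanced = dimm_config(dimms, 32, sockets=2, channels_per_socket=8)
        print(f"{dimms}x32 GB: {capacity} GB, evenly populated: {balanced}")
```

Twelve 32 GB DIMMs give 384 GB and leave some channels empty, while sixteen give the stated 512 GB with one DIMM per channel.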

Dr.Neale - Tuesday, July 11, 2017

I find myself puzzled by the curious omission in this article of a key aspect of Server architecture: Data Security. AMD has a LOT; Intel, not so much.

I would think this aspect of Server "Performance" would be a major consideration in choosing which company's Architecture to deploy in a Secure Server scenario. Especially in light of Recent Revelations fuelling Hacking Headlines in the news, and Dominating Discussions on various social media websites.

How much is Data Security worth?

A topic of EPYC consequence!