Launching the #CPUOverload Project: Testing Every x86 Desktop Processor since 2010

by Dr. Ian Cutress on July 20, 2020 1:30 PM EST

CPU Tests: Science

In this version of our test suite, all the science-focused tests that aren’t ‘simulation’ work are now in our science section. This includes Brownian Motion, calculating digits of Pi, molecular dynamics, and for the first time, we’re trialing an artificial intelligence benchmark, both inference and training, that works under Windows using Python and TensorFlow. Where possible these benchmarks have been optimized with the latest in vector instructions, except for the AI test – we were told that while it uses Intel’s Math Kernel Library, that library is optimized more for Linux than for Windows, and so it gives an interesting result when unoptimized software is used.

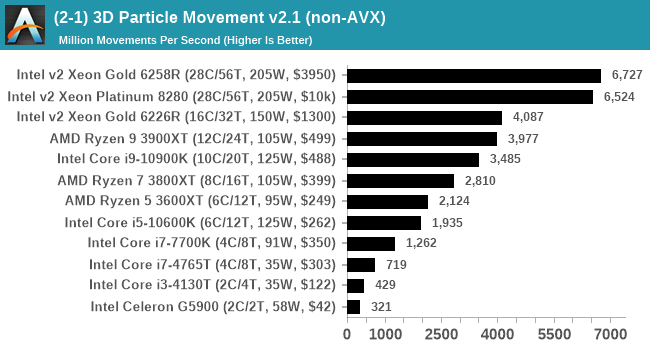

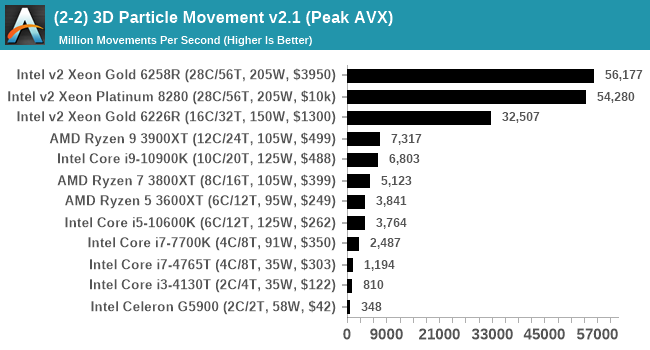

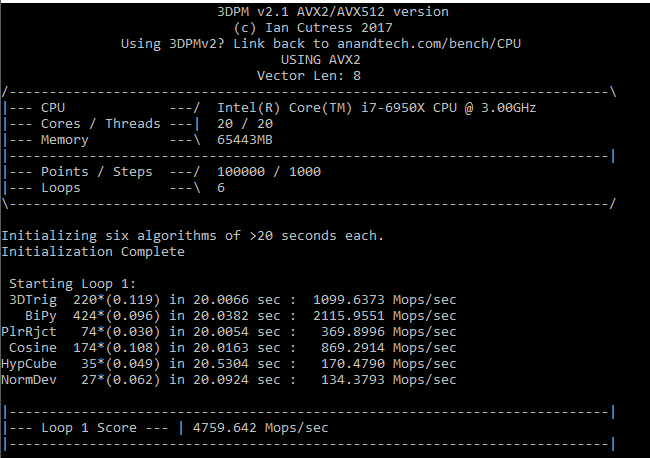

3D Particle Movement v2.1: Non-AVX and AVX2/AVX512

This is the latest version of the benchmark designed to simulate semi-optimized scientific algorithms taken directly from my doctoral thesis. This involves randomly moving particles in a 3D space using a set of algorithms that define random movement. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and reducing the number of double->float->double recasts the compiler was adding in.

The initial version of v2.1 is a custom C++ binary of my own code, with flags in place to allow for multiple loops of the code with a custom benchmark length. By default this version runs six times and outputs the average score to the console, which we capture with a redirection operator that writes to file.

For v2.1, we also have a fully optimized AVX2/AVX512 version, which uses intrinsics to get the best performance out of the software. This was done by a former Intel AVX-512 engineer who now works elsewhere. According to Jim Keller, there are only a couple dozen or so people who understand how to extract the best performance out of a CPU, and this guy is one of them. To keep things honest, AMD also has a copy of the code, but has not proposed any changes.

The final result is a table that looks like this:

The 3DPM test is set to output millions of movements per second, rather than time to complete a fixed number of movements. This way the data scales linearly with performance and is easier to read as a result.
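To make the metric concrete, here is a minimal Python sketch of a random-movement loop that reports millions of movements per second, averaged over six loops. It is not the 3DPM code: the single movement algorithm (a unit step in a uniformly random direction), the function names, and the particle and step counts are all assumptions chosen for illustration.

```python
import math
import random
import time


def move_particles(positions, rng):
    """Move every particle one unit step in a uniformly random 3D direction."""
    for p in positions:
        # Sample a direction uniformly on the unit sphere:
        # z uniform in [-1, 1], azimuth uniform in [0, 2*pi).
        z = rng.uniform(-1.0, 1.0)
        phi = rng.uniform(0.0, 2.0 * math.pi)
        r = math.sqrt(1.0 - z * z)
        p[0] += r * math.cos(phi)
        p[1] += r * math.sin(phi)
        p[2] += z


def run_once(n_particles, n_steps, seed):
    """Return the rate of one run in millions of movements per second."""
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(n_particles)]
    start = time.perf_counter()
    for _ in range(n_steps):
        move_particles(positions, rng)
    elapsed = time.perf_counter() - start
    return (n_particles * n_steps) / elapsed / 1e6


def benchmark(n_particles=1000, n_steps=100, loops=6):
    """Average the score over several loops (six by default, as in the text)."""
    scores = [run_once(n_particles, n_steps, seed) for seed in range(loops)]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    print(f"{benchmark():.3f} million movements per second")
```

Because the score is a rate rather than a completion time, a chip that does twice the work in the same time scores twice as high, which is what keeps the graphs linear.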

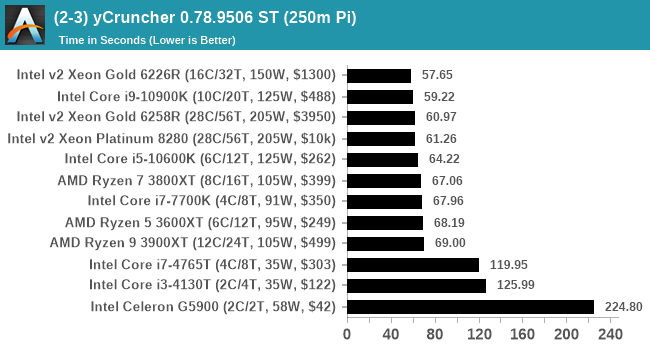

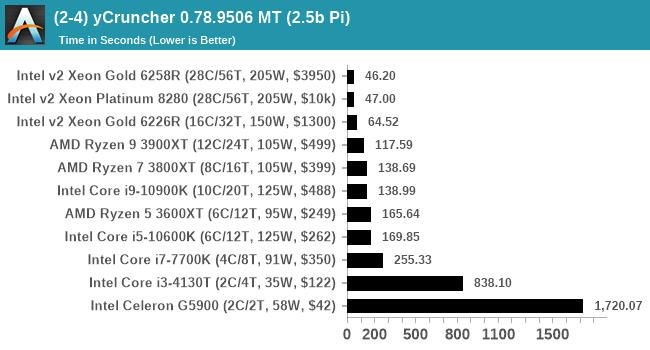

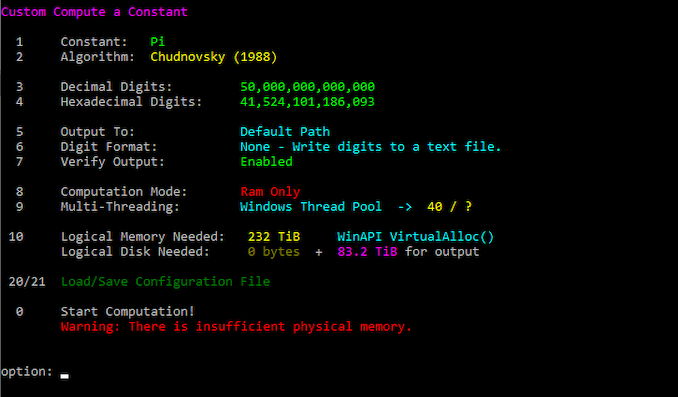

y-Cruncher 0.78.9506: www.numberworld.org/y-cruncher

If you ask anyone what sort of computer holds the world record for calculating the most digits of pi, I can guarantee that a good portion of those answers will point to some colossal supercomputer built into a mountain by a super-villain. Fortunately nothing could be further from the truth – the computer with the record is a quad-socket Ivy Bridge server with 300 TB of storage. The software that was run to get that was y-cruncher.

Built by Alex Yee over the better part of a decade and then some, y-Cruncher is the software of choice for calculating billions and trillions of digits of the most popular mathematical constants. The software has held the world record for Pi since August 2010, and has broken the record a total of 7 times since. It also holds records for e, the Golden Ratio, and others. According to Alex, the program runs to around 500,000 lines of code, and he has multiple binaries each optimized for different families of processors, such as Zen, Ice Lake, Skylake, all the way back to Nehalem, using the latest SSE/AVX2/AVX512 instructions where they fit in, and then further optimized for how each core is built.

For our purposes, we’re calculating Pi, as it is more compute bound than memory bound. In single thread mode we calculate 250 million digits, while in multithreaded mode we go for 2.5 billion digits. That 2.5 billion digit value requires ~12 GB of DRAM, so for systems that do not have that much, we also have a separate table for slower CPUs and 250 million digits.
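For a sense of what computing digits of Pi involves, the sketch below sums the Chudnovsky series using Python's arbitrary-precision integers. This is only an illustration: y-cruncher's own implementation is far more elaborate and heavily optimized, and the function name, the guard-digit count, and the straightforward term-by-term summation here are my own assumptions.

```python
from math import isqrt  # requires Python 3.8 or newer

GUARD_DIGITS = 10  # extra digits carried to absorb rounding in the sum


def chudnovsky_pi(digits):
    """Return pi truncated to `digits` decimal places, as a string."""
    # Fixed-point arithmetic: every value is scaled by `one`.
    one = 10 ** (digits + GUARD_DIGITS)
    c3_over_24 = 640320 ** 3 // 24
    k = 1
    a_k = one
    a_sum = one
    b_sum = 0
    while a_k != 0:
        # Ratio between consecutive terms of the series.
        a_k *= -(6 * k - 5) * (2 * k - 1) * (6 * k - 1)
        a_k //= k * k * k * c3_over_24
        a_sum += a_k
        b_sum += k * a_k
        k += 1
    total = 13591409 * a_sum + 545140134 * b_sum
    # pi = 426880 * sqrt(10005) / series_sum, all in fixed point.
    pi_scaled = (426880 * isqrt(10005 * one * one) * one) // total
    text = str(pi_scaled // 10 ** GUARD_DIGITS)
    return text[0] + "." + text[1:]


if __name__ == "__main__":
    print(chudnovsky_pi(50))
```

Each term of the series adds roughly 14 digits, so the loop itself is short. At hundreds of millions of digits the cost is dominated by the huge multiplications and the square root, which is where vectorized arithmetic and memory bandwidth come into play.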

y-Cruncher is also affected by memory bandwidth, even in ST mode, which is why we're seeing the Xeons score very highly despite the lower single thread frequency.

Personally I have held a few of the records that y-Cruncher keeps track of, and my latest attempt at a record was to compute 600 billion digits of the Euler-Mascheroni constant, using a Xeon 8280 and 768 GB of DRAM. It took over 100 days (!).

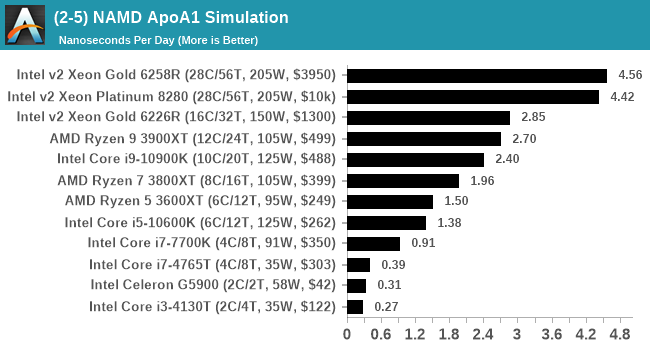

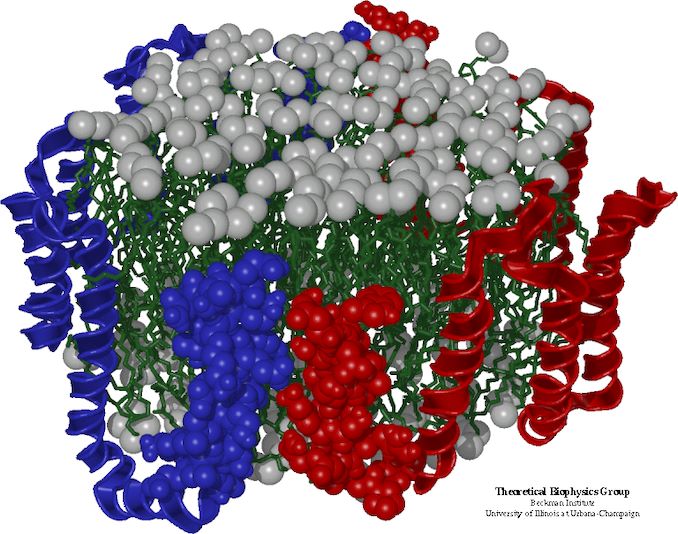

NAMD 2.13 (ApoA1): Molecular Dynamics

One of the popular science fields is modelling the dynamics of proteins. By looking at how the energy of active sites within a large protein structure changes over time, the scientists behind the research can calculate required activation energies for potential interactions. This becomes very important in drug discovery. Molecular dynamics also plays a large role in protein folding, and in understanding what happens when proteins misfold, and what can be done to prevent it. Two of the most popular molecular dynamics packages in use today are NAMD and GROMACS.

NAMD, or Nanoscale Molecular Dynamics, has already been used in extensive Coronavirus research on the Frontera supercomputer. Typical simulations using the package are measured in how many nanoseconds per day can be calculated with the given hardware, and the ApoA1 protein (92,224 atoms) has been the standard model for molecular dynamics simulation.

Luckily the compute can home in on a typical ‘nanoseconds-per-day’ rate after only 60 seconds of simulation. However, we stretch that out to 10 minutes to take a more sustained value, as by that time most turbo limits should be surpassed. The simulation itself works with 2 femtosecond timesteps.
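The nanoseconds-per-day figure follows directly from the timestep and the wall-clock time each step takes. The short Python sketch below shows the arithmetic; the function name and the 0.05 seconds-per-step figure are hypothetical and chosen only to illustrate the conversion.

```python
SECONDS_PER_DAY = 86_400
FS_PER_NS = 1_000_000  # femtoseconds in one nanosecond


def ns_per_day(timestep_fs, seconds_per_step):
    """Convert wall-clock time per MD step into simulated nanoseconds per day."""
    steps_per_day = SECONDS_PER_DAY / seconds_per_step
    return steps_per_day * timestep_fs / FS_PER_NS


if __name__ == "__main__":
    # Hypothetical machine: 2 fs timestep, 0.05 s of wall time per step.
    print(f"{ns_per_day(2.0, 0.05):.3f} ns/day")
```

At that hypothetical 0.05 seconds per step, a 2 femtosecond timestep works out to 3.456 ns/day, which shows why even fast hardware needs a long time to simulate biologically meaningful timescales.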

How NAMD is going to scale in our testing is going to be interesting, as the software has been developed to go across large supercomputers while taking advantage of fast communications and MPI.

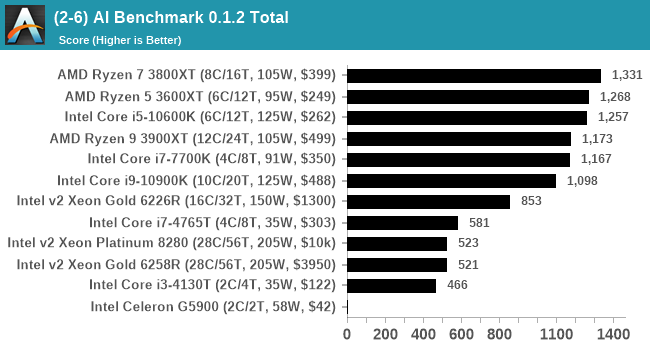

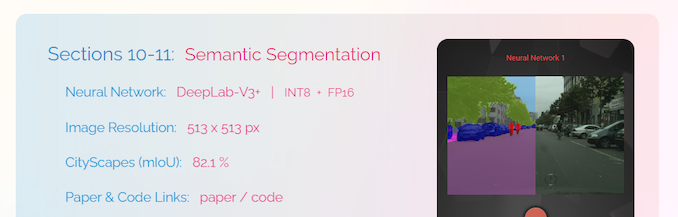

AI Benchmark 0.1.2 using TensorFlow

Finding an appropriate artificial intelligence benchmark for Windows has been a holy grail of mine for quite a while. The problem is that AI is such a fast moving, fast paced world that whatever I compute this quarter will no longer be relevant in the next, and one of the key metrics in this benchmarking suite is being able to keep data over a long period of time. We’ve had AI benchmarks on smartphones for a while, given that smartphones are a better target for AI workloads, but it also makes some sense that everything on PC is geared towards Linux as well.

Thankfully however, the good folks over at ETH Zurich in Switzerland have converted their smartphone AI benchmark into something that’s useable in Windows. It uses TensorFlow, and for our benchmark purposes we’ve locked our testing down to TensorFlow 2.10, AI Benchmark 0.1.2, while using Python 3.7.6 – this was the only combination of versions we could get to work, because Python 3.8 has some quirks.

The benchmark runs through 19 different networks including MobileNet-V2, ResNet-V2, VGG-19 Super-Res, NVIDIA-SPADE, PSPNet, DeepLab, Pixel-RNN, and GNMT-Translation. All the tests probe both the inference and the training at various input sizes and batch sizes, except the translation that only does inference. It measures the time taken to do a given amount of work, and spits out a value at the end.

There is one big caveat for all of this, however. Speaking with the folks over at ETH, they use Intel’s Math Kernel Library (MKL) for Windows, and they’re seeing some incredible drawbacks. I was told that MKL for Windows doesn’t play well with multiple threads, and as a result any Windows results are going to perform a lot worse than Linux results. On top of that, after a given number of threads (~16), MKL kind of gives up and performance drops off quite substantially.

So why test it at all? Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all. Secondly, if MKL on Windows is the problem, then by publicizing the test, it might just put a boot somewhere for MKL to get fixed. To that end, we’ll stay with the benchmark as long as it remains feasible.

As you can see, we’re already seeing it perform really badly with the big chips. Somewhere around the Ryzen 7 is probably where the peak is. Our Xeon chips didn't really work at all.

110 Comments

Smell This - Monday, July 20, 2020 - link

;- )

Oxford Guy - Monday, July 20, 2020 - link

"If there’s a CPU, old or new, you want to see tested, then please drop a comment below."

• i7-3820. This one is especially interesting because it had roughly the same number of transistors as Piledriver on roughly the same node (Intel 32nm vs. GF 32 nm).

• 5775C

• 5675C (which outperformed and matched the 5775C in some games due to thermal throttling)

• 5775C with TDP bypassed or increased if this is possible, to avoid the aforementioned throttling

• I would really really like you to add Deserts of Kharak to your games test suite. It is the only game I know of that showed Piledriver beating Intel's chips. That unusual performance suggests that it was possible to get more performance out of Piledriver if developers targeted that CPU for optimization and/or the game's engine somehow simply suited it particularly.

• 8320E or 8370E at 4.7 GHz (non-turbo) with 2133 CAS 9-11-10 RAM, the most optimal Piledriver setup. The 9590 was not the most performant of the FX line, likely because of the turbo. A straight overclock coupled with tuned RAM (not 1600 CAS 10 nonsense) makes a difference. 4.7 GHz is a realistic speed achievable by a large AIO or small loop. If you want air cooling only then drop to 4.5 Ghz but keep the fast RAM. The point of testing this is to see what people were able to get in the real world from the AMD alternative for all the years they had to wait for Zen. Since we were stuck with Piledriver as the most performant Intel alternative for so so many years it's worth including for historical context. The "E" models don't have to be used but their lower leakage makes higher clocks less stressful on cooling than a 9000 series. 4.7 GHz was obtainable on a cheap motherboard like the Gigabyte UD3P, with strong airflow to the VRM sink.

• VIA's highest-performance model. If it won't work with Windows 10 then run the tests on it with 8.1. The thing is, though... VIA released an update fairly recently that should make it compatible with Windows 10. I saw Youtube footage of it gaming, in fact, with a discrete card. It really would be a refreshing thing to see VIA included, even though it's such a bit player.

• Lynnfield at 3 GHz.

• i7-9700K, of course.

Oxford Guy - Monday, July 20, 2020 - link

Regarding Deserts of Kharak... It may be that it took advantage of the extra cores. That would make it noteworthy also as an early example of a game that scaled to 8 threads.

Oxford Guy - Monday, July 20, 2020 - link

Also, the Chinese X86 CPU, the one based on Zen 1, would be very nice to have included.

Oxford Guy - Monday, July 20, 2020 - link

VIA CPUs tested with games as recently as 2019 (there was another video of the quad core but I didn't find it today with a quick search):

https://www.youtube.com/watch?v=JPvKwqSMo-k

https://www.youtube.com/watch?v=Da0BkEW459E

The Zhaoxin KaiXian KX-U6880A would be nice to see included, not just the Chinese Zen 1 derivative.

Oxford Guy - Monday, July 20, 2020 - link

"due to thermal throttling"

TDP throttling, to be more accurate. I suppose it could throttle due to current demand rather than temp.

axer1234 - Monday, July 20, 2020 - link

honestly i would love to know how different generation processor perform today especially higher core count. like prescott series pentium 4 athlon II phenomX6 core2 duo core2quad nehlam sandy bridge bulldozer etc with todays generation work loads and offering

in many scenario like word excel ppt photoshop it all works very well still in many offices

its just the new generation of application slowing it down for almost the same work etc

herefortheflops - Monday, July 20, 2020 - link

@Dr. Cutress,

As someone that has been dealing with similar or greater product testing challenges and configuration complexity for the better part of a decade or so, I would like to commend you for your ambitious goals and efforts so far. Additionally, I could be of high value to your effort if you are willing to discuss. I have reviewed in-depth the bench database (as well as competing websites) and I have come to the conclusion the Anandtech bench data is of very limited usefulness at present--and would require some significant changes to the data being collected/reported and the way things have been done to this point. I do understand where the industry is going, the questions the readers are going to be asking of the data, and the major comparisons that will be attempted with the data. Unfortunately, much of your effort may easily become irrelevant unless you proceed with some extreme caution to provide data with more utility. I also know methods to accomplish the desired result while reducing the size and cost of the task at hand. Reply by e-mail if you are interested in talking.

Best,

-A potential contributor to your effort.

Bensam123 - Tuesday, July 21, 2020 - link

Despite how impressive this is, one thing that hasn't been tackled is still multiplayer performance and it vastly changes recommendations for CPUs (doesn't affect GPUs as much).

It goes from recommending a 6 core chip hands down to trying to make a case for 4 core chips still in this day and age. I own a 3900x and 2800 and I can tell you hands down Modern Warfare will gobble 70% of that 12 core chip, sometimes a bit more, that's equivalent to maxing out a 8 core of the same series. That vastly changes recommendations and data points. It's not just Modern Warfare. Overwatch, Black Ops 3 (same engine as MW), and recently Hyper Scape will make use of those extra cores. I have a widget to monitor CPU utilization in the background and I can check Task Manager. If I had a better video card I'm positive it would've sucked down even more of those 12 cores (my GPU is running at 100% load according to MSI AB).

This is a huge deal and while I understand, I get it, it's hard to reliably reproduce the same results in a multiplayer environment because it changes so much and generally seen as taboo from a hardware benchmarking standpoint, it is vastly different than singleplayer workloads to the point at which it requires completely different recommendations. Given how many people are making expensive hardware choices specifically because they play multiplayer games, I would even say most tech reviews in this day and age are irrelevant for CPU recommendations outside of the casual single player gamer. GPU recommendations are still very much on par, CPU is not remotely.

I talk about this frequently on my stream and why I still recommended the 1600 AF even when it was sitting at $105-125, it's a steal if you play multiplayer games, while most people that either read benchmarking websites or run benchmarks themselves will start making a case for a 4c Intel. 6 core is a must at the very least in this day and age.

Anandtech it's time to tread new ground and go into the uncharted area. Singleplayer results and multiplayer results are too different, you can't keep spinning the wheel and expect things to remain the same. You can verify this yourself just by running task manager in the background while playing one of the games I mentioned at the lowest settings regardless of being able to repeat those results exactly you'll see it's definitely a multi-core landscape for newer multiplayer games.

Not even touched on in the article.

Bensam123 - Tuesday, July 21, 2020 - link

70%, I have SMT off for clarification.