Launching the #CPUOverload Project: Testing Every x86 Desktop Processor since 2010

by Dr. Ian Cutress on July 20, 2020 1:30 PM EST

CPU Tests: Simulation

Simulation and Science have a lot of overlap in the benchmarking world; for this suite we separate them into two segments, mostly based on the utility of the resulting data. The benchmarks that fall under Science have a distinct use for the data they output, whereas the benchmarks in our Simulation section act more like synthetics but at some level are still trying to simulate a given environment.

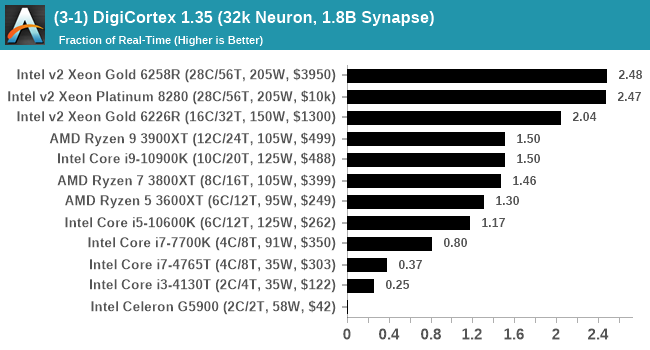

DigiCortex v1.35: link

DigiCortex is a pet project for the visualization of neuron and synapse activity in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron/1.8B synapse simulation, similar to a small slug.

Results are given as a fraction of real-time simulation speed, so any value above one means the system is suitable for real-time work. The benchmark offers a 'no firing synapse' mode, which in essence measures DRAM and bus speed; however, we use the firing mode, which adds CPU work with every firing.

I reached out to the author of the software, who has added in several features to make the software conducive to benchmarking. The software comes with a series of batch files for testing, and we run the ‘small 64-bit nogui’ version with a modified command line to allow for ‘benchmark warmup’ and then perform the actual testing.

The software originally shipped with a benchmark that recorded the first few cycles and output a result. So while on fast multi-threaded processors the benchmark lasted less than a few seconds, slow dual-core processors could be running for almost an hour. There is also the issue of DigiCortex starting with a base neuron/synapse map in ‘off mode’, giving a high result in the first few cycles as none of the nodes are currently active. We found that the performance settles down into a steady state after a while (when the model is actively in use), so we asked the author to allow for a ‘warm-up’ phase and for the benchmark to be the average over a second sample time.

For our test, we give the benchmark 20000 cycles to warm up and then take the data over the next 10000 cycles for the test; on a modern processor this takes 30 seconds and 150 seconds respectively. This is then repeated a minimum of 10 times, with the first three results rejected.
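The repeat-and-reject step can be sketched in a few lines of Python. This is only an illustration: the function name `steady_state_score` is hypothetical, and combining the retained runs with a mean is an assumption, as the text does not say how the kept results are aggregated.

```python
from statistics import mean


def steady_state_score(results, discard=3, min_runs=10):
    """Reject the first `discard` runs and combine the rest.

    `results` holds one real-time factor per run, in run order.
    Averaging the retained runs is an assumption for illustration.
    """
    if len(results) < min_runs:
        raise ValueError(f"need at least {min_runs} runs, got {len(results)}")
    kept = results[discard:]  # the first three runs are rejected
    return mean(kept)
```

With ten runs, the score is built from the final seven only, so any start-up effects in the early runs do not skew the reported value.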

We also have an additional flag on the software to make the benchmark exit when complete (which is not default behavior). The final results are output into a predefined file, which can be parsed for the result. The number of interest for us is the ability to simulate this system in real-time, and results are given as a factor of this: hardware that can simulate double real-time is given the value of 2.0, for example.

The final result is a table that looks like this:

The variety of results shows that DigiCortex loves cache and single thread frequency, is not too fond of victim caches, but still likes threads and DRAM bandwidth.

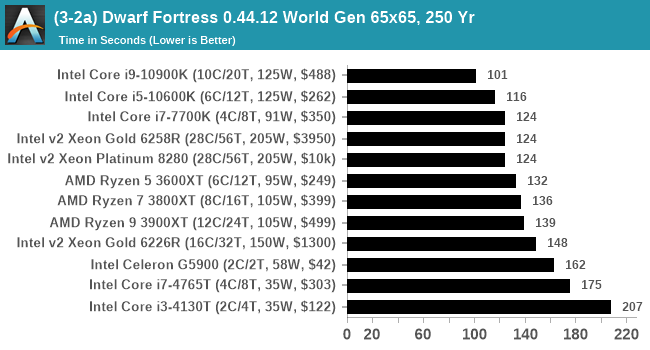

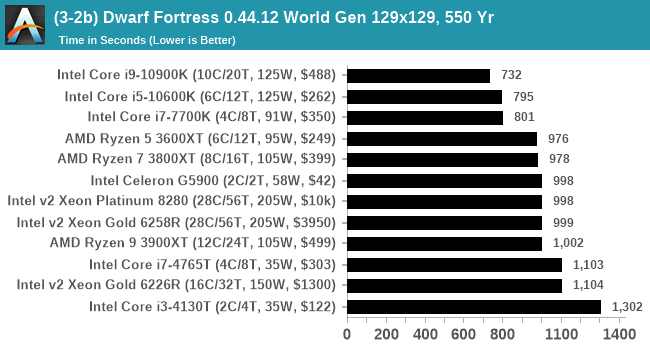

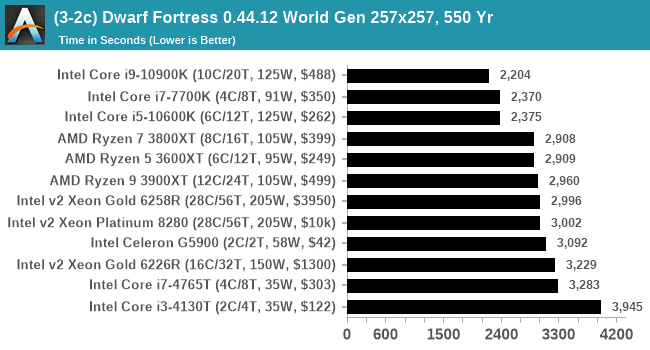

Dwarf Fortress 0.44.12: Link

Another long standing request for our benchmark suite has been Dwarf Fortress, a popular management/roguelike indie video game, first launched in 2006 and still being regularly updated today, aiming for a Steam launch sometime in the future.

Emulating the ASCII interfaces of old, this title is a rather complex beast, which can generate environments subject to millennia of rule, famous faces, peasants, and key historical figures and events. The further you get into the game, depending on the size of the world, the slower it becomes as it has to simulate more famous people, more world events, and the natural way that humanoid creatures take over an environment. Like some kind of virus.

For our test we’re using DFMark. DFMark is a benchmark built by vorsgren on the Bay12Forums that gives two different modes built on DFHack: world generation and embark. These tests can be configured, but range anywhere from 3 minutes to several hours. After analyzing the test, we ended up going for three different world generation sizes:

- Small, a 65x65 world with 250 years, 10 civilizations and 4 megabeasts

- Medium, a 127x127 world with 550 years, 10 civilizations and 4 megabeasts

- Large, a 257x257 world with 550 years, 40 civilizations and 10 megabeasts

I looked into the embark mode, but came to the conclusion that due to the way people played embark, getting something close to real-world data would require several hours’ worth of embark tests. This would be functionally prohibitive to the bench suite, and so I decided to focus on world generation.

DFMark outputs the time to run any given test, so this is what we use for the output. We loop the small test as many times as possible in 10 minutes, the medium test as many times as possible in 30 minutes, and the large test as many times as possible in an hour.
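A time-budgeted loop of this kind might look like the following Python sketch. The names `loop_for_budget` and `BUDGET_SECONDS` are hypothetical, `run_once` stands in for whatever launches a single DFMark world generation, and letting a run that starts inside the budget finish after it expires is an assumption.

```python
import time

# Budgets from the text: 10 minutes, 30 minutes, and one hour.
BUDGET_SECONDS = {"small": 600, "medium": 1800, "large": 3600}


def loop_for_budget(run_once, budget_seconds, clock=time.monotonic):
    """Call `run_once` repeatedly until the time budget is spent.

    Returns the list of per-run durations. A run that starts before
    the budget expires is allowed to finish (an assumption).
    """
    durations = []
    start = clock()
    while clock() - start < budget_seconds:
        t0 = clock()
        run_once()  # placeholder for one world-generation run
        durations.append(clock() - t0)
    return durations
```

The `clock` parameter exists only so the loop can be exercised with a fake timer instead of waiting out a real ten-minute budget.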

Interestingly, Intel's hardware likes Dwarf Fortress. It is primarily single threaded, and so high IPC and high frequency are what matter here.

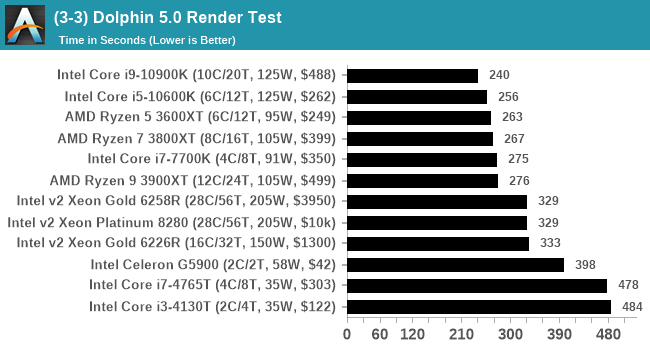

Dolphin v5.0 Emulation: Link

Many emulators are often bound by single thread CPU performance, and general reports tended to suggest that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that ray traces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy of the speed of Dolphin CPU emulation, which is an intensive single core task using most aspects of a CPU. Results are given in seconds, where the Wii itself scores 1051 seconds.

The Dolphin software has the ability to output a log, and we obtained a version of the benchmark from a Dolphin developer that outputs the display into that log file. The benchmark when finished will automatically try to close the Dolphin software (which is not normal behavior) and brings up a pop-up to confirm, which our benchmark script can detect and remove. The log file is fairly verbose, so the benchmark script iterates through it line-by-line looking for a regex match on the line with the final time to complete.
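The line-by-line regex scan could be sketched as below in Python. The log wording matched by `FINISH_RE` is entirely hypothetical, since the article does not show the actual Dolphin log format, and `find_completion_time` is an illustrative name rather than the script's real function.

```python
import re

# Hypothetical pattern: the real log wording is not given in the article.
FINISH_RE = re.compile(r"Benchmark finished in (\d+(?:\.\d+)?) seconds")


def find_completion_time(log_lines):
    """Scan a verbose log line by line and return the completion time.

    Returns the time in seconds from the last matching line,
    or None if no line matches.
    """
    result = None
    for line in log_lines:
        match = FINISH_RE.search(line)
        if match:
            result = float(match.group(1))
    return result
```

Returning `None` when nothing matches gives the calling script a simple way to notice that a run never reached the end of the benchmark.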

The final result is a table that looks like this:

Dolphin does still have one flaw: about one in every 10 runs it will hang when the benchmark is complete and can only be removed from memory via a taskkill command or equivalent. I have not found a solution for this yet, and due to this issue Dolphin is one of the final tests in the benchmark run. If the issue occurs and I notice, I can close Dolphin and re-run the test by manually opening the benchmark in Dolphin to run again, and allow the script to pick up the final dialog box when done.
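One possible way to handle such a hang is a watchdog with a timeout, sketched here in Python. This is not the author's method (the text says no solution is in place); `run_with_timeout` is a hypothetical helper, and the command line and timeout value would have to be chosen for the actual setup.

```python
import subprocess


def run_with_timeout(cmd, timeout_seconds):
    """Launch `cmd` and wait for it to exit.

    If the process is still running after `timeout_seconds`, it is
    killed (the rough equivalent of a forced taskkill on Windows).
    Returns True if the process exited on its own, False if killed.
    """
    proc = subprocess.Popen(cmd)
    try:
        proc.wait(timeout=timeout_seconds)
        return True
    except subprocess.TimeoutExpired:
        proc.kill()
        proc.wait()  # reap the killed process
        return False
```

A `False` return would tell the surrounding script that the run hung and may need to be repeated.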

110 Comments


PeachNCream - Tuesday, July 21, 2020 - link

You don't get what it means to perform a controlled test do you?

Aspernari - Wednesday, July 22, 2020 - link

It's important to note that the environment is not actually well-controlled.

https://twitter.com/IanCutress/status/128480609693...

We don't know temperature for the operating conditions for these tests, which matters more and more for boost behavior for CPUs and GPUs. He says 36c when he got into the office, we'll never know what the temperature peaked at, nor how often similar conditions were reached.

A standard platform is a good choice, but a controlled environment is also important. Unfortunately, the results aren't as reliable as they otherwise might have been.

PeterCollier - Wednesday, July 22, 2020 - link

And that's why this entire test is a complete waste of time. Something like Geekbench or especially Userbench is much, much better because it gives you a range of scores. Instead of trying to create false precision by saying that a AMD 4700U scored, say, a "979" on a benchmark, Userbench will say that all the 4700U's tested scored from 899 to 1008, and break it down into percentiles. This way, you have a range of expected performance in mind instead of being fixated on that "979" number, which could have been obtained in an unrealistic scenario.

Rudde - Saturday, July 25, 2020 - link

Isn't userbench a synthetic together with geekbench? What exactly are they testing? Instead of knowing which of Intel i7 10700k and AMD ryzen 7 3800X is better at rendering, video encoding, number crunching or whatever your use case is, you'll get a distribution based on a largely unknown test. The Intel and AMD processors might end up being within error margins of each other in your use case, but that in itself tells something too. All benchmarks are inherently bad; there is not a single benchmark that captures every use case while not being affected by its environment (ram speeds, temperatures, etc). I prefer tests that I understand, over tests that I do not understand.

bananaforscale - Wednesday, July 22, 2020 - link

One could ask what the point of Userbenchmark is in these days of quadcores being basically entry level while the benchmark has DECREASED its multicore weighting.

A5 - Monday, July 20, 2020 - link

For my own personal test, getting an i7-4770K in the list would be a big help.

Once you have a compile test, a Xeon E5-1680v3 would be nice to see so that I can sell my corp on newer workstations...

Shmee - Wednesday, July 22, 2020 - link

Those are great Haswell EP CPUs, and they OC too! I have an E5-1660v3 in my X99 rig.

Mockingtruth - Monday, July 20, 2020 - link

I have a 3570k and a E8600 spare with respective motherboards and ram if useful?

CampGareth - Monday, July 20, 2020 - link

Personally I'd like to see a Xeon E5-2670 v1 benchmarked. I'm still running a pair of them as my workstation but these days AMD can beat the performance on a single socket and halve the power consumption.

Samus - Tuesday, July 21, 2020 - link

Do you run them in an HP Z620? I ran the same system with the same CPU’s for years at one of my clients. What a beast.