Launching the #CPUOverload Project: Testing Every x86 Desktop Processor since 2010

by Dr. Ian Cutress on July 20, 2020 1:30 PM EST

CPU Tests: Office

Our previous set of ‘office’ benchmarks has often been a mix of science and synthetics, so this time we wanted to keep our office section focused purely on real-world performance.

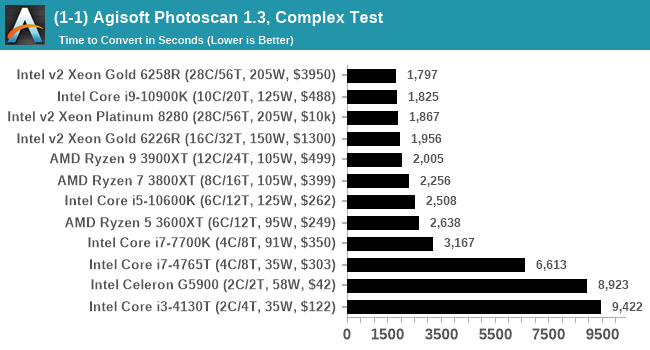

Agisoft Photoscan 1.3.3: link

Photoscan stays in our benchmark suite from the previous benchmark scripts, but is updated to the 1.3.3 Pro version. As this benchmark has evolved, features such as Speed Shift or XFR on the latest processors come into play as it has many segments in a variable threaded workload.

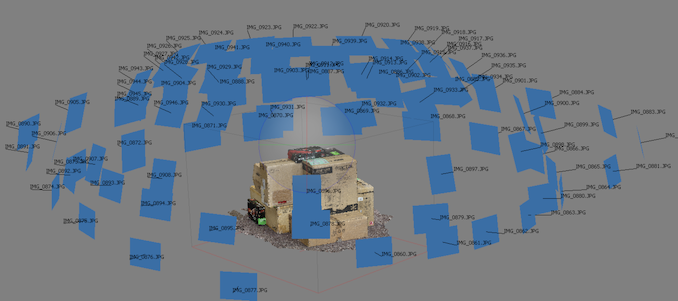

The concept of Photoscan is about translating many 2D images into a 3D model, so the more detailed the images, and the more you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages, with some parts of the stages being single-threaded and others multi-threaded, along with some cache/memory dependency in there as well. For the more variably threaded parts of the workload, features such as Speed Shift and XFR can take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command line operation. Agisoft provided us with a set of new images for this version of the test, and a Python script to run it. We’ve modified the script slightly by changing some quality settings for the sake of the benchmark suite length, as well as adjusting how the final timing data is recorded. The Python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
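As a rough illustration of recording a time for each stage plus an overall time, here is a minimal sketch in Python. It is not the script Agisoft supplied, and it makes no Photoscan API calls: the stage callables, the `time_stages` and `format_results` names, and the `name,seconds` output format are all assumptions made for this example.

```python
import time
from typing import Callable, Dict, List, Tuple


def time_stages(stages: List[Tuple[str, Callable[[], None]]],
                clock: Callable[[], float] = time.perf_counter) -> Dict[str, float]:
    """Run each stage in order; return seconds per stage plus a 'total' entry."""
    results: Dict[str, float] = {}
    for name, run_stage in stages:
        start = clock()
        run_stage()                      # hypothetical stage, e.g. one step of the 2D-to-3D pipeline
        results[name] = clock() - start
    # The total is the sum of the stage times, computed before it is stored.
    results["total"] = sum(results.values())
    return results


def format_results(results: Dict[str, float]) -> str:
    """Render timings as 'name,seconds' lines (an assumed, illustrative format)."""
    return "\n".join(f"{name},{seconds:.2f}" for name, seconds in results.items())
```

In use, each of the four stages would be wrapped in a zero-argument callable and passed in as a `(name, callable)` pair. The `clock` parameter exists only so the timer can be swapped out when checking the logic.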

The final result is a table that looks like this:

The new v1.3.3 version of the software is faster than the v1.0.0 version we were previously using on the old set of benchmark images. However, the newer set of benchmark images is more detailed (and larger in number), giving a longer benchmark overall. This is usually observed in the multi-threaded stages for the 3D mesh calculation.

Technically, Agisoft has renamed Photoscan to MetaShape, and it is currently on version 1.6.2. We reached out to Agisoft to get an updated script for the latest edition; however, I never heard back from our contacts. Because the scripting interface has changed, we’ve stuck with 1.3.3.

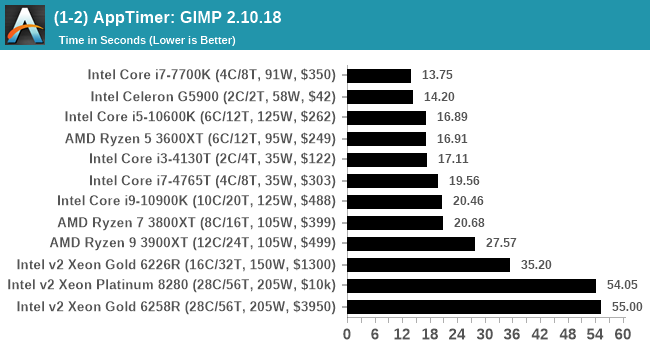

Application Opening: GIMP 2.10.18

First up is a test using a monstrous multi-layered xcf file we once received in advance of attending an event. While the file is only a single ‘image’, it has so many high-quality layers embedded it was taking north of 15 seconds to open and to gain control on the mid-range notebook I was using at the time.

For this test, we’ve upgraded from GIMP 2.10.4 to 2.10.18, but also changed the test a bit. Normally, the first time a user loads the GIMP package from a fresh install, the system has to configure a few dozen files that remain optimized on subsequent openings. For our test we delete those configured optimized files in order to force a ‘fresh load’ each time the software is run.

We measure the time taken from calling the software to be opened until the software hands itself back over to the OS for user control. The test is repeated for ten minutes or 15 loops, whichever comes first, with the first three results discarded.
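The loop described above can be sketched roughly as follows. This is an illustrative Python harness, not our actual test script: `launch` and `reset` are hypothetical callables (one that returns once the application is usable, one that deletes the configured files), and the code assumes the loop stops at whichever of the two limits is reached first.

```python
import time
from typing import Callable, List


def run_loops(launch: Callable[[], None],
              reset: Callable[[], None] = lambda: None,
              max_seconds: float = 600.0,
              max_loops: int = 15,
              discard: int = 3,
              clock: Callable[[], float] = time.perf_counter) -> List[float]:
    """Time repeated launches until either limit is hit, then drop the warm-up runs."""
    timings: List[float] = []
    began = clock()
    while len(timings) < max_loops and clock() - began < max_seconds:
        reset()                          # force a 'fresh load'; not included in the timing
        start = clock()
        launch()                         # assumed to return when the user has control
        timings.append(clock() - start)
    return timings[discard:]             # the first `discard` results are thrown away
```

Note that on a slow system the time limit can end the run before many loops complete, so fewer results survive the discard step. How the real script detects that the software has handed control back is not shown here.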

The final result is a table that looks like this:

Because GIMP is optimizing files as it starts up, the amount of work required increases dramatically as we increase the core count.

Ultimately we chose GIMP because it takes a long time to load, is free, and actually fits very nicely with our testing system. There is software out there that can take longer to start up; however, I found that most of it required licences, wouldn’t allow installation across multiple systems, or that most of the delay was contacting home servers. For this test GIMP is the ultimate portable solution (however, if people have suggestions, I would like to hear them).

110 Comments

PeachNCream - Tuesday, July 21, 2020 - link

You don't get what it means to perform a controlled test do you?

Aspernari - Wednesday, July 22, 2020 - link

It's important to note that the environment is not actually well-controlled.

https://twitter.com/IanCutress/status/128480609693...

We don't know temperature for the operating conditions for these tests, which matters more and more for boost behavior for CPUs and GPUs. He says 36c when he got into the office, we'll never know what the temperature peaked at, nor how often similar conditions were reached.

A standard platform is a good choice, but a controlled environment is also important. Unfortunately, the results aren't as reliable as they otherwise might have been.

PeterCollier - Wednesday, July 22, 2020 - link

And that's why this entire test is a complete waste of time. Something like Geekbench or especially Userbench is much, much better because it gives you a range of scores. Instead of trying to create false precision by saying that a AMD 4700U scored, say, a "979" on a benchmark, Userbench will say that all the 4700U's tested scored from 899 to 1008, and break it down into percentiles. This way, you have a range of expected performance in mind instead of being fixated on that "979" number, which could have been obtained in an unrealistic scenario.

Rudde - Saturday, July 25, 2020 - link

Isn't userbench a synthetic together with geekbench? What exactly are they testing? Instead of knowing which of Intel i7 10700k and AMD ryzen 7 3800X is better at rendering, video encoding, number crunching or whatever your use case is, you'll get a distribution based on a largely unknown test. The Intel and AMD processors might end up being within error margins of each other in your use case, but that in itself tells something too. All benchmarks are inherently bad; there is not a single benchmark that captures every use case while not being affected by its environment (ram speeds, temperatures, etc). I prefer tests that I understand, over tests that I do not understand.

bananaforscale - Wednesday, July 22, 2020 - link

One could ask what the point of Userbenchmark is in these days of quadcores being basically entry level while the benchmark has DECREASED its multicore weighting.

A5 - Monday, July 20, 2020 - link

For my own personal test, getting an i7-4770K in the list would be a big help.

Once you have a compile test, a Xeon E5-1680v3 would be nice to see so that I can sell my corp on newer workstations...

Shmee - Wednesday, July 22, 2020 - link

Those are great Haswell EP CPUs, and they OC too! I have an E5-1660v3 in my X99 rig.

Mockingtruth - Monday, July 20, 2020 - link

I have a 3570k and a E8600 spare with respective motherboards and ram if useful?

CampGareth - Monday, July 20, 2020 - link

Personally I'd like to see a Xeon E5-2670 v1 benchmarked. I'm still running a pair of them as my workstation but these days AMD can beat the performance on a single socket and halve the power consumption.

Samus - Tuesday, July 21, 2020 - link

Do you run them in an HP Z620? I ran the same system with the same CPU’s for years at one of my clients. What a beast.