Launching the #CPUOverload Project: Testing Every x86 Desktop Processor since 2010

by Dr. Ian Cutress on July 20, 2020 1:30 PM EST

CPU Tests: Office

Our previous sets of ‘office’ benchmarks have often been a mix of science and synthetics, so this time we wanted to keep our office section focused purely on real-world performance.

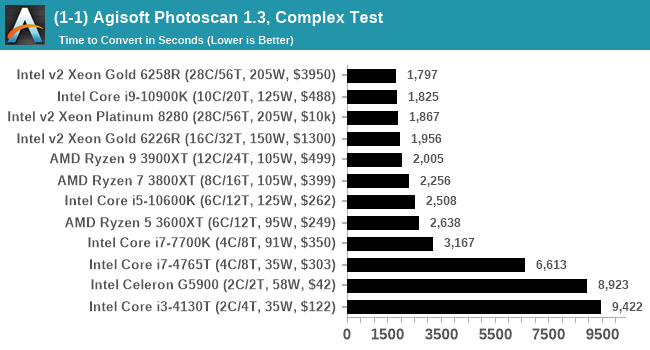

Agisoft Photoscan 1.3.3

Photoscan stays in our benchmark suite from the previous benchmark scripts, but is updated to the 1.3.3 Pro version. As this benchmark has evolved, features such as Speed Shift or XFR on the latest processors come into play, because the workload has many segments with variable threading.

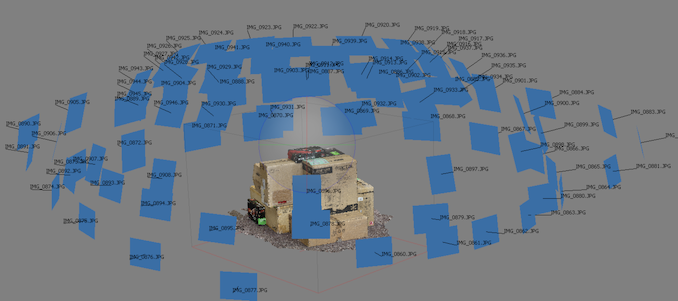

The concept of Photoscan is about translating many 2D images into a 3D model - so the more detailed the images, and the more you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages, with some parts of the stages being single-threaded and others multi-threaded, along with some cache/memory dependency in there as well. For the more variably threaded parts of the workload, features such as Speed Shift and XFR can take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command line operation. Agisoft provided us with a set of new images for this version of the test, and a Python script to run it. We’ve modified the script slightly by changing some quality settings to keep the length of the benchmark suite in check, as well as adjusting how the final timing data is recorded. The Python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
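As a rough illustration of recording a time for each stage plus an overall time, here is a minimal Python sketch. It is not the script Agisoft supplied: `time_stages`, `format_results`, the stage names, and the comma-separated output format are all hypothetical, and the stage callables stand in for whatever the real script invokes.

```python
import time


def time_stages(stages, clock=time.perf_counter):
    """Run (name, callable) pairs in order; return seconds per stage plus a total.

    `stages` is a hypothetical list such as [("stage 1", fn1), ("stage 2", fn2)].
    """
    results = {}
    total_start = clock()
    for name, run in stages:
        start = clock()
        run()  # the actual work for this stage
        results[name] = clock() - start
    # Overall wall time, including any gaps between stages
    results["total"] = clock() - total_start
    return results


def format_results(results):
    """Render results as 'name,seconds' lines (an assumed output format)."""
    return "\n".join(f"{name},{seconds:.3f}" for name, seconds in results.items())
```

Passing the clock in as a parameter keeps the timing logic easy to check with a fake clock, without running a real workload.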

The final result is a table that looks like this:

The new v1.3.3 version of the software is faster than the v1.0.0 version we were previously using on the old set of benchmark images. However, the newer set of benchmark images is more detailed (and larger in number), giving a longer benchmark overall. This is usually observed in the multi-threaded stages for the 3D mesh calculation.

Technically Agisoft has renamed Photoscan to Metashape, which is currently on version 1.6.2. We reached out to Agisoft to get an updated script for the latest edition; however, I never heard back from our contacts. Because the scripting interface has changed, we’ve stuck with 1.3.3.

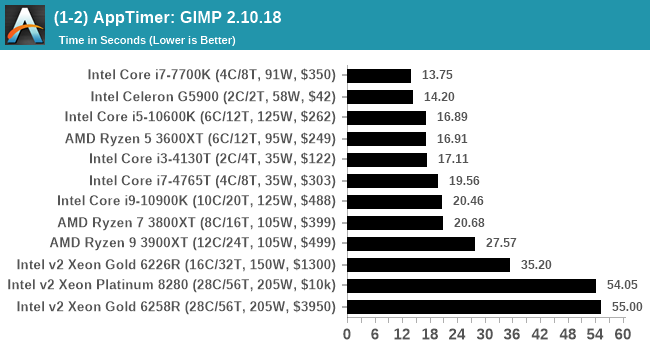

Application Opening: GIMP 2.10.18

First up is a test using a monstrous multi-layered xcf file we once received in advance of attending an event. While the file is only a single ‘image’, it has so many high-quality layers embedded it was taking north of 15 seconds to open and to gain control on the mid-range notebook I was using at the time.

For this test, we’ve upgraded from GIMP 2.10.4 to 2.10.18, but also changed the test a bit. Normally, the first time a user loads the GIMP package from a fresh install, the system has to configure a few dozen files that remain optimized on subsequent openings. For our test we delete those configured optimized files in order to force a ‘fresh load’ each time the software is run.

We measure the time taken from calling the software to open until the software hands itself back over to the OS for user control. The test is repeated for a minimum of ten minutes or at least 15 loops, whichever comes first, with the first three results discarded.
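The repeat-and-discard rule can be sketched in Python as follows. This is an illustration rather than the actual benchmark script: `run_loops`, `mean_seconds`, and the `measure` callable are hypothetical names, and the code reads "whichever comes first" as stopping once either the ten-minute budget or the 15-loop count is reached.

```python
import statistics
import time


def run_loops(measure, max_seconds=600.0, max_loops=15, discard=3,
              clock=time.monotonic):
    """Call `measure()` repeatedly and return the samples kept for scoring.

    `measure` is a hypothetical callable that performs one fresh load and
    returns its duration in seconds. Looping stops as soon as either the time
    budget or the loop count is reached (our reading of "whichever comes first").
    """
    samples = []
    start = clock()
    while len(samples) < max_loops and clock() - start < max_seconds:
        samples.append(measure())
    # Drop the first few runs, which the article discards
    return samples[discard:]


def mean_seconds(kept):
    """Average the retained samples (one assumed way to summarise them)."""
    return statistics.mean(kept)
```

On a slow system the time budget ends the run before 15 loops complete, so fewer samples remain after the first three are dropped.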

The final result is a table that looks like this:

Because GIMP is optimizing files as it starts up, the amount of work required increases dramatically as we increase the core count.

Ultimately we chose GIMP because it takes a long time to load, is free, and actually fits very nicely with our testing system. There is software out there that can take longer to start up, however I found that most of it required licences, wouldn’t allow installation across multiple systems, or that most of the delay was contacting home servers. For this test GIMP is the ultimate portable solution (however if people have suggestions, I would like to hear them).

110 Comments

ruthan - Monday, July 27, 2020 - link

Well lots of bla, bla, bla.. I checked graphs in archizlr they are classic just few entries.. there is link to your benchmark database, but here i see preselected some Crysis benchmark, which is not part of article.. and dont lead to some ultimate lots of cpus graphs. So it need much more streamlining.

I usually using old Geekbench for cpus tests and there i can compare usually what i want.. well not with real applications and games, but its quick too. Otherwise usually have enough knowledge to know if is some cpu good enough for some games or not.. so i dont need some very old and very need comparisions. Something can be found at Phoronix.

These benchmarks will always lots relevancy with new updates, unless all cpus would in own machines and update and running and reresting constantly - which could be quite waste of power and money.

Maybe some golden path is some simple multithreaded testing utility with 2 benchmarks one for integers and one for floats.

Ian Cutress - Wednesday, August 5, 2020 - link

When you're in Bench, check the drop down menu on your left for the individual tests

hnlog - Wednesday, July 29, 2020 - link

> For our testing on the 2020 suite, we have secured three RTX 2080 Ti GPUs direct from NVIDIA.

Congrats!

Koenig168 - Saturday, August 1, 2020 - link

It would be more efficient to focus on the more popular CPUs. Some of the less popular SKUs which differ only by clock speed can have their performance extrapolated. Testing 900 CPUs sound nice but quickly hit diminishing returns in terms of usefulness after the first few hundred.

You might also wish to set some minimum performance standards using just a few tests. Any CPU which failed to meet those standards should be marked as "obsolete, upgrade already dude!" and be done with them rather than spend the full 30 to 40 hours testing each of them.

Finally, you need to ask yourself "How often do I wish to redo this project and how much resources will I be able to devote to it?" Bearing in mind that with new drivers, games etc, the database needs to be updated periodically to stay relevant. This will provide a realistic estimate of how many CPUs to include in the database.

Meteor2 - Monday, August 3, 2020 - link

I think it's a labour of love...

TrevorX - Thursday, September 3, 2020 - link

My suggestion would be to bench the highest performing Xeons that supported DDR3 RAM. Why? Because the cost of DDR3 RDIMMs is so amazingly cheap (as in, less than 10%) compared with DDR4. I personally have a Xeon E5-1660v2 @4.1GHz with 128GB DDR3 1866MHz RDIMMs that's the most rock stable PC I've ever had. Moving up to a DDR4 system with similar memory capacity would be eye-wateringly expensive. I currently have 466 tabs open in Chrome, Outlook, Photoshop, Word, several Excel spreadsheets, and I'm only using 31.3% of physical RAM. I don't game, so I would be genuinely interested in what actual benefit would be derived from an upgrade to Ryzen / Threadripper.

Also very keen to see server/hypervisor testing of something like Xeon E5-2667v2 vs Xeon W-1270P or Xeon Silver 4215R for evaluation of on-prem virtualisation hosts. A lot of server workloads are being shifted to the cloud for very good reasons, but for smaller businesses it might be difficult to justify the monthly expense of cloud hosting (and Azure licensing) when they still have a perfectly serviceable 5yo server with plenty of legs left on it. It would be great to be able to see what performance and efficiency improvements can be had jumping between generations.

Tilmitt - Thursday, October 8, 2020 - link

When is this going to be done?

Mil0 - Friday, October 16, 2020 - link

Well they launched with 12 results if I count correctly, and currently there are 38 listed, that's close to 10/month. With the goal of 900, that would mean over 7 years (in which ofc more CPUs would be released)

Mil0 - Friday, October 16, 2020 - link

Well they launched with 12 results if I count correctly, and currently there are 44 listed, that's about a dozen a month. With the goal of 900, that would mean 6 years (in which ofc more CPUs would be released)

Mil0 - Friday, October 16, 2020 - link

Caching hid my previous comment from me, so instead of a follow up there are now 2 pretty similar ones. However, in the mean time I found Ian is actually updating on twitter, which you can find here: https://twitter.com/IanCutress/status/131350328982...

He actually did 36 CPU's in 2.5 months, so it should only take 5 years! :D