Intel Confirms 8th Gen Core on 14nm, Data Center First to New Nodes

by Ian Cutress on February 9, 2017 6:52 PM EST- Posted in

- CPUs

- Intel

- Core 8th Gen

- Cannon Lake

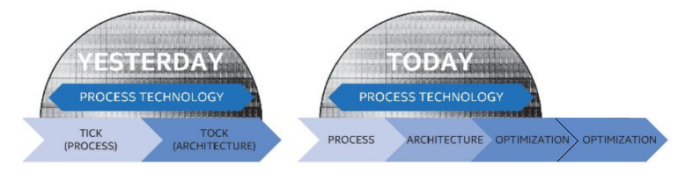

A quick news piece on information coming out of Intel’s annual Investor Day in California. As confirmed to Ashraf Eassa by Intel at the event, Intel’s 8th Generation Core microarchitecture will remain on the 14nm node. This is an interesting development with the recent launch of Intel’s 7th Generation Core products being touted as the ‘optimization’ behind the new ‘Process-Architecture-Optimization’ three-stage cadence that had replaced the old ‘tick-tock’ cadence. With Intel stringing out 14nm (or at least, an improved variant of 14nm as we’ve seen on 7th Gen) for another generation, it makes us wonder where exactly Intel can promise future performance or efficiency gains on the design unless they start implementing microarchitecture changes.

Despite this, if you were to believe supposed ‘leaked’ roadmaps (which we haven’t confirmed from a second source as of yet), the 8th Generation product ‘Cannon Lake’ is more geared towards the Y and U part of Intel’s roadmap. This would ring true with a mobile first strategy that Intel has mirrored with recent generations such that the smaller, low power chips are off the production line for a new product first, however we'd also expect 10nm to also be in the smaller chips first too (as demonstrated at CES). Where Cannon Lake will end up in the desktop or enterprise segment however remains to be seen. To put something a bit more solid into this, Ashraf also mentioned words from Dr. Venkata ‘Murthy’ Renduchintala, VP and GM of Client and IoT:

‘Murthy referred to it at the event, process tech use will be ‘fluid’ based on segment’.

If one read too much into this, we may start seeing a blend of process nodes for different segments at the same time for different areas of the market. We already do have that to some extent with the mainstream CPUs and the HEDT/Xeon families, but this phrasing seems that we might get another split between consumer products or consumer and enterprise. We may get to a point where Intel's 'Gen' naming scheme for its CPUs covers two or more process node variants.

Speaking of the Enterprise segment, another bit of information has also surfaced, coming from a slide during a talk by Diane Bryant (EVP/GM of Data Center) and posted online by Ashraf. The slide contains the words ‘Data center first for next process node’

We can either talk about process node in terms of the ‘number’, either 14nm/10nm/7nm, or by variants within that process (high power, high efficiency). One might suspect that this means Intel is moving hard and fast with 10nm for Xeons and big computing projects, despite showing off 10nm silicon at CES earlier this year. That being said, it’s important to remember that the data center market is large, and includes high-density systems with many cores, such as Atom cores, and Intel did recently open up its 10nm foundry business to ARM Artisan IP projects. So while the slide does say ‘Data center first’, it might be referring to DC projects based on ARM IP in that segment rather than big 4-24+ core Xeons. At this stage of the game it is hard to tell.

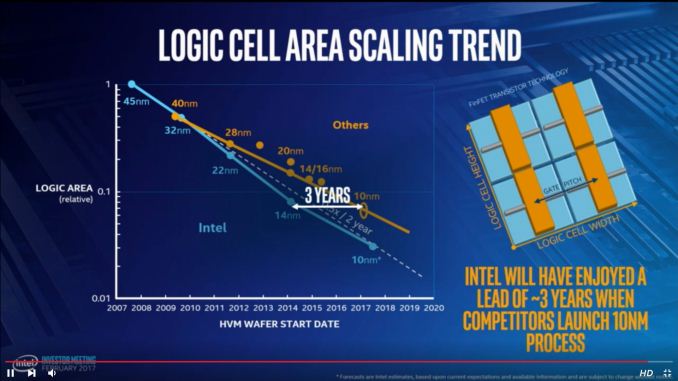

On top of all this, Intel still has extreme confidence in its foundry business. An image posted by Dick James of Siliconics from the livestream shows Intel expects to have a three-year process node advantage when its competitors (Samsung, TSMC) start launching 10nm:

I’ve been brief with this news for a reason - at this point there are a lot of balls in the air with many different ways to take this information, and the Investor Day is winding down on talks and finishing with smaller 1-on-1 meetings. We may get further clarification on this news as the day goes on.

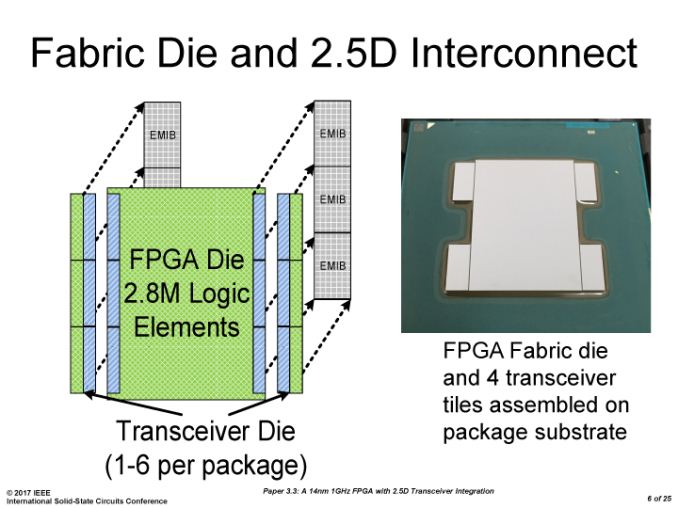

Update 1: On speaking with Diane Bryant, the 'data center gets new nodes first' is going to be achieved by using multiple small dies on a single package. But rather than use a multi-chip package as in previous multi-core products, Intel will be using EMIB as demonstrated at ISSCC: an MCP/2.5D interposer-like design with an Embedded Multi-Die Interconnect Bridge (EMIB).

An Intel Slide from ISSCC, via PC Watch

Initially EMIB was thought of as a technology relating to Intel's acquisition of Altera and potential future embedded FPGA designs, and given the slide above and comments made at the Investor Day, it seems there are other plans for this technology too. The benefit of using multiple smaller dies over a large monolithic 600mm2 die is typically related to cost and yield, however the EMIB technology also has to be up to par and there may be a latency or compatibility trade-off.

Source: Intel, @TMFChipFool

124 Comments

View All Comments

Meteor2 - Friday, February 10, 2017 - link

Actually some of them make sense. Coffee Lake will be 8th gen consumer >15W on 14 nm, as previously rumoured. It launches late this year. Intel is aiming for 15% performance gains and they'll have up to 6 cores.Cannonlake is 8th gen consumer <15W on 10 nm. It was thought this would release late this year. What we appear to be seeing is it being pushed back so the first 10 nm dies can go into a currently unknown data centre product.

7 nm (called 5 nm if you're TSMC) will come around 2020, probably with EUV, and possibly might be the last node shrink for a very long time as it will be so expensive to design, develop and produce chips at 7 nm.

dstarr3 - Friday, February 10, 2017 - link

So why are die shrinks still so important exactly? I get why it was important in the past, but at this point, with things already so small, where do the diminishing returns begin? Considering shrinking things further has been hugely problematic, what exactly is there to be gained?extide - Friday, February 10, 2017 - link

Basically because all the competitors are still doing shrinks, if you don't do them too, you fall behind. I mean there is a lot more to it than that, but that's really the simple answer -- it is to stay competitive.name99 - Friday, February 10, 2017 - link

Here's what I would say:At a TECHNICAL level, die shrinks are less important than in the past. Performance improvements mostly derive from material improvements and new transistor designs, not from the fact that the lithography is drawing smaller features.

BUT

You can't just roll out each new improvement (a different high-K material, a new idea for annealing contact metals, a higher-aspect ration fin, etc) one at a time as they get perfected. Designs are optimized for a particular process and don't expect that random small aspects of that process keep changing every few months.

SO

All the improvements over the past year or so are kept in the lab, forced to play nice together, then rolled out simultaneously as a new "node". Sometimes this node comes with smaller features (eg TSMC 20nm to 16nm), sometimes it "just" reflects material and transistor design improvements (+ and ++ nodes like TSMC 16nm+).

.......................................

There's a sort of weird ignorance+snobbery on the internet (though god knows what the people involved have to be snobbish about...) that thinks these "+" nodes are not "real" improvements. This CAN be the case, but they can also be substantial improvements (as in the case of the 16nm+ node). Partly there's an issue of just how much was improved by the various tweaks; partly there's an issue of how well prepared designers were for the new node and so could take advantage of it. The foundry customers seem to be well informed about future plans ahead of time, and to do an adequate job of exploiting new designs. Whereas Intel seems to have stumbled into the "Optimization, Optimization2, ...?" scheme unprepared and with no backup plan, and their designs have such a long lead time that they have not been able to really exploit the process changes (regardless of this "Optimization" claim).

So the "+" that's the Kaby Lake 14nm+FF node appears to be essentially

- the exact same CPU design

- pretty much the exact same process

- JUST a slight relaxing of how close some transistors are to each other, meaning that they don't interfere with each other as much, and so allowing for a minor boost in frequency.

This is obviously a completely different (and vastly less impressive) sort of "optimization" than the sort of optimization that has Apple improving frequency by 30%, performance by 50%, and reducing energy substantially when they move from 16nmFF to 16nm+FF on TSMC.

But, as I said, that's the difference between a planned and well-executed constant stream of improvement, and a mad-scramble for something, anything, when things don't go the way you planned.

Intel can't be faulted for having their processes delayed in their introduction --- issues happen. They CAN be faulted in apparently have absolutely zero back-up plans in the event that something might go wrong. I'm damn sure that, eg, both TSMC and Apple have backup plans B, C, and D in the event that something unexpected happens to their schedules.

fanofanand - Friday, February 10, 2017 - link

Apple is taking stock ARM IP and tweaking it to widen things up. Apple is not home to the Gods of Engineering as you repeatedly claim. Apple's CPUs are nowhere near as complex as Intel's, and their instruction set is nowhere near as versatile as X86. Get off your knees and stop worshipping at the altar of Apple, the innovation brought by Intel dwarfs the "rectangular device with rounded corners" crap that Apple does.Meteor2 - Sunday, February 12, 2017 - link

No sign of diminishing returns yet. Each shrink still provides performance and efficiency gains. It's just that complexity of design and production is increasing exponentially with each node nowadays, and thus cost. There's every chance consumers won't pay the prices necessary for 5 and 3 nm to be built.name99 - Friday, February 10, 2017 - link

"An image posted by Dick James of Siliconics from the livestream shows Intel expects to have a three-year process node advantage when its competitors (Samsung, TSMC) start launching 10nm:"

So let's see. In the REAL world

- Apple on TSMC is already a match for Intel at equivalent power. So much for that 3yr node advantage. Where exactly do we see it pay off?

- Intel was telling us at the Koby Lake launch about their new comfort fit transistors. So WTF is it? If logic density is the most important metric possible, why did they go backwards with their "relaxed" KL transistor layout?

- What we expect in a month or two is an A10X manufactured on TSMC 10nm which is likely a reasonable match for pretty much any non-K Intel core. (If we assume previous scaling we'd expect this to be at around 3.4GHz, with 25% IPC advantage over intel).

- Next year (maybe Q1, maybe as late as Q3) we expect A11X on TSMC's 7nm.

So yeah, sure, TSMC 16nm+ is not as dense as Intel 14nm. And sure TSMC's 7nm will not be as dense as Intel's 10nm. BUT

- Intel seem unable to extract a performance advantage from their process

- TSMC will be shipping real A11Xs in significant volumes on 7nm at the same time that Intel will be shipping god knows what on 10nm, in volumes that appear to be calculated to make the slow slow slow rollout of Broadwell look like a rocket.

If this is the best story Intel can tell its investors, good luck those of you stuck with them (either via stock possession or unable to switch chips).

lefty2 - Friday, February 10, 2017 - link

TSMC's 7nm is *exactly* as dense as Intel's 10nm, in terms of metal/silicon pitches anyway: https://www.semiwiki.com/forum/content/6477-iedm-2...fanofanand - Friday, February 10, 2017 - link

Apple is not a "match for Intel at equivalent power" GTFO with that crap. Can't tell if you are just trolling or a paid shill.name99 - Friday, February 10, 2017 - link

I'm not interested in re-litigating this. If you consider it still a unproved proposition, there's nothing I can do to cure your ignorance.A9X was comparable with Intel over a year ago:

http://www.anandtech.com/show/9766/the-apple-ipad-...

A10 was a 50% improvement over A9, and we'd expect at least the same sort of improvement for A10X over A10, with a likely additional 20% boost or so from the transition to 10nm.