Hidden Secrets: Investigation Shows That NVIDIA GPUs Implement Tile Based Rasterization for Greater Efficiency

by Ryan Smith on August 1, 2016 5:00 AM EST

As someone who analyzes GPUs for a living, one of the more vexing things in my life has been NVIDIA’s Maxwell architecture. The company’s 28nm refresh offered a huge performance-per-watt increase for only a modest die size increase, essentially allowing NVIDIA to offer a full generation’s performance improvement without a corresponding manufacturing improvement. We’ve had architectural updates on the same node before, but never anything quite like Maxwell.

The vexing aspect to me has been that while NVIDIA shared some details about how they improved Maxwell’s efficiency over Kepler, they have never disclosed all of the major improvements under the hood. We know, for example, that Maxwell implemented a significantly altered SM structure that was easier to reach peak utilization on, and thanks to its partitioning wasted much less power on interconnects. We also know that NVIDIA significantly increased the L2 cache size and did a number of low-level (transistor level) optimizations to the design. But NVIDIA has also held back information – the technical advantages that are their secret sauce – so I’ve never had a complete picture of how Maxwell compares to Kepler.

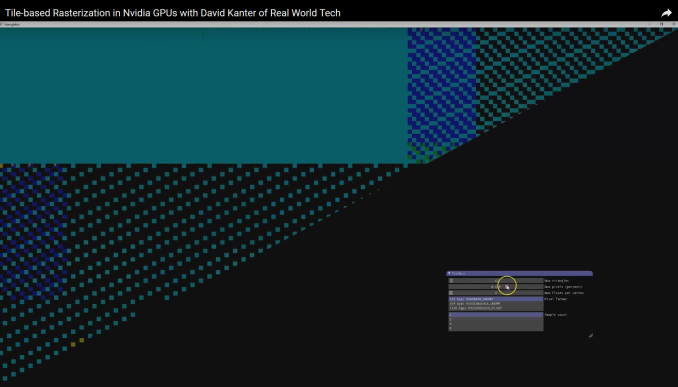

For a while now, a number of people have suspected that one of the ingredients of that secret sauce was that NVIDIA had applied some mobile power efficiency technologies to Maxwell. It was, after all, their original mobile-first GPU architecture, and now we have some data to back that up. Friend of AnandTech and all around tech guru David Kanter of Real World Tech has gone digging through Maxwell/Pascal, and in an article & video published this morning, he outlines how he has uncovered very convincing evidence that NVIDIA implemented a tile based rendering system with Maxwell.

In short, by playing around with some DirectX code specifically designed to look at triangle rasterization, he has come up with some solid evidence that NVIDIA’s handling of triangles has significantly changed since Kepler, and that their current method of triangle handling is consistent with a tile based renderer.

NVIDIA Maxwell Architecture Rasterization Tiling Pattern (Image Courtesy: Real World Tech)

Tile based rendering is something we’ve seen for some time in the mobile space, with both Imagination PowerVR and ARM Mali implementing it. The significance of tiling is that by splitting a scene up into tiles, tiles can be rasterized piece by piece by the GPU almost entirely on die, as opposed to the more memory (and power) intensive process of rasterizing the entire frame at once via immediate mode rendering. The trade-off with tiling, and why it’s a bit surprising to see it here, is that the PC legacy is immediate mode rendering, and this is still how most applications expect PC GPUs to work. So to implement tile based rasterization on Maxwell means that NVIDIA has found a practical means to overcome the drawbacks of the method and the potential compatibility issues.
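To make the difference between the two approaches concrete, here is a minimal software sketch of tile binning next to a plain immediate mode loop. It is an illustration of the general technique only, not a description of NVIDIA's hardware: the function names, the 16-pixel tile size, and the "last triangle wins" framebuffer are all assumptions made for the example.

```python
import math
from collections import defaultdict

TILE = 16  # tile edge in pixels; illustrative value, not NVIDIA's actual tile size


def edge(a, b, p):
    """Signed area of (a, b, p); the sign tells which side of edge a->b p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def covers(tri, px, py):
    """True if the pixel centre (px + 0.5, py + 0.5) lies inside the triangle."""
    p = (px + 0.5, py + 0.5)
    w0 = edge(tri[0], tri[1], p)
    w1 = edge(tri[1], tri[2], p)
    w2 = edge(tri[2], tri[0], p)
    # Accept either winding order.
    return (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0)


def bin_triangles(tris, width, height, tile=TILE):
    """Map each tile (tx, ty) to the triangles whose bounding box touches it."""
    bins = defaultdict(list)
    max_tx, max_ty = (width - 1) // tile, (height - 1) // tile
    for i, tri in enumerate(tris):
        xs = [v[0] for v in tri]
        ys = [v[1] for v in tri]
        tx0 = max(math.floor(min(xs)) // tile, 0)
        tx1 = min(math.floor(max(xs)) // tile, max_tx)
        ty0 = max(math.floor(min(ys)) // tile, 0)
        ty1 = min(math.floor(max(ys)) // tile, max_ty)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                bins[(tx, ty)].append(i)  # submission order is preserved
    return bins


def rasterize_tiled(tris, width, height, tile=TILE):
    """Rasterize one tile at a time into a small buffer, then write it back once."""
    framebuffer = [[-1] * width for _ in range(height)]
    for (tx, ty), ids in sorted(bin_triangles(tris, width, height, tile).items()):
        x0, y0 = tx * tile, ty * tile
        x1, y1 = min(x0 + tile, width), min(y0 + tile, height)
        # This small buffer stands in for on-die memory.
        tile_buf = [[-1] * (x1 - x0) for _ in range(y1 - y0)]
        for i in ids:
            for y in range(y0, y1):
                for x in range(x0, x1):
                    if covers(tris[i], x, y):
                        tile_buf[y - y0][x - x0] = i
        for y in range(y0, y1):
            framebuffer[y][x0:x1] = tile_buf[y - y0]
    return framebuffer


def rasterize_immediate(tris, width, height):
    """Rasterize triangle by triangle, touching the full framebuffer each time."""
    framebuffer = [[-1] * width for _ in range(height)]
    for i, tri in enumerate(tris):
        for y in range(height):
            for x in range(width):
                if covers(tri, x, y):
                    framebuffer[y][x] = i
    return framebuffer
```

Both functions produce the same image for the same triangle list. What changes is the order of the work: the tiled version finishes every triangle that touches a tile before moving on, so each framebuffer region is written once rather than revisited per triangle, which is where the memory traffic savings come from.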

In any case, Real World Tech’s article goes into greater detail about what’s going on, so I won’t spoil it further. But with this information in hand, we now have a more complete picture of how Maxwell (and Pascal) work, and consequently how NVIDIA was able to improve over Kepler by so much. Finally, at this point in time Real World Tech believes that NVIDIA is the only PC GPU manufacturer to use tile based rasterization, which also helps to explain some of NVIDIA’s current advantages over Intel’s and AMD’s GPU architectures, and gives us an idea of what we may see them do in the future.

Source: Real World Tech

191 Comments


Scali - Monday, August 1, 2016 - link

That is not entirely correct. Intel licensed PowerVR technology, but only for their Atom-based systems.

The desktop/notebook GPUs, such as the ones discussed in this paper, were their own design. Also, Zone Rendering is quite different from PowerVR's TBDR.

Manch - Tuesday, August 2, 2016 - link

Wasn't Imageon and Adreno at one point in time ATI and AMD/ATI GPU designs/technologies that were sold off?

Mr.AMD - Monday, August 1, 2016 - link

Now the 1 Billion dollar question, can NVIDIA do Async. Many say they can, i say and many sites support me on this, NOPE.

Rasterization and pre-emption are OK and all, but will never deliver the same performance as Async compute by AMD hardware.

JeffFlanagan - Monday, August 1, 2016 - link

You're not a credible person. You have to know that.

Yojimbo - Monday, August 1, 2016 - link

Amen, brutha. Keep preachin' the word...

Scali - Monday, August 1, 2016 - link

"can NVIDIA do Async"...

"will never deliver the same performance as Async compute by AMD hardware"

^^ Those are two distinct things.

By applying your logic, AMD can't do 3D graphics, because they don't have the same performance as NVidia hardware.

HollyDOL - Monday, August 1, 2016 - link

As async compute on nV hardware doesn't throw NotImplementedException the answer on your question is obvious. Is nV implementation of async compute as effective as AMD's is the question you should be asking.

garbagedisposal - Monday, August 1, 2016 - link

Nobody cares, they don't need async to fuck AMD. Can you guys ban this retard?

Michael Bay - Tuesday, August 2, 2016 - link

Nonono, good amd fanatics are rare nowadays.

ZeDestructor - Tuesday, August 2, 2016 - link

Well, this one isn't very good, so, yknow...