Updated: NVIDIA Announces “NVIDIA Titan X” Video Card: $1200, Available August 2nd

by Ryan Smith on July 25, 2016 11:30 AM EST

In 2014/2015, it took NVIDIA 6 months from the launch of the Maxwell 2 architecture to get GTX Titan X out the door. All things considered, that was a fast turnaround for a new architecture. However, now that we’re in the Pascal generation, it turns out NVIDIA is in the mood to set a speed record, and in more ways than one.

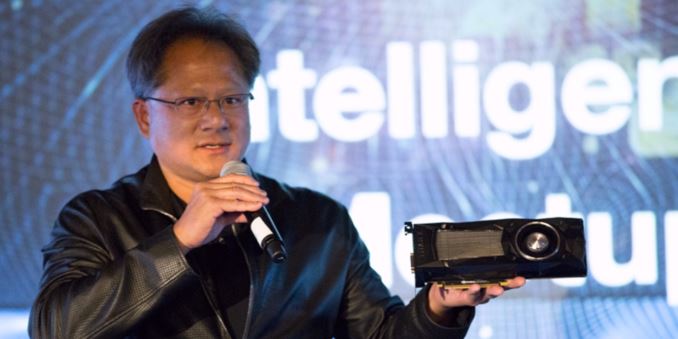

Announced this evening by Jen-Hsun Huang at an engagement at Stanford University is the NVIDIA Titan X, NVIDIA’s new flagship video card. Based on the company’s new GP102 GPU, it’s launching in less than two weeks, on August 2nd.

| NVIDIA GPU Specification Comparison | NVIDIA Titan X | GTX 1080 | GTX Titan X | GTX Titan |
|---|---|---|---|---|
| CUDA Cores | 3584 | 2560 | 3072 | 2688 |
| Texture Units | 224? | 160 | 192 | 224 |
| ROPs | 96? | 64 | 96 | 48 |
| Core Clock | 1417MHz | 1607MHz | 1000MHz | 837MHz |
| Boost Clock | 1531MHz | 1733MHz | 1075MHz | 876MHz |
| TFLOPs (FMA) | 11 TFLOPs | 9 TFLOPs | 6.6 TFLOPs | 4.7 TFLOPs |
| Memory Clock | 10Gbps GDDR5X | 10Gbps GDDR5X | 7Gbps GDDR5 | 6Gbps GDDR5 |
| Memory Bus Width | 384-bit | 256-bit | 384-bit | 384-bit |
| VRAM | 12GB | 8GB | 12GB | 6GB |
| FP64 | 1/32 | 1/32 | 1/32 | 1/3 |
| FP16 (Native) | 1/64 | 1/64 | N/A | N/A |
| INT8 | 4:1 | ? | ? | ? |
| TDP | 250W | 180W | 250W | 250W |
| GPU | GP102 | GP104 | GM200 | GK110 |
| Transistor Count | 12B | 7.2B | 8B | 7.1B |
| Die Size | 471mm² | 314mm² | 601mm² | 551mm² |
| Manufacturing Process | TSMC 16nm | TSMC 16nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 08/02/2016 | 05/27/2016 | 03/17/2015 | 02/21/2013 |
| Launch Price | $1200 | MSRP: $599, Founders: $699 | $999 | $999 |

Let’s dive right into the numbers, shall we? The NVIDIA Titan X will be shipping with 3584 CUDA cores. Assuming that NVIDIA retains their GP104-style consumer architecture here – and there’s every reason to expect they will – then we’re looking at 28 SMs, or 40% more than GP104 and the GTX 1080.

It’s interesting to note here that 3584 CUDA cores happens to be the exact same number of CUDA cores also found in the Tesla P100 accelerator. These products are based on very different GPUs, but I bring this up because Tesla P100 did not use a fully enabled GP100 GPU; its GPU features 3840 CUDA cores in total. NVIDIA is not confirming the total number of CUDA cores in GP102 at this time, but if it’s meant to be a lightweight version of GP100, then this may not be a fully enabled card. This would also maintain the 3:2:1 ratio between GP102/104/106, as we saw with GM200/204/206.

On the clockspeed front, Titan X will be clocked at 1417MHz base and 1531MHz boost. This puts the total FP32 throughput at 11 TFLOPs (well, 10.97…), 24% higher than GTX 1080. In terms of expected performance, NVIDIA isn’t offering any comparisons to GTX 1080 at this time, but relative to the Maxwell 2 based GTX Titan X, they are talking about an up to 60% performance boost.
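For anyone who wants to check the math, the FP32 figure falls out of the CUDA core count and the boost clock, counting each fused multiply-add as two operations. The snippet below is only a sketch of that arithmetic; the function name is illustrative and not anything NVIDIA publishes.

```python
def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPs, counting one FMA as two operations."""
    ops_per_second = cuda_cores * 2 * boost_mhz * 1e6
    return ops_per_second / 1e12


# Core counts and boost clocks taken from the spec table above.
titan_x = fp32_tflops(3584, 1531)
gtx_1080 = fp32_tflops(2560, 1733)

print(f"Titan X:   {titan_x:.2f} TFLOPs")
print(f"GTX 1080:  {gtx_1080:.2f} TFLOPs")
print(f"Advantage: {100 * (titan_x / gtx_1080 - 1):.0f}%")
```

Note that this is a paper number at the rated boost clock; real cards will clock higher or lower depending on power and thermals.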

Feeding the beast that is GP102 is a 384-bit GDDR5X memory bus. NVIDIA will be running Titan X’s GDDR5X at the same 10Gbps as on GTX 1080, so we’re looking at a straight-up 50% increase in memory bus size and resulting memory bandwidth, bringing Titan X to 480GB/sec.

At this point in time there are a few unknowns about other specifications of the card. ROP count and texture unit count have not been disclosed (and this is something NVIDIA rarely posts on their site anyhow), but based on GP104 and GP106, I believe it’s safe to assume that we’re looking at 224 texture units and 96 ROPs respectively. To put this into numbers then, theoretical performance versus a GTX 1080 would be 24% more shading/texturing/geometry/compute performance, 50% more memory bandwidth, and 33% more ROP throughput. Or relative to GTX Titan X (Maxwell 2), 66% more shading/texturing/geometry/compute performance, 43% more memory bandwidth, and 42% more ROP throughput. Of course, none of this takes into account any of Pascal’s architectural advantages such as a new delta color compression system.
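These theoretical comparisons are all simple ratios: unit count times boost clock for shading and ROP throughput, and bus width times data rate for memory bandwidth. As a rough sketch, assuming the estimated 96 ROPs for Titan X and using boost clocks throughout (the `Card` class and helper names here are made up for illustration):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    cuda_cores: int
    rops: int            # the Titan X value is an estimate, not confirmed
    boost_mhz: int
    bus_width_bits: int
    mem_gbps: int        # per-pin memory data rate

    @property
    def bandwidth_gbs(self) -> float:
        # bits per transfer * transfers per second / 8 bits per byte
        return self.bus_width_bits * self.mem_gbps / 8


def percent_gain(new: float, old: float) -> int:
    """Relative improvement of new over old, rounded to a whole percent."""
    return round(100 * (new / old - 1))


def compare(new: Card, old: Card) -> dict:
    return {
        "shading": percent_gain(new.cuda_cores * new.boost_mhz,
                                old.cuda_cores * old.boost_mhz),
        "bandwidth": percent_gain(new.bandwidth_gbs, old.bandwidth_gbs),
        "rop": percent_gain(new.rops * new.boost_mhz,
                            old.rops * old.boost_mhz),
    }


TITAN_X = Card(3584, 96, 1531, 384, 10)
GTX_1080 = Card(2560, 64, 1733, 256, 10)

print(TITAN_X.bandwidth_gbs)        # 480.0
print(compare(TITAN_X, GTX_1080))   # {'shading': 24, 'bandwidth': 50, 'rop': 33}
```

The same `compare` call can be run against the Maxwell 2 GTX Titan X by filling in a `Card` from its column in the spec table.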

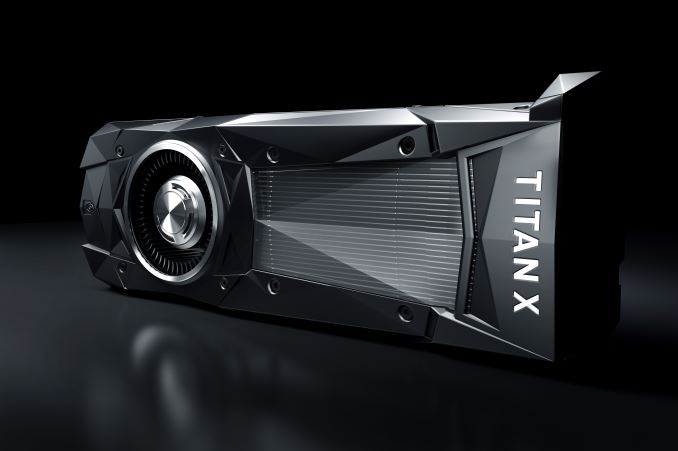

Meanwhile like the past Titans, the new Titan X is a 250W card, putting it 70W (39%) above GTX 1080. In pictures released by NVIDIA and confirmed by their spec sheet, this will be powered by the typical 8-pin + 6-pin power connector setup. And speaking of pictures, the handful of pictures released so far confirm that the card will be following NVIDIA’s previous reference design, in the new GTX 1000 series triangular style. This means we’re looking at a blower based card – now clad in black for Titan X – using a vapor chamber setup like the GTX 1080 and past Titan cards.

The TDP difference between Titan X and GTX 1080 may also explain some of the rationale behind the performance estimates above. In the Maxwell 2 generation, GTX Titan X (250W) consumed 85W more than GTX 980 (165W); but for the Pascal generation, NVIDIA only gets another 70W. As power is the ultimate factor limiting performance, it stands to reason that NVIDIA can't increase performance over GTX 1080 (in the form of CUDA cores and clockspeeds) by as much as they could over GTX 980. There is always the option to go above 250W - Tesla P100 in mezzanine form goes to 300W - but for a PCIe form factor, 250W seems to be the sweet spot for NVIDIA.

Moving on, display I/O is listed as DisplayPort 1.4, HDMI 2.0b, and DL-DVI; NVIDIA doesn’t list the number of ports (and they aren’t visible in product photos), but I’d expect that it’s 3x DP, 1x HDMI, and 1x DL-DVI, just as with the past Titan X and GTX 1080.

From a marketing standpoint, it goes without saying that NVIDIA is pitching the Titan X as their new flagship card. What is interesting however is that it’s not being classified as a GeForce card, rather it’s the amorphous “NVIDIA Titan X”, being neither Quadro, Tesla, nor GeForce. Since the first card’s introduction in 2013, the GTX Titan series has always walked a fine line as a prosumer card, balanced between a relatively cheap compute card for workstations, and an uber gaming card for gaming PCs.

That NVIDIA has removed this card from the GeForce family would seem to further cement its place as a prosumer card. On the compute front the company is separately advertising the card's 44 TOPs INT8 compute performance - INT8 being frequently used for neural network inference - which is something they haven't done before for GeForce or Titan cards. Though make no mistake: the company’s GeForce division is marketing the card and it’s listed on GeForce.com, so it is still very much a gaming card as well.
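The 44 TOPs figure lines up with the 4:1 INT8 rate listed in the spec table, if we assume that ratio is measured against the card's peak FP32 FMA throughput at boost clock. That reading is my assumption rather than something NVIDIA has spelled out, and the function below is just a sketch of it:

```python
def int8_tops(cuda_cores: int, boost_mhz: float, int8_ratio: int = 4) -> float:
    """Peak INT8 throughput in TOPs.

    Assumes int8_ratio is relative to peak FP32 throughput,
    where each FMA counts as two operations.
    """
    fp32_tflops = cuda_cores * 2 * boost_mhz / 1e6
    return fp32_tflops * int8_ratio


print(f"{int8_tops(3584, 1531):.1f} TOPs")  # 43.9 TOPs
```

Rounded to the nearest whole number, that is the 44 TOPs NVIDIA is advertising.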

As for pricing and availability, NVIDIA’s flagships have always been expensive, and NVIDIA Titan X even more so. The card will retail for $1200, $200 more than the previous GTX Titan X (Maxwell 2), and $500 more than the NVIDIA-built GTX 1080 Founders Edition. Given the overall higher prices for the GTX 1000 series, this isn’t something that surprises me, but nonetheless it means buying NVIDIA’s best card just got a bit more expensive. Meanwhile for distribution, in a departure from previous generations, the card is only being sold directly by NVIDIA through their website. The company’s board partners will not be distributing it, though system builders will still be able to include it.

Overall the announcement of this new Titan card, its specifications, and its timing raises a lot of questions. Does GP102 have fast FP64/FP16 hardware, or is it purely a larger GP104, finally formalizing the long-anticipated divide between HPC and consumer GPUs? Just how much smaller is GP102 versus GP100? How has NVIDIA been able to contract their launch window by so much for the Pascal generation, launching 3 GPUs in the span of 3 months? These are all good questions I hope we’ll get an answer to, and with an August 2nd launch it looks like we won’t be waiting too long.

Update 07/25: NVIDIA has given us a few answers to the questions above. We have confirmation that the FP64 and FP16 rates are identical to GP104, which is to say very slow, and primarily there for compatibility/debug purposes. With the exception of INT8 support, this is a bigger GP104 throughout.

Meanwhile we have a die size for GP102: 471mm², which is 139mm² smaller than GP100. Given that both (presumably) have the same number of FP32 cores, the die space savings and implications are significant. This is as good an example as we're ever going to get of the die space cost of the HPC features limited to GP100: NVLink, fast FP64/FP16 support, larger register files, etc. By splitting HPC and graphics/inference into two GPUs, NVIDIA can produce GP102 at what should be a significantly lower price (and higher yield), something they couldn't do until the market for compute products based on GP100 was self-sustaining.

Finally, NVIDIA has clarified the branding a bit. Despite GeForce.com labeling it "the world’s ultimate graphics card," NVIDIA this morning has stated that the primary market is FP32 and INT8 compute, not gaming. Though gaming is certainly possible - and I fully expect they'll be happy to sell you $1200 gaming cards - the tables have essentially been flipped from the past Titan cards, where they were treated as gaming first and compute second. This of course opens the door to a proper GeForce branded GP102 card later on, possibly with neutered INT8 support to enforce the market segmentation.

228 Comments

Morg72 - Saturday, July 23, 2016 - link

So what happened to HBM2? Did nVidia give up on waiting for it and go for next gen? I'd think that these high end cards would be the ones that could actually take advantage of the bandwidth it offers.

As I understand it, AMD's Vega GPUs are including HBM2 and that is a big part of why they won't be out until later this year or early next.

Ryan Smith - Saturday, July 23, 2016 - link

HBM2 is in use on GP100, which in turn is used for the Tesla P100.

Morg72 - Saturday, July 23, 2016 - link

I find it odd that they didn't see fit to include it on a $1,200 card. Makes me think that either they are rushing this to market so they can get the 1080 Ti out whenever AMD's Vega does, like they did last year, or it's not offering as much of a performance advantage as hyped... at least not for their GPUs.

TheinsanegamerN - Monday, July 25, 2016 - link

I'd go with option 2 there.

Pascal doesn't need as much memory bandwidth as Maxwell, the 1080 already outperforms the Titan X, and the new Titan will have a 50% wider bus to play with. If the new Titan is only 50% faster than a 1080 (being charitable here) then it would have no need of pricey, difficult to work with HBM2, as opposed to available GDDR5X.

haukionkannel - Tuesday, July 26, 2016 - link

It also allows them to use normal GDDR5 in the 1080 Ti instead of GDDR5X to reduce the cost.

webdoctors - Saturday, July 23, 2016 - link

Obama and the Feds have been printing money so long that inflation is finally starting to hit us in our wallets. Video cards that used to be $800 are now $1200. This is what happens when interest rates are kept at 0% for 10 years so the left can guilt us into emptying our bank accounts so everyone can live the American dream, albeit only until the dollar comes crashing down.

Don't blame the businesses that are just having to deal with the BS that zerohedge has been exposing for years. If we were using the gold standard, this card would only be $500 and the 1060GTX would be $99. Imagine 980GTX performance for $99!

Just something to consider.

trackgoon - Saturday, July 23, 2016 - link

You have a funny way of looking at money. Who cares if it's 99 or 999, it's the same value. Inflation isn't emotional.

cocochanel - Saturday, July 23, 2016 - link

Obama and the Feds had and still have no choice. If they stop printing, the US will go into a deep recession. Who wants that?

There is more to it. Even the mighty US has a tough time competing on a global stage with countries where state capitalism is a religion. Japan and South Korea are prime examples, and China, Brazil and others have been going the same route for some time. Unless the US makes some changes, the situation will only get worse.

stardude82 - Saturday, July 23, 2016 - link

In your analysis labor would be priced accordingly as well. Assuming $10/hr. after tax wages now, it would still take you 25 hrs. of work to buy your hypothetical $99 card.

webdoctors - Monday, July 25, 2016 - link

That's the issue, salaries haven't changed in 10+ years but the cost of living/goods has gone up significantly. The middle class is being left to fight among themselves while the fat cats get richer through their inflation immune assets like Trump towers.

If ppl were making $100/hr then a $600 or $1200 vid card wouldn't raise any eyebrows.

But now you've got the masses in an uproar because they can't buy their precious toy.