QNAP TS-451 Bay Trail NAS Performance Review

by Ganesh T S on July 28, 2014 9:00 AM EST

Hardware Platform and Setup Impressions

The industrial design of the QNAP TS-451 is quite utilitarian. Despite the metal chassis, the drive caddies themselves are made of plastic and feel a bit flimsier than we would like. At the price point that QNAP wants to place the product, consumers would be looking for a premium product with proper metal caddies (like the ones that come with the TS-x70 and the rackmount units). Apart from the main unit, the package consists of the following:

- 2M Cat 5E Ethernet cable

- 90 W external power supply with US power cord

- Getting started guide / warranty card

- Screws for hard drive installation

In terms of chassis I/O, we have a USB 3.0 port in front (beneath the power and backup buttons). On the rear side, we have the power inlet, a USB 3.0 port, two USB 2.0 ports, two GbE ports and an HDMI port. Since we are in the middle of a long-term evaluation (for the virtualization and multimedia capabilities), a teardown hasn't been performed yet, but Legion Hardware disassembled the unit and found two ASMedia ASM1061 SATA to PCIe bridges as well as an Etron EJ168A USB 3.0 host controller (two-port hub chip).

Platform Analysis

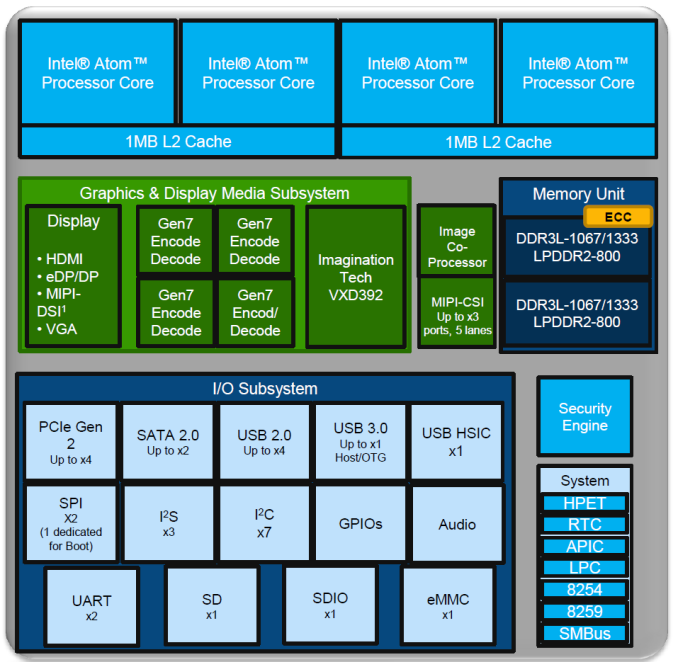

The various components of a Bay Trail-D part (the family to which the Celeron J1800 belongs) are provided in the diagram below.

Obviously, two cores are cut, as are a number of miscellaneous ports, in the Celeron part we are looking at.

As we already discussed in the launch coverage, the USB 3.0 port is connected to the upstream port of the Etron EJ168A, while two PCIe 2.0 x1 lanes are connected to the two ASMedia ASM1061 bridges. According to Legion Hardware's disassembly, the other two PCIe 2.0 x1 lanes are connected to two Intel i210 Ethernet controllers.
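To put the lane allocation in perspective, here is a back-of-the-envelope bandwidth sketch in Python. It relies on the standard PCIe 2.0 figures (5 GT/s per lane with 8b/10b encoding) and on the ASM1061 being a two-port SATA bridge behind a single lane. The assumption that the four bays are split evenly across the two bridges, and the decision to ignore protocol overhead, are ours and not something the teardown confirms.

```python
# Rough link budget for the TS-451's PCIe layout (illustrative only).
PCIE2_TRANSFERS_PER_S = 5_000_000_000  # PCIe 2.0 signalling rate per lane: 5 GT/s
SATA_PORTS_PER_BRIDGE = 2              # ASM1061: one PCIe 2.0 x1 uplink, two SATA ports
GBE_BITS_PER_S = 1_000_000_000         # one Gigabit Ethernet port


def pcie2_lane_mb_per_s() -> float:
    """Usable MB/s of one PCIe 2.0 lane per direction, before protocol overhead."""
    payload_bits = PCIE2_TRANSFERS_PER_S * 8 // 10  # 8b/10b line coding
    return payload_bits // 8 / 1e6


def gbe_mb_per_s(ports: int = 1) -> float:
    """Raw MB/s of `ports` Gigabit Ethernet links, before protocol overhead."""
    return ports * GBE_BITS_PER_S // 8 / 1e6


lane = pcie2_lane_mb_per_s()
# Assumption: two bays hang off each bridge, so two busy drives share one lane.
per_drive_when_shared = lane / SATA_PORTS_PER_BRIDGE
network = gbe_mb_per_s(ports=2)

print(f"PCIe 2.0 x1 lane:        {lane:.0f} MB/s")
print(f"Per drive (lane shared): {per_drive_when_shared:.0f} MB/s")
print(f"2x GbE aggregate:        {network:.0f} MB/s")
```

Under these assumptions, each bridge's uplink alone offers about twice the raw bandwidth of both network ports combined, so the single-lane SATA bridges should not be the limiting factor for traffic served over the network.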

Setup and Usage

QNAP's QTS is one of the more full-featured NAS operating systems that we have seen from off-the-shelf NAS vendors. A diskless unit can be set up in three ways - the first one is to use QNAP's cloud service (at start.qnap.com) and enter the Cloud ID that comes in the getting started guide. The second one is to use QNAP's QFinder utility and set up the unit through that. The third one is to somehow determine the DHCP IP received by the unit and access the unit directly through a web browser. We chose the second option to get things up and running.
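For the third route, one way to find the address the unit picked up over DHCP (short of checking the router's lease table) is to probe the local subnet for hosts that accept connections on the web UI port. The sketch below uses only the Python standard library. The function names are hypothetical, and the subnet and port in the usage comment are placeholders: port 8080 is our assumption for the web UI, so check the getting started guide for the actual value.

```python
import ipaddress
import socket
from typing import Iterator


def is_port_open(host: str, port: int, timeout: float = 0.3) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts and timeouts.
        return False


def find_web_ui(subnet: str, port: int) -> Iterator[str]:
    """Yield each host address in `subnet` that accepts TCP connections on `port`."""
    for addr in ipaddress.ip_network(subnet, strict=False).hosts():
        if is_port_open(str(addr), port):
            yield str(addr)


# Example usage (placeholder values, adjust to your own LAN):
#   for host in find_web_ui("192.168.1.0/24", 8080):
#       print(host)
```

The scan is sequential, so a /24 with mostly silent addresses can take over a minute at the default timeout. Any other device listening on the same port will show up as well, so the result is a list of candidates rather than a definitive answer.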

In terms of usage, the web interface allows multi-tasking and provides a desktop environment within the browser. It is a cross between a mobile OS-type app layout and a traditional desktop environment. From our experience, even though the features are awesome, we did find the UI responsiveness to be a bit on the slower side compared to, say, Asustor or Synology. Some of the relevant features are exposed in the gallery below.

We have not covered higher-level applications and the mobile app ecosystem in the above gallery. Those will be discussed in the upcoming coverage of the virtualization and multimedia capabilities.

The NAS's primary purpose is, of course, the handling of the storage aspects - RAID setup, migration and expansion. Our full test process of starting with one drive, migrating to RAID-1, adding another drive to migrate to RAID-5 and yet another one to expand the RAID-5 volume using a total of 4x 4 TB WD Re drives successfully completed with no issues whatsoever.
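The usable capacity at each step of that migration path follows from the textbook RAID formulas. The short Python sketch below walks through the four stages with 4 TB drives. The function name and level labels are ours, and the figures are nominal decimal terabytes that ignore filesystem and QTS system overhead.

```python
def usable_tb(level: str, drives: int, drive_tb: float) -> float:
    """Nominal usable capacity in TB for a volume built from identical drives."""
    if level == "single":
        if drives != 1:
            raise ValueError("a single-disk volume uses exactly one drive")
        return drive_tb
    if level == "raid1":
        if drives < 2:
            raise ValueError("RAID-1 needs at least two drives")
        return drive_tb  # every drive holds a full mirror copy
    if level == "raid5":
        if drives < 3:
            raise ValueError("RAID-5 needs at least three drives")
        return (drives - 1) * drive_tb  # one drive's worth of space goes to parity
    raise ValueError(f"unsupported level: {level}")


# The migration path from the test: single disk -> RAID-1 -> RAID-5 -> expanded RAID-5.
MIGRATION_STEPS = [("single", 1), ("raid1", 2), ("raid5", 3), ("raid5", 4)]

for level, count in MIGRATION_STEPS:
    print(f"{level:>6} with {count} drive(s): {usable_tb(level, count, 4.0):.0f} TB")
```

With 4 TB drives this works out to 4, 4, 8 and 12 TB across the four stages, so the move from one disk to RAID-1 adds redundancy but no space, and each drive added to the RAID-5 volume contributes its full nominal capacity.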

We simulated drive failure by yanking out one of the drives during data transfer. The operations from the client didn't face any hiccups, but the NAS UI immediately reported the trouble (alerts can be configured). Inserting a new drive allowed for rebuild. There was a bit of an issue with the NAS not allowing for the hot-swap because of some pre-existing partitions on the hard drive that was inserted as new, but the issue couldn't be reliably reproduced. QNAP suggested the use of drives free of partitions for the empty bays / replacements for reliable expansions / rebuilds.

55 Comments

DanNeely - Monday, July 28, 2014

I currently retire my old computers by making an image of their HD and parking it on my NAS so I can recover files and (theoretically) bring the whole system up as a VM or on spare hardware if I needed access to some software on it. As a result, I'd be interested in seeing how well it can run an image of a well used working computer in addition to the standard stripped down OS with a single application setup that a conventional VM hosts. I know Baytrail is much slower than the i7 of my main system; but knowing that all the cruft that accumulates after using a system for a half dozen years won't strangle the NAS would be reassuring.

eliluong - Monday, July 28, 2014

What software do you use to image your old drives? And what VM software do you boot it up in? Is the image usable, given that in the VM all the hardware will be different?

Samus - Monday, July 28, 2014

I just sysprep and capture the image with WDS which can redeploy using WinPE via PXE (network) or USB. I still use a Windows 2008 server for this, but 2012 with Hyper-V gives the flexibility of snapshots before/after sysprepping which helps prevent running into that sealing limitation (3 syspreps before it craps out) of images. I typically refresh my corporate image quarterly with Windows Updates, Office Updates, Adobe Updates, Driver Updates, and profile tweaks.

Hyper-V definitely has the edge on VMware for imaging because of how easy it is to convert a VHD to a universal image. You can go straight from Hyper-V to WDS, and vice versa (I can turn any PC on the network into a VHD through WDS capture, and virtualize, say, a legacy PC running legacy software that's currently on a KVM and annoying someone as they have to switch between it and their main PC). This is still preferable over XP Mode in Windows 7 because of XP Mode's lack of snapshots, poor remote management and inability to backup while the machine is running.

eliluong - Monday, July 28, 2014

Thanks for the informative reply. I'm not in the IT field so this is new to me. I've dabbled with virtual machines, but not in this manner. For a home-use case, where I have a 500GB system running XP that I want to have accessible in a virtualized environment, is what you described capable of that, or is it intended to just run one or two applications?

Samus - Monday, July 28, 2014

You could use VMware, XP Mode or Hyper-V (Windows 2012 Server) to completely emulate a PC. VMware and Hyper-V both offer tools to take an image of a physical machine and turn it into a virtual machine. They do this by stripping the HAL (hardware abstraction layer) and generalizing the image, so the next time it boots up, whether it be on different physical hardware or emulated virtual hardware, it rebuilds the HAL. In Windows 7, you'll see "detecting hardware" on the first boot, in Windows XP, it actually just goes through the second-phase of the XP setup again.

Vista and Windows 7 brought Windows PE (preinstallation environment) into the picture which makes low-level Windows deployment easier. For example, if your XP machine is running IDE mode (not AHCI) it's pretty tricky to get it to run in a Hyper-V machine, but there is a lengthy process for doing so. Windows Vista and newer can dynamically boot between SATA controllers, command configurations, etc. Windows 8 is even better, having the most feature-rich WinPE environment, with native bare-metal recovery (easily restore any system image to any hardware configuration without generalizing) but Windows 8 is hated on in corporate sectors, so us IT engineers are stuck dealing with Windows 7's inferior manageability.

rufuselder - Thursday, October 9, 2014

I like it, although I think there are some better storage options out there... /Rufus from http://www.consumertop.com/best-computer-storage-g...

blaktron - Tuesday, July 29, 2014

Hey, if you do this often enough, what you should do is just run your system off a vhdx right from the get go. It's actually pretty good for a couple of reasons if you don't mind losing a percent or two of random performance and total space.

Here is a link that explains what I am talking about, but Windows 7 and 8 support this, and it works pretty well:

http://technet.microsoft.com/en-us/library/hh82569...

Oyster - Monday, July 28, 2014

Since you asked for feedback, Ganesh:

1) It goes without saying that all your reviews focus too heavily on the hardware. You need to dedicate more time to the software/OS and the app ecosystem for these offerings. I personally have a QNAP TS 470, and can't see myself switching to a competitor unless their offerings consist of basic functionality like "ipkg", myQNAPcloud, Qsync, etc. There's no way for me to reach a decision unless you cover these areas :).

2) For VM performance, I realize that most of the NAS vendors are going to want you to benchmark under ideal conditions (e.g. iSCSI only). But it would be awesome if you can cover non-iSCSI performance.

3) Please, please add an additional test to cover the effectiveness of these devices to recover from failures. This would allow us to figure out how effective the RAID software implementation is.

Thanks.

Kevin G - Monday, July 28, 2014

I'll second the request for some more attention to software and failure recovery.

ganeshts - Monday, July 28, 2014

I already do testing for RAID rebuild after simulating a drive failure by just yanking out one of the disks at random. Rebuild times as well as power consumption numbers for that operation are reported in the review. In case of any issues, I do make a note of what was encountered (for example, in this review, I noted that reinserting a disk with pre-existing partitions might sometimes cause the 'hot-swap' rebuild to not take effect).

What other 'failure recovery' testing do you want to see?