USB 3.2 Gen 2 Portable SSDs Roundup - Featuring the Samsung T7 Touch and the SanDisk Extreme Pro

by Ganesh T S on January 23, 2020 9:00 AM EST

PCMark 10 Storage Bench - Real-World Access Traces

There are a number of storage benchmarks that can subject a device to artificial access traces by varying the mix of reads and writes, the access block sizes, and the queue depth / number of outstanding data requests. We saw results from two popular ones, ATTO and CrystalDiskMark, in a previous section. More serious benchmarks, however, actually replicate access traces from real-world workloads to determine the suitability of a particular device for a particular workload. Real-world access traces may be used for simulating the behavior of computing activities that are limited by storage performance. Examples include booting an operating system or loading a particular game from the disk.

PCMark 10's storage bench (introduced in v2.1.2153) includes four storage benchmarks that use relevant real-world traces from popular applications and common tasks to fully test the performance of the latest modern drives:

- The Full System Drive Benchmark uses a wide-ranging set of real-world traces from popular applications and common tasks to fully test the performance of the fastest modern drives. It involves a total of 204 GB of write traffic.

- The Quick System Drive Benchmark is a shorter test with a smaller set of less demanding real-world traces. It subjects the device to 23 GB of writes.

- The Data Drive Benchmark is designed to test drives that are used for storing files rather than applications. These typically include NAS drives, USB sticks, memory cards, and other external storage devices. The device is subjected to 15 GB of writes.

- The Drive Performance Consistency Test is a long-running and extremely demanding test with a heavy, continuous load for expert users. In-depth reporting shows how the performance of the drive varies under different conditions. This writes more than 23 TB of data to the drive.

Despite the data drive benchmark appearing most suitable for testing direct-attached storage, we opted to run the full system drive benchmark as part of our evaluation flow. Many of us use portable flash drives as boot drives and storage for Steam games. These types of use-cases are addressed only in the full system drive benchmark.
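To give a sense of scale, the write volumes quoted above for the four benchmarks can be put side by side. The snippet below is only a rough sketch: it takes 1 TB as 1000 GB (an assumption on our part) and treats the "more than 23 TB" figure for the consistency test as a lower bound.

```python
# Write volumes quoted in the benchmark descriptions above, in GB.
# Assumption: 1 TB = 1000 GB. The consistency test figure is a lower
# bound, since the description says "more than 23 TB".
WRITE_VOLUME_GB = {
    "Full System Drive": 204,
    "Quick System Drive": 23,
    "Data Drive": 15,
    "Drive Performance Consistency": 23_000,
}


def relative_to(baseline, volumes=WRITE_VOLUME_GB):
    """Return each benchmark's write volume as a multiple of the baseline's."""
    base = volumes[baseline]
    return {name: gb / base for name, gb in volumes.items()}


ratios = relative_to("Full System Drive")
for name, ratio in ratios.items():
    print(f"{name:32s} {ratio:8.2f}x")
```

Seen this way, the full system drive benchmark writes roughly nine times as much data as the quick variant, while the consistency test is at least two orders of magnitude heavier still.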

The Full System Drive Benchmark comprises 23 different traces. For the purpose of presenting results, we classify them under five different categories:

- Boot: Replay of storage access trace recorded while booting Windows 10

- Creative: Replay of storage access traces recorded during the start up and usage of Adobe applications such as Acrobat, After Effects, Illustrator, Premiere Pro, Lightroom, and Photoshop.

- Office: Replay of storage access traces recorded during the usage of Microsoft Office applications such as Excel and PowerPoint.

- Gaming: Replay of storage access traces recorded during the start up of games such as Battlefield V, Call of Duty Black Ops 4, and Overwatch.

- File Transfers: Replay of storage access traces (Write-Only, Read-Write, and Read-Only) recorded during the transfer of data such as ISOs and photographs.

PCMark 10 also generates an overall score, bandwidth, and average latency number for quick comparison of different drives. The sub-sections in the rest of the page reference the access traces specified in the PCMark 10 Technical Guide.
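The five-way classification can be sketched as a simple lookup table. The short trace names are the ones quoted in the sub-sections of this page. The per-trace bandwidth values are made up purely for illustration, and the arithmetic mean per category is our own simplification for this sketch, not PCMark 10's scoring method.

```python
# Trace names grouped as they are quoted on this page. "exc" is listed
# under both Creative and Office in the text, so it appears twice here.
TRACE_CATEGORIES = {
    "Boot": ["boo"],
    "Creative": ["sacr", "saft", "sill", "spre", "slig", "sps",
                 "aft", "exc", "ill", "ind", "psh", "psl"],
    "Office": ["exc", "pow"],
    "Gaming": ["bf", "cod", "ow"],
    "File Transfers": ["cp1", "cp2", "cp3", "cps1", "cps2", "cps3"],
}


def unique_traces(categories=TRACE_CATEGORIES):
    """Return the sorted set of distinct trace names across all categories."""
    return sorted({t for traces in categories.values() for t in traces})


def category_means(bandwidth_mbps, categories=TRACE_CATEGORIES):
    """Arithmetic mean of per-trace bandwidth for each category.

    Traces missing from bandwidth_mbps are skipped; categories with no
    data at all are left out of the result.
    """
    means = {}
    for category, traces in categories.items():
        values = [bandwidth_mbps[t] for t in traces if t in bandwidth_mbps]
        if values:
            means[category] = sum(values) / len(values)
    return means


# Hypothetical bandwidth figures (MBps), not measurements from this review.
sample = {"boo": 110.0, "bf": 200.0, "cod": 180.0, "ow": 220.0}

print(len(unique_traces()))
print(category_means(sample))
```

Counting distinct names in the table gives 23, which matches the number of traces in the full system drive benchmark.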

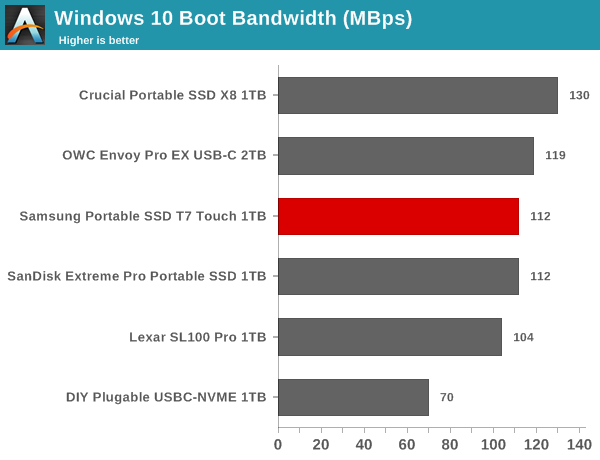

Booting Windows 10

The read-write bandwidth recorded for each drive in the boo access trace is presented below.

The Crucial X8 appears to be the best of the lot for this workload, while the other new drives are clustered between 104 MBps and 120 MBps. Given the amount of data traffic involved, the gulf is unlikely to result in a major difference in the boot times with various drives.

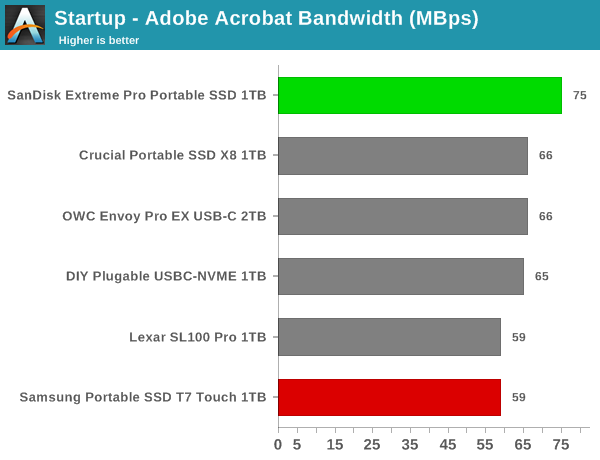

Creative Workloads

The read-write bandwidth recorded for each drive in the sacr, saft, sill, spre, slig, sps, aft, exc, ill, ind, psh, and psl access traces is presented below.

The SanDisk Extreme Pro Portable SSD offers the best startup times overall for different programs used in creative workloads. For usage after startup, the drive is a middling performer, though it comes out on top in a couple of relevant traces. The disappointing aspect is that the T7 Touch doesn't really shine as might be expected.

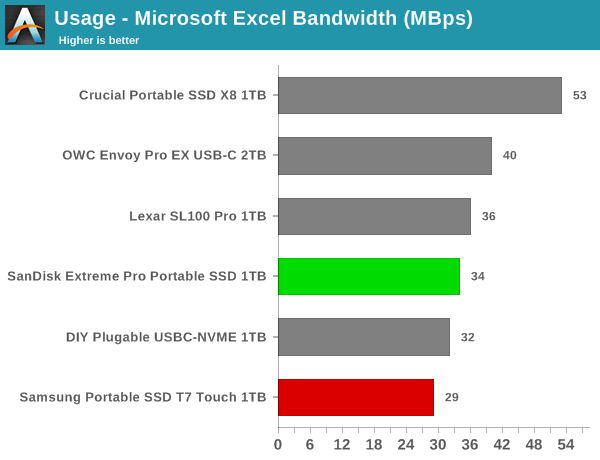

Office Workloads

The read-write bandwidth recorded for each drive in the exc and pow access traces is presented below.

The X8 wins out in the office workloads, with the SanDisk and Samsung units coming in the bottom half of the graph.

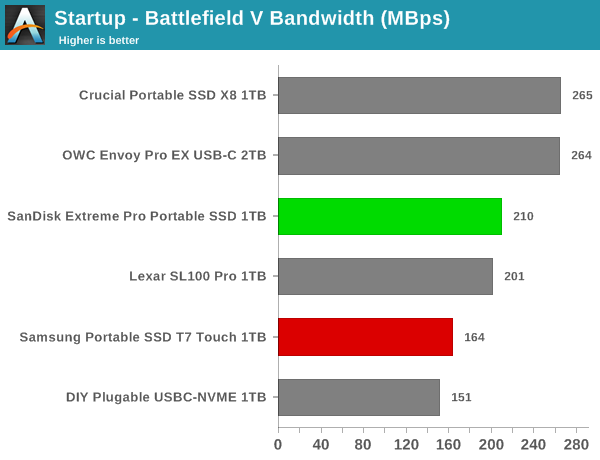

Gaming Workloads

The read-write bandwidth recorded for each drive in the bf, cod, and ow access traces is presented below.

The X8 again wins out here, with the Extreme Pro in the middle of the pack and the T7 Touch in the lower half.

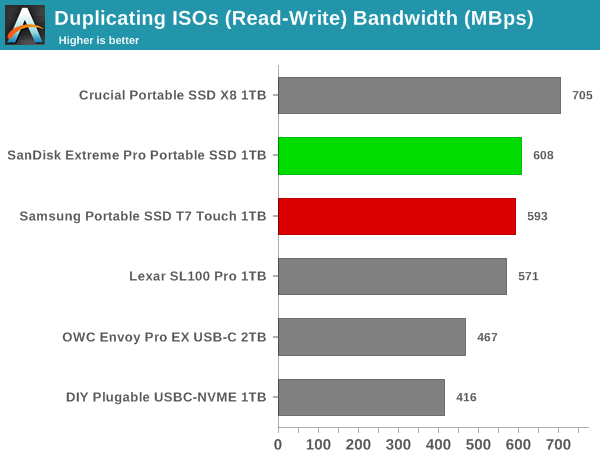

File Transfer Workloads

The read-write bandwidth recorded for each drive in the cp1, cp2, cp3, cps1, cps2, and cps3 access traces is presented below.

The X8 and the OWC Envoy Pro EX keep coming out on top in the file transfer cases. The Extreme Pro mostly remains in the top half, with the T7 Touch right behind it.

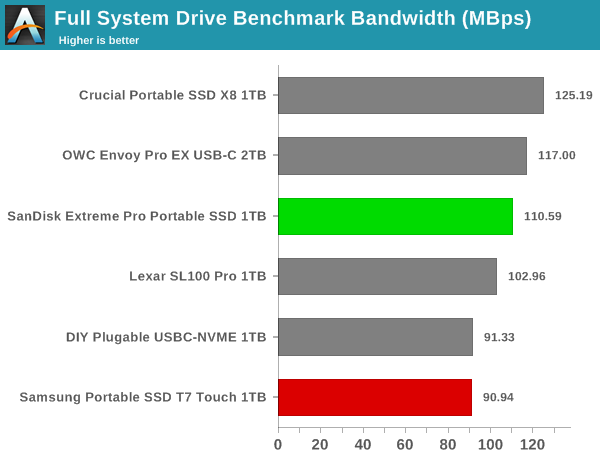

Overall Scores

PCMark 10 reports an overall score based on the observed bandwidth and access times for the full workload set. The score, bandwidth, and average access latency for each of the drives are presented below.

With an overall PCMark 10 full system drive benchmark score of 561, the T7 Touch comes in last among the considered DAS devices. The Extreme Pro scores a more respectable 683, but the Crucial X8 leads the pack with a 765.
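For a rough sense of the gaps between these three scores, the relative differences can be worked out directly. This is plain arithmetic on the numbers quoted above. Whether the score scales linearly with perceived responsiveness is not something this sketch assumes or shows.

```python
# Overall full system drive benchmark scores quoted in the text above.
SCORES = {
    "Samsung T7 Touch": 561,
    "SanDisk Extreme Pro": 683,
    "Crucial X8": 765,
}


def percent_ahead(score, baseline):
    """Percentage by which score exceeds baseline."""
    return (score - baseline) / baseline * 100.0


# Use the lowest score among the three as the reference point.
baseline = min(SCORES.values())
for drive, score in sorted(SCORES.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{drive:22s} {score:4d}  +{percent_ahead(score, baseline):.1f}%")
```

By this measure, the Crucial X8 is a little over 36% ahead of the T7 Touch, and the Extreme Pro is just under 22% ahead.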

38 Comments

zebrax2 - Thursday, January 23, 2020 - link

I don't know about the others but I'm not a fan of having the benchmarks hidden inside a drop down box unless selected. I also feel that for some of the benchmarks 1 or 2 charts containing all the data, e.g. ATTO and CrystalDiskMark, would be better instead of the screenshots.

chaos215bar2 - Thursday, January 23, 2020 - link

Agreed. Did I miss something, or are there no actual direct comparisons between the drives (aside from the feature table at the end)? This reads like 6 separate reviews, where I have to keep messing with drop downs to follow each one. It’s all but impossible to follow in a mobile browser. On desktop, I could at least open the screenshots side by side.

sonny73n - Friday, January 24, 2020 - link

Who reads news on desktop anymore? Unless working on PC and want to have a peek at what’s on, nobody would give up the comfort of reading while lying down on the sofa or in bed with a mobile device. Therefore, drop down comparison is useless to most readers.

s.yu - Saturday, January 25, 2020 - link

Sorry, I visit Anandtech almost exclusively on my PC.

dontlistentome - Sunday, February 2, 2020 - link

This forum is increasingly populated by people with zero concept that other people may think or do things differently to them. Guess it's a microcosm of the wider no-platforming world. My advice? Spend a morning learning keyboard shortcuts and you'll understand why we oldies still prefer to browse on desktop rather than mobile when doing anything other than *really* casual browsing.

bigboxes - Monday, February 3, 2020 - link

Yeah, reading this on mobile platform sucks. Desktop is way more comfortable. Then again, my workstation is fairly ergonomic (and badass!). Get off the couch if you want to live to old age.

Sivar - Thursday, February 6, 2020 - link

I find mobile devices to be extremely annoying for web browsing. Small screen, slow CPU, extremely limited browser plugins, frustrating data entry, more difficult copy/paste. I have a tablet and smart phone, but my web browsing on them tends to be light and often only directs me on what to read later on my "real" device.

JanW1 - Monday, January 27, 2020 - link

That was my first thought on this review as well. Plus, the scales of the charts hidden behind the dropdown menu are all different for no good reason. This almost looks like every effort was made to prevent readers from comparing the drives. Just let the throughput scale on all charts go to 1000MB/s and the temperature scale to 70°. This fits the data from all drives nicely and readers can see the differences in a glimpse.

alphasquadron - Thursday, January 23, 2020 - link

Yeah I agree as well. Don't think it was like this previously or maybe it was a different reviewer.

ganeshts - Thursday, January 23, 2020 - link

Would it help you if I were to keep the 'Expand All' option as the default and allow readers to use the drop down to 'compress' it down to 1 graph / make the analysis text visible along with? As for the ATTO / CDM 'graphs' instead of 'screenshots' - the aspect I need to trade off with is the number of data points. For example, CDM has 12 sets per drive (or 24 if you include the IOPS version also). ATTO has more than 20 sets * 2 (R/W). That would be 64 graphs. It doesn't make sense to have that many graphs for two synthetic benchmarks.