GeForce GTX 970: Correcting The Specs & Exploring Memory Allocation

by Ryan Smith on January 26, 2015 1:00 PM EST

Segmented Memory Allocation in Software

So far we’ve talked about the hardware. Having finally explained the hardware basis of segmented memory, we can begin to understand the role software plays, and how software allocates memory between the two segments.

From a low-level perspective, video memory management under Windows is handled jointly by the operating system and the video drivers. Strictly speaking, Windows controls video memory management (this being one of the big changes of Windows Vista and the Windows Display Driver Model), while the video drivers provide significant input by hinting at how things should be laid out.

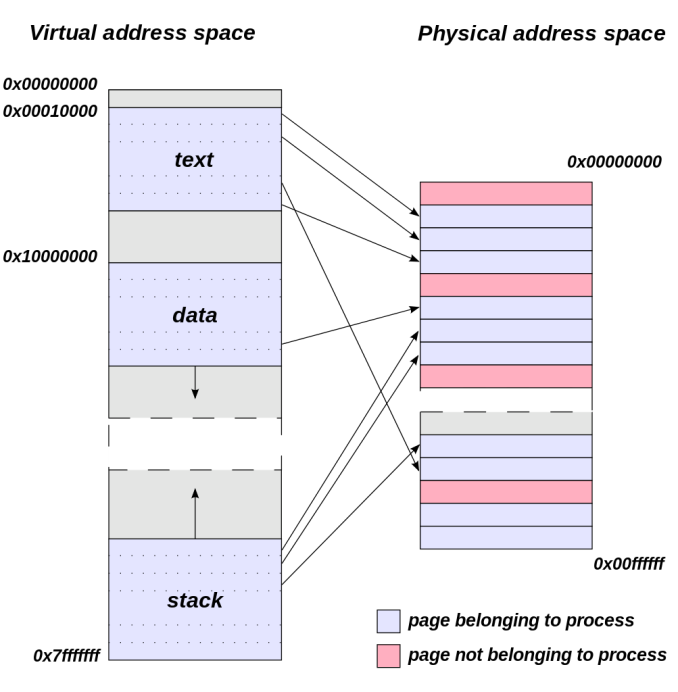

Meanwhile, from an application’s perspective all video memory and its address space is virtual. This means that applications are writing to their own private space, blissfully unaware of what else is in video memory and where it may be, or for that matter where in memory (or even which memory) they are writing. As a result of this memory virtualization, it falls to the OS and video drivers to decide where in physical VRAM to allocate memory requests, and for the GTX 970 in particular, whether to put a request in the 3.5GB segment, the 512MB segment, or in the worst case scenario system memory over PCIe.

Virtual Address Space (Image Courtesy Dysprosia)
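To make the virtualization point concrete, here is a minimal sketch of a per-application page table, assuming a hypothetical 64KB page size and hypothetical pool names ("fast", "slow", "sysmem"). It is not how Windows or NVIDIA's driver actually store mappings; it only shows that the application deals in virtual addresses while the OS/driver decides which physical pool backs each page.

```python
from dataclasses import dataclass, field

# Hypothetical page granularity, chosen only for illustration.
PAGE_SIZE = 64 * 1024


@dataclass
class AppAddressSpace:
    """One application's private virtual address space (illustrative only)."""

    # virtual page number -> (physical pool, physical page number)
    page_table: dict = field(default_factory=dict)

    def map_page(self, vpage: int, pool: str, ppage: int) -> None:
        # Called by the OS/driver side; the application never sees `pool`.
        self.page_table[vpage] = (pool, ppage)

    def translate(self, vaddr: int) -> tuple:
        # Split the virtual address into page number and offset, then look up
        # which pool and physical page currently back that virtual page.
        vpage, offset = divmod(vaddr, PAGE_SIZE)
        pool, ppage = self.page_table[vpage]
        return pool, ppage * PAGE_SIZE + offset
```

Two applications can use the same virtual address and still land in different physical memory, for example one in the fast segment and one in the slow segment, without either being aware of it.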

Without going so far as to rehash the entire theory of memory management and caching, the goal of memory management in the case of the GTX 970 is to allocate resources over the entire 4GB of VRAM such that high-priority items end up in the fast segment and low-priority items end up in the slow segment. To do this, NVIDIA directs the first 3.5GB of memory allocations to the faster 3.5GB segment, and only for memory allocations beyond 3.5GB do they turn to the 512MB segment, as there’s no benefit to using the slower segment so long as there’s available space in the faster segment.
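The fast-first ordering described above can be sketched as a simple placement loop. This is an illustrative model, not NVIDIA's driver logic: it assumes an otherwise empty card, sizes in MB, and that an allocation is never split across segments (a simplification; real allocations are managed at finer granularity).

```python
FAST_MB = 3584  # the 3.5GB segment
SLOW_MB = 512   # the 512MB segment


def place(requests_mb):
    """Assign each allocation (in MB) to 'fast', 'slow' or 'sysmem', fast-first.

    Simplified model: allocations are never split across segments.
    """
    fast_free, slow_free = FAST_MB, SLOW_MB
    placements = []
    for size in requests_mb:
        if size <= fast_free:
            # Prefer the fast segment while it still has room.
            fast_free -= size
            placements.append("fast")
        elif size <= slow_free:
            # Only once the fast segment is full is the slow segment used.
            slow_free -= size
            placements.append("slow")
        else:
            # Worst case: spill to system memory over PCIe.
            placements.append("sysmem")
    return placements
```

With nine 512MB requests, the first seven fill the 3.5GB segment, the eighth lands in the 512MB segment, and the ninth has to spill to system memory.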

The complex part of this process occurs once both memory segments are in use, at which point NVIDIA’s heuristics come into play to try to determine which resources to allocate to which segments. How NVIDIA does this is very much a “secret sauce” scenario for the company, but from a high level, identifying the type of resource and when it was last used are good ways to figure out where to send a resource. Frame buffers, render targets, UAVs, and other intermediate buffers, for example, are the last thing you want to send to the slow segment; meanwhile textures, resources not in active use (e.g. cached), and resources belonging to inactive applications would be great candidates to send off to the slower segment. Based on how NVIDIA describes the process, we suspect there are even per-application optimizations in use, though NVIDIA can clearly handle generic cases as well.
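Since NVIDIA's actual heuristics are not public, the following is only a hypothetical sketch of the kind of rule described above: latency-critical buffers are never demoted, resources of inactive applications go first, then textures before other resources, with the least recently used ones first. The resource kinds, the priority values, and the `choose_for_slow_segment` function are all invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical set of resource kinds that should never be demoted.
CRITICAL = {"framebuffer", "render_target", "uav", "intermediate"}


@dataclass
class Resource:
    name: str
    kind: str
    size_mb: int
    last_used_frame: int
    app_active: bool = True


def choose_for_slow_segment(resources, slow_capacity_mb, current_frame):
    """Pick the least sensitive resources to place in the slow segment."""

    def priority(r):
        # Lower tuples sort first, i.e. are demoted first.
        if not r.app_active:
            return (-1, 0)  # inactive applications' resources go first
        age = current_frame - r.last_used_frame
        kind_rank = 1 if r.kind == "texture" else 2
        return (kind_rank, -age)  # older (less recently used) first

    chosen, used = [], 0
    for r in sorted(resources, key=priority):
        if r.kind in CRITICAL:
            continue  # keep latency-critical buffers in the fast segment
        if used + r.size_mb <= slow_capacity_mb:
            chosen.append(r.name)
            used += r.size_mb
    return chosen
```

In this sketch a render target is never chosen, while a background application's texture and a texture unused for many frames are the first to move, which mirrors the priorities described in the text rather than any documented NVIDIA behavior.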

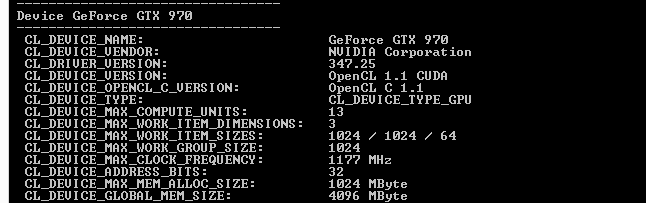

From an API perspective this is applicable to both graphics and compute, though it’s a safe bet that graphics is the more easily and accurately handled of the two thanks to the rigid nature of graphics rendering. Direct3D, OpenGL, CUDA, and OpenCL all see and have access to the full 4GB of memory available on the GTX 970, and from the perspective of the applications using these APIs the 4GB of memory appears uniform, with the segments abstracted away. This is also why applications attempting to benchmark the memory in a piecemeal fashion will not find slow memory areas until the end of their run, as their earlier allocations will be in the fast segment and only spill over to the slow segment once the fast segment is full.

GeForce GTX 970 Addressable VRAM

| API | Memory |
|---|---|
| Direct3D | 4GB |
| OpenGL | 4GB |
| CUDA | 4GB |
| OpenCL | 4GB |
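The point about piecemeal benchmarks can be illustrated with a small simulation. This sketch assumes an otherwise empty card, fast-first placement, and a hypothetical benchmark that allocates 128MB chunks in order; on a real system other allocations (the desktop, other applications) would already occupy part of the fast segment, so the slow chunks would show up somewhat earlier.

```python
FAST_MB, SLOW_MB = 3584, 512
CHUNK_MB = 128  # hypothetical benchmark chunk size


def benchmark_chunks(total_mb=FAST_MB + SLOW_MB, chunk_mb=CHUNK_MB):
    """Return the segment each successive chunk lands in under fast-first placement."""
    fast_free, slow_free = FAST_MB, SLOW_MB
    segments = []
    for _ in range(total_mb // chunk_mb):
        if chunk_mb <= fast_free:
            fast_free -= chunk_mb
            segments.append("fast")
        elif chunk_mb <= slow_free:
            slow_free -= chunk_mb
            segments.append("slow")
        else:
            segments.append("sysmem")
    return segments


segments = benchmark_chunks()
print(segments.index("slow"))  # index of the first chunk that lands in the slow segment
```

Of the 32 chunks that make up 4GB, the first 28 fill the 3.5GB segment, and only the last four land in the 512MB segment, so a benchmark that measures each chunk in turn sees slow memory only at the very end of its run.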

The one remaining unknown element here (and something NVIDIA is still investigating) is why some users have been seeing total VRAM allocation top out at 3.5GB on a GTX 970, but go to 4GB on a GTX 980. Again, from a high-level perspective all of this segmentation is abstracted, so games should not be aware of what’s going on under the hood.

Overall, then, the role of software in memory allocation is relatively straightforward, since it’s layered on top of the segments. Applications have access to the full 4GB, and because application memory space is virtualized, the existence and usage of the memory segments are abstracted from the application, with the physical memory allocation handled by the OS and driver. Only after 3.5GB is requested (enough to fill the entire 3.5GB segment) does the 512MB segment get used, at which point NVIDIA attempts to place the least sensitive/important data in the slower segment.

398 Comments


piiman - Saturday, January 31, 2015

"not ROP- nor memory bandwidth-bound, and the .5 GB of slower RAM hasn't been shown to create a problem, from what I've seen."

Go buy Dying Light and watch what happens the second it goes over 3.5GB. I can tell the second it does because the game begins to stutter, going from 150 fps to 2 fps. I have to lower settings, and can't use triple-monitor Surround, to keep it under 3.5GB, so I lose LOTS of graphical goodness. Below 3.5GB the game runs great; go over and it makes you want to throw it out the window.

gw74 - Thursday, January 29, 2015


Nope. A clear case for a refund or exchange perhaps, if you even want one, at most.

adamrussell - Friday, January 30, 2015

Doubtful. The most you could ask for is a full refund, and is that what you really want?

ol1bit - Monday, February 2, 2015

Class action? Are you nuts? Really, in the world just a few years ago, no one would even care. You are still getting the same kick-ass card the benchmarks ran, for a kick-ass price. You cannot expect it to be the same as the 980?

Stas - Saturday, February 7, 2015

They better give us free shit. I don't care about some lawyers collecting millions and sending me a $20 check. I'd take a discounted trade-up to a 980 or maybe a $50 coupon toward any nVidia product purchase, valid for 2 years.

StevoLincolnite - Tuesday, January 27, 2015

The big issue is... these specifications have been KNOWN for a long time, yet nVidia did nothing to notify ANYONE about the inaccuracies that *every* review site posted. It was only once they were "caught out" that they became apologetic.

This would probably be a good moment for AMD to launch its 300 series of cards to capitalize on this fumble. I doubt they will though; they haven't exactly been quick to react for many years.

HisDivineOrder - Tuesday, January 27, 2015

More likely, AMD will announce a re-re-release of the 7970..er... 7970Ghz...er...R9 280X line as the R9 284X line with a "Never Settle Not Ever No Way" bundle that includes three of the following: Deus Ex Human Revolution, Sleeping Dogs (non-Definitive), Hitman Absolution, Alien Isolation, Saints Row 4, Saints Row: Gat Out of Hell, a ship for Star Citizen, or Lego Batman 3.

Meanwhile, the R9 285X may finally arrive by the time nVidia finishes filling out the Maxwell stack with the GM200.

Kutark - Tuesday, January 27, 2015

Well played sir, I got a nice chuckle out of that post ;-)

Yojimbo - Tuesday, January 27, 2015

Four months ago would have been a better time for AMD to launch their 300 series cards, but AMD doesn't have any 300 series cards to launch at the moment and if the rumors are true, won't have them until June.