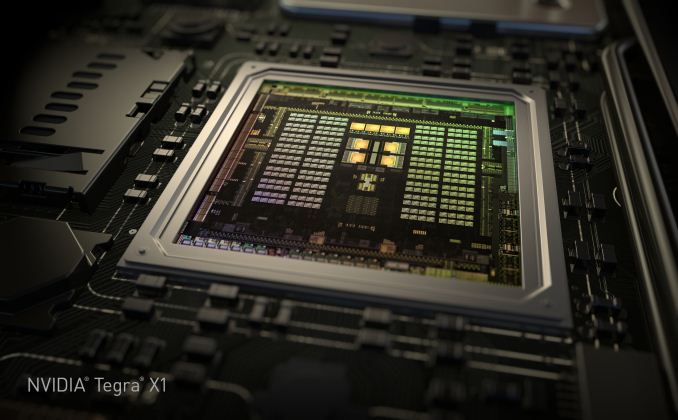

NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST

Posted in: SoCs, Arm, Project Denver, Mobile, 20nm, GPUs, Tablets, NVIDIA, Cortex A57, Tegra X1

In the past few years, we’ve seen NVIDIA shift their mobile strategy dramatically. With Tegra 2 and 3, we saw multiple design wins in both the smartphone and tablet spaces. These SoCs had GPUs that were quite unlike what we saw in NVIDIA’s desktop and laptop GPUs, with a reduced feature set and a unique architecture. However, with Tegra K1 we saw a distinct shift in NVIDIA’s SoC strategy, as the Tegra K1 was the first mobile SoC to achieve parity in GPU architecture with desktop GPUs. In the case of the Tegra K1, this meant a single Kepler SMX, which made for truly incredible GPU performance. However, in the time since, we’ve seen companies like Apple release new SoCs such as the A8X, which managed to deliver largely similar performance at lower power.

NVIDIA of course is never content to stay idle for too long and let themselves be surpassed, which has led to the company developing their Tegra SoCs on a roughly yearly cadence. In NVIDIA’s development timeline, 2014 brought us the Tegra K1-32, the company’s first SoC to integrate a modern high-performance GPU architecture (Kepler), and later on the TK1-64, a K1 with NVIDIA’s own in-house developed ARMv8 Denver CPU in place of the 32-bit ARM Cortex A15 CPU.

NVIDIA's GTC 2014 Tegra Roadmap

2014 also brought a revision to NVIDIA’s public Tegra roadmap: for 2015 NVIDIA would bump the previously announced Parker SoC and release a new SoC in its place, Erista. This schedule change ended up being quite a surprise, because NVIDIA had up until this time released their roadmaps roughly 2 years out, whereas Erista was put on the map less than a year before it was to be announced. More unusually, NVIDIA offered up minimal details of Erista; it would be powered by a Maxwell GPU, with no additional information on the CPU or manufacturing process. Parker on the other hand – NVIDIA’s planned Denver + Maxwell + 16nm FinFET part – fell off the schedule, with NVIDIA not officially canceling it but also saying little else about it.

Now in 2015 and with the launch of the Tegra X1, we can finally begin putting the picture together. Erista as it turns out is something of a rapid release product for NVIDIA; what had been plans to produce a 16nm FF part in 2015 became plans to produce a 20nm part, with Erista to be that part. To pull together Erista, NVIDIA would go for a quick time-to-market approach in SoC design, pairing up a Maxwell GPU with ARM Cortex A57 & A53 CPUs, to be produced on TSMC’s 20nm SoC process.

CPU

We’ll kick off our look at Erista with its CPUs, where the configuration is one of the bigger surprises. While Tegra K1 had a version with NVIDIA’s custom Denver core for the CPU, NVIDIA has elected to use ARM’s Cortex A57 and A53 in the Tegra X1, a choice that NVIDIA representatives stated was made for time-to-market reasons. The A57 CPU cluster has 2MB of L2 cache shared across the four cores, with 48KB/32KB L1s (I$+D$) per core. The A53 cluster has 512KB of L2 cache shared by all four cores and 32KB/32KB L1s (I$+D$) per core.

However, rather than the fairly standard big.LITTLE configuration one might expect, NVIDIA continues to use their own unique system. This includes a custom interconnect rather than ARM’s CCI-400, and cluster migration rather than global task scheduling, the latter of which would expose all eight cores to userspace applications. It’s important to note that NVIDIA’s solution is cache coherent, so this system won't suffer from the power/performance penalties that one might expect given experience with previous SoCs that use cluster migration.

Although cluster migration is usually a detriment to power and performance (especially without a cache coherent interconnect), NVIDIA claims that Tegra X1 significantly outperforms Samsung System LSI’s Exynos 5433 in CPU performance per watt, delivering 1.4x the performance at the same power or the same performance at half the power. It's likely that this difference comes from optimization of the implemented logic and from differences between the Samsung and TSMC 20nm processes rather than from differences in thread management. NVIDIA also continues to use System EDP (Electrical Design Point) management to control throttling and turbo rather than ARM's IPA (Intelligent Power Allocation) drivers. In general, while the CPU architecture is ARM's reference design, everything else is distinctly NVIDIA in design.
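To put NVIDIA’s two claims on a common footing, the short Python sketch below converts each into a performance-per-watt ratio. The baseline of 1.0 performance at 1.0 power is an arbitrary normalized stand-in for the Exynos 5433, not a measurement, and the function name is our own.

```python
def perf_per_watt(performance: float, power: float) -> float:
    """Efficiency in normalized performance units per normalized watt."""
    return performance / power


# Hypothetical normalized baseline for the Exynos 5433 (not measured data).
baseline = perf_per_watt(1.0, 1.0)

# NVIDIA's claim 1: 1.4x the performance at the same power.
iso_power = perf_per_watt(1.4, 1.0)

# NVIDIA's claim 2: the same performance at half the power.
iso_perf = perf_per_watt(1.0, 0.5)

print(f"iso-power efficiency advantage:       {iso_power / baseline:.1f}x")
print(f"iso-performance efficiency advantage: {iso_perf / baseline:.1f}x")
```

The two claims work out to different efficiency ratios (1.4x and 2.0x), which is what one would expect if they are taken at different points on the voltage/frequency curve.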

All of this of course raises the question of where Denver is, and what is to happen to it. The use of the ARM Cortex A57 and A53, as NVIDIA tells it, was a time-to-market decision: NVIDIA could bring an off-the-shelf Cortex-based SoC to market sooner than they could another Denver SoC. On the surface there is at least some evidence to support this, as NVIDIA would be able to buy a 20nm design from ARM versus spending the time porting Denver over from TSMC’s 28nm process to their 20nm process. At the same time, however, integrating an ARM CPU core into an SoC is not an easy task – logic LEGO this is not – so it’s hard to say whether this actually saved NVIDIA much time.

In any case, much like the still-in-the-dark Parker design, NVIDIA is holding their cards close to their chest and hinting that Denver will still appear in future-generation products. If Parker is still part of NVIDIA’s plans – and they are without a doubt working on some kind of 16nm FF Tegra SoC – then we may still see Denver as part of the original Parker plan. Otherwise, while there’s no reason not to believe NVIDIA about the fate of future Denver SoCs, at this point it’s anyone’s guess when we might see it again.

Uncore

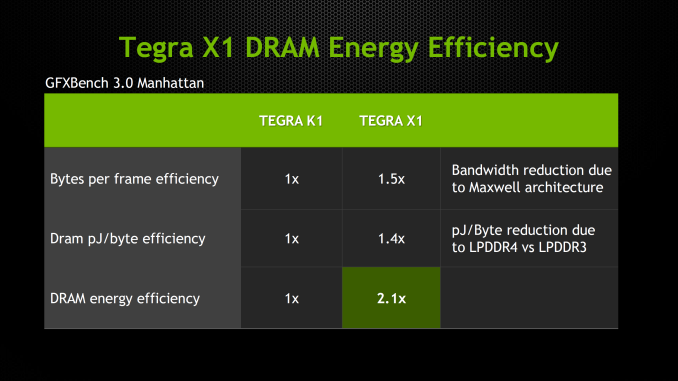

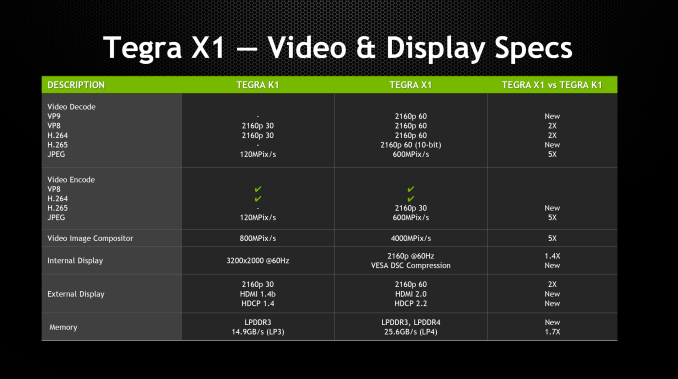

Outside of the CPU and GPU, NVIDIA has also dramatically improved the rest of Tegra X1 in comparison with Tegra K1. We see a move from 64-bit wide LPDDR3 to 64-bit wide LPDDR4 on the memory interface, which improves peak memory bandwidth from 14.9 GB/s to 25.6 GB/s and improves power efficiency by around 40%. In addition, the maximum internal display resolution moves from 3200x2000 at 60 Hz to 3840x2160 at 60 Hz with support for VESA’s display stream compression. For external displays, we see a significant improvement with support for HDMI 2.0 and HDCP 2.2, which means that 4K60 is supported in contrast with the Tegra K1, which only supported 4K30.
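The bandwidth figures above follow from bus width and transfer rate. The Python sketch below reproduces them under the assumption that the two SoCs run their memory at 1866 MT/s (LPDDR3) and 3200 MT/s (LPDDR4) respectively; those data rates are not stated in this article and are chosen here because they match the quoted numbers. The function name is our own.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: float) -> float:
    """Peak bandwidth in GB/s (decimal): bytes per transfer x transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mts * 1e6 / 1e9


# Assumed data rates (not from the article): LPDDR3-1866 and LPDDR4-3200.
tegra_k1 = peak_bandwidth_gbs(bus_width_bits=64, transfer_rate_mts=1866)
tegra_x1 = peak_bandwidth_gbs(bus_width_bits=64, transfer_rate_mts=3200)

print(f"Tegra K1 (64-bit LPDDR3): {tegra_k1:.1f} GB/s")
print(f"Tegra X1 (64-bit LPDDR4): {tegra_x1:.1f} GB/s")
print(f"Increase: {tegra_x1 / tegra_k1:.2f}x")
```

Because the bus width stays at 64 bits, the entire gain under these assumptions comes from the higher transfer rate.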

The ISP is largely similar to the one we see in the Tegra K1 when it comes to feature set, but the JPEG encode and decode rate is now five times as fast, going from 120 MP/s to 600 MP/s. For video encode and decode, we see support for 4K60 H.265 and VP9, with 10-bit color supported for H.265 decode. In addition to support for new codecs in the hardware blocks, we see that H.264 and VP8 now support 4K60, a notable improvement over the 4K30 that was the maximum for Tegra K1. We also see that the storage controller now supports eMMC 5.1 for faster storage performance.
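For a rough sense of scale, the Python sketch below works out the raw pixel throughput of the display and video modes mentioned above, along with the JPEG speedup. It counts pixels only and ignores bit depth, chroma subsampling, and blanking; the function and variable names are our own.

```python
def pixel_rate_mps(width: int, height: int, fps: int) -> float:
    """Raw pixel throughput in megapixels per second (1 MP = 1e6 pixels)."""
    return width * height * fps / 1e6


modes = {
    "3200x2000 @ 60 Hz (K1 internal display max)": (3200, 2000, 60),
    "3840x2160 @ 30 Hz (4K30)": (3840, 2160, 30),
    "3840x2160 @ 60 Hz (4K60)": (3840, 2160, 60),
}

for name, (width, height, fps) in modes.items():
    print(f"{name}: {pixel_rate_mps(width, height, fps):.1f} MP/s")

# JPEG encode/decode rates quoted in the article, in MP/s.
jpeg_speedup = 600 / 120
print(f"JPEG speedup: {jpeg_speedup:.0f}x")
```

Moving from 4K30 to 4K60 doubles the number of pixels the display and video blocks have to move every second.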

194 Comments


Morawka - Monday, January 5, 2015 - link

omg i'm gonna go buy some nvidia stock now. Not because of the X1, but because of the Automotive platforms.

iwod - Monday, January 5, 2015 - link

That is some impressive GPU performance / watt. However I think LPDDR4 with double the bandwidth do help the performance on X1. But even with the accounted difference the A8X GPU still does not hold up against Maxwell, assuming Nvidia benchmarks can be trusted.

It should be noted that the A8X is partly a custom GPU from Apple. Since it doesn't come directly off IMG, and it is likely not as power efficient as possible.

junky77 - Monday, January 5, 2015 - link

Where's AMD in all this thing..

chizow - Monday, January 5, 2015 - link

They're not in the discussion, blame Dirk "Not Interested in Netbooks" Meyer for that one.

junky77 - Monday, January 5, 2015 - link

:(

But all the other stuff showed here - vehicles and stuff (not that I think there will be a good AI in 2017, but still)

GC2:CS - Monday, January 5, 2015 - link

This chip looks awesome, but so was all tegras before.

Like the tegra k1, a huge annoncement supposed to bring "revolution" to mobile graphics computing. That turned out to be a power hog, pulling so much power it was absolutelly unsuitable for any phone and it's also throotling significally.

This looks like the same story yet aggain, lots of marketing talk, lots of hype, no promise delivered.

pSupaNova - Monday, January 5, 2015 - link

Nothing wrong with the Tegra K1 in both forms, I have a Shield Tablet and Nexus 9.

I have a Program that I changed so I can run https://www.shadertoy.com/ shaders natively and both tablets are impressively fast.

Nvidia just need to make sure they run on the same process as Apple and they will have the fastest SOC CPU and GPU wise.

techconc - Tuesday, January 6, 2015 - link

Apple is expected to move to 14nm for the A9. That's just speculation, but given Apple position in the supply chain as opposed to nVidia's I would be surprised if nVidia was able to be on the same process. With regards to CPUs, since nVidia has regressed from the Denver core to the standard reference designs, I wouldn't expect nVidia to have any CPU advantage. Certainly not with single threaded apps anyway. As for the GPU, the Rogue 7 series appears to be more scalable with up to 512 "cores". If the X1 chip has any GPU advantage it would not be for technical reasons. Rather it would be because Apple chose to scale up to that level. Given that Apple has historically chosen rather beefy GPUs, I would again be surprised if they allowed the X1 to have a more powerful GPU. We'll see.

kron123456789 - Monday, January 5, 2015 - link

"it's also throotling significally." — Um, no. It has throttling under heavy load but it's about 20% in worst case. It was Snapdragon 800/801 and Exynos 5430 that "throotling significally".

jwcalla - Monday, January 5, 2015 - link

The fact that the announcement for this chip was coordinated with an almost exclusive discussion about automotive applications -- and correct me if I'm wrong, but it does not appear they even discussed gaming or mobile applications, except for the demo -- could be a signal that indicates to which markets NVIDIA wants to focus Tegra and which markets they're abandoning.

A couple years back Jen-Hsun said that Android was the future of gaming, but I wonder if he still believes that today?

I do think there is some truth to the idea that there is not much of a consumer market for high-end mobile graphics. Other than making for a great slide at a press event (Apple), there doesn't seem to be much of a use case for big graphics in a tablet. The kind of casual games people play there don't seem to align with nvidia's strengths.