QNAP TS-853 Pro 8-bay Intel Bay Trail SMB NAS Review

by Ganesh T S on December 29, 2014 7:30 AM EST

Introduction and Testbed Setup

QNAP has focused on Intel's Bay Trail platform for this generation of NAS units (as opposed to Synology's efforts with Intel Rangeley). While the choice made sense for the TS-x51 series targeting home users and prosumers, we were a bit surprised to see the TS-x53 Pro series (targeting business users) use the same Bay Trail platform. Having evaluated 8-bay solutions from Synology (the DS1815+) and Asustor (the AS7008T), we asked QNAP to send over their 8-bay solution, the TS-853 Pro-8G. Hardware-wise, the main differences between the three units lie in the host processor and the amount of RAM.

The specifications of our sample of the QNAP TS-853 Pro are provided in the table below.

| QNAP TS-853 Pro-8G Specifications | |
|---|---|
| Processor | Intel Celeron J1900 (4C/4T Silvermont x86 @ 2.0 GHz) |
| RAM | 8 GB |
| Drive Bays | 8x 3.5"/2.5" SATA II / III HDD / SSD (Hot-Swappable) |
| Network Links | 4x 1 GbE |
| External I/O Peripherals | 3x USB 3.0, 2x USB 2.0 |
| Expansion Slots | None |
| VGA / Display Out | HDMI (with HD Audio Bitstreaming) |
| Full Specifications Link | QNAP TS-853 Pro-8G Specifications |
| Price | USD 1195 |

Note that the $1195 price point is for the 8GB RAM version. The default 2 GB version retails for $986. The extra RAM is important if the end user wishes to take advantage of the unit as a VM host using the Virtualization Station package.

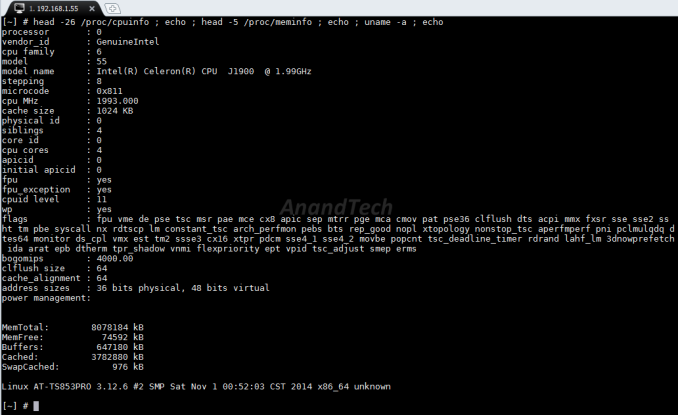

The TS-853 Pro runs Linux (kernel version 3.12.6). Other aspects of the platform can be gleaned by accessing the unit over SSH.
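For readers who want to check these details themselves: once logged in over SSH, `uname -r` prints the running kernel version and `/proc/cpuinfo` lists one entry per logical CPU. The Python helper below, `summarize_cpuinfo` (a hypothetical name, not a QNAP tool), is a minimal sketch that assumes the standard x86 Linux `key : value` layout of that file and pulls out the CPU model name and the logical core count from a captured copy. On the quad-core J1900 it should count four processors.

```python
def summarize_cpuinfo(text: str) -> tuple[str, int]:
    """Return (first CPU model name, count of logical processors) from /proc/cpuinfo text."""
    model = ""
    count = 0
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # blank separator lines between processor entries
        key = key.strip()
        if key == "processor":
            count += 1  # one "processor" entry per logical CPU
        elif key == "model name" and not model:
            model = value.strip()
    return model, count


if __name__ == "__main__":
    # Illustrative excerpt only; in practice, feed in the text of /proc/cpuinfo from the NAS.
    sample = (
        "processor\t: 0\nmodel name\t: Example CPU\n\n"
        "processor\t: 1\nmodel name\t: Example CPU\n"
    )
    print(summarize_cpuinfo(sample))
```

Parsing on the client side avoids assuming that a Python interpreter is installed on the NAS itself.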

Compared to the TS-451, we find that the host CPU is now a quad-core Celeron (J1900) instead of a dual-core one (J1800). The amount of RAM is doubled. However, the platform and setup impressions are otherwise similar to the TS-451. Hence, we won't go into those details in our review.

One of the main limitations of the TS-x51 units is that they can run only one virtual machine (VM) at a time. The TS-x53 Pro relaxes that restriction and allows two simultaneous VMs. Between our review of the TS-x51 and this piece, QNAP introduced QvPC, a unique way to use the display output of the TS-x51 and TS-x53 Pro series. We will first take a look at that technology and how it shaped our evaluation strategy.

Beyond QvPC, we follow our standard NAS evaluation routine: benchmark numbers for both single- and multi-client scenarios across a number of client platforms and access protocols. A separate section covers the performance of the NAS with encrypted shared folders, as well as RAID operation parameters (rebuild durations and power consumption). Before all that, we take a look at our testbed setup and testing methodology.

Testbed Setup and Testing Methodology

The QNAP TS-853 Pro can take up to 8 drives. Users can opt for JBOD, RAID 0, RAID 1, RAID 5, RAID 6 or RAID 10 configurations. We expect typical usage to be with multiple volumes in a RAID-5 or RAID-6 disk group. However, to keep things consistent across different NAS units, we benchmarked a single RAID-5 volume spanning all disks. Eight Western Digital WD4000FYYZ RE drives were used as the test disks. Our testbed configuration is outlined below.

| AnandTech NAS Testbed Configuration | |
|---|---|
| Motherboard | Asus Z9PE-D8 WS Dual LGA2011 SSI-EEB |
| CPU | 2 x Intel Xeon E5-2630L |
| Coolers | 2 x Dynatron R17 |
| Memory | G.Skill RipjawsZ F3-12800CL10Q2-64GBZL (8x8GB) CAS 10-10-10-30 |
| OS Drive | OCZ Technology Vertex 4 128GB |
| Secondary Drive | OCZ Technology Vertex 4 128GB |
| Tertiary Drive | OCZ Z-Drive R4 CM88 (1.6TB PCIe SSD) |
| Other Drives | 12 x OCZ Technology Vertex 4 64GB (Offline in the Host OS) |
| Network Cards | 6 x Intel ESA I-340 Quad-GbE Port Network Adapter |
| Chassis | SilverStoneTek Raven RV03 |
| PSU | SilverStoneTek Strider Plus Gold Evolution 850W |
| OS | Windows Server 2008 R2 |
| Network Switch | Netgear ProSafe GSM7352S-200 |
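Putting all eight 4 TB WD4000FYYZ drives into a single RAID-5 volume also fixes how much of the 32 TB of raw capacity is usable. The sketch below applies the usual rules for the levels the TS-853 Pro offers: RAID 5 gives up one disk's worth of capacity to parity, RAID 6 two, and RAID 10 half the disks. The `usable_capacity_tb` helper is hypothetical, and it ignores filesystem overhead and the TB vs. TiB distinction, so the NAS UI will report somewhat less.

```python
# Minimum member count and data-disk count for each RAID level.
RAID_LEVELS = {
    "JBOD": (1, lambda n: n),
    "RAID 0": (2, lambda n: n),
    "RAID 1": (2, lambda n: 1),        # every member holds a full copy
    "RAID 5": (3, lambda n: n - 1),    # one disk's worth of distributed parity
    "RAID 6": (4, lambda n: n - 2),    # two disks' worth of parity
    "RAID 10": (4, lambda n: n // 2),  # striped mirror pairs
}


def usable_capacity_tb(level: str, disks: int, disk_tb: float) -> float:
    """Approximate usable capacity, ignoring filesystem overhead and TB vs. TiB."""
    min_disks, data_disks = RAID_LEVELS[level]
    if disks < min_disks:
        raise ValueError(f"{level} needs at least {min_disks} disks")
    if level == "RAID 10" and disks % 2:
        raise ValueError("RAID 10 needs an even number of disks")
    return data_disks(disks) * disk_tb


if __name__ == "__main__":
    # Eight 4 TB drives, matching the review's test configuration.
    for level in RAID_LEVELS:
        print(level, usable_capacity_tb(level, 8, 4.0), "TB")
```

RAID 6 trades one more disk of capacity for the ability to survive a second failure during a rebuild, which is why it is a common choice for 8-bay units.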

The above testbed runs 25 Windows 7 VMs simultaneously, each with a dedicated 1 Gbps network interface. This simulates a real-life workload of up to 25 clients for the NAS being evaluated. All the VMs connect to the network switch to which the NAS is also connected (with link aggregation, as applicable). The VMs generate the NAS traffic for performance evaluation.
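Twenty-five 1 Gbps clients can together offer far more traffic than the NAS can accept, so in the multi-client tests the ceiling is set by the TS-853 Pro's four 1 GbE ports. The back-of-the-envelope sketch below (the `line_rate_mb_s` helper is hypothetical) converts link counts to a raw line-rate ceiling in decimal MB/s; it ignores Ethernet, TCP/IP and file-protocol overhead, so measured numbers will land below it.

```python
def line_rate_mb_s(links: int, gbps_per_link: float = 1.0) -> float:
    """Raw line-rate ceiling in MB/s (decimal), before Ethernet/IP/TCP/SMB overhead."""
    return links * gbps_per_link * 1000 / 8


if __name__ == "__main__":
    offered = line_rate_mb_s(25)       # 25 client VMs, one 1 GbE port each
    nas_ceiling = line_rate_mb_s(4)    # TS-853 Pro: 4x 1 GbE, all aggregated
    single_client = line_rate_mb_s(1)  # one flow is typically hashed onto one member link
    print(f"clients can offer {offered:.0f} MB/s; NAS ceiling {nas_ceiling:.0f} MB/s; "
          f"single client {single_client:.0f} MB/s")
```

The single-client line reflects how link aggregation generally works: each flow is hashed onto one member link, so aggregation raises total throughput across many clients but not the speed of any one client.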

Thank You!

We thank the following companies for helping us out with our NAS testbed:

- Thanks to Intel for the Xeon E5-2630L CPUs and the ESA I-340 quad port network adapters

- Thanks to Asus for the Z9PE-D8 WS dual LGA 2011 workstation motherboard

- Thanks to Dynatron for the R17 coolers

- Thanks to G.Skill for the RipjawsZ 64GB DDR3 DRAM kit

- Thanks to OCZ Technology for the two 128GB Vertex 4 SSDs, twelve 64GB Vertex 4 SSDs and the OCZ Z-Drive R4 CM88

- Thanks to SilverStone for the Raven RV03 chassis and the 850W Strider Gold Evolution PSU

- Thanks to Netgear for the ProSafe GSM7352S-200 L3 48-port Gigabit Switch with 10 GbE capabilities.

- Thanks to Western Digital for the eight WD RE hard drives (WD4000FYYZ) to use in the NAS under test.

58 Comments


ap90033 - Wednesday, December 31, 2014

RAID is not a REPLACEMENT for BACKUP and BACKUP is not a REPLACEMENT for RAID.... RAID 5 can be perfectly fine... Especially if you have it backed up. ;)

shodanshok - Wednesday, December 31, 2014

I think you should consider RAID 10: recovery is much faster (the system "only" needs to copy the content of one disk to another) and the URE-imposed threat is way lower. Moreover, remember that large RAIDZ arrays have the IOPS of a single disk. While you can use a large ZIL device to transform random writes into sequential ones, the moment you hit the platters the low IOPS performance can bite you.

For reference: https://blogs.oracle.com/roch/entry/when_to_and_no...
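The URE argument can be put into rough numbers. A rebuild must read every surviving member that holds data for the failed disk: all seven survivors in an 8-disk RAID 5, but only the mirror partner in RAID 10. The sketch below assumes independent errors at a fixed per-bit rate (commonly quoted datasheet figures are 1 in 10^14 bits for consumer drives and 1 in 10^15 for enterprise drives; check the actual datasheet) and computes the chance of reading everything without a URE. The helper name is hypothetical, and the model is known to be pessimistic, since real drives often beat their rated figure.

```python
import math


def rebuild_success_probability(disks_read: int, disk_tb: float, ure_per_bit: float) -> float:
    """P(no unrecoverable read error while reading `disks_read` full disks).

    Assumes independent errors at the datasheet rate, a pessimistic simplification.
    """
    bits_read = disks_read * disk_tb * 1e12 * 8
    # (1 - p) ** bits, computed via log1p to stay accurate for tiny p
    return math.exp(bits_read * math.log1p(-ure_per_bit))


if __name__ == "__main__":
    for rate in (1e-14, 1e-15):
        raid5 = rebuild_success_probability(7, 4.0, rate)   # 8-disk RAID 5: read 7 survivors
        raid10 = rebuild_success_probability(1, 4.0, rate)  # RAID 10: read the mirror partner only
        print(f"URE rate {rate:g}/bit: RAID 5 {raid5:.2f}, RAID 10 {raid10:.2f}")
```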

shodanshok - Wednesday, December 31, 2014

I agree. The only thing to remember when using a large RAIDZ system is that, by design, RAIDZ arrays have the IOPS of a single disk, no matter how many disks you throw at them (throughput will increase linearly, though). For increased IOPS capability, you should construct your ZPOOL from multiple, striped RAIDZ arrays (similar to how RAID50/RAID60 work).

For more information: https://blogs.oracle.com/roch/entry/when_to_and_no...
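The rule of thumb above (random IOPS scale with the number of top-level vdevs, streaming throughput with the number of data disks) can be compared across a few ways of laying out eight disks. In the sketch below, the `VdevLayout` class and the 150 IOPS per-disk figure are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class VdevLayout:
    name: str
    vdevs: int            # top-level vdevs striped together in the pool
    disks_per_vdev: int
    redundancy: int       # parity disks per RAIDZ vdev, or (n - 1) for an n-way mirror

    def data_disks(self) -> int:
        # Streaming throughput scales roughly with the number of data disks.
        return self.vdevs * (self.disks_per_vdev - self.redundancy)

    def random_iops(self, disk_iops: float) -> float:
        # Rule of thumb: each vdev delivers about one disk's worth of random IOPS.
        return self.vdevs * disk_iops


if __name__ == "__main__":
    DISK_IOPS = 150.0  # hypothetical per-disk figure for a 7200 rpm drive
    layouts = [
        VdevLayout("1 x 8-disk RAIDZ2", 1, 8, 2),
        VdevLayout("2 x 4-disk RAIDZ1", 2, 4, 1),
        VdevLayout("4 x 2-disk mirror", 4, 2, 1),
    ]
    for layout in layouts:
        print(f"{layout.name}: {layout.data_disks()} data disks, "
              f"~{layout.random_iops(DISK_IOPS):.0f} random IOPS")
```

This is a simplification: mirrors can serve reads from either side, so their random read IOPS can exceed the estimate.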

ap90033 - Friday, January 2, 2015

That is why RAID is not Backup and Backup is not RAID. ;)

cjs150 - Wednesday, January 7, 2015

Totally agree. As a home user, RAID 5 on a 4-bay NAS unit is fine, but I have had it fall over twice in 4 years, once when a disk failed and a second time when a disk worked loose (probably my fault). The failure was picked up, the disk replaced and the RAID rebuilt. Once you have 5+ discs, RAID 5 is too risky for me.

jwcalla - Monday, December 29, 2014

Just doing some research and it's impossible to find out if this has ECC RAM or not, which is usually a good indication that it doesn't. (Which is kind of surprising for the price.)

I don't know why they even bother making storage systems w/o ECC RAM. It's like saying, "Hey, let's set up this empty fire extinguisher here in the kitchen... you know... just in case."

Brett Howse - Monday, December 29, 2014

The J1900 doesn't support ECC: http://ark.intel.com/products/78867/Intel-Celeron-...

icrf - Monday, December 29, 2014

I thought the whole "ECC required for a reliable file system" was really only a thing for ZFS, and even then, only barely, with dangers generally over-stated.

shodanshok - Wednesday, December 31, 2014

It's not over-stated: any filesystem that proactively scrubs the disk/array subsystem (BTRFS and ZFS, at the moment) _needs_ ECC memory. While you can ignore this fact on a client system (where the value of the corrupted data is probably low), on a NAS or multi-user storage system ECC is almost mandatory.

This is the very same reason why hardware RAID cards have ECC memory: when they scrub the disks, any memory-related corruption can wreak havoc on array (and data) integrity.

Regards.

creed3020 - Monday, December 29, 2014

I hope that Synology is working on something similar to the QvM solution here. The day I started my Synology NAS was the day I shut down my Windows Server. I would, however, still love to have an always-on Windows machine for the use cases that my NAS cannot handle or that would be onerous to set up and get running.