Intel’s Silvermont Architecture Revealed: Getting Serious About Mobile

by Anand Lal Shimpi on May 6, 2013 1:00 PM EST - Posted in CPUs, Intel, Silvermont, SoCs

The most frustrating part about covering Intel’s journey into mobile over the past five years is just how long it’s taken to get here. The CPU cores used in Medfield, Clover Trail and Clover Trail+ are very similar to what Intel had with the first Atom in 2008. Obviously we’re dealing with higher levels of integration and tweaks to further reduce power consumption, but the architecture and much of the core remain unchanged. Just consider what that means. A single Bonnell core, designed in 2004, released in 2008, is already faster than ARM’s Cortex A9. Intel has had this architecture for five years now and, from the market’s perspective, did absolutely nothing with it. You could argue that the part wasn’t really ready until Intel had its 32nm process, so perhaps we’ve only wasted 3 years (Intel debuted its 32nm process in 2010). It’s beyond frustrating to think about just how competitive Intel would have been had it aggressively pursued this market.

Today Intel is in a different position. After acquisitions, new hires and some significant internal organizational changes, Intel seems to finally have the foundation to iterate and innovate in mobile. Although Bonnell (the first Atom core) was the beginning of Intel’s journey into mobile, it’s Silvermont - Intel’s first new Atom microarchitecture since 2008 - that finally puts Intel on the right course.

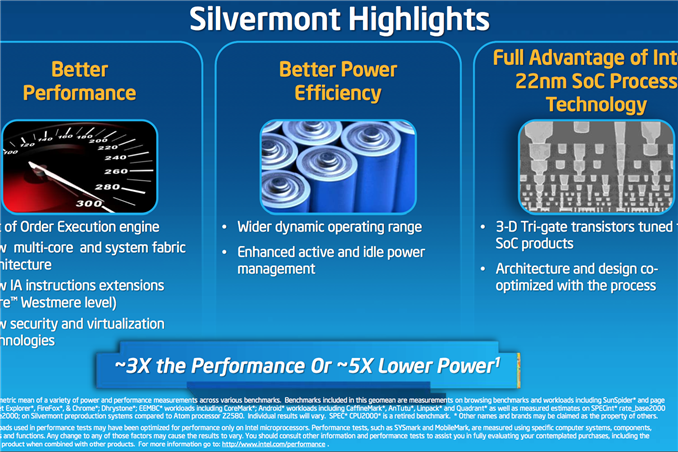

Although Silvermont can find its way into everything from cars to servers, the architecture is primarily optimized for use in smartphones and then in tablets, in that order. This is a significant departure from the previous Bonnell core that was first designed to serve the now defunct Mobile Internet Devices category that Intel put so much faith in back in the early to mid 2000s. As Intel’s first Atom architecture designed for mobile, expectations are high for Silvermont. While we’ll have to wait until the end of the year to see Silvermont in tablets (and early next year for phones), the good news for Intel is that Silvermont seems competitive right out of the gate. The even better news is that Silvermont will only be with us for a year before it gets its first update: Airmont.

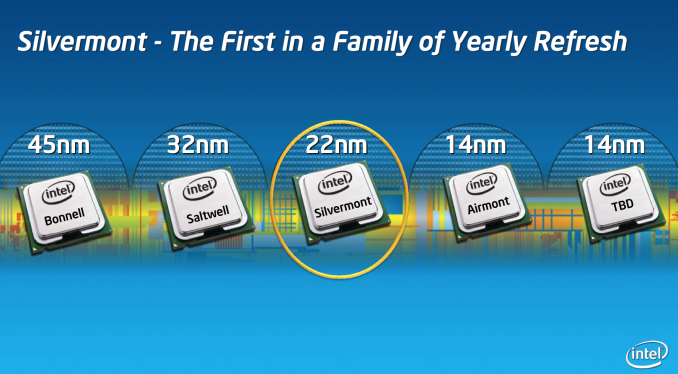

Intel made this announcement last year, but Silvermont is the beginning of Intel’s tick-tock cadence for Atom. Intel plans on revving Atom yearly for at least the next three years. Silvermont introduces a new architecture, while Airmont will take that architecture and bring it down to 14nm in 2014/2015. One year later, we’ll see another brand new architecture take the stage also on 14nm. This is a shift that Intel needed to implement years ago, but it’s still not too late.

Before we get into an architectural analysis of Silvermont, it’s important to get some codenames in order. Bonnell was the name of the original 45nm Atom core; it was later shrunk to 32nm and called Saltwell when it arrived in smartphones and tablets last year. Silvermont is the name of the CPU core alone. When it shows up in tablets later this year it will do so as part of the Baytrail SoC, and when it arrives in smartphones next year it will be part of the Merrifield SoC.

22nm

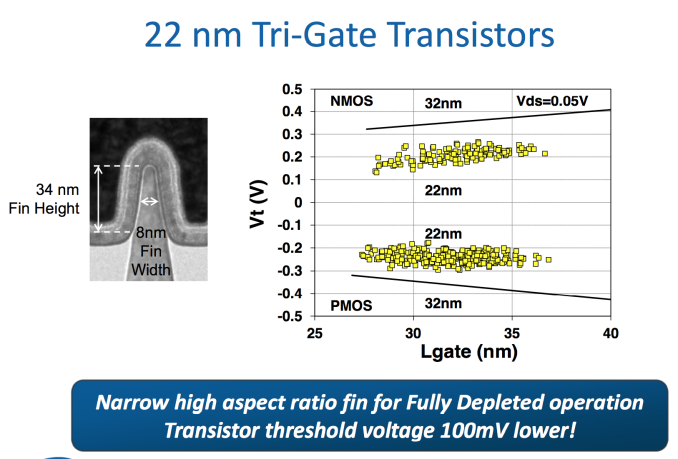

To really understand the Silvermont story, you need to first understand Intel’s 22nm SoC process. Two years ago Intel announced its 22nm tri-gate 3D transistors, which would eventually ship a year later in Intel’s Ivy Bridge processors. That process wasn’t suited for ultra mobile. It was optimized for the sort of high performance silicon that was deployed on it, but not the ultra compact, very affordable, low power silicon necessary in smartphones and tablets. A derivative of that process would be needed for mobile. Intel now makes two versions of all of its processes, one optimized for its high performance CPUs and one for low power SoCs. P1270 was the 22nm CPU process, and P1271 is the low power SoC version. Silvermont uses P1271. The high level characteristics are the same however. Intel’s 22nm process moves to tri-gate non-planar transistors that can significantly increase transistor performance and/or decrease power.

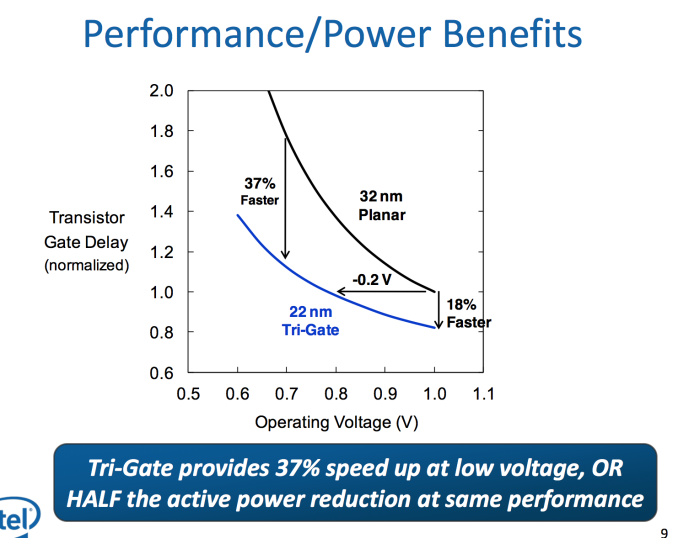

This part is huge. The move to 22nm 3D transistors lets Intel drop threshold voltage by approximately 100mV at the same leakage level. Remember that power scales with the square of voltage, so a 100mV savings, depending on what voltage you’re starting from, can be huge. Intel’s numbers put the power savings at anywhere from 25 - 35% at threshold voltage. The gains don’t stop there either. At 1V, Intel’s 22nm process gives it an 18% improvement in transistor performance or at the same performance Intel can run the transistors at 0.8V - a 20% power savings. The benefits are even more pronounced at lower voltages: 37% faster performance at 0.7V or less than half the active power at the same performance.
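To put a rough number on the "square of voltage" point, here is a minimal Python sketch. It assumes the textbook dynamic power model P = C * V^2 * f with capacitance and frequency held constant, and it ignores leakage entirely. The function name and the three starting voltages are illustrative assumptions, not Intel's data or methodology.

```python
def dynamic_power_savings(v_old: float, v_new: float) -> float:
    """Fractional reduction in dynamic power when voltage drops from v_old to v_new.

    Assumes the simplified model P = C * V^2 * f with capacitance and
    frequency unchanged, and ignores leakage power.
    """
    if v_old <= 0 or v_new <= 0:
        raise ValueError("voltages must be positive")
    return 1.0 - (v_new / v_old) ** 2


# Illustrative starting voltages (assumed for the example, not Intel's figures).
for v in (1.0, 0.8, 0.6):
    saving = dynamic_power_savings(v, v - 0.1)
    print(f"{v:.1f}V -> {v - 0.1:.1f}V: {saving:.1%} less dynamic power")
```

Under this simplified model the same 100mV drop is worth roughly 19% starting from 1.0V, 23% from 0.8V and 31% from 0.6V, which is why the starting voltage matters so much. Real silicon will not follow the model exactly, so treat these as ballpark figures only.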

The end result here is that Intel can scale frequency and/or add more active logic without drawing any more power than it did at 32nm. This helps at the top end with performance, but the vast majority of the time mobile devices are operating at much lower performance and power levels. Where performance doesn’t matter as much, Intel’s 22nm process gives it an insane advantage.

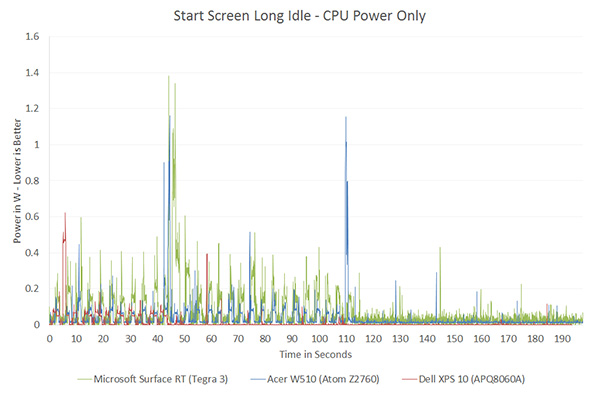

If we look back at our first x86 vs. ARM performance data we get a good indication of where Intel’s 32nm process had issues and where we should see tangible improvements with the move to 22nm:

Qualcomm’s 28nm Krait 200 was actually able to get down to lower power levels than Intel could at 32nm. Without having specific data I can’t say for certain, but it’s extremely likely that with Silvermont Intel will be able to drive down to far lower power levels than anything we’ve ever measured.

Understanding what Intel’s 22nm process gives it is really key to understanding Silvermont.

174 Comments

xTRICKYxx - Tuesday, May 7, 2013

You're right. Intel has nothing to show at all.... Its not like they have the most powerful mobile and desktop consumer processors available.

R0H1T - Tuesday, May 7, 2013

Yeah, now sit & watch that market(x86) die a slow death at the hands of mobile/tablets that are powered by "good enough" ARM which doesn't need teraflop level of performance to sell their stuff unlike Intel !

misaki - Monday, May 6, 2013

Wow, clearly you are a new reader. This is an architecture overview, not a performance article, which means the information HAS to come from Intel. They have done these type of articles with every architecture redesign since the 90's.

When chips are available to test that is when the real world performance articles will come out.

Ortanon - Monday, May 6, 2013

SERIOUSLY.

kyuu - Monday, May 6, 2013

Yes, but a lot of performance claims are being made in the article, and Anand really seemed to just be taking Intel's marketing speak for gospel. That's how it read, at least.

xTRICKYxx - Tuesday, May 7, 2013

Not really. He clearly states to take the graphs cautiously. Also Intel may be slightly misleading, but nothing in the graphs are lies. They chose the best possible scenario for the greatest advantage.

R0H1T - Tuesday, May 7, 2013

Like how they(AT) claimed Intel's "SDP" was superior after stress testing an Exynos Octa, yup loved that fairytale !

Kevin G - Monday, May 6, 2013

The article mentions that the IDI is similar to the internal bus found on Nehalem and later desktop processors. IDI here is mentioned as a point-to-point interconnect, whereas everything is linked via a ring bus in recent Core processors. Of course you can loop multiple point-to-point interfaces into a loop but the article's wording allows for other topologies.

For example, each Silvermont module could have its own dedicated point-to-point link to the system agent. In Nehalem, the system agent logically appears as another hop in the internal ring bus.

Exophase - Monday, May 6, 2013

Small correction:

"Remember that with the first version of Atom, Intel enabled the fusion of load-op-store and load-op-execute instructions. Instead of these instruction combinations decoding into three and two micro-ops respectively, they would be fused post-fetch and treated like single operations throughout the entire pipeline."

Atom (the current one anyway) doesn't have instruction (macro-op) fusion. It does handle load + op and load + op + store as one issue down the pipeline but they still came from single instructions that are a natural part of the x86 ISA. While these may be considered fused micro-ops in Intel's other CPUs that terminology doesn't fit Atom.

These operations do need multiple instructions on most more RISCy ISAs like ARM. But the same is true the other way around (notably, 3 address arithmetic). I very much doubt you'll find typical x86 programs only need 2 instructions for every 3 ARM instructions on average, or at least any papers I've seen that measure micro-ops vs instructions on high-end CPUs are nowhere close to 1.5 (and a uop isn't generally more powerful than an ARM instruction, sometimes less when you consider two are needed for a store). But there are lots of other places that caused stalls on Atom that weren't related to decode, that it's easy to see how you could still gain a lot of perf/MHz without increasing it - as Bobcat and Jaguar have shown. All the details here do seem to point to a Bobcat-like design only with a much lower L2 cache latency and branch mispredict penalty which can only help more.

Anand Lal Shimpi - Monday, May 6, 2013

Er you're very correct. Atom doesn't break these instructions down further, they're treated like single ops throughout the pipeline. I've updated the section. Thank you!