3DMark for Windows Launches; We Test It with Various Laptops

by Jarred Walton on February 5, 2013 5:00 AM EST

Initial 3DMark Notebook Results

While we don’t normally run 3DMark for our CPU and GPU reviews, we do like to run the tests for our system and notebook reviews. The reason is simple: we don’t usually have long-term access to these systems, so in six months or a year when we update benchmarks we don’t have the option to go back and retest a bunch of hardware to provide current results. That’s not the case on desktop CPUs and GPUs, which explains the seeming discrepancy. 3DMark has been and will always be a synthetic graphics benchmark, which means the results are not representative of true gaming performance; instead, the results are a ballpark estimate of gaming potential, and as such they will correlate well with some titles and not so well with others. This is the reason we benchmark multiple games—not to mention mixing up our gaming suite means that driver teams have to do work for the games people actually play and not just the benchmarks.

The short story here (TL;DR) is that just as Batman: Arkham City, Elder Scrolls: Skyrim, and Far Cry 3 have differing requirements and performance characteristics, 3DMark results can’t tell you exactly how every game will run—the only thing that will tell you how game X truly scales across various platforms is of course to specifically benchmark game X. I’m also more than a little curious to see how performance will change over the coming months as 3DMark and the various GPU drivers are updated, so with version 1.00 and current drivers in hand I ran the benchmarks on a selection of laptops along with my own gaming desktop.

I tried to include the last two generations of hardware, with a mix of AMD, Intel, and NVIDIA parts. Unfortunately, there's only so much I can do in a single day, and right now I don't have any high-end mobile NVIDIA GPUs available. Here’s the short rundown of what I tested:

System Details for Initial 3DMark Results

| System | CPU (Clocks) | GPU (Core/RAM Clocks) | RAM (Timings) |
|---|---|---|---|
| Gaming Desktop | Intel Core i7-965X 4x3.64GHz (no Turbo) | HD 7950 3GB 900/5000MHz | 6x2GB DDR2-800 675MHz@9-9-9-24-2T |
| Alienware M17x R4 | Intel Core i7-3720QM 4x2.6-3.6GHz | HD 7970M 2GB 850/4800MHz | 2GB+4GB DDR3-1600 800MHz@11-11-11-28-1T |
| AMD Llano | AMD A8-3500M 4x1.5-2.4GHz | HD 6620G 444MHz | 2x2GB DDR3-1333 673MHz@9-9-9-24 |
| AMD Trinity | AMD A10-4600M 4x2.3-3.2GHz | HD 7660G 686MHz | 2x2GB DDR3-1600 800MHz@11-11-12-28 |
| ASUS N56V | Intel Core i7-3720QM 4x2.6-3.6GHz | GT 630M 2GB 800/1800MHz; HD 4000@1.25GHz | 2x4GB DDR3-1600 800MHz@11-11-11-28-1T |
| ASUS UX51VZ | Intel Core i7-3612QM 4x2.1-3.1GHz | GT 650M 2GB 745-835/4000MHz | 2x4GB DDR3-1600 800MHz@11-11-11-28-1T |
| Dell E6430s | Intel Core i5-3360M 2x2.8-3.5GHz | HD 4000@1.2GHz | 2GB+4GB DDR3-1600 800MHz@11-11-11-28-1T |
| Dell XPS 12 | Intel Core i7-3517U 2x1.9-3.0GHz | HD 4000@1.15GHz | 2x4GB DDR3-1333 667MHz@9-9-9-24-1T |
| MSI GX60 | AMD A10-4600M 4x2.3-3.2GHz | HD 7970M 2GB 850/4800MHz | 2x4GB DDR3-1600 800MHz@11-11-12-28 |
| Samsung NP355V4C | AMD A10-4600M 4x2.3-3.2GHz | HD 7670M 1GB 600/1800MHz; HD 7660G 686MHz (Dual Graphics) | 2GB+4GB DDR3-1600 800MHz@11-11-11-28 |
| Sony VAIO C | Intel Core i5-2410M 2x2.3-2.9GHz | HD 3000@1.2GHz | 2x2GB DDR3-1333 666MHz@9-9-9-24-1T |

A quick note on the above laptops: I ran several overlapping configurations (e.g. HD 4000 with dual-core, quad-core, and ULV; A10-4600M with several dGPU options), but I’ve taken the best result for items like the quad-core HD 4000 and Trinity iGPU. The Samsung laptop also deserves special mention as it supports AMD Dual Graphics with HD 7660G and 7670M; my last encounter with Dual Graphics was on the Llano prototype, and things didn’t go so well. 3DMark is so new that I wouldn’t expect optimal performance, but I figured I’d give it a shot. Obviously, some of the laptops in the above list haven’t received a complete review, and in most cases those reviews are in progress.

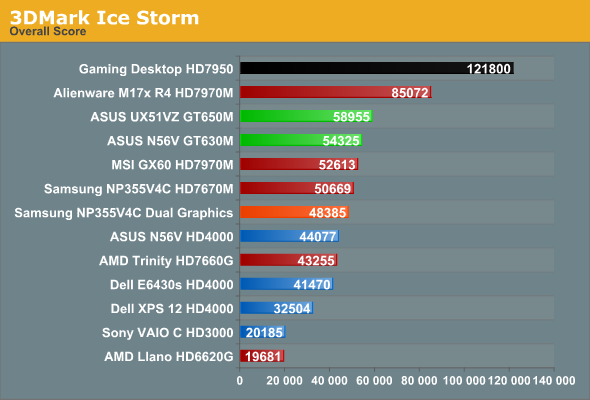

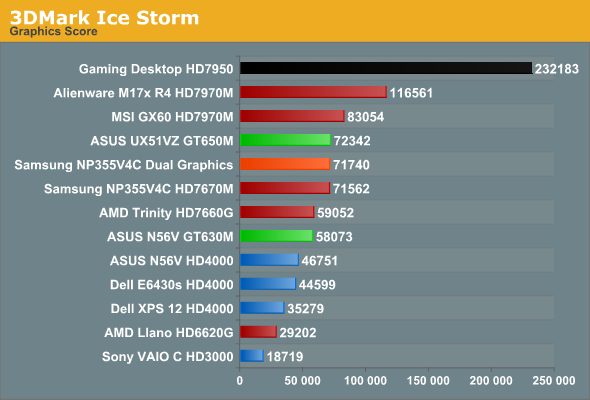

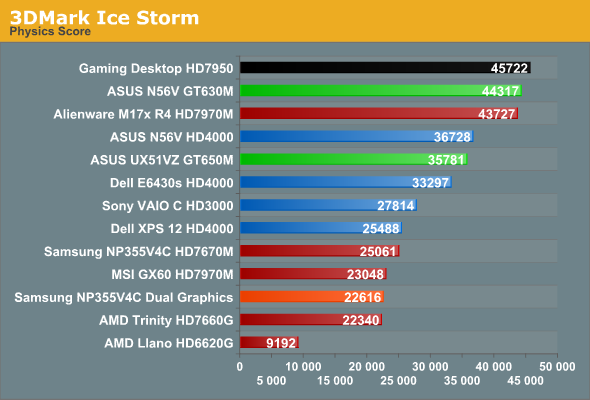

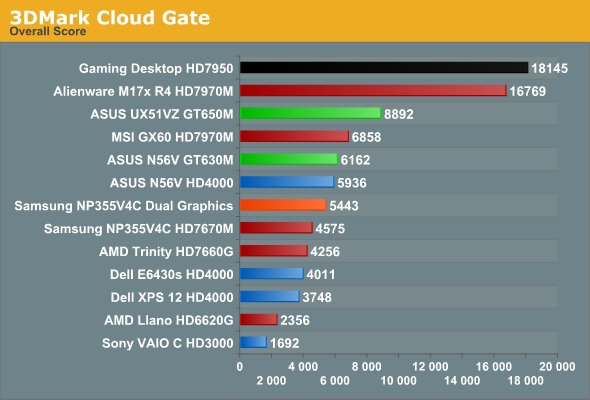

And with that out of the way, here are the results. I’ll start with the Ice Storm tests, followed by Cloud Gate and then Fire Strike.

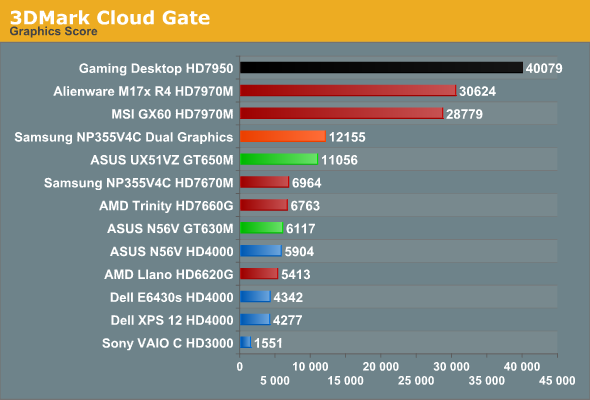

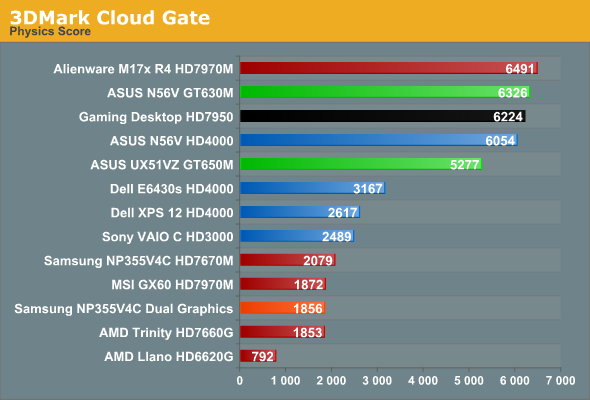

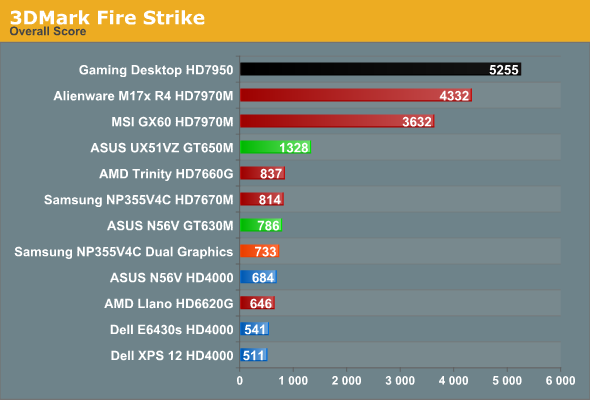

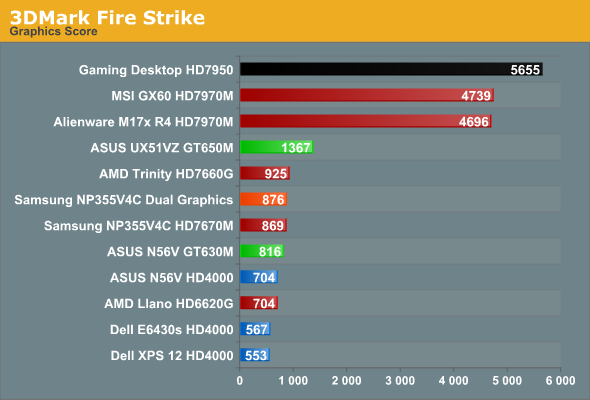

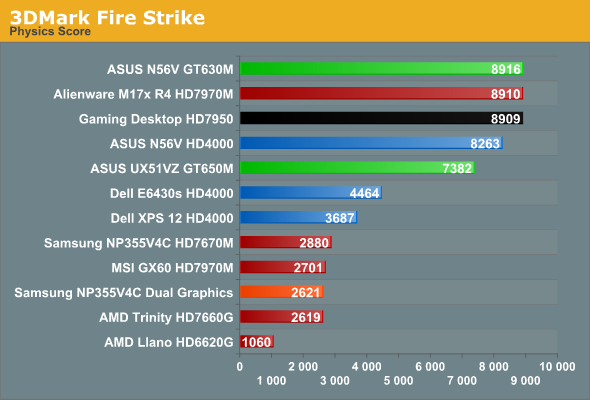

As expected, the desktop typically outpaces everything else, but the margins are a bit closer than what I experience in terms of actual gaming. Generally speaking, even with an older Bloomfield CPU, the desktop HD 7950 is around 30-60% faster than the mobile HD 7970M. Thanks to Ivy Bridge, the CPU side of the equation is actually pretty close, so the overall scores don’t always reflect the difference but the graphics tests do. The physics tests even have a few instances of mobile CPUs besting Bloomfield, which is pretty accurate—with the latest process technology, Ivy Bridge can certainly keep up with my i7-965X.
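For readers who want to check the "X% faster" figures against the charts themselves, the arithmetic is just a ratio of two scores. The sketch below is only an illustration: the function name `percent_faster` and the two scores are hypothetical placeholders, not numbers taken from the results above.

```python
def percent_faster(score_a: float, score_b: float) -> float:
    """Return how much faster A is than B, in percent, given two benchmark scores."""
    if score_b <= 0:
        raise ValueError("score_b must be positive")
    return (score_a / score_b - 1.0) * 100.0


# Hypothetical graphics scores, chosen only to illustrate the arithmetic;
# substitute the values read from the charts.
desktop_hd7950 = 6500.0
mobile_hd7970m = 4500.0

print(f"Desktop is {percent_faster(desktop_hd7950, mobile_hd7970m):.0f}% faster")
```

Running the same calculation on the Graphics sub-score and on the Overall score separately is a quick way to see how much of a gap the CPU side is hiding.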

Moving to the mobile comparisons, at the high end we have two laptops with HD 7970M, one with Ivy Bridge and one with Trinity. I made a video a while back showing the difference between the two systems running just one game (Batman), and 3DMark again shows that with HD 7970M, Trinity APUs are a bottleneck in many instances. Cloud Gate has the Trinity setup get closer to the IVB system, and on the Graphics score the MSI GX60 actually came out just ahead in the Fire Strike test, but in the Physics and Overall scores it’s never all that close. Physics in particular shows very disappointing results for the AMD APUs, which is why even Sandy Bridge with HD 3000 is able to match Llano in the Ice Storm benchmark (though not in the Graphics result).

A look at the ASUS UX51VZ also provides some interesting food for thought: thanks to the much faster CPU, even a moderate GPU like the GT 650M can surpass the 3DMark results of the MSI GX60 in two of the overall scores. That’s probably a bit much, but there are titles (Skyrim for instance) where CPU performance is very important, and in those cases the 3DMark rankings of the UX51VZ and the GX60 are likely to match up; in most demanding games (or games at higher resolutions/settings), however, you can expect the GX60 to deliver a superior gaming experience that more closely resembles the Fire Strike results.

The Samsung Series 3 with Dual Graphics is another interesting story. In many of the individual tests, the second GPU goes almost wholly unused—note that I’d expect updated drivers to improve the situation, if/when they become available. The odd man out is the Cloud Gate Graphics test, which scales almost perfectly with Dual Graphics. Given how fraught CrossFire can be even on a desktop system, the fact that Dual Graphics works at all with asymmetrical hardware is almost surprising. Unfortunately, with Trinity generally being underpowered on the CPU side and with the added overhead of Dual Graphics (aka Asymmetrical CrossFire), there are many instances where you’re better off running with just the 7670M and leaving the 7660G idle. I’m still working on a full review of the Samsung, but while Dual Graphics is now at least better than what I experienced with the Llano prototype, it’s not perfect by any means.

Wrapping things up, we have the HD 4000 in three flavors: i7-3720QM, i5-3360M, and i7-3517U. While in theory the iGPU is clocked similarly in all three, as I showed back in June, on a ULV platform the 17W TDP is often too little to allow the HD 4000 to reach its full potential. Under a full load, it looks like HD 4000 in a ULV processor can consume roughly 10-12W, but the CPU side can also use up to 15W. Run a taxing game where both the CPU and iGPU are needed and something has to give; that something is usually iGPU clocks, but the CPU tends to throttle as well. Interestingly, 3DMark only really seems to show this limitation with the Ice Storm tests; the other two benchmarks give the dual-core i5-3360M and i7-3517U very close results. In actual games, however, I don’t expect that to be the case very often (meaning, Ice Storm is likely the best representation of how HD 4000 scales across various CPU and TDP configurations).
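The power budget problem is easy to see with a little arithmetic. The sketch below uses only the rough figures quoted above (17W TDP, up to 15W for the CPU, roughly 10-12W for the iGPU); the function name `power_shortfall` is hypothetical, and the model is a deliberate simplification that assumes both parts want their peak draw at the same moment.

```python
def power_shortfall(tdp_w: float, cpu_peak_w: float, igpu_peak_w: float) -> float:
    """Return by how many watts the combined peak draw exceeds the TDP (0 if it fits)."""
    return max(0.0, cpu_peak_w + igpu_peak_w - tdp_w)


TDP_W = 17.0        # ULV package TDP quoted in the text
CPU_PEAK_W = 15.0   # approximate CPU-side peak quoted in the text

# The iGPU estimate is a range, so check both ends of it.
for igpu_peak_w in (10.0, 12.0):
    over = power_shortfall(TDP_W, CPU_PEAK_W, igpu_peak_w)
    print(f"iGPU at {igpu_peak_w:.0f}W: {over:.0f}W over budget")
```

With these estimates the chip would need 8-10W more than it is allowed whenever both halves are fully loaded, which is why clocks have to drop somewhere.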

HD 4000 also tends to place quite well with respect to Trinity and some of the discrete GPUs, but in practice that’s rarely the case. GT 630M for instance was typically 50% to 100% (or slightly more) faster than HD 4000 in the ASUS N56V Ivy Bridge prototype, but looking at the 3DMark results it almost looks like a tie. Don’t believe those relative scores for an instant; they’re simply not representative of real gaming experiences. And that is one of the reasons why we continue to look at 3DMark as merely a rough estimate of performance potential; it often gives reasonable rankings, but unfortunately there are times (optimizations by drivers perhaps) where it clearly doesn’t tell the whole story. I’m almost curious to see what sort of results HD 4000 gets with some older Intel drivers, as my gut is telling me there may be some serious tuning going on in the latest build.

69 Comments


JarredWalton - Tuesday, February 5, 2013 - link

Celery 300a was pretty awesome, wasn't it? I think that's around the time I started reading Tom's Hardware and AnandTech (when it was still on Geo Cities!) I had that same Abit BH6 motherboard, I think... I also think I used an Abit IT5H before that, with the bus running at 83.3MHz and my Pentium 200 MMX ripping along at 250MHz! And I had a whopping 64MB of RAM.

But even better was the good old days before we even had a reasonable Windows setup. Yeah, I recall installing Windows 2.x and it sucked. 3.0 was actually the first usable version, and there were even Windows alternatives way back then -- I had a friend running some alternative Windows OS as well. GEM maybe, or Viewmax? I can't recall, other than that it didn't really work properly for what we were trying to do at the time (play games).

Dug - Wednesday, March 13, 2013 - link

I remember deciding between 8 and 12mb and then getting my 2nd voodoo card later and running time demos of Quake over and over again after overclocking. The 300 to 450 was fun times too.

WeaselITB - Tuesday, February 5, 2013 - link

RE: Space Sims

http://www.robertsspaceindustries.com/star-citizen...

Parhel - Tuesday, February 5, 2013 - link

If that ever sees the light of day, I'll buy it in a second. Freelancer was one of my all time favorite games. I still play it from time to time.

silverblue - Wednesday, February 6, 2013 - link

Seconded... great game with excellent atmosphere.

Peanutsrevenge - Wednesday, February 6, 2013 - link

Just in case you've not heard (somehow).

Check out Star Citizen.

Also cool is Diaspora, which is actually playable!

alwayssts - Tuesday, February 5, 2013 - link

It looks exactly like the video. :-P

I'm not super impressed by how it looks, but appreciate what I think it's saying.

I am of the mind Fire Strike gpu tests are FM saying to the desktop community 'if it can run test 1 with 30fps, your avg framerate in dx11 titles should be ok for the foreseeable future. If it can run test 2 at 30 frames, your minimum should be okay'. The combined test obviously shows what the current feature-set can do (and how far away from using its full potential in a realistic manner current hardware is). Perhaps it's not a guide, but a suggestion and/or an approximation of where games and gpus are headed, and I think it's a reasonable one at that.

For reference, test 1 would need ~ a stock 670/highly overclocked 7870/slightly overclocked Tahiti LE or 660ti, test 2 ~ a stock 680 or 7970/highly overclocked 670 or Tahiti LE/moderately overclocked 7950 for 1080p. IOW, pretty much what people use.

The combined score looks to be a rough estimation of what to expect from the 20nm high-end to get 30fps at 1080p, which also makes sense, as it will likely be the last generation/process to target 1080p before it becomes realistic to see higher-end consumer single screens become 4k (probably around 14nm and 2016-2017ish). Also, the difference in frame rate from the combined test to gpu test 2 is approx the difference in resolution from 720p to 1080p...so it works on a few different levels.

Dustin Sklavos - Tuesday, February 5, 2013 - link

An AT editor running Nehalem and only 12GB of RAM?

What are you, some kind of animal? Was that the only computer you could fit into your cave? ;)

JarredWalton - Tuesday, February 5, 2013 - link

Hey, I only upgraded from Core 2 Quad on my desktop last year! On the bright side, I have a bunch of laptops that are decent.

Penti - Tuesday, February 5, 2013 - link

Nehalem is quite fine. Performance hasn't changed all that much. Servers are still stuck on Nehalem|Westmere/Xeon-"MPs" or Sandy Bridge-EP/Xeon-E5. Should count as a modern desktop :) Really it's quite nice that a 2-4 year old system isn't ancient and can't drive games, support lots of memory or whatever like how it was in the not so old days. It's still PCI-e 2.0, DDR3 etc. You really wouldn't exactly have thought of putting say a Radeon 9800 Pro into a 600-800MHz Pentium 3 Coppermine machine.