3DMark for Windows Launches; We Test It with Various Laptops

by Jarred Walton on February 5, 2013 5:00 AM EST

3DMark for Windows Overview

After a two-year hiatus, Futuremark is back with a new version of 3DMark, and in many ways this is their most ambitious version to date. Instead of the usual PC graphics benchmark, this release, dubbed simply “3DMark” (there’s no year or other designation this time), is a cross-platform benchmark: Windows, Windows RT, iOS, and Android will all be capable of running the same graphics benchmark, sort of. Today’s release is for Windows only, and it is the most feature-packed of the 3DMark releases, with three separate graphics benchmarks.

Ice Storm is a DX9-level graphics benchmark (ed: specifically D3D11 FL 9_1), and this is what we’ll see on Android, iOS, and Windows RT. Cloud Gate is the second benchmark and it uses DX10-level effects and hardware, but it will only run on standard Windows; it’s intended to show the capabilities of Windows notebooks and home PCs. The third benchmark is Fire Strike, and this is the one that will unlock the full potential of DX11-level hardware; it’s intended to showcase the capabilities of modern gaming PCs. Fire Strike also has a separate Extreme setting to tax your system even more.

Each of the three benchmarks, at least on the Windows release, comes with four elements: two graphics tests, a physics test, and a demo mode (not used in the benchmark score) that comes complete with audio and a lengthier “story” to go with the scene. I have fond memories of running various demo scene files way back in the day, and I think the inclusion of A/V sequences for all three scenes is a nice addition. Another change with this release is that all resolutions are unlocked on all platforms: the tests render internally at the specified resolution and then scale the output to fit your particular display. No longer will we have to use an external display to test 1080p on a typical laptop, hallelujah! You can even run the Extreme preset for Fire Strike on a 1366x768 budget notebook if you like seeing things render at seconds per frame.
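Futuremark doesn't say exactly how the output is scaled, so treat the following only as a rough illustration. It is a minimal Python sketch that assumes a uniform, aspect-ratio-preserving fit with black bars (letterbox or pillarbox) filling any leftover space; the function name `fit_to_display` is hypothetical and not part of 3DMark. It computes the on-screen size and offset of a frame rendered at one resolution and shown on a panel of another.

```python
from fractions import Fraction


def fit_to_display(render_w, render_h, display_w, display_h):
    """Hypothetical aspect-preserving fit of a render target onto a display.

    Returns (out_w, out_h, offset_x, offset_y): the scaled image size and
    the top-left offset that centers it, with bars filling the remainder.
    """
    # Use exact fractions so the chosen scale factor has no float rounding.
    scale = min(Fraction(display_w, render_w), Fraction(display_h, render_h))
    # int() truncates, so the scaled image never exceeds the display.
    out_w = int(render_w * scale)
    out_h = int(render_h * scale)
    # Center the image; any odd leftover pixel goes to the right/bottom bar.
    offset_x = (display_w - out_w) // 2
    offset_y = (display_h - out_h) // 2
    return out_w, out_h, offset_x, offset_y


if __name__ == "__main__":
    # A 1080p render shown on a 1366x768 budget-notebook panel.
    print(fit_to_display(1920, 1080, 1366, 768))
```

Under these assumptions a 1920x1080 frame lands at 1365x768 on a 1366x768 panel, because a 16:9 image 768 lines tall is only about 1365.3 pixels wide. On a 5:4 1280x1024 screen it would be letterboxed to 1280x720 with 152-pixel bars above and below.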

As has been the case with most releases, 3DMark comes in three different versions. The free Basic Edition includes all three tests and simply runs them at the default settings; there’s no option to tweak any of the settings, and the Fire Strike test does not include the Extreme preset. When you run the Basic Edition, your only option is to run all three tests (at least on Windows platforms), and the results are submitted to the online database, where you can view and manage them. For $24.99, the Advanced Edition adds the Extreme Fire Strike preset, lets you run at custom settings and resolutions, and lets you run each of the three tests individually. There are also options to loop the benchmarks, 3DMark has added a bunch of new graphs, and you can save the results offline for later viewing. Finally, the Professional Edition is intended for business and commercial use and costs $995. Besides all of the features in the Advanced Edition, it adds a command line utility, an image quality tool, and a private offline results option, and it can export the results to XML.

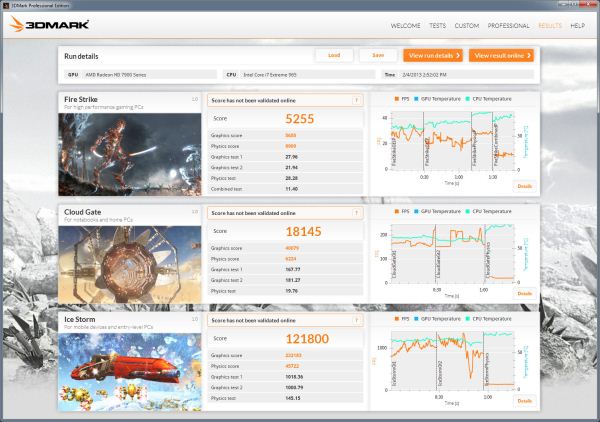

Before we get to some initial results, let’s take a look at one of the cool new features in the latest 3DMark: graphs. Above you can see the post-benchmark results from my personal gaming desktop with a slightly overclocked i7-965X and HD 7950; along with the usual scores, there are graphs for each test showing real-time frame rates and CPU and GPU temperatures. I’ve noticed that GPU temperatures don’t show up on quite a few of my test systems, which will hopefully improve with future updates, but this is still a great new inclusion. Each graph also allows you to explore further details:

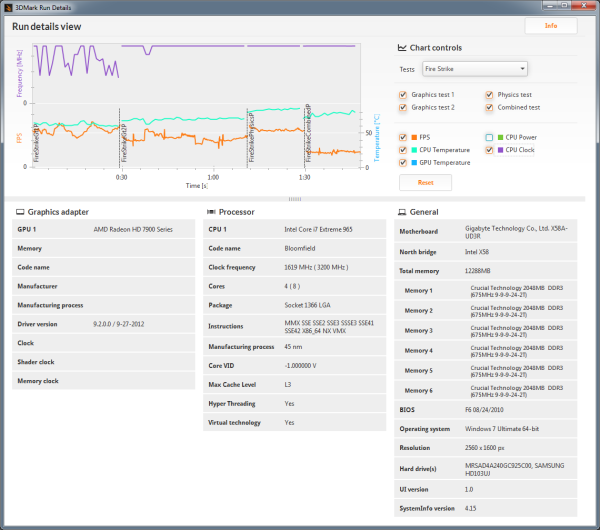

Along with the FPS and temperature graphs, the detailed view adds the option to plot CPU clocks and CPU power (though, as with GPU temperatures, power isn’t always available depending on the platform; it’s missing on my Bloomfield desktop, for example). Something you can’t see in the images is that you can mouse over and select any point on the graphs to get additional details (e.g. the frame rate at a specific point), and you can zoom in and out as well. It’s too bad that only paying customers (or press) will get full access to the graphs, but for ORB and overclocking enthusiasts these new features definitely make the $25 cost look more palatable.

Along with the various updates, the UI for 3DMark has changed quite a bit as well, presumably to make it more tablet-friendly. I can’t say how it will work on tablets specifically, but some options are missing, and the new UI takes some getting used to. For example, even with the Professional Edition, there’s no easy way to run all the benchmarks without the demos. You can run the Ice Storm, Cloud Gate, and Fire Strike benchmarks individually, or you can do a custom run of any of those three, but what I want is an option to run all three tests with custom settings in one batch. This was possible on every previous 3DMark release, so hopefully an update will add this functionality (or at least give the Advanced and Professional versions a “run all without demo” option on the Welcome screen). Besides that minor complaint, things are pretty much what we’re used to seeing, so let’s do some benchmarking.

69 Comments


JarredWalton - Tuesday, February 5, 2013

Celery 300a was pretty awesome, wasn't it? I think that's around the time I started reading Tom's Hardware and AnandTech (when it was still on GeoCities!). I had that same Abit BH6 motherboard, I think... I also think I used an Abit IT5H before that, with the bus running at 83.3MHz and my Pentium 200 MMX ripping along at 250MHz! And I had a whopping 64MB of RAM. But even better were the good old days before we even had a reasonable Windows setup. Yeah, I recall installing Windows 2.x and it sucked. 3.0 was actually the first usable version, and there were even Windows alternatives way back then; I had a friend running some alternative Windows OS as well. GEM maybe, or ViewMAX? I can't recall, other than that it didn't really work properly for what we were trying to do at the time (play games).

Dug - Wednesday, March 13, 2013

I remember deciding between 8 and 12MB and then getting my 2nd Voodoo card later and running timedemos of Quake over and over again after overclocking. The 300 to 450 was fun times too.

WeaselITB - Tuesday, February 5, 2013

RE: Space Sims

http://www.robertsspaceindustries.com/star-citizen...

Parhel - Tuesday, February 5, 2013

If that ever sees the light of day, I'll buy it in a second. Freelancer was one of my all-time favorite games. I still play it from time to time.

silverblue - Wednesday, February 6, 2013

Seconded... great game with excellent atmosphere.

Peanutsrevenge - Wednesday, February 6, 2013

Just in case you've not heard (somehow), check out Star Citizen.

Also cool is Diaspora, which is actually playable!

alwayssts - Tuesday, February 5, 2013

It looks exactly like the video. :-P I'm not super impressed by how it looks, but I appreciate what I think it's saying.

I am of the mind that the Fire Strike GPU tests are FM saying to the desktop community, 'if it can run test 1 at 30fps, your average framerate in DX11 titles should be OK for the foreseeable future. If it can run test 2 at 30fps, your minimum should be OK'. The combined test obviously shows what the current feature set can do (and how far current hardware is from using its full potential in a realistic manner). Perhaps it's not a guide, but a suggestion and/or an approximation of where games and GPUs are headed, and I think it's a reasonable one at that.

For reference, test 1 would need ~ a stock 670/highly overclocked 7870/slightly overclocked Tahiti LE or 660ti, test 2 ~ a stock 680 or 7970/highly overclocked 670 or Tahiti LE/moderately overclocked 7950 for 1080p. IOW, pretty much what people use.

The combined score looks to be a rough estimation of what to expect from the 20nm high end to get 30fps at 1080p, which also makes sense, as it will likely be the last generation/process to target 1080p before it becomes realistic to see higher-end consumer single screens go 4K (probably around 14nm and 2016-2017ish). Also, the difference in frame rate from the combined test to GPU test 2 is approximately the difference in resolution from 720p to 1080p... so it works on a few different levels.

Dustin Sklavos - Tuesday, February 5, 2013

An AT editor running Nehalem and only 12GB of RAM? What are you, some kind of animal? Was that the only computer you could fit into your cave? ;)

JarredWalton - Tuesday, February 5, 2013

Hey, I only upgraded from Core 2 Quad on my desktop last year! On the bright side, I have a bunch of laptops that are decent.

Penti - Tuesday, February 5, 2013

Nehalem is quite fine. Performance hasn't changed all that much. Servers are still stuck on Nehalem|Westmere/Xeon-"MPs" or Sandy Bridge-EP/Xeon-E5. It should count as a modern desktop :) Really, it's quite nice that a 2-4 year old system isn't ancient and unable to drive games, support lots of memory, or whatever, the way it was in the not-so-old days. It's still PCIe 2.0, DDR3, etc. You really wouldn't have thought of putting, say, a Radeon 9800 Pro into a 600-800MHz Pentium 3 Coppermine machine.