Kingston SSDNow V+100 Review

by Anand Lal Shimpi on November 11, 2010 3:05 AM EST. Posted in Storage, SSDs, Kingston, SSDNow V+100

I'm not sure what it is about SSD manufacturers and overly complicated product stacks. Kingston has no fewer than six different SSD brands in its lineup: the E Series, M Series, SSDNow V 100, SSDNow V+ 100, SSDNow V+ 100E and SSDNow V+ 180. The E and M series are just rebranded Intel drives; they use Intel's X25-E and X25-M G2 controllers respectively, with a Kingston logo on the enclosure. The SSDNow V 100 is an update to the SSDNow V Series drives, both of which use the JMicron JMF618 controller. Don't get this confused with the 30GB SSDNow V Series Boot Drive, which actually uses a Toshiba T6UG1XBG controller, also used in the SSDNow V+. Confused yet? It gets better.

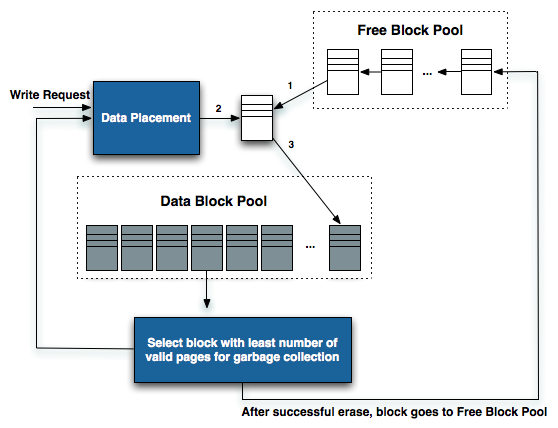

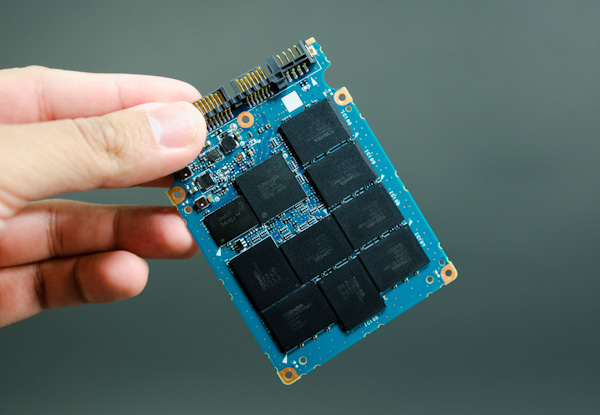

The standard V+ is gone and replaced by the new V+ 100, which is what we're here to take a look at today. This drive uses the T6UG1XBG controller but with updated firmware. The new firmware enables two things: very aggressive OS-independent garbage collection and higher overall performance. The former is very important as this is the same controller used in Apple's new MacBook Air. In fact, the performance of the Kingston V+100 drive mimics that of Apple's new SSDs:

Apple vs. Kingston SSDNow V+100 Performance

| Drive | Sequential Write | Sequential Read | Random Write | Random Read |
| --- | --- | --- | --- | --- |
| Apple TS064C 64GB | 185.4 MB/s | 199.7 MB/s | 4.9 MB/s | 19.0 MB/s |
| Kingston SSDNow V+100 128GB | 193.1 MB/s | 227.0 MB/s | 4.9 MB/s | 19.7 MB/s |

Sequential speed is higher on the Kingston drive, but that is likely due to the size difference. Random read/write speeds are nearly identical. And there's one phrase in Kingston's press release that sums up why Apple chose this controller for its MacBook Air: "always-on garbage collection". Remember that NAND is written to at the page level (4KB), but erased at the block level (512 pages). Unless told otherwise, SSDs try to retain data as long as possible, because erasing a block of NAND usually means erasing a bunch of valid as well as invalid data and then rewriting the valid data to a new block. Garbage collection is the process by which a block of NAND is cleaned for future writes.

Diagram inspired by IBM Zurich Research Laboratory
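To make the page/block mechanics concrete, here is a minimal Python sketch of a single garbage collection pass. It is a toy model, not how any real controller's firmware works: the `Block` class, the `garbage_collect` function and the three page states are hypothetical names made up for illustration. Only the 4KB page size and the 512-page block size come from the text above.

```python
from dataclasses import dataclass, field

PAGE_SIZE_KB = 4        # page size quoted in the article
PAGES_PER_BLOCK = 512   # pages per block quoted in the article


@dataclass
class Block:
    # Each page is "valid", "invalid" or "free" (a toy stand-in for real page state).
    pages: list = field(default_factory=lambda: ["free"] * PAGES_PER_BLOCK)


def garbage_collect(victim: Block, spare: Block) -> int:
    """Copy the victim's valid pages into the spare block, then erase the victim.

    Returns the number of pages that had to be rewritten.
    """
    valid = sum(1 for page in victim.pages if page == "valid")
    free_slots = [i for i, page in enumerate(spare.pages) if page == "free"]
    if valid > len(free_slots):
        raise ValueError("spare block has too few free pages")
    # Writes happen one page at a time...
    for i in free_slots[:valid]:
        spare.pages[i] = "valid"
    # ...but an erase always wipes the whole block.
    victim.pages = ["free"] * PAGES_PER_BLOCK
    return valid


# Hypothetical block: a quarter of its pages still hold live data.
victim = Block(pages=["valid"] * 128 + ["invalid"] * 384)
spare = Block()
copied = garbage_collect(victim, spare)
print(f"rewrote {copied} pages ({copied * PAGE_SIZE_KB}KB) to reclaim one block")
```

In this made-up case, reclaiming one block costs 128 page writes (512KB) that the host never asked for. That copy overhead is the price of garbage collection.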

If you're too lax with your garbage collection algorithm, write speed will eventually suffer: each write will carry a large penalty, driving write latency up and throughput down. Be too aggressive with garbage collection and drive lifespan suffers. NAND can only be written/erased a finite number of times, so aggressively cleaning NAND before it's absolutely necessary keeps write performance high at the expense of wearing out the NAND more quickly.

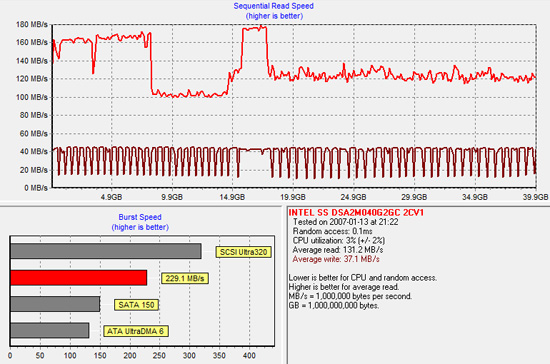

Intel was the first to really show us what real-time garbage collection looked like. Here is a graph showing sequential write speed of Intel's X25-V:

The almost periodic square wave formed by the darker red line above shows a horribly fragmented X25-V attempting to clean itself up at every write. At every write request the X25-V controller tries to clean some blocks, and with enough writes the drive returns to peak performance. The garbage collection isn't seamless, but it will eventually restore performance.

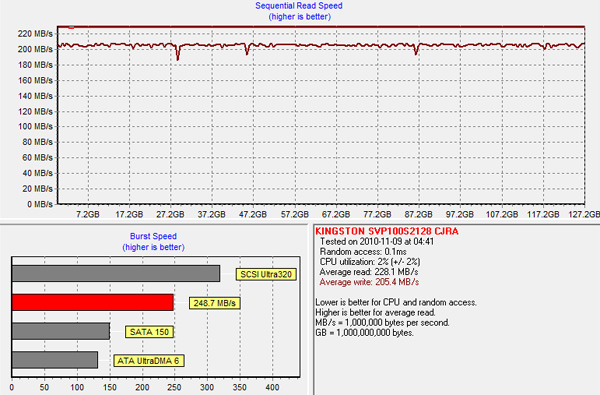

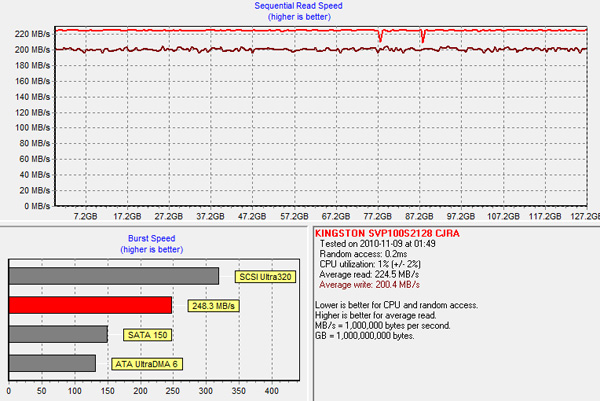

Now look at Kingston's SSDNow V+100, both before fragmentation and after:

There's hardly any difference. Actually, the best way to see this at work is to look at power draw when firing random write requests all over the drive. The SSDNow V+100 has wild swings in power consumption during our random write test, ranging from 1.25W to 3.40W. The swings happen several times within a window of a couple of seconds. The V+100 aggressively tries to reorganize writes and recycle bad blocks, more aggressively than we've seen from any other SSD.

The benefit of this is you get peak performance out of the drive regardless of how much you use it, which is perfect for an OS without TRIM support - ahem, OS X. Now you can see why Apple chose this controller.

There is a downside, however: write amplification. For every 4KB we randomly write to a location on the drive, the actual amount of data written is much, much greater. It's the cost of constantly cleaning/reorganizing the drive for performance. While I haven't had any 50nm, 4xnm or 3xnm NAND physically wear out on me, the V+100 is the most likely to blow through those program/erase cycles. Keep in mind that at the 3xnm node you no longer have 10,000 cycles, but closer to 5,000 before your NAND dies. On nearly all drives we've tested this isn't an issue, but I would be concerned about the V+100. Concerned enough to recommend running it with at least 20% free space at all times. The more free space you have, the better a job the controller can do of wear leveling.
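As a rough back-of-the-envelope illustration of why write amplification matters, the sketch below estimates drive life from capacity, rated program/erase cycles, daily host writes and write amplification. It assumes perfect wear leveling and a constant workload. The write amplification factors and the 20GB/day workload are made-up inputs, not measurements of the V+100, and `estimated_lifespan_years` is a hypothetical helper.

```python
def estimated_lifespan_years(capacity_gb, pe_cycles, host_gb_per_day, write_amplification):
    """Rough NAND endurance estimate, assuming perfect wear leveling."""
    if host_gb_per_day <= 0 or write_amplification <= 0:
        raise ValueError("workload and write amplification must be positive")
    # Total data the NAND can absorb before every cell reaches its cycle limit.
    nand_budget_gb = capacity_gb * pe_cycles
    # Each gigabyte the host writes costs write_amplification gigabytes of NAND writes.
    host_budget_gb = nand_budget_gb / write_amplification
    return host_budget_gb / host_gb_per_day / 365


# 128GB drive, ~5,000 cycles (the 3xnm figure from the text), hypothetical 20GB/day.
for wa in (1.5, 10, 50):  # assumed write amplification factors, illustration only
    years = estimated_lifespan_years(128, 5000, 20, wa)
    print(f"write amplification {wa:>4}: ~{years:.1f} years")
```

With these inputs, a tenfold write amplification gives roughly 8.8 years, while a factor of 50 cuts that to under two. The absolute numbers depend entirely on the assumptions, but the inverse relationship between write amplification and lifespan is the point.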

96 Comments


Gonemad - Thursday, November 11, 2010

Yes, a longevity test. Put it on grind mode until it pops. P51 Mustangs benefited from engines tested that way.

Now, this one, should it be fully synthetic or more life like? Just place the drive in "write 0, write 1" until failure, record how many times it can be used, or create some random workload scripted in such a manner that it behaves pretty much like real usage, overusing the first few bytes of every 4k sector... if it affects any results. What I am asking is, will it wear only the used bytes, or the entire 4k rewritten sector will be worn evenly, if I am expressing myself correctly here.

On another comment, I always thought SSD drives were like overpriced undersized Raptors, since they came to be, but damn... I hope fierce competition drive the prices down. Way down. "Neck and neck to mag drives" down.

And what about defragmenting utilities? Don't they lose their sense of purpose on a SSD? Are they blocked from usage, since the best situation you have on a SSD is actually randomly sprayed data, because there is no "needle" running over it at 7200rpm in a forcibly better sequential read? Should they be renamed to "optimization tools" when concerning SSD's? Should anybody ever consider manually giving permission to a system to run garbage collection, TRIM, whatever, while blocking it until strictly necessary, in order to increase life span?

Iketh - Thursday, November 11, 2010

win7 "automatically disables" their built-in defrag for SSDs, though if you go in and manually tell it to defrag an SSD, it will do it without question

prefetch and superfetch are supposedly also disabled automatically when the OS is installed on an SSD, though I don't feel comfortable until i change the values myself in the registry

cwebersd - Thursday, November 11, 2010

I have torture-tested a 50 GB Sandforce-based drive (OWC Mercury Extreme Pro RE) with the goal to destroy it. I stopped writing our semi-random data after 21 days because I grew tired.

16.5 TB/d, 360 disk fills/day, 21 days more or less 24/7 duty cycle (we stopped a few times for an hour or two to make adjustments)

~7500 disk fills total, 350 TB written

The drive still performs as good as new, and SMART parameters look reasonably good - to the extent that current tools can interpret them anyway.

If I normally write 20 GB/d this drive is going to outlast me. Actually, I expect it to die from "normal" (for electronics) age-related causes, not flash cells becoming unwritable.

Anand Lal Shimpi - Thursday, November 11, 2010

This is something I've been working on for the past few months. Physically wearing down a drive as quickly as possible is one way to go about it (all of the manufacturers do this) but it's basically impossible to do for real world workloads (like the AT Storage Bench). It would take months on the worst drives, and years on the best ones.

There is another way however. Remember NAND should fail predictably, we just need to fill in some of the variables of the equation...

I'm still a month or two away from publishing but if you're buying for longevity, SandForce seems to last the longest, followed by Crucial and then Intel. There's a *sharp* fall off after Intel however. The Indilinx and JMicron stuff, depending on workload, could fail within 3 - 5 years. Again it's entirely dependent on workload, if all you're doing is browsing the web even a JMF618 drive can last a decade. If you're running a workload full of 4KB random writes across the entire drive constantly, the 618 will be dead in less than 2 years.

Take care,

Anand

Greg512 - Thursday, November 11, 2010

Wow, I would have expected Intel to last the longest. I am going to purchase an ssd and longevity is one of my main concerns. In fact, longevity is the main reason I have not yet bought a Sandforce drive. Well, I guess that is what happens when you make assumptions. Looking forward to the article!

JohnBooty - Saturday, November 13, 2010

Awesome news. Looking forward to that article.

A torture test like that is going to sell a LOT of SSDs, Anand. Because right now that's the only thing keeping businesses and a lot of "power users" from adopting them - "but won't they wear out soon?"

That was the exact question I got when trying to get my boss to buy me one. Though I was eventually able to convince him. :)

Out of Box Experience - Thursday, November 11, 2010

Here's the problem

Synthetic Benchmarks won't show you how fast the various SSD controllers handle uncompressible data

Only a copy and paste of several hundred megabytes to and from the same drive under XP will show you what SSD's will do under actual load

First off, due to Windows 7's caching scheme, ALL drives (Slow or Fast) seem to finish a copy and paste in the same amount of time and cannot be used for this test

In a worst case scenario, using an ATOM computer with Windows XP and Zero SSD Tweaks, a OCZ VERTEX 2 will copy and paste data at only 3.6 Megabytes per second

A 5400RPM laptop drive was faster than the Vertex 2 in this test because OCZ drives require massive amounts of Tweaking and highly compressible data to get the numbers they are advertizing

A 7200RPM desktop drive was A LOT faster than the Vertex 2 in this type of test

Anyone working with uncompressible data "already on the drive" such as video editors should avoid Sandforce SSD's and stick with the much faster desktop platter drives

Using a slower ATOM computer for these tests will amplify the difference between slower and faster drives and give you a better idea of the "Relative" speed difference between drives

You should use this test for ALL SSD's and compare the results to common hard drives so that end users can get a feel for the "Actual" throughput of these drives on uncompressible data

Remember, Data on the Vertex drive's is already compressed and cannot be compressed again during a copy/paste to show you the actual throughput of the drive under XP

Worst case scenario testing under XP is the way to go with SSD's to see what they will really do under actual workloads without endless tweaking and without getting bogus results due to Windows 7's caching scheme

Anand Lal Shimpi - Friday, November 12, 2010

The issue with Windows XP vs. Windows 7 doesn't have anything to do with actual load, it has to do with alignment.

Controllers designed with modern OSes (Windows 7, OS X 10.5/10.6) in mind (C300, SandForce) are optimized for 4K aligned transfers. By default, Windows XP isn't 4K aligned and thus performance suffers. See here:

http://www.anandtech.com/show/2944/10

If you want the best out of box XP performance for incompressible data, Intel's X25-M G2 is likely the best candidate. The G1/G2 controllers are alignment agnostic and will always deliver the same performance regardless of partition alignment. Intel's controller was designed to go after large corporate sales and, at the time it was designed, many of those companies were still on XP.

Take care,

Anand

Out of Box Experience - Saturday, November 13, 2010

Thanks Anand

That's good to know

With so many XP machines out there for the foreseeable future, I would think more SSD manufacturers would target the XP market with alignment agnostic controllers instead of making the consumers jump through all these hoops to get reasonable XP performance from their SSD's

Last question..

Would OS agnostic garbage collection like that on the new Kingston SSD work with Sandforce controllers if the manufacturers chose to include it in firmware or is it irrelevant with Duraclass ?

I still think SSD's should be plug and play on ALL operating Systems

Personally, I'd rather just use the drives instead of spending all this time tweaking them

sheh - Thursday, November 11, 2010

This seems like a worrying trend, though time will tell how reliable SSDs are long-term. What's the situation with 2Xnm? And where does SLC fit into all that regarding reliability, performance, pricing, market usage trends?