The Impact of Spare Area on SandForce, More Capacity At No Performance Loss?

by Anand Lal Shimpi on May 3, 2010 2:08 AM EST

The Impact of Spare Area on Performance

More spare area can make for a longer-lasting drive, but the best way to measure its impact is to look at performance: lower write amplification leads to less wear, which ultimately leads to a longer lifespan.
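As a rough illustration of that chain (write amplification, then wear, then lifespan), here is a minimal Python sketch. Nothing in it is measured in this article: the function names, the 128 GB NAND capacity, the 5,000 program/erase cycles and the 10 GB/day of host writes are all hypothetical inputs, and the model assumes perfectly even wear leveling.

```python
def write_amplification(nand_gb_written: float, host_gb_written: float) -> float:
    """Ratio of data physically written to NAND to data the host asked to write."""
    return nand_gb_written / host_gb_written


def estimated_lifespan_years(nand_capacity_gb: float, pe_cycles: int,
                             host_gb_per_day: float, wa: float) -> float:
    """Years until every cell reaches its rated program/erase cycle count.

    Assumes perfectly even wear leveling, which real drives only approximate.
    """
    total_nand_writes_gb = nand_capacity_gb * pe_cycles  # total write budget
    nand_gb_per_day = host_gb_per_day * wa               # actual daily NAND wear
    return total_nand_writes_gb / nand_gb_per_day / 365


# Hypothetical inputs, chosen only to show the trend.
for wa in (0.5, 1.0, 2.0):
    years = estimated_lifespan_years(128, 5000, 10, wa)
    print(f"write amplification {wa:.1f}: ~{years:.0f} years")
```

Under these assumptions, halving write amplification doubles the estimated lifespan, which is why performance under write pressure is a reasonable proxy for wear.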

SandForce's controllers are dynamic: they'll use all free (untouched or TRIMed) space on the drive as spare area. To measure the difference in performance between a drive with 28% spare area and one with 13% we must first fill the drives to their capacity. The point is to leave the controller with nothing but its spare area to use the moment we start writing to it. Unfortunately this is easier said than done with a SandForce drive.

If you just write sequential data to the drive first, there's no guarantee that you'll actually fill up the NAND. The controller may just do away with a lot of the bits and write only a fraction of them. To get around this problem we resorted to our custom build of Iometer that lets us write completely random data to the drive. In theory this should get around SandForce's algorithms and give us a virtually 1:1 mapping between what we write and what ends up on the NAND.
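To see why random data is the right tool here, the following Python sketch compares how well repetitive and random buffers shrink. It uses zlib purely as a stand-in: SandForce's actual data reduction algorithms are not public and are almost certainly different, and the `compressed_ratio` helper and the 1 MiB buffer size are hypothetical choices for illustration.

```python
import os
import zlib


def compressed_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (zlib, default level)."""
    return len(zlib.compress(data)) / len(data)


block = 1024 * 1024  # 1 MiB test buffer (arbitrary size)

# Highly repetitive data: a controller that reduces data could store far less.
repetitive = b"AnandTech" * (block // 9)

# Random data: nothing to squeeze out, so what is written is what lands on NAND.
random_data = os.urandom(block)

print(f"repetitive: {compressed_ratio(repetitive):.3f}")
print(f"random:     {compressed_ratio(random_data):.3f}")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random one stays at roughly its original size, which is the behavior the fill pass relies on.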

That’s not enough though. The minute we run one of our desktop workloads on the SandForce drive it’ll most likely toss out most of the bits and only lightly tap into the spare area. To be sure let’s look at the results of our AT Storage Bench suite if we first fill these drives with completely random, invalid data. Every time our benchmark scripts write to the drive it should force a read-modify-write and tap into some of that spare area.

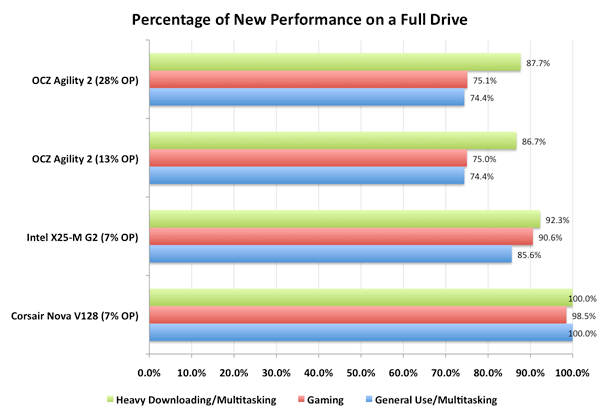

This graph shows the percentage of new performance once the drive is completely full. Dynamic controllers like Intel’s and SandForce’s will show a drop here as they use any unused (or TRIMed) space as spare area. The Indilinx Barefoot controller doesn’t appear to and thus shows no performance hit from a full drive.
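For readers who want to reproduce the metric, "percentage of new performance" is simply the full-drive score divided by the fresh-drive score. A minimal Python sketch follows; the function names and the scores are hypothetical and are not taken from the charts.

```python
def percent_of_new(used_score: float, new_score: float) -> float:
    """Full-drive score expressed as a percentage of the fresh-drive score."""
    if new_score <= 0:
        raise ValueError("new_score must be positive")
    return 100.0 * used_score / new_score


def point_gap(percent_a: float, percent_b: float) -> float:
    """Difference between two percentages, in percentage points."""
    return abs(percent_a - percent_b)


# Hypothetical benchmark scores, for illustration only.
drive_a = percent_of_new(used_score=170.0, new_score=200.0)
drive_b = percent_of_new(used_score=164.0, new_score=200.0)

print(f"drive A retains {drive_a:.1f}% of new performance")
print(f"drive B retains {drive_b:.1f}% of new performance")
print(f"gap: {point_gap(drive_a, drive_b):.1f} percentage points")
```

A value near 100% means the drive loses almost nothing when full; comparing two drives in percentage points avoids confusing a small absolute gap with a relative one.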

You’ll note that there’s virtually no difference between an SF-1200 drive with 13% spare area and one with 28% spare area. Chances are, that’s all most users would ever see. However, I’m not totally satisfied. What we want to see is the biggest performance difference a desktop/notebook user would see between a 13% and a 28% overprovisioned drive. To do that we have to not only fill up the user area on the drive but dirty the spare area as well. Another pass of our Iometer script with some random writes thrown in should do the trick. Now all LBAs on the drive should be touched as well as the spare area.

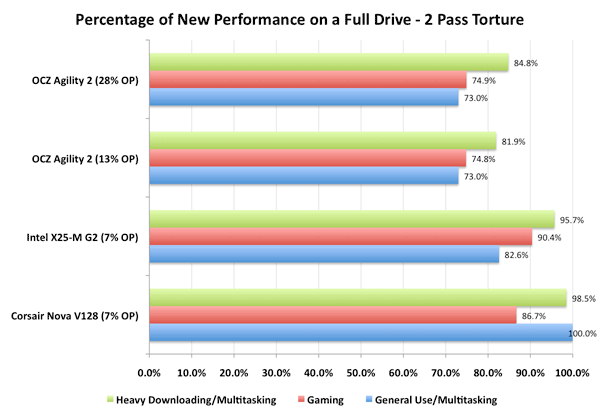

Once again, let’s look at the percentage of new performance from our very full, very dirty drive:

Now we’re getting somewhere. Intel’s controller actually improved in performance between the runs (at least in the heavy downloading/multitasking test), which is a testament to Intel’s architecture. The X25-M is an overachiever; it’s in a constant state of trying to improve itself.

The SandForce controller is being unfairly penalized here - most desktop workloads shouldn’t hit it with so much data that’s purely random in nature. But we’re trying to understand the worst case scenario. And there is a difference, just a very slight one. Only in the heavy downloading/multitasking workload did we see a difference between the two spare area capacities and it’s only about 3 percentage points.

30 Comments

DigitalFreak - Monday, May 3, 2010

Apparently IBM trusts Sandforce's technology. http://www.engadget.com/2010/05/03/sandforce-makes...

MrSpadge - Monday, May 3, 2010

A 60 GB Vertex 2 for the price of the current 50 GB one would make me finally buy an SSD. Actually, even a 60 GB Agility 2 would do the trick!

Impulses - Monday, May 3, 2010

Interesting, Newegg's got the Agility 2 in stock for $399... Vertex 2 is OOS but has an ETA. That makes my choice of what drive to give my sister a lil' harder (I promised her a SSD as a birthday gift last month, gonna install it on her laptop when I visit her soon). The old Vertex/Agility drives are 20GB more for $80 less... I dunno whether the performance bump and capacity loss would be worth it.

Do the SandForce and Crucial drives feel noticeably faster than an X25-M or Indilinx Barefoot drive in everyday tasks or are they all so fast that the difference is not really appreciable outside of heavy multi-tasking or certain heavy tasks? I own an X25-M and an X25-V and I'm ecstatic with both...

MadMan007 - Monday, May 3, 2010

Hello Anand, thanks for the review. I am posting the same comment regarding capacity that I've posted before - I hope it doesn't get ignored this time :) While it's nice to say 'formatted capacity' it is not 100% clear whether that is in HD-style gigabytes (10^9 bytes) or gibibytes (base 1024 - what OSes actually report.) This is very important information imo because people want to know 'How much space am I really getting' or they have a specific space target they need to hit.

Please clarify this in future reviews! (If not this one too :)) Thanks.

anurax - Tuesday, May 4, 2010

I've had 2 brand new OCZ Vertex Limited Edition drives die on me in the span of 2 weeks, so you guys should really take reliability into consideration when buying a new SSD. Like Anand says, WE are the test pigs here and the manufacturers dun really give a care about us or the inconvenience we experience when we have to re-install and reload our systems.

My Vertex Limited Edition drive just died all of a sudden without any prompt or s.m.a.r.t. notification, it simply cannot be detected anymore. It's so damn frustrating to have such poor reliability standards.

One thing is 100% sure, OCZ and SandForce are a NO NO NO, they have played me out enough and me forking out hard earned $$$ to be their test pig is simply not acceptable.

To all you folks out there, seriously be careful about reliability and be even more careful about doing things to hamper the reliability, cuz in the end its your data, time and efforts that are at stake here (unless we are Anand whose job is to fully stress and review these new toys everyday)

mattmc61 - Wednesday, May 5, 2010

Sorry to hear you lost two drives, that must be pretty rare. I lost a 120g Vertex Turbo myself. No warning, just "poof", and it was gone. I think that's the nature of the beast: there are no moving parts to let s.m.a.r.t. technology know when an SSD is slowly dying. One thing is for sure, you are right, we are guinea pigs when it comes to a technology in its infancy such as SSDs, which are experiencing growing pains. Anand did warn us a while back that we should proceed at our own risk when it comes to these drives. He had a few SSDs go poof on him as well. It just surprises me when guys buy bleeding edge technology, which usually costs a premium, has a high risk of failure, then proceed to trash-mouth the manufacturer or the technology itself when it fails them. I think some people want the latest and greatest so bad that they have an "aah, that won't happen to me" attitude and go ahead and buy the product. Then when it fails they are shocked and take it personally, like someone deliberately sabotaged them. If you did your homework on that OCZ drive like you should have, you would know that the manufacturer really does care about how their SSDs out in the wild are performing. I can tell you from personal experience that when my drive died, they quickly replaced it. OCZ also has a great support forum. I'm sure you won't lose all that money you spent if you just send back the drives for replacement. The bottom line is if you want reliability, then go back to mechanical hard drives. If you want bleeding edge, then accept the risks and stop whining.

MMc

thebeastie - Tuesday, May 4, 2010

There is no point letting the sequential performance have any bearing on your choice of SSD; if you like sequential speed, just buy a mechanical hard drive. But you have been there and know how crap it makes your end user experience.

That's why Intel is still great value for SSD: despite all the latest random read and write benchmarks Anandtech has come up with, they are still killer speed, while the Indilinx controllers are running at 0.5megs/sec aligned Windows 7 type performance.

In other words anyone looking at sequential performance is really failing a basic mental handicapped test.

Chloiber - Wednesday, May 5, 2010

Actually, Indilinx is faster on 4k Random Reads with 1 Queue Depth.

stoutbeard - Tuesday, May 11, 2010

So what about when you get the agility 2? How do you get the newest sf-1200 firmware (1.01)? It's not on OCZ's site.

hartmut555 - Tuesday, May 25, 2010

I guess it might be a little late to comment here and expect a response, but I have been reading a few posts on forums suggesting leaving a portion of a mainstream SSD unpartitioned, so that the drive has a little more spare area to work with. Basically, it is the opposite of what this article is about - instead of recovering some of the spare area capacity for normal use, you are setting aside some of the normal use capacity for spare area. (And yes, they are talking about SSDs, not short-stroking a HDD.)

In this article, it states that both the Intel and SandForce controllers appear to be dynamic in that they use any unused sectors as spare area. However, the tests show that the SandForce controller can have pretty much equivalent performance even when the spare area is decreased. This makes me think that there is some point at which more spare area ceases to provide a performance advantage after the drive has been filled (both user area and spare area) - the inevitable case if you are using SSDs in a RAID setup, since there is no TRIM support.

The spare area acts as a sort of "buffer", but the controller implementation would make a big difference as to how much advantage a larger buffer might provide. The workload used for testing might also make a big difference in benchmarks, depending on the GC implementation. For instance, if the SSD controller is "lazy" and only shuffles stuff around when a write command is issued, and only enough to make room for the current write, then spare area size will have virtually no impact on performance. However, if the controller is "active" and lines up a large pool of pre-erased blocks, then having a larger spare area would increase the amount of rapid-fire writes that could happen before the pre-erased blocks were all used up and it had to resort to shuffling data around to free up more erase blocks. Finally, real world workloads almost always include a certain amount of idle time, even on servers. If the GC for the SSD is scheduled for drive idle time, then benchmarks that consist of recorded disk activity which are played back all at once would not allow time for the GC to occur.

Having a complex controller between the port and the flash cells really complicates the evaluation of these drives. It would be nice if we had at least a little info from the manufacturers about stuff like GC scheduling and dynamic spare area usage. Also, it would be interesting to see a benchmark test that is run over a constant time with real-world idle periods (like actually reading the web page that is viewed), and measures wait times for disk activity.

Has anyone tested the effects of increasing spare area (by leaving part of the drive unpartitioned) for drives like the X25-M that have a small spare area when TRIM is not available and the drive has reached its "used" (degraded) state?