The Impact of Spare Area on SandForce, More Capacity At No Performance Loss?

by Anand Lal Shimpi on May 3, 2010 2:08 AM EST

The Impact of Spare Area on Performance

More spare area can provide for a longer lasting drive, but the best way to measure its impact is to look at performance (lower write amplification leads to lower wear which ultimately leads to a longer lifespan).
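The chain from write amplification to lifespan can be put into rough numbers. The sketch below is a back-of-the-envelope model, not a vendor formula: it assumes perfect wear leveling, and the capacity, cycle count and write amplification values are hypothetical figures chosen only for illustration.

```python
def write_amplification(nand_bytes_written: float, host_bytes_written: float) -> float:
    """Bytes physically written to NAND divided by bytes the host asked to write."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return nand_bytes_written / host_bytes_written


def host_writes_before_wearout(nand_capacity_bytes: float, pe_cycles: int, wa: float) -> float:
    """Rough endurance estimate: host bytes writable before the NAND runs out of
    program/erase cycles, assuming perfectly even wear across all blocks."""
    if wa <= 0:
        raise ValueError("wa must be positive")
    return nand_capacity_bytes * pe_cycles / wa


# Hypothetical numbers for illustration only (not measured on any drive).
GIB = 2**30
TIB = 2**40
capacity = 128 * GIB   # assumed raw NAND capacity
cycles = 5000          # assumed program/erase cycles per cell

low_wa = host_writes_before_wearout(capacity, cycles, wa=1.1)
high_wa = host_writes_before_wearout(capacity, cycles, wa=2.2)
print(f"WA 1.1: {low_wa / TIB:.0f} TiB of host writes")
print(f"WA 2.2: {high_wa / TIB:.0f} TiB of host writes")
```

In this simple model, halving write amplification doubles the amount of data the host can write before the NAND wears out.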

SandForce's controllers are dynamic: they'll use all free (untouched or TRIMed) space on the drive as spare area. To measure the difference in performance between a drive with 28% spare area and one with 13% we must first fill the drives to their capacity. The point is to leave the controller with nothing but its spare area to use the moment we start writing to it. Unfortunately this is easier said than done with a SandForce drive.
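A small sketch shows why the drives have to be filled before the two spare area settings can be told apart. It assumes a simplified model in which everything not holding valid data counts as spare; the function name and the 100-unit raw NAND size are hypothetical, and on a SandForce drive the amount actually stored on NAND may be smaller than what the host wrote.

```python
def effective_spare_fraction(raw_nand: float, valid_data_stored: float) -> float:
    """Fraction of raw NAND not holding valid data, which a dynamic
    controller is free to treat as spare area."""
    if not 0 <= valid_data_stored <= raw_nand:
        raise ValueError("valid_data_stored must be between 0 and raw_nand")
    return (raw_nand - valid_data_stored) / raw_nand


RAW = 100.0  # raw NAND in arbitrary units (hypothetical)

# User capacities chosen so the factory spare area is 28% and 13%.
for user_capacity in (72.0, 87.0):
    for fill in (0.5, 1.0):
        stored = user_capacity * fill
        spare = effective_spare_fraction(RAW, stored)
        print(f"user capacity {user_capacity:.0f}, {fill:.0%} full: "
              f"{spare:.1%} effective spare")
```

At half full both drives have far more than their factory spare area to work with, so only a completely full drive isolates the 28% versus 13% difference.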

If you just write sequential data to the drive first there's no guarantee that you'll actually fill up the NAND. The controller may just do away with a lot of the bits and write a fraction of it. To get around this problem we resorted to our custom build of Iometer that lets us write completely random data to the drive. In theory this should get around SandForce's algorithms and give us virtually 1:1 mapping between what we write and what ends up on the NAND.
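The effect of random data can be illustrated with an ordinary compressor. SandForce has not published how its controller reduces data, so the sketch below uses zlib purely as a stand-in; it is an assumption for illustration, not the controller's actual algorithm, and the buffer contents are made up.

```python
import os
import zlib


def compressed_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more reducible)."""
    return len(zlib.compress(data, 9)) / len(data)


SIZE = 1 << 20  # roughly 1 MiB per test buffer

# Highly repetitive data, similar in spirit to a simple fill pattern.
repetitive = b"AnandTech" * (SIZE // 9)

# Random bytes from the OS, which a compressor cannot shrink.
random_data = os.urandom(SIZE)

print(f"repetitive: {compressed_ratio(repetitive):.4f}")
print(f"random:     {compressed_ratio(random_data):.4f}")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random buffer stays at essentially full size, which is the behavior we are counting on to make the controller write nearly everything we send it.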

That’s not enough though. The minute we run one of our desktop workloads on the SandForce drive it’ll most likely toss out most of the bits and only lightly tap into the spare area. To be sure let’s look at the results of our AT Storage Bench suite if we first fill these drives with completely random, invalid data. Every time our benchmark scripts write to the drive it should force a read-modify-write and tap into some of that spare area.

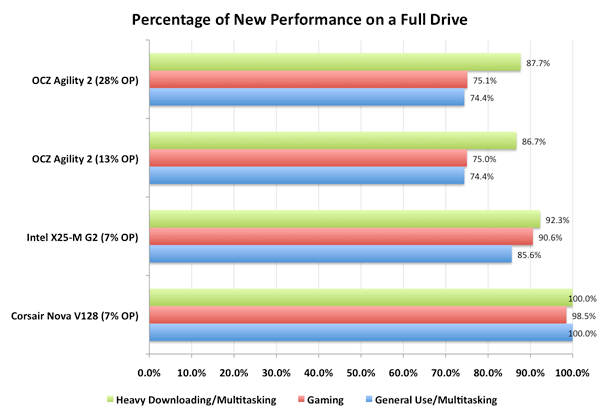

This graph shows the percentage of new performance once the drive is completely full. Dynamic controllers like Intel’s and SandForce’s will show a drop here as they use any unused (or TRIMed) space as spare area. The Indilinx Barefoot controller doesn’t appear to and thus shows no performance hit from a full drive.
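For reference, the metric is simply the used-state score divided by the fresh-state score. The sketch below shows the arithmetic with hypothetical scores; the function names and numbers are illustrative and are not results from our tests.

```python
def percent_of_new(fresh_score: float, used_score: float) -> float:
    """Used-state performance expressed as a percentage of fresh-state performance."""
    if fresh_score <= 0:
        raise ValueError("fresh_score must be positive")
    return 100 * used_score / fresh_score


def percentage_point_gap(percent_a: float, percent_b: float) -> float:
    """Absolute difference between two percent-of-new figures, in percentage points."""
    return abs(percent_a - percent_b)


# Hypothetical benchmark scores (higher is better), for illustration only.
drive_a = percent_of_new(fresh_score=200.0, used_score=160.0)
drive_b = percent_of_new(fresh_score=200.0, used_score=170.0)
print(f"drive A: {drive_a:.0f}% of new, drive B: {drive_b:.0f}% of new, "
      f"gap: {percentage_point_gap(drive_a, drive_b):.0f} points")
```

A figure of 100% means the full drive performs exactly like a new one; anything lower is the performance given up once the free space is gone.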

You’ll note that there’s virtually no difference between a SF-1200 drive with 13% spare area and one with 28% spare area. Chances are, most users would agree. However I’m not totally satisfied. What we want to see is the biggest performance difference a desktop/notebook user would see between a 13% and 28% overprovisioned drive. To do that we have to not only fill up the user area on the drive but also dirty the spare area as well. Another pass of our Iometer script with some random writes thrown in should do the trick. Now all LBAs on the drive should be touched as well as the spare area.

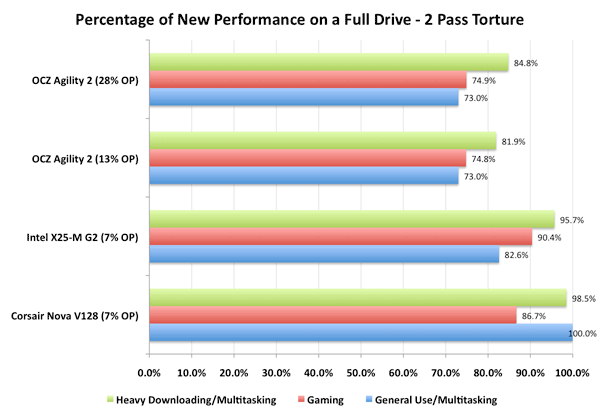

Once again, let’s look at the percentage of new performance from our very full, very dirty drive:

Now we’re getting somewhere. Intel’s controller actually improved in performance between the runs (at least in the heavy downloading/multitasking test), which is a testament to Intel’s architecture. The X25-M is an overachiever; it’s in a constant state of trying to improve itself.

The SandForce controller is being unfairly penalized here - most desktop workloads shouldn’t hit it with so much data that’s purely random in nature. But we’re trying to understand the worst case scenario. And there is a difference, just a very slight one. Only in the heavy downloading/multitasking workload did we see a difference between the two spare area capacities and it’s only about 3 percentage points.

30 Comments

StormyParis - Monday, May 3, 2010 - link

it seems SSD perf is the new dick size?

Belard - Tuesday, May 4, 2010 - link

No, it has nothing to do with "mine is bigger than yours"...

They are still somewhat expensive. But when you use one in a notebook or desktop, it makes your computer SO much faster. It's about SPEED.

Applications like Word or Photoshop are ready to go by the time your finger leaves the mouse button. Windows7 - fully configured (not just some clean / bare install) boots in about 10 seconds (7~14sec depending on the CPU/Mobo & software used)... compared to a 7200RPM drive in 20~55seconds.

And that is with today's X25-G2 drives using SATA 2.0. Imagine in about 2 years, $100 should get you 128GB that can READ upwards of 450+ MB/s.

Back in the OLD days, my old Amiga 1000 would boot the OS off a floppy in about 45 seconds, with the HD installed, closer to 10 seconds.

I'll admit that Win7's sleep mode works very well, with a wake up time on a HDD of about 3~5 seconds.

neoflux - Friday, June 11, 2010 - link

Wow, someone's an internet douche.

Maybe you need to look at the overall system benchmarks, like PCMark Vantage or the AnandTech Storage Bench, where your precious Intels are destroyed by the SF-based drives.

And even if you look at the random reads/writes, the Intels are again destroyed on the writes and the same speed for the reads. I'm of course looking at the aligned benchmarks (aligned read not in this article, but shown here: http://www.anandtech.com/bench/SSD/83 ), because I'm actually being objective and realistic about how SSDs are used rather than trying to justify my purchases/recommendations.

buggyfunbunny - Monday, May 3, 2010 - link

What I'd like to see added to the TestBench, for all drive types (not just SSD), is a Real World, highly (or fully) normalized relational database. Selects, inserts, updates, deletes. The main reason for SSDs will turn out to be such join intensive databases; massive file systems, awash in redundant data, will always out pace silicon storage. In Real World size, tens of gigabytes running transactions. The TPC suite would be nice, but AT may not want to spend the money to join. Any consistent test is fine, but should implement joins over flat-file structures.

Zan Lynx - Monday, May 3, 2010 - link

Big databases don't bother with SATA SSDs. They go straight to PCI-e direct-connected SSD, like Fusion-IO and others.

If you want fast, you have to get rid of the overhead associated with SATA. SATA protocol overhead is really a big waste of time.

FunBunny - Monday, May 3, 2010 - link

Of course they do. PCIe "drives" aren't drives, and are limited to the number of slots. This approach works OK if you're taking the Google way: one server one drive. That's not the point. One wants a centralized, controlled datastore. That's what BCNF databases are all about. (Massive flatfiles aren't databases, even if they're stored in a crippled engine like MySql.) Such databases talk to some sort of storage array; an SSD one running BCNF data will be faster than some kiddie koders java talking to files (kiddie koders don't know that what they're building is just like their grandfathers' COBOL messes; but that's another episode).

In any case, the point is to subject all drives to join intensive datastores, to see which ones do best. PCIe will likely be faster in pure I/O (but not necessarily in retrieving the joined data) than SSD or HDD, but that's OK; some databases can work that way, most won't. Last I checked, the Fusion (which, if you've looked, now expressly say their parts AREN'T SSD) parts are substantially more expensive than the "consumer" parts that AT has been looking at. That said, storage vendors have been using commodity HDD (suitably QA'd) for years. In due time, the same will be true for SSD array vending.

Zan Lynx - Monday, May 3, 2010 - link

It is solid state. It stores data and looks like a drive to the OS. That makes it a SSD by my definition.

I wonder why Fusion-IO wants to claim their devices aren't SSDs? My guess is that they just don't want people thinking their devices are the same as SATA SSDs.

jimhsu - Monday, May 3, 2010 - link

Hey Anand,

I'm not doubting your Intel performance figures, but I wonder why your benchmarks only show about a 20% performance decrease, when threads like this (http://forums.anandtech.com/showthread.php?t=20699...) show that it is possibly quite a bit more? (20% is just on the edge of human perception, and I can tell you that a completely full X25-M does really feel much slower than that). Is the decrease in performance different for read vs. write, sequential vs. random? Are you using the drive in an OS context (where the drive is constantly being hit with small read/writes while the benchmark is running)?

Anand Lal Shimpi - Monday, May 3, 2010 - link

20% is a pretty decent sized hit. Also note that it's not really a matter of how full your drive is, but how you fill the drive. If you look back at the SSD Relapse article I did you'll see that SSDs like to be written to in a nice sequential manner. A lot of small random writes all over the drive and you run into performance problems much faster. This is one reason I've been very interested in looking at how resilient the SF drives are, they seem to be much better than Intel at this point.

Take care,

Anand

FunBunnyBuggyBuggy - Tuesday, May 4, 2010 - link

(first: two issues; the login refuses to retain so I've had to create a new each time I comment which is a pain, and I wanted to re-read the Relapse to refresh my memory but it gets flagged as an Attack Site on FF 3.0.X)

-- If you look back at the SSD Relapse article I did you'll see that SSDs like to be written to in a nice sequential manner.

I'm still not convinced that this is strictly true. Controllers are wont to maximize performance and wear-leveling. The assumption is that what is a sequential operation from the point of view of application/OS is so on the "disc"; which is strictly true for a HDD, and measurements bear this out. For SSD, the reality is murkier. As you've pointed out, spare area is not a sequestered group of NAND cells, but NAND cells with a Flag; thus spare moves around, presumably in erase block size. A sequential write may not only appear in non-contiguous blocks, but also in non-pure blocks, that is, blocks with data unrelated to the sequential write request from the application/OS. Whichever approach maximizes the controller writer's notion of the trade off between performance requirement and wear level requirement will determine the physical write.