The Intel Core i3-12300 Review: Quad-Core Alder Lake Shines

by Gavin Bonshor on March 3, 2022 8:30 AM EST

Just over a month ago Intel pulled the trigger on the rest of its 12th generation "Alder Lake" Core desktop processors, adding no fewer than 22 new chips. This significantly fleshed out the Alder Lake family, adding in the mid-range and low-end chips that weren't part of Intel's original, high-end focused launch. Combined with the launch of the rest of the 600 series chipsets, this finally opened the door to building cheaper and lower-powered Alder Lake systems.

Diving right in, today we're taking a look at Intel's Core i3-12300 processor, the most powerful of the new i3s. Like the entire Alder Lake i3 series, the i3-12300 features four P-cores, and is aimed at the entry-level and budget desktop market. With prices being driven higher on many components and AMD's high-value offerings dominating the lower end of the market, it's time to see if Intel can compete in the budget desktop market and offer value in a segment that currently needs it.

Below is a list of our detailed Intel Alder Lake and Z690 coverage:

- The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

- Intel Architecture Day 2021: Alder Lake, Golden Cove, and Gracemont Detailed

- Intel Announces 12th Gen Core Alder Lake: 22 New Desktop-S CPUs, 8 New Laptop-H CPUs

- The Intel Z690 Motherboard Overview (DDR5): Over 50+ New Models

- The Intel Z690 Motherboard Overview (DDR4): Over 30+ New Models

As a quick recap, we've covered Alder Lake's dual architectural hybrid design in our Core i9-12900K review, including the differences between the P (performance) and E (efficiency) cores. The P-cores are based on Intel's high-performance Golden Cove architecture, providing traditional high single-threaded performance. Meanwhile the Gracemont-based E-cores, though lower performing on their own, are significantly smaller and draw much less power, allowing Intel to pack them in to benefit multi-threaded workloads.

Intel Core i3-12300 Processor: Less is Moore

Aside from the two top Core i5 models (i5-12600K and i5-12600KF), all of the chips below that level, including the Core i3, Pentium, and Celeron series, only feature Intel's Golden Cove P-cores. Intel's 12th generation Core i3 processors feature four such P-cores, with 12 MB of L3 cache, and all but one (i3-12100F) use Intel's Xe-LP architecture-based UHD 730 integrated graphics.

Intel's 12th generation Alder Lake desktop processors have been split into the following naming schemes and Performance (P) core and Efficiency (E) core configurations:

- Core i9: 8 Performance Cores + 8 Efficiency Cores

- Core i7: 8 Performance Cores + 4 Efficiency Cores

- Core i5: 6 Performance Cores + 4 Efficiency Cores/6P Only

- Core i3: 4 Performance Cores Only

- Pentium: 2 Performance Cores Only

- Celeron: 2 Performance Cores Only

The Intel Core i3-12300 is the top i3 SKU in the lineup and has a base frequency of 3.5 GHz (60 W), with a turbo frequency of 4.4 GHz (89 W). Other variants vary in core frequency, with different models focusing on lower-powered systems, including the Core i3-12300T, which has a base TDP of 35 W at 2.3 GHz, with turbo clock speeds reaching 4.2 GHz with a 69 W TDP.

Intel Core i3 Series (12th Gen Alder Lake)

| Processor | Cores (P+E) | P-Core Base (MHz) | P-Core Turbo (MHz) | L3 (MB) | IGP | Base (W) | Turbo (W) | Price ($1ku) |
|---|---|---|---|---|---|---|---|---|
| i3-12300 | 4+0 | 3500 | 4400 | 12 | 730 | 60 | 89 | $143 |
| i3-12300T | 4+0 | 2300 | 4200 | 12 | 730 | 35 | 69 | $143 |
| i3-12100 | 4+0 | 3300 | 4300 | 12 | 730 | 60 | 89 | $122 |
| i3-12100F | 4+0 | 3300 | 4300 | 12 | - | 58 | 89 | $97 |
| i3-12100T | 4+0 | 2200 | 4100 | 12 | 730 | 35 | 89 | $122 |

At the time of writing, Intel has announced five Core i3 processors. While the interpretation of TDP can be taken in different ways depending on the company and how it is measured, Intel has gone one step further by offering TDP figures at both the base and turbo frequencies. Three of these chips are standard non-K SKUs, while two carry the T moniker, which signifies that they have a base TDP of just 35 W, perfect for lower-powered systems.

Interestingly, only one of the Core i3 processors, the i3-12300T, has a turbo TDP of 69 W, while the rest have a rating of 89 W with turbo enabled, including the i3-12100T. The odd one out is the Core i3-12100F, which has a slightly lower base TDP of 58 W, likely as this is the only Core i3 not to include Intel's UHD 730 integrated graphics. It is also the cheapest, with a per 1k unit price of $97.
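As a quick sanity check on the observations above, the table can be encoded and queried directly. This is only an illustrative sketch: the `CoreI3` record and its field names are made up for this example, and the figures are simply those listed in the table.

```python
from typing import NamedTuple, Optional


class CoreI3(NamedTuple):
    """One row of the Core i3 table above (field names are illustrative)."""
    name: str
    base_mhz: int
    turbo_mhz: int
    igp: Optional[str]   # None means no integrated graphics
    base_w: int
    turbo_w: int
    price_usd: int       # per 1k unit price


I3_STACK = [
    CoreI3("i3-12300", 3500, 4400, "730", 60, 89, 143),
    CoreI3("i3-12300T", 2300, 4200, "730", 35, 69, 143),
    CoreI3("i3-12100", 3300, 4300, "730", 60, 89, 122),
    CoreI3("i3-12100F", 3300, 4300, None, 58, 89, 97),
    CoreI3("i3-12100T", 2200, 4100, "730", 35, 89, 122),
]

# The T-series parts are the ones with a 35 W base TDP.
t_series = [c.name for c in I3_STACK if c.base_w == 35]

# Only one chip is listed with a 69 W turbo TDP.
turbo_69 = [c.name for c in I3_STACK if c.turbo_w == 69]

# Only one chip ships without integrated graphics, and it is the cheapest.
no_igp = [c.name for c in I3_STACK if c.igp is None]
cheapest = min(I3_STACK, key=lambda c: c.price_usd)

for chip in I3_STACK:
    # Ratio of turbo TDP to base TDP, as a rough measure of turbo headroom.
    headroom = chip.turbo_w / chip.base_w
    print(f"{chip.name:10s} {chip.base_w} W base, {chip.turbo_w} W turbo ({headroom:.2f}x)")
```

The turbo-to-base ratio is not an Intel metric; it is just a convenient way to see how far each part is allowed to stretch beyond its base power figure.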

For this generation Intel has also refreshed its stock CPU coolers, the first time it has done so in quite a long time. None of the K-series processors include one, so aftermarket cooling is necessary for those chips to make use of Thermal Velocity Boost (TVB) and Intel's new 'infinite turbo.' This means that the processor under heavier workloads will try to use turbo as much as possible, which can mean better cooling is needed on the parts with higher P and E-core counts. In the case of the Core i3 series, the maximum TDP figure Intel provides is 89 W, so any conventional CPU cooler should be able to sustain turbo clock speeds for a more extended period of time.

The Core i3 series is shipped and bundled with Intel's new Laminar RM1 CPU cooler, which is similar in size to previous iterations of its stock cooler. Unlike the RH1, the RM1 doesn't feature RGB LED lighting and uses a traditional push-pin arrangement to mount into the socket. Intel hasn't stated which material it uses, e.g., copper or aluminum, or a combination of the two, but regardless of the materials used, for sub 100 W workloads these coolers should be more than ample for the Core i3 series.

The Budget CPU Market: Core i3-12300 versus AMD

Users have lots of choices available in terms of LGA1700 motherboards, including Z690, B660, H670, and H610, as well as support for either DDR5 or DDR4 memory. Users can pair up the Core i3-12300 with the more expensive DDR5 and Z690 for the absolute greatest performance, but the target audience for the Core i3 is users on a budget. This means that, from a cost perspective, users are more likely to build a system with one of the more affordable B660, H670, or H610 chipsets and pair that with DDR4 memory.

In terms of the competition from AMD, the red team is effectively absent from the sub-$200 quad core market for the moment. AMD does have a more-or-less direct competitor to the Alder Lake i3s in the Ryzen 3 5300G. However, as that chip is OEM-only (and terribly expensive on the gray market), as far as the retail market and individual system builders are concerned, it's all but unavailable. This means that, at least amidst the ongoing chip crunch, Intel has the run of the market below $200. That said, we are including it in our graphs for completeness' sake, and to outline where AMD would be if they could provide their quad core chips in greater volumes.

The next best competitor for the i3-12300 then is arguably the Ryzen 5 5600X, which makes for an ambitious and admittedly somewhat lopsided comparison. The AMD Ryzen 5 5600X is based on its Zen 3 architecture and has six cores versus the four of the i3-12300, while the 5600X also benefits from four more threads (12). Intel's Alder Lake architecture also benefits from PCIe 5.0, but right now there aren't any (consumer) devices that can utilize the extra bandwidth available. The AMD Ryzen 5000 series uses PCIe 4.0 on X570, with PCIe 4.0/3.0 on B550 and below.

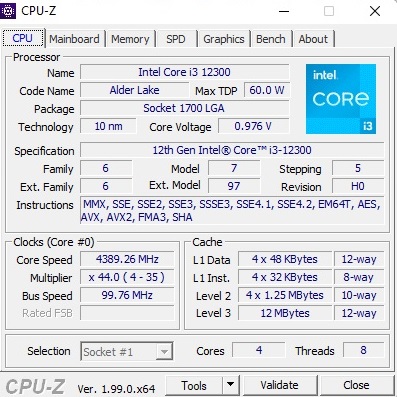

Intel Core i3-12300 CPU-Z screenshot, 4C/8T

Aside from architectural, core count, and thread count differences between the Intel Core i3-12300 and the AMD Ryzen 5 5600X, the next biggest difference is the price. The Ryzen 5 5600X has an MSRP of $299, although it can be found at Amazon at the time of writing for a recently trimmed price of $229. The Intel Core i3-12300 is much cheaper in contrast, with a per 1k unit pricing of $143. With the first shipments just now hitting the market, we expect the retail MSRP to be around the $160-170 mark.

Other processors from AMD that could be considered competitors are the 5000 series Cezanne APUs. This includes the Ryzen 5 5600G, which is currently $219 at Amazon. Despite being more focused on entry-level gaming with integrated Vega 7 graphics, it consists of six cores with a base frequency at 3.9 GHz and a turbo frequency of 4.4 GHz; the 5600G also benefits from twelve threads.

Finally, boxing things in from the other direction, Intel also has the Core i5-12600K processor with six P-cores and four E-cores, with a price tag of $280 at Newegg. We will be reviewing the Core i5 a bit later this month, and it is currently on our testbed undergoing our CPU test suite at the time of writing.
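To put the prices quoted in this section side by side, a rough price-per-core figure can be computed. This is a back-of-the-envelope sketch rather than a value metric we use in our testing: it mixes Intel's 1k unit price for the i3-12300 with street prices for the other chips, and it counts the i5-12600K's P-cores and E-cores equally, which flatters that chip. The `chips` list and `per_core` name are invented for this example.

```python
# (name, price in USD as quoted in the text, total core count)
chips = [
    ("Core i3-12300", 143, 4),    # per 1k unit price, 4 P-cores
    ("Ryzen 5 5600X", 229, 6),    # Amazon price at the time of writing
    ("Ryzen 5 5600G", 219, 6),    # Amazon price at the time of writing
    ("Core i5-12600K", 280, 10),  # Newegg price, 6 P-cores + 4 E-cores
]

# Price divided by core count; P-cores and E-cores are treated alike here,
# which is a simplifying assumption and not a performance claim.
per_core = {name: price / cores for name, price, cores in chips}

for name, dollars in sorted(per_core.items(), key=lambda item: item[1]):
    print(f"{name:15s} ${dollars:6.2f} per core")
```

Price per core says nothing about per-core performance, which is exactly what the benchmarks are for; it only frames how much silicon each price point buys.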

Test Bed and Setup

Although there were some initial problems with the Intel Thread Director when using Windows 10 at the launch of Alder Lake, the P-core only Core i3 stack doesn't need to worry about this. For our testing, we are running the Core i3-12300 with DDR5 memory at JEDEC specifications for Alder Lake (DDR5-4800 CL40). We are also using Windows 11 from now on for our CPU reviews.

For our test bed, we are using the following:

Alder Lake Test System (DDR5)

| Component | Details |
|---|---|
| CPU | Core i3-12300 ($143), 4+0 Cores, 8 Threads, 60 W Base, 89 W Turbo |
| Motherboard | MSI Z690 Carbon WI-FI |
| Memory | SK Hynix 2x32 GB DDR5-4800 CL40 |
| Cooling | MSI Coreliquid 360mm AIO |
| Storage | Crucial MX300 1TB |
| Power Supply | Corsair HX850 |
| GPUs | NVIDIA RTX 2080 Ti, Driver 496.49 |
| Operating Systems | Windows 11 Up to Date |

All other chips for comparison were run as tests listed in our benchmark database, Bench, on Windows 10.

140 Comments


CiccioB - Friday, March 4, 2022

You may be surprised by how many applications are still using a single thread or, even if multi-threaded, are bottlenecked on one thread.

All office suites, for example, use just a main thread. Use a slow 32-thread-capable CPU and you'll see how slow Word or PowerPoint can become. Excel is somewhat more threaded, but surely not to the level of using 32 cores, even for complex tables.

Compilers are not multi-thread. They just spawn many instances to compile more files in parallel, and if you have many, many cores it just ends up being I/O limited. At the end of the compiling process, however, you'll have the linker, which is a single-threaded task. Run it on a slow 64-core CPU, and you'll wait much longer for the final binary than on a fast Celeron CPU.

All graphics retouching applications are mono thread. What is multi-threaded are just some of the effects you can apply. But the interface and the general data management is on a single task. That's why you can have Photoshop layer management so slow even on a Threadripper.

Printing app and format converters are monothread. CADs are also.

And browsers as well, though they mask it as much as possible. To my surprise, I have found that JavaScript is run on a single thread for all opened windows: if I encounter some problems on a heavy JavaScript page, other pages are slowed down as well despite having spare cores.

At the end, there are many, many tasks that cannot be parallelized. Single core performance can help much more than having a myriad of slower cores.

Yet there are some (and only some) applications that take advantage of a swarm of small cores, like 3D renderers, video converters and... well, that's it. Unless you count scientific simulations, but I doubt those are interesting for a consumer oriented market.

BTW, video conversion can be done easily and more efficiently using HW converters like those present in GPUs, so you are left with 3D renderers to be able to saturate whatever number of cores you have.
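The trade-off argued over in this thread, a few fast cores against many slow ones, is commonly modeled with Amdahl's law, which none of the commenters name explicitly. The sketch below is only a rough illustration under made-up assumptions: the two hypothetical chips, their relative per-core speeds, and the parallel fractions are invented for the example and are not measurements.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Speedup over one core when only part of the work can run in parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_throughput(parallel_fraction: float, cores: int,
                        per_core_speed: float) -> float:
    """Amdahl speedup scaled by how fast each core is (1.0 = reference core)."""
    return per_core_speed * amdahl_speedup(parallel_fraction, cores)


# Hypothetical chips: 4 fast cores versus 32 cores at half the per-core speed.
FEW_FAST = {"cores": 4, "per_core_speed": 1.0}
MANY_SLOW = {"cores": 32, "per_core_speed": 0.5}

for p in (0.0, 0.5, 0.9, 0.99):
    fast = relative_throughput(p, **FEW_FAST)
    slow = relative_throughput(p, **MANY_SLOW)
    winner = "4 fast cores" if fast > slow else "32 slow cores"
    print(f"parallel fraction {p:4.2f}: fast={fast:5.2f} slow={slow:5.2f} -> {winner}")
```

Under these assumed numbers the fast quad-core wins whenever half or more of the work is serial, and the many-core chip only pulls ahead once the workload is overwhelmingly parallel, which is consistent with both sides of the argument: it depends heavily on the workload.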

mode_13h - Saturday, March 5, 2022

> Compilers are not multi-thread.

There's been some work in this area, but it's generally a lower priority due to the file-level concurrency you noted.

> if you have many, many cores it just ends up being I/O limited.

I've not seen this, but I also don't have anything like a 64-core CPU. Even on a 2x 4-core 3.4 GHz Westmere server with a 4-disk RAID-5, I could do a 16-way build and all the cores would stay pegged. You just need enough RAM for files to stay in cache while they're still needed, and buffer enough of the writes.

> At the end of the compiling process, however, you'll have the linker, which is a single-threaded task.

There's a new, multi-threaded linker on the block. It's called "mold", which I guess is a play on Google's "gold" linker. For those who don't know, the traditional executable name for a UNIX linker is ld.

> At the end, there are many, many tasks that cannot be parallelized.

There are more that could. They just aren't because... reasons. There are still software & hardware improvements that could enable a lot more multi-threading. CPUs are now starting to get so many cores that I think we'll probably see this becoming an area of increasing focus.

CiccioB - Saturday, March 5, 2022

You may be aware that there are lots of compiling tool chains that are not "google based" and are not based on experimental code either.

"You just need enough RAM for files to stay in cache while they're still needed, and buffer enough of the writes."

Try compiling something that is not "Hello world" and you'll see that there's no such way to keep the files in RAM unless you have put your entire project in a RAM disk.

"There are more that could. They just aren't because... reasons."

Yes, the fact that making them multi-threaded costs a lot of work for a marginal benefit.

Most algorithms ARE NOT PARALLELIZABLE; they run as a contiguous stream of code where the following data is the result of the previous instruction.

Parallelizable algorithms are a minority, and most of them require really lots of work to perform better than a mono-threaded one.

You can easily see this in the fact that multi-core CPUs in the consumer market have existed for more than 15 years and still only a minor number of applications, mostly renderers and video transcoders, do really take advantage of many cores. Others do not, and mostly like single-threaded performance (either by improved IPC or faster clock).

mode_13h - Tuesday, March 8, 2022

> Try compiling something that is not "Hello world" and you'll see

My current project is about 2 million lines of code. When I build on a 6-core workstation with SATA SSD, the entire build is CPU-bound. When I build on a 8-core server with a HDD RAID, the build is probably > 90% CPU-bound.

As for the toolchain, we're using vanilla gcc and ld. Oh and ccache, if you know what that is. It *should* make the build even more I/O bound, but I've not seen evidence of that.

I get that nobody likes to be contradicted, but you could try fact-checking yourself, instead of adopting a patronizing attitude. I've been doing commercial software development for multiple decades. About 15 years ago, I even experimented with distributed compilation and still found it to be mostly compute-bound.

> You can easily see this in the fact that multi-core CPUs in the consumer market have existed for more than 15 years and still only a minor number of applications, mostly renderers and video transcoders, do really take advantage of many cores.

Years ago, I saw an article on this site analyzing web browser performance and revealing they're quite heavily multi-threaded. I'd include a link, but the subject isn't addressed in their 2020 browser benchmark article and I'm not having great luck with the search engine.

Anyway, what I think you're missing is that phones have so many cores. That's a bigger motivation for multi-threading, because it's easier to increase efficient performance by adding cores than any other way.

Oh, and don't forget games. Most games are pretty well-threaded.

GeoffreyA - Tuesday, March 8, 2022

"analyzing web browser performance and revealing they're quite heavily multi-threaded"

I think it was round about the IE9 era, which is 2011, that Internet Explorer, at least, started to exploit multi-threading. I still remember what a leap it was upgrading from IE8, and that was on a mere Core 2 Duo laptop.

GeoffreyA - Tuesday, March 8, 2022

As for compilers being heavy on CPU, amateur commentary on my part, but I've noticed the newer ones seem to be doing a whole lot more---obviously in line with the growing language specification---and take a surprising amount of time to compile. Till recently, I was actually still using VC++ 6.0 from 1998 (yes, I know, I'm crazy), and it used to slice through my small project in no time. Going to VS2019, I was stunned how much longer it took for the exact same thing. Thankfully, turning on MT compilation, which I believe just duplicates compiler instances, caused it to cut through the project like butter again.

mode_13h - Wednesday, March 9, 2022

Well, presumably you compiled using newer versions of the standard library and other runtimes, which use newer and more sophisticated language features.

Also, the optimizers are now much more sophisticated. And compilers can do much more static analysis, to possibly find bugs in your code. All of that involves much more work!

GeoffreyA - Wednesday, March 9, 2022

On migration, it stepped up the project to C++14 as the language standard. And over the years, MSVC has added a great deal, particularly features that have to do with security. Optimisation, too, seems much more advanced. As a crude indicator, the compiler backend, C2.DLL, weighs in at 720 KB in VC6. In VS2022, round about 6.4-7.8 MB.

mode_13h - Thursday, March 10, 2022

So, I trust you've found cppreference.com? Great site, though it has occasional holes and the very rare error.

Also worth a look is the CppCoreGuidelines on isocpp's github. I agree with quite a lot of it. Even when I don't, I find it's usually worth understanding their perspective.

Finally, here you'll find some fantastic C++ infographics:

https://hackingcpp.com/cpp/cheat_sheets.html

Lastly, did you hear that Google has opened up GSoC to non-students? If you fancy working on an open source project, getting mentored, and getting paid for it, have a look!

China's Institute of Software Chinese Academy of Sciences also ran one, last year. Presumably, they'll do it again, this coming summer. It's open to all nationalities, though the 2021 iteration was limited to university students. Maybe they'll follow Google and open it up to non-students, as well.

https://summer.iscas.ac.cn/#/org/projectlist?lang=...

GeoffreyA - Thursday, March 10, 2022

I doubt I'll participate in any of those programs (the lazy bone in me talking), but many, many thanks for pointing out those programmes, as well as the references! Also, last year you directed me to Visual Studio Community Edition, and it turned out to be fantastic, with no real limitations. I am grateful. It's been a big step forward.

That cppreference is excellent; for I looked at it when I was trying to find a lock that would replace a Win32 CRITICAL_SECTION in a singleton, and the one found, I think it was std::mutex, just dropped in and worked. But I left the old version in because there's other Win32 code in that module, and using std::mutex meant no more compiling on the older VS, which still works on the project, surprisingly.

Again, much obliged for the leads and references.