Imagination Announces A-Series GPU Architecture: "Most Important Launch in 15 Years"

by Andrei Frumusanu on December 2, 2019 8:00 PM EST

Fixed Function Changes & Scalability

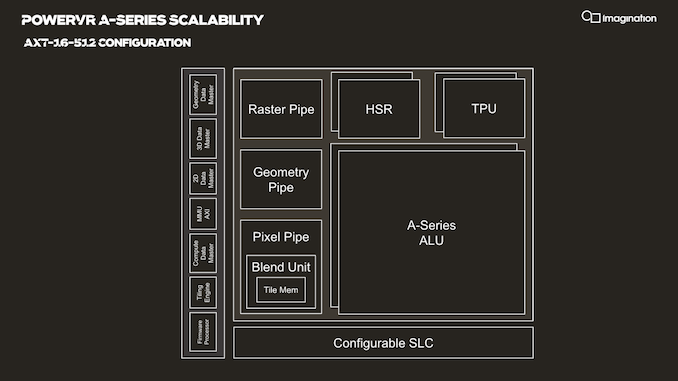

Zooming out from the ALU, we’re seeing a higher-level block configuration that’s very similar to past Imagination PowerVR GPUs. The ALUs themselves are still housed in the larger cluster block that’s called the USC, or unified shading cluster. The USC along with various other fixed function blocks is in turn housed in an SPU, or shader processing unit, effectively the scaling block regularly referred to as a “core”.

Each SPU houses two USCs in the current IP configuration, meaning we have two clusters of 128-wide ALUs. This is valid for all AXT parts, but we imagine the AXM-8-256 unit just has a single USC. The AXT-16-512 is the smallest configuration with a fully populated SPU.

Each SPU has its own geometry pipeline, and up to two texture processing units. The A-Series carries over the per-TPU throughput design from the Furian architecture, meaning the block is able to sample 8 bilinear filtered texels per clock. The A-Series doubles this up now per SPU and the AXT models feature two TPUs, bringing up the total texture fillrate to 16 samples per clock per SPU.

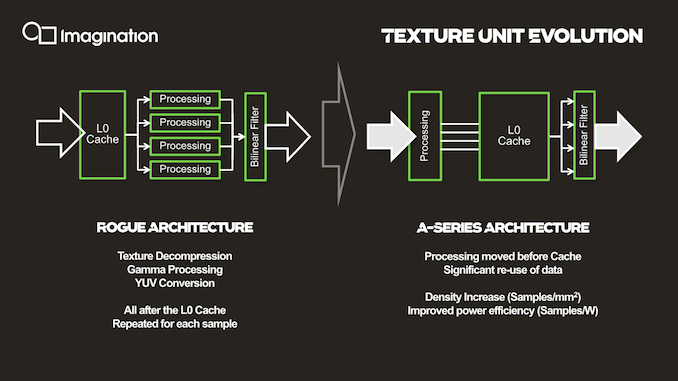

The microarchitecture of the texture units has also evolved beyond just their throughput. A bigger improvement that Imagination is disclosing is the handling and location of the L0 cache. The L0 cache has been relocated within the texturing workflow to between the processing and filtering stages, allowing the L0 cache to hold the outputs of the processing stage, rather than the inputs. This allows for what Imagination calls significant data reuse, as texels don't need to be re-processed each time they're needed. And given how many times a texel may need to be sampled during anisotropic filtering, it's easy to see why. With the benefit of hindsight, this seems like an obvious improvement to make, but the company says that the design choices of the legacy configuration made sense at the time of conception and the workloads back then.

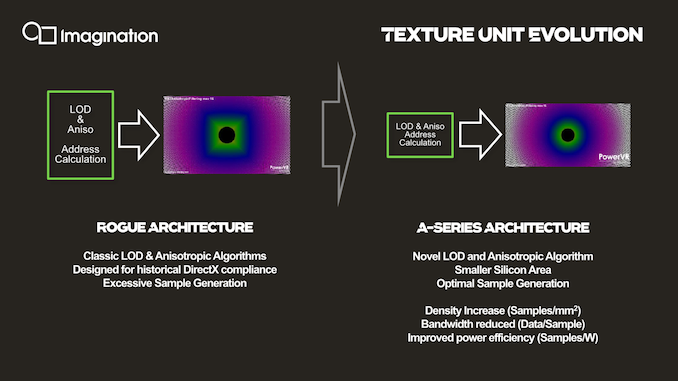

Imagination also talks about how the anisotropic filtering quality of the new architecture is much improved. In a set of comparison screenshots using a traditional texture tunnel, Imagination is showcasing that its new anisotropic filtering algorithms are far closer to being angle-independent – the ideal outcome for aniso filtering – as opposed to the angle-dependent filtering with rather hard 90 degree angles on Rogue. Interestingly, Imagination is claiming that they've achieved this improved angle-independence even with fewer samples, all of which serves to improve their efficiency and hardware density. With all of that said, since the comparison is against the Rogue architecture, I’m not entirely sure if it’s an actual novelty of the A-Series or rather a rehash of the anisotropic improvements that already got introduced in the 9XM series last year.

Another change in the fixed function units is found in the pixel pipeline, although superficially the throughput here doesn’t change compared to what we’ve seen on Furian. There are still up to two PBEs per SPU, each with a throughput of up to 4 pixels per clock, for a total of 8 pixels per clock per SPU. There’s actually more internal distinction of the units though – at the front and back of the core it’s still able to handle 16 pixels per clock and also blend at 16 pixels per clock, although it’s limited on write-out to 8 PPC in 1:1 pixel:texture situations.

Imagination’s doubling of the texture throughput whilst maintaining a steady pixel throughput means that the company is generally matching the decreasing pixel:texel fillrate ratio we’ve also seen in other architectures, such as those from Qualcomm as well as the new Mali-G77. The A-Series now falls in at a 1:2 pixel:texel ratio.
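To make the arithmetic behind that ratio explicit, here is a minimal Python sketch using only the per-SPU figures quoted above. The constant and function names are my own for illustration and are not Imagination terminology.

```python
from fractions import Fraction

# Figures quoted in the text for a fully populated A-Series (AXT) SPU.
TEXELS_PER_TPU = 8   # bilinear filtered texels per clock per TPU
TPUS_PER_SPU = 2     # AXT parts carry two TPUs per SPU
PIXELS_PER_SPU = 8   # pixel write-out per clock per SPU


def spu_fillrates():
    """Return (pixels, texels) per clock for one SPU."""
    texels = TEXELS_PER_TPU * TPUS_PER_SPU
    return PIXELS_PER_SPU, texels


def pixel_texel_ratio(pixels, texels):
    """Reduce pixels:texels to lowest terms."""
    ratio = Fraction(pixels, texels)
    return ratio.numerator, ratio.denominator


pixels, texels = spu_fillrates()
print(f"{pixels} pixels/clock, {texels} texels/clock per SPU")
print("pixel:texel = %d:%d" % pixel_texel_ratio(pixels, texels))
```

Plugging in the Furian figures instead (one TPU at 8 texels per clock against 8 pixels per clock) gives 1:1, which is the shift the paragraph above describes.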

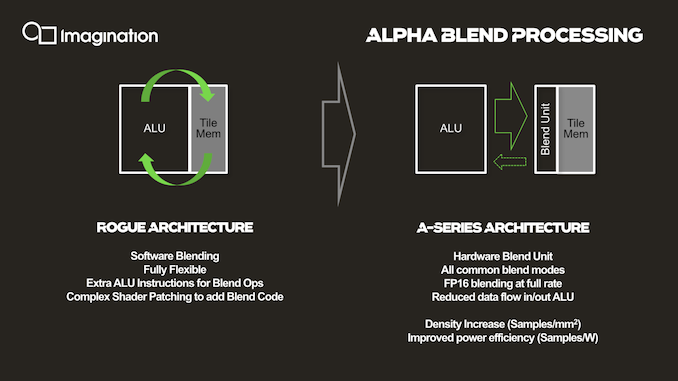

Alpha blending is now done on a dedicated hardware unit in the pixel pipeline instead of being computed by the ALUs. The change results in higher performance through the use of fixed function hardware, allowing for things such as FP16 blending at full rate, and frees up the ALUs themselves so that they can use their computation resources on other work. Density is improved, but more importantly it’s also improving power efficiency as it’s avoiding using more expensive and less task-specific hardware for the same tasks.

It’s to be noted that for the AXM series, the company uses customized fixed function units that are more area efficient, rather than only scaling the number of units.

Scaling Things Up

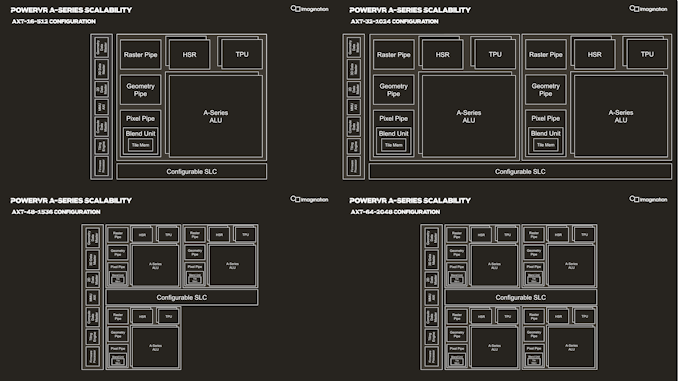

With the SPU being the coarsest scaling block of the architecture, Imagination is building larger GPU configurations by simply adding in more SPUs. Essentially this is the “core” scaling of Imagination's GPU designs.

Scaling of SPUs across the AXT line happens in multiples of 16-512 across the range, both in terms of their product names as well as their texture and FLOPs/clock capabilities, which makes it simple to grasp a configuration’s capabilities at a glance. As mentioned in the introduction, Imagination views the AXT-32-1024 as being the most popular choice for vendors targeting the high-end premium smartphone SoC segment, with some vendors possibly opting to go with the AXT-48-1536 for a larger area and lower clock speeds for more efficiency. The AXT-64-2048 would be a really big GPU which the company could build if there’s customer interest.
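The naming scheme can be sketched in a few lines of Python. This is an illustration only: the `AxtConfig` helper is hypothetical, and it assumes that each step in the product range corresponds to one additional SPU and that pixel throughput scales linearly with SPU count (8 pixels per clock per SPU, as described earlier).

```python
from dataclasses import dataclass

# Per-SPU figures quoted in the article for AXT parts.
TEXELS_PER_SPU = 16   # bilinear texels per clock
FLOPS_PER_SPU = 512   # FP32 FLOPs per clock
PIXELS_PER_SPU = 8    # pixels per clock


@dataclass(frozen=True)
class AxtConfig:
    """Hypothetical helper describing an AXT part built from `spus` SPUs."""
    spus: int

    @property
    def texels_per_clock(self) -> int:
        return self.spus * TEXELS_PER_SPU

    @property
    def flops_per_clock(self) -> int:
        return self.spus * FLOPS_PER_SPU

    @property
    def pixels_per_clock(self) -> int:
        # Assumption: pixel throughput scales linearly with SPU count.
        return self.spus * PIXELS_PER_SPU

    @property
    def name(self) -> str:
        # Product names encode texels/clock and FLOPs/clock.
        return f"AXT-{self.texels_per_clock}-{self.flops_per_clock}"


for spus in range(1, 5):
    cfg = AxtConfig(spus)
    print(f"{cfg.name}: {spus} SPU(s), {cfg.pixels_per_clock} pixels/clock")
```

Under those assumptions, one to four SPUs reproduce the four AXT names mentioned in the text.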

| PowerVR GPU Comparison | AXT-16-512 | GT9524 | GT8525 | GT7200 Plus |
|---|---|---|---|---|
| Core Configuration (SPU = Shader Processing Unit, the "GPU Core"; USC = Unified Shading Cluster, the ALU cluster) | 1 SPU, 2 USCs | 1 SPU, 2 USCs | 1 SPU, 2 USCs | 1 SPU, 2 USCs |
| FP32 FLOPS/Clock (MADD = 2 FLOPs, MUL = 1 FLOP) | 512 (2x (128x MADD)) | 240 (2x (40x MADD+MUL)) | 192 (2x (32x MADD+MUL)) | 128 (2x (16x MADD+MADD)) |
| FP16 Ratio | 2:1 (Vec2) | 2:1 (Vec2) | 2:1 (Vec2) | 2:1 (Vec2) |
| Pixels / Clock | 8 | 8 | 8 | 4 |
| Texels / Clock | 16 | 8 | 8 | 4 |
| Architecture | A-Series (Albiorix) | Series-9XTP (Furian) | Series-8XT (Furian) | Series-7XT (Rogue) |

Comparing the smallest AXT-16-512 configuration with a single SPU and two USCs against similar configurations across the generations, we indeed see that the new A-Series does bring large architectural changes.

Imagination is marketing a 4x increase in ALU throughput, but again that’s against the 9XM GPUs, which are equal in ALU configuration to the Series-7XT in the table. That’s not to say the increase is any less impressive when comparing to the previous 9XTP family; a rise from 240 FLOPs/clock to 512 is still a 2.13x increase.
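For anyone wanting to check the per-clock figures, the following Python sketch rebuilds them from the ALU configurations listed in the comparison table (USCs × lanes per USC × FLOPs per lane). The dictionary layout and function name are my own; only the numbers come from the table.

```python
MADD = 2  # a multiply-add counts as 2 FLOPs
MUL = 1   # a multiply counts as 1 FLOP

# name -> (USCs, ALU lanes per USC, operations issued per lane per clock)
CONFIGS = {
    "AXT-16-512": (2, 128, [MADD]),
    "GT9524": (2, 40, [MADD, MUL]),
    "GT8525": (2, 32, [MADD, MUL]),
    "GT7200 Plus": (2, 16, [MADD, MADD]),
}


def flops_per_clock(uscs, lanes, ops):
    """FP32 FLOPs per clock for one configuration."""
    return uscs * lanes * sum(ops)


results = {name: flops_per_clock(*cfg) for name, cfg in CONFIGS.items()}
for name, flops in results.items():
    print(f"{name}: {flops} FLOPs/clock")

axt = results["AXT-16-512"]
print(f"vs GT9524: {axt / results['GT9524']:.2f}x")
print(f"vs GT7200 Plus: {axt / results['GT7200 Plus']:.2f}x")
```

The last two lines correspond to the 2.13x figure against the 9XTP and the marketed 4x against a 7XT-class ALU configuration.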

I think what’s actually more important to note here is that the architecture has very big building blocks. At 512 FLOPs and 8 pixels per clock, an AXT SPU is significantly bigger than an Arm Mali-G77 core, which comes in at “only” 64 FLOPs/clock and 2 pixels per clock. That means an AXT core is roughly equivalent to eight G77 cores in computational power and four G77s in fillrate throughput, which is a massive difference in terms of design scaling. Naturally, in terms of effective density and power efficiency, a few big cores will always win over a flock of small cores, as demonstrated by Qualcomm and Apple’s recent 2- and 4-core designs.
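The building-block comparison is a simple per-clock division, sketched here in Python with the figures quoted above. The names are illustrative, and the comparison deliberately ignores clock speeds, which the article does not give for either design.

```python
# Per-clock figures quoted in the text; clock frequency is not considered.
AXT_SPU = {"flops": 512, "pixels": 8}
G77_CORE = {"flops": 64, "pixels": 2}


def cores_to_match(big, small):
    """How many `small` cores equal one `big` core, per metric."""
    return {metric: big[metric] / small[metric] for metric in big}


equiv = cores_to_match(AXT_SPU, G77_CORE)
print(f"Compute: 1 AXT SPU ~ {equiv['flops']:.0f} G77 cores")
print(f"Fillrate: 1 AXT SPU ~ {equiv['pixels']:.0f} G77 cores")
```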

143 Comments

ET - Tuesday, December 3, 2019 - link

> I fear that this will be a very niche product unless it absolutely dominates all other solutions.

At least from the description in the article, it seems to dominate Mali. Even next gen Mali. I don't expect Apple or Qualcomm to move. Samsung I think would be flexible. It's impossible to say how well RDNA fits all price points or when it will arrive, so ImgTech could find a place there. And with the A series supposedly much better than Mali in performance per silicon, I don't think that HiSilicon using it is totally out of the question.

Spunjji - Tuesday, December 3, 2019 - link

No reason HiSilicon can't change their minds if there's a compelling reason. PPA advantages directly translate into cost savings, which is very compelling indeed.

MediaTek are probably going to be the biggest customer, though.

mode_13h - Wednesday, December 4, 2019 - link

Chinese want nothing to do with ARM, any more. Got it?

So, anyone who's using Mali, or any Chinese phone makers who are using Qualcomm are potential customers.

vladx - Wednesday, December 4, 2019 - link

If you bothered to read the article, you would've found the answer but I guess Americans can't be bothered to read.

s.yu - Wednesday, December 4, 2019 - link

"Americans can't be bothered to read"

Wow, calling others haters, and look at you.

Etain05 - Tuesday, December 3, 2019 - link

I know that they don’t actually compete, since Apple will never offer its design for licensing, but I still think it’s interesting to compare them.

Let’s take the numbers from the Huawei Mate 30 Pro review and compare making some assumptions.

Andrei says: “The comparison implementation here would be an AXT-16-512 implementation running at slightly lower than nominal clock and voltage (in order to match the performance).”

Let’s assume the AXT-16-512 is underclocked by 10% to get to the same performance as the Exynos 9820 and Snapdragon 855. Let’s also assume that an AXT-32-1024 is exactly double the performance of the AXT-16-512.

So, a nominally clocked AXT-16-512 would have 110% the performance of the Snapdragon 855 and Exynos 9820. Double that, and you get 220% the performance, for the AXT-32-1024.

Looking at the Huawei review, here are the numbers:

GFXBench Aztec Ruins High

Exynos 9820 and Snapdragon 855: ~16fps —> AXT-32-1024: 16fps + 120% = 35,2fps

Apple A13: 34fps

GFXBench Aztec Ruins Normal

Exynos 9820 and Snapdragon 855: ~40fps —> AXT-32-1024: 40fps + 120% = 88fps

Apple A13: 91fps

GFXBench Manhattan 3.1

Exynos 9820 and Snapdragon 855: ~69,5fps —> AXT-32-1024: 69,5fps + 120% = 153fps

Apple A13: 123,5fps

GFXBench T-Rex

Exynos 9820 and Snapdragon 855: ~167fps —> AXT-32-1024: 167fps + 120% = 367fps

Apple A13: 329fps

It seems that at least on performance (with generous assumptions), if the new architecture fulfils all promises, it would be competitive, even slightly better than the Apple A13. The problem is that it won’t compete with the A13, but the A14...

How did we get to Apple dominating GPUs too, so fast?

drexnx - Tuesday, December 3, 2019 - link

they're totally unafraid to spend as much die space as they need to get their performance scaling. look at a history of Ax die sizes and you'll see they're all over the place

Spunjji - Tuesday, December 3, 2019 - link

Agreed. It's their vertical integration at work - they're the only company prepared to spend that much die area on performance because they're the only company besides Samsung that can guarantee to sell every chip they make in a high-end, high-margin device.

Andrei Frumusanu - Tuesday, December 3, 2019 - link

Apple's GPUs are the second smallest in the space - only Qualcomm uses less die area.

vladx - Wednesday, December 4, 2019 - link

When you extort your customers like Apple does, you can afford to design more expensive SoCs while still keeping huge profits.

Apple is the biggest example of what a toxic system capitalism can become.