Imagination Announces A-Series GPU Architecture: "Most Important Launch in 15 Years"

by Andrei Frumusanu on December 2, 2019 8:00 PM EST

HyperLane Technology

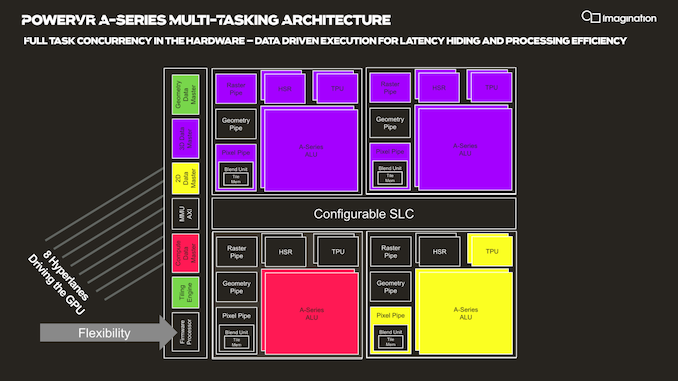

Another new addition to the A-Series GPU is Imagination's “HyperLane” technology, which promises to vastly expand the flexibility of the architecture in terms of multi-tasking as well as security. Imagination GPUs have had virtualization abilities for some time now, and this has given them an advantage in focus areas such as automotive designs.

The new HyperLane technology is said to be an extension to virtualization, going beyond it in terms of separation of tasks executed by a single GPU.

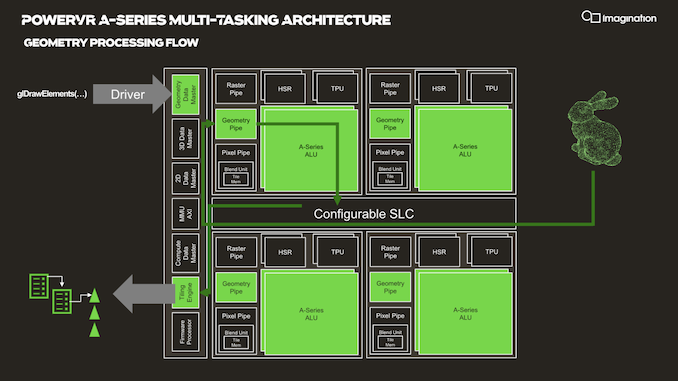

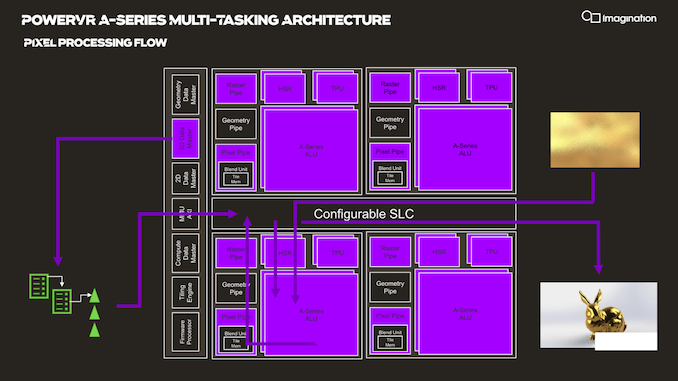

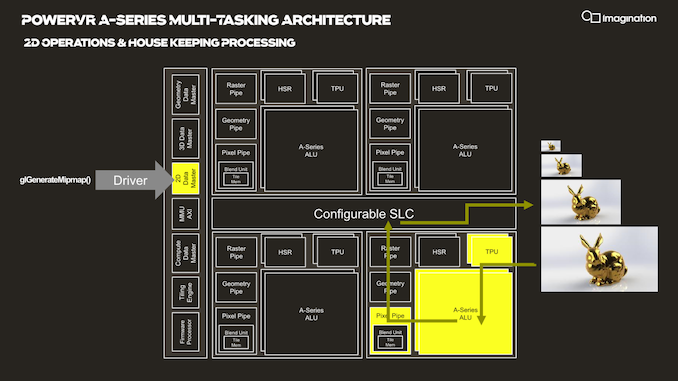

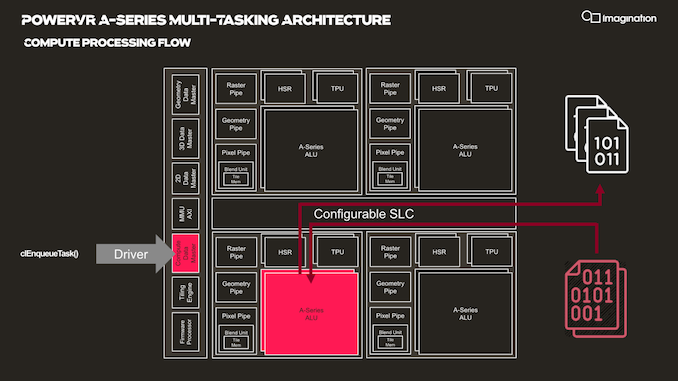

In your usual rendering flows, there are different kinds of “master” controllers, each handling the dispatching of workloads to the GPU: geometry is handled by the geometry data master, pixel processing and shading by the 3D data master, 2D operations by the 2D data master, and compute workloads by, you guessed it, the compute data master.

In each of these processing flows various blocks of the GPU are active for a given task, while other blocks remain idle.

HyperLane technology is said to enable full task concurrency on the GPU hardware, with multiple data masters able to be active simultaneously and executing work dynamically across the GPU’s hardware resources. In essence, the whole GPU becomes multi-tasking capable, receiving different task submissions from up to 8 sources (hence 8 HyperLanes).

The new feature sounded to me like a hardware-based scheduler for task submissions, although when I brought up this description the Imagination spokespeople were rather dismissive of the simplification, saying that HyperLanes go far deeper into the hardware architecture, with, for example, each HyperLane being configurable with its own virtual memory space (or sharing arbitrary memory spaces across HyperLanes).

Splitting GPU resources can happen on a block level concurrently with other tasks, or the resources can be shared in the time domain with time-slices between HyperLanes. Priority can be given to HyperLanes, such as prioritizing graphics over a possible background AI task that uses the remaining free resources.
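To make the priority idea concrete, here is a minimal toy model of how a fixed pool of execution resources could be divided between prioritized lanes. It is only an illustration of the concept described above and not Imagination's mechanism: the `Lane` type, the `priority` and `demand` fields, the unit counts and the `allocate` function are all hypothetical names invented for this sketch. Only the limit of 8 lanes comes from the article.

```python
from dataclasses import dataclass

MAX_LANES = 8  # the article states up to 8 HyperLanes


@dataclass
class Lane:
    name: str
    priority: int  # hypothetical: lower number means more important
    demand: int    # hypothetical: execution units requested this time-slice


def allocate(lanes, total_units):
    """Grant each lane its demand in priority order until the pool is empty."""
    if len(lanes) > MAX_LANES:
        raise ValueError("more lanes than the hardware exposes")
    remaining = total_units
    grants = {}
    for lane in sorted(lanes, key=lambda l: l.priority):
        granted = min(lane.demand, remaining)
        grants[lane.name] = granted
        remaining -= granted
    return grants


# Graphics outranks a background AI task, which gets whatever is left over.
lanes = [
    Lane("background_ai", priority=1, demand=12),
    Lane("graphics", priority=0, demand=10),
]
grants = allocate(lanes, total_units=16)
print(grants)
```

In this toy run the graphics lane receives everything it asked for and the background AI lane only gets the leftover units, which mirrors the prioritization example above.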

The security advantages of such a technology also seem advanced, with the company citing use-cases such as isolation for protected content and rights management.

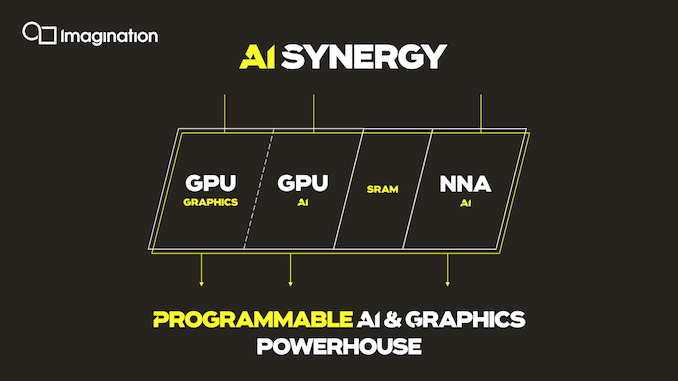

An interesting application of the technology is the synergy it allows between an A-Series GPU and the company’s in-house neural network accelerator IP. It would be able to share AI workloads between the two IP blocks, with the GPU for example handling the more programmable layers of a model while still taking advantage of the NNA’s efficiency for the fixed function fully connected layer processing.
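As a rough illustration of that kind of split, the sketch below assigns the layers of a model to either the GPU or the NNA and groups consecutive layers bound for the same block into segments. This is an assumption-laden toy: the layer names, the `NNA_SUPPORTED` set and the `assign` and `partition` helpers are hypothetical, and the only rule taken from the article is that fully connected layers go to the NNA while more programmable layers stay on the GPU.

```python
from itertools import groupby

# Hypothetical: layer kinds this sketch sends to the fixed-function NNA.
NNA_SUPPORTED = {"fully_connected"}


def assign(layer_kind):
    """Pick a target block for one layer kind."""
    return "nna" if layer_kind in NNA_SUPPORTED else "gpu"


def partition(layers):
    """Group consecutive (name, kind) layers that share a target block."""
    return [
        (target, [name for name, _ in group])
        for target, group in groupby(layers, key=lambda layer: assign(layer[1]))
    ]


# Hypothetical model: custom (programmable) layers around two FC layers.
model = [
    ("custom_preproc", "custom"),
    ("fc1", "fully_connected"),
    ("fc2", "fully_connected"),
    ("custom_post", "custom"),
]
plan = partition(model)
for target, names in plan:
    print(target, names)
```

Grouping consecutive layers keeps the number of hand-offs between the two IP blocks low, which is the sort of trade-off any real partitioner would have to weigh.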

Three Dozen Other Microarchitectural Improvements

The A-Series comes with numerous other microarchitectural advancements that are said to benefit the GPU IP.

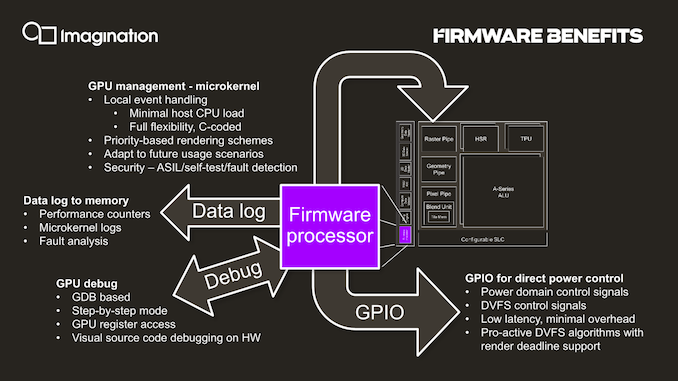

One such existing feature is the integration of a small dedicated CPU (which we understand to be RISC-V based) acting as a firmware processor, handling GPU management tasks that in other architectures might still be handled by drivers on the host system CPU. The firmware processor approach is said to achieve more performant and efficient handling of various housekeeping tasks such as debugging, data logging, GPIO handling and even DVFS algorithms. In contrast, DVFS for Arm Mali GPUs, for example, is still handled by the kernel GPU driver on the host CPUs.
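For readers unfamiliar with what a DVFS algorithm does, here is a minimal sketch of a utilization-driven governor of the kind that could run either in a host driver or on a firmware processor. It is a generic textbook-style step governor and not Imagination's or Arm's algorithm. The frequency table, the thresholds and the `next_freq` and `run` functions are hypothetical values and names chosen for illustration.

```python
# Hypothetical operating points in MHz, lowest to highest.
FREQS_MHZ = [200, 400, 600, 800]


def next_freq(current_mhz, utilization, up=0.85, down=0.40):
    """Step one operating point up or down based on measured utilization."""
    i = FREQS_MHZ.index(current_mhz)
    if utilization > up and i < len(FREQS_MHZ) - 1:
        i += 1  # busy: raise the clock
    elif utilization < down and i > 0:
        i -= 1  # mostly idle: lower the clock to save power
    return FREQS_MHZ[i]


def run(samples, start_mhz=400):
    """Feed a series of utilization samples (0.0 to 1.0) through the governor."""
    freq = start_mhz
    trace = []
    for utilization in samples:
        freq = next_freq(freq, utilization)
        trace.append(freq)
    return trace


trace = run([0.95, 0.90, 0.50, 0.20, 0.10])
print(trace)
```

The appeal of running such a loop on a dedicated firmware core is that it can sample utilization and react without waking or interrupting the host CPU.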

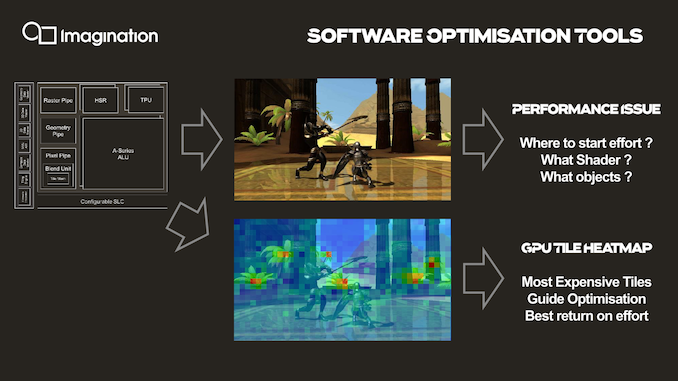

An interesting new development feature that is enabled by profiling the GPU’s hardware counters through the firmware processor is creating tile heatmaps of execution resources used. This seems relatively banal, but isn’t something that’s readily available for software developers and could be extremely useful in terms of quick debugging and optimizations of 3D workloads thanks to a more visual approach.
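Conceptually, a tile heatmap is just per-tile counter data normalized onto a grid. The sketch below shows that idea under stated assumptions: the sample format of `(tile_x, tile_y, cycles)`, the `build_heatmap` and `render` functions and the numbers are all hypothetical, and nothing here reflects Imagination's actual tooling or counter layout.

```python
def build_heatmap(samples, tiles_x, tiles_y):
    """Sum hypothetical per-tile cycle counts and normalize to the 0..1 range."""
    grid = [[0] * tiles_x for _ in range(tiles_y)]
    for x, y, cycles in samples:
        grid[y][x] += cycles
    peak = max(max(row) for row in grid)
    if peak == 0:
        return [[0.0] * tiles_x for _ in range(tiles_y)]
    return [[count / peak for count in row] for row in grid]


def render(heat):
    """Draw the heatmap as text, with denser characters for hotter tiles."""
    shades = " .:*#"
    top = len(shades) - 1
    return "\n".join(
        "".join(shades[min(int(value * len(shades)), top)] for value in row)
        for row in heat
    )


# Hypothetical counter samples for a 3x2 tile grid.
samples = [(0, 0, 100), (1, 0, 400), (1, 0, 400), (2, 1, 200)]
heat = build_heatmap(samples, tiles_x=3, tiles_y=2)
print(render(heat))
```

A real tool would map the normalized values to colors over the rendered frame, but the aggregation step is the same.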

143 Comments

s.yu - Wednesday, December 4, 2019 - link

No, on the contrary it's reason that Cambricon has to let them if they do. Note the recent 251 incident in which the Party went all-out for 3 days censoring everything trying to suppress the incident, but ultimately failed and they pulled back, and now Huawei for once shows its true colors to the people.

ksec - Tuesday, December 3, 2019 - link

As mentioned by the poster below, I think they are likely to be bought rather than doing well. If you look at the High to Mid End Smartphone, they are dominated by Apple, Samsung, Huawei all using their own Silicon or Qualcomm chip. Which leaves the low end with Mediatek.

Now the low end market only cares about cost, so ARM is actually a better choice due to IP bundling listening.

So I am not too optimistic, and it is also worth mentioning. For anyone who was old enough to remember what the Golden Era of GPU, there were always a new design that claims to be better on paper, and what happened?

Drivers - It is the software. The single biggest unmentioned roadblock to GPU computing. How to efficiently use the hardware is the key. S3, Matrox... and lots of others, remember those?

If I reading correctly this design put even more importance to drivers.

adriaaaaan - Tuesday, December 3, 2019 - link

That's definitely true but the Vulkan API actually takes away a lot of the driver aspect from the equation. That's the reason it exists on so many platforms, because it doesn't depend on the os quite so much

mode_13h - Wednesday, December 4, 2019 - link

Huawei uses Mali GPUs, right? So, once they drop ARM CPU cores and go with a Chinese RISC-V core, they're gonna need a different GPU supplier. Hence, Imagination. In fact, had Apple not dropped Imagination, I wonder if Verisilicon wouldn't still be pumping Vivante's IP.

ET - Wednesday, December 4, 2019 - link

Matrox is still around. Not doing anything on the 3D chip front, but still using its own ASICs for 2D, far as I understand. Using AMD for 3D. VIA, who bought S3, are still around, but I don't know if its newer CPUs include custom made GPUs.

> there were always a new design that claims to be better on paper, and what happened?

Most of them were also better in practice. Though most of them had drawback. It was quite an interesting time. APIs were quite a problem then, and the limited amount of stuff one could do in hardware, but it definitely was an era of growth.

mode_13h - Wednesday, December 4, 2019 - link

Well, if you're going to stretch that far, you might as well also cite Intel.

Threska - Wednesday, December 4, 2019 - link

PowerVR (NEC) in a console as well as a PC part.

vladx - Wednesday, December 4, 2019 - link

You just ignored that MediaTek just re-entered the high-end SoC market. Or most likely, if you didn't even bother reading the article.

ph00ny - Monday, December 2, 2019 - link

Everyone keeps saying if they bring it out. How does a fabless GPU designing firm like imagination "bring it out"?

shabby - Monday, December 2, 2019 - link

They use their... imagination 😂