AnandTech Year in Review 2018: GPUs

by Ryan Smith on December 26, 2018 11:00 AM EST

2018 has nearly drawn to a close, and as we’re already gearing up for the event that kicks off 2019 for the industry – the mega-show that is CES – we wanted to spend one last moment going over the highs and lows of the tech industry in 2018. So, whether you’ve been out of the loop for a while and are looking to catch up, or just after a quick summary of the year behind and a glimpse at the year to come, you’re in the right place for the AnandTech Year in Review.

GPU Market: Boom, Bust, & Broken

For better or for worse, by far the leading story in the GPU space for 2018 is the latest rise and fall of the cryptocurrency markets. While the GPU space is by no means a stranger to the market-breaking weirdness that cryptocurrency mining has on GPU prices – we’ve been through this a couple of times now – the latest cycle was the biggest boom and the biggest bust yet. And as a result it’s had immense repercussions on the industry that will play out well into 2019.

While the latest cycle was already well underway before 2018 started, it hit its peak right at the start of the year. Indeed, that’s when the Ethereum cryptocurrency itself – the predominant cryptocurrency for GPUs – hit its all-time record closing price of $1,396/token. As a result, demand for GPUs for the first eight months of the year was unprecedented, which was a boon for some, and a problem for many others.

Ethereum: From Boom To Bust (Ethereumprice.org)

For the better part of three quarters, AMD and NVIDIA could sell virtually every last GPU they produced, with some eager companies going straight to the source to try to secure boards and GPUs to scale up their mining operations. As a result, video card prices spiked – the limited number of cards that even made it to market were just as quickly picked up by miners – which benefitted board vendors and the GPU vendors greatly, but put a major kink in gaming. In fact, affordable gaming cards took the brunt of this demand, as their relatively high price/performance ratios meant that for all the reasons they were good for gaming, they were good for mining as well. When there’s money to be made, a Radeon RX 580 (MSRP: $229) will still find buyers, even at $370.

However, with every boom comes a bust, and the latest runup in the price of Ethereum was no different. After peaking early in the year, the cryptocurrency’s price trended downward for the rest of the year, eating away at the profitability of GPU mining. This was exacerbated by the introduction of dedicated Ethereum ASICs, which, although they required their own fab space and supporting components (e.g. RAM), alleviated some of the demand for video cards for mining. Ultimately, Ethereum dropping below $300/token in August put the latest boom to an end; in the last month prices have dropped below $100/token, and the profitability of mining even with the most efficient video cards has turned negative at times.

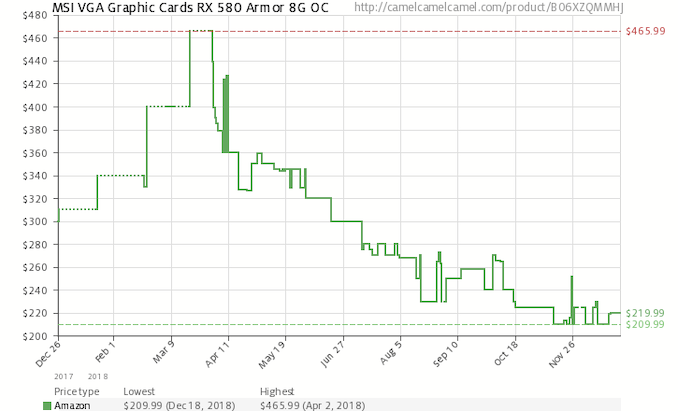

CamelCamelCamel Price History for MSI's Radeon RX 580 Armor 8G

As a result, it’s only been in the last few months that video card prices have come back down to their reasonable, intended prices. Indeed, this year’s Black Friday to Cyber Monday period was especially robust, as RX 580s were going for as little as $169, a far cry from where they were 6 months earlier. The market may not be entirely fixed quite yet, but good video cards are finally affordable again.

With that said, as we’ve since learned from their recent earnings announcements, the break in video card prices isn’t just due to a drop in demand. Both AMD and NVIDIA ramped up their GPU production orders to try to take advantage of this extended boom period, and, judging from the inventory issues they’re now facing, both have been burnt by it. Hungover from the boom in GPU demand and the boost to revenue and profits that came with it, both companies are now in a small slump, having built up sizable stockpiles of GPUs that arrived right as demand tapered off. As a result, both companies are trying to offload their inventory at a controlled pace, selling it in limited batches to avoid flooding the market and crashing video card prices entirely. The cryptocurrency market is all but impossible to predict, and hardware suppliers in particular are caught in a tough spot: do nothing and let cryptocurrency break the market indefinitely, or try to react and risk having excess inventory when the market needs it the least.

But for the moment, with most of the sanity restored to the video card market, there is a silver lining: cheap cards! Along with frequent sales for new cards, the second-hand market is also increasingly filled with video cards from miners and speculators who are offloading their equipment and inventory, even if it comes at a loss. So for buyers who are willing to take a bit more of a chance, right now is a very good time for snagging a late-generation video card for a good price.

GDDR6 Memory Hits The Scene

GPU pricing and market matters aside, 2018 was also an important year on the technology front. After years of planning, GDDR6, the next generation of memory for GPUs and other high-throughput processors, finally reached mass production and hit the market. This is an especially important development for mid-range cards, as these products have never had access to more exotic memory technologies like GDDR5X and HBM.

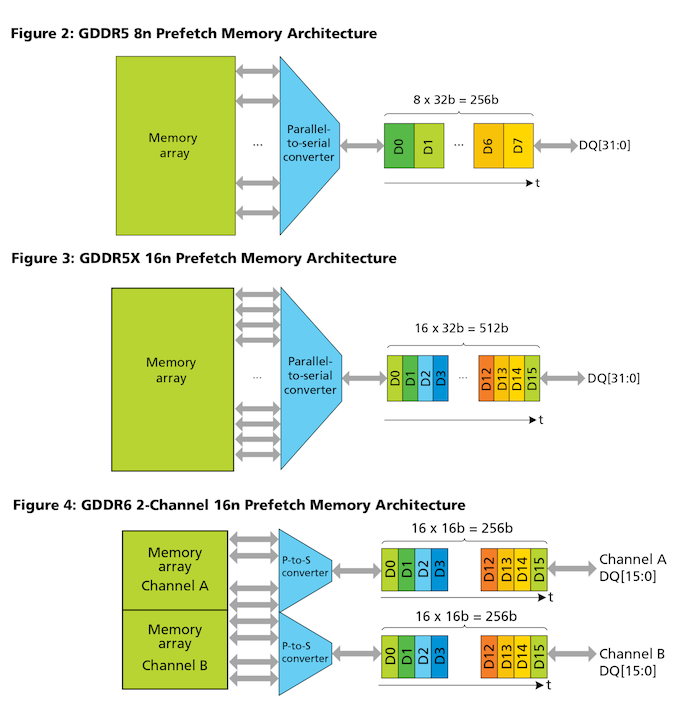

GDDR6 follows in the footsteps of both the long-lived GDDR5 and the more recent GDDR5X. First put into video cards in 2008 – a lifetime ago for the GPU industry – GDDR5 has been the backbone of most video cards over the last decade. It’s been taken to far greater frequencies than JEDEC ever originally planned, with the first cards launching at data rates of 3.6Gbps while the most recent cards have shipped at 8 and 9Gbps. And while GDDR5X tried to pick up where GDDR5 left off in 2016, the lack of adoption outside of Micron meant that it never had the traction required to replace its ubiquitous predecessor.

GDDR6, on the other hand, has no such problems. The Big 3 memory vendors – SK Hynix, Samsung, and Micron – are all producing the memory. And while prices are still high as an early technology (don’t expect GDDR5 to disappear overnight), GDDR6 is primed to become the new backbone of the GPU memory industry.

Overall, GDDR6 introduces a trio of key improvements that vault the memory technology ahead of GDDR5. On the signaling front, it uses Quad Data Rate (QDR) signaling as opposed to Double Data Rate (DDR) signaling. With twice as many signal pumps per clock as before, GDDR6’s memory bus can reach the necessary data rates at lower clockspeeds, making it more efficient and easier to implement than a higher clocked DDR bus, though not without some signal integrity challenges of its own.
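To make the QDR point concrete, here is a minimal Python sketch of the arithmetic, assuming a simplified model in which the per-pin data rate is just the bus clock multiplied by the number of transfers per clock. It ignores how GDDR6 actually distributes its command and data clocks, and the function name `required_clock_ghz` is purely illustrative.

```python
def required_clock_ghz(data_rate_gbps: float, transfers_per_clock: int) -> float:
    """Clock (GHz) a bus needs to reach a given per-pin data rate,
    under the simplified model: data rate = clock x transfers per clock."""
    return data_rate_gbps / transfers_per_clock

# The same 14Gbps per-pin target, signaled two different ways
ddr_clock = required_clock_ghz(14.0, 2)  # DDR: 2 transfers per clock
qdr_clock = required_clock_ghz(14.0, 4)  # QDR: 4 transfers per clock

print(f"DDR needs {ddr_clock:g} GHz, QDR needs {qdr_clock:g} GHz")
```

For a 14Gbps target, the DDR case works out to a 7GHz clock versus 3.5GHz for QDR, which is the halving described above.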

The second big change is to how the memory is organized and how data is prefetched: instead of a chip having a single 32-bit wide channel with an 8n prefetch, GDDR6 divides that into a pair of 16-bit wide channels, each with a 16n prefetch. This effectively doubles the amount of data that is fed to the memory bus on every memory clock cycle, matching the increased bus capacity and allowing for greater data rates out of the memory without increasing the core clock rate of the memory itself.
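The prefetch change can be checked with similar back-of-the-envelope arithmetic. This sketch assumes prefetch depth is simply the ratio between the per-pin data rate and the internal (core) array clock; the helper names are hypothetical, and the model leaves out bank grouping, burst timing, and everything else a real memory controller has to handle.

```python
def bits_per_core_access(channels: int, channel_width_bits: int, prefetch_n: int) -> int:
    """Bits one chip delivers per internal (core) array access."""
    return channels * channel_width_bits * prefetch_n

def core_clock_mhz(data_rate_gbps: float, prefetch_n: int) -> float:
    """Core array clock (MHz) needed to sustain a per-pin data rate,
    assuming data rate = core clock x prefetch depth."""
    return data_rate_gbps * 1000 / prefetch_n

# GDDR5: one 32-bit channel, 8n prefetch
gddr5_bits = bits_per_core_access(channels=1, channel_width_bits=32, prefetch_n=8)
# GDDR6: two 16-bit channels, 16n prefetch each
gddr6_bits = bits_per_core_access(channels=2, channel_width_bits=16, prefetch_n=16)

print(f"Bits per core access: GDDR5 {gddr5_bits}, GDDR6 {gddr6_bits}")
print(f"Core clock for 8Gbps GDDR5: {core_clock_mhz(8.0, 8):g} MHz")
print(f"Core clock for 14Gbps GDDR6: {core_clock_mhz(14.0, 16):g} MHz")
```

Under these assumptions, each GDDR6 core access yields 512 bits versus 256 for GDDR5, and 14Gbps GDDR6 needs an 875MHz core clock, lower than the 1000MHz that 8Gbps GDDR5 requires.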

Finally, GDDR6 once again lowers the memory operating voltage. Whereas GDDR5 typically ran at 1.5V, the standard voltage for GDDR6 is 1.35V. The actual power savings are a bit hard to quantify, since power consumption also depends on the memory controller used, but all told we’re expecting significant increases in bandwidth with minimal increases in power consumption for both GPU vendors – and for network controller manufacturers and other GDDR users, for that matter.
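As a rough illustration of why the voltage drop matters, the usual first-order model for switching power is P ≈ C·V²·f. The sketch below assumes capacitance and frequency stay fixed, which real parts do not guarantee; as noted above, actual savings also depend on the memory controller, so treat the result as an intuition rather than a measured figure.

```python
def relative_dynamic_power(v_new: float, v_old: float) -> float:
    """Ratio of dynamic (switching) power at v_new versus v_old,
    assuming P ~ C * V^2 * f with C and f held constant."""
    return (v_new / v_old) ** 2

# GDDR6 nominal 1.35V versus GDDR5 typical 1.5V
ratio = relative_dynamic_power(1.35, 1.5)
print(f"Switching power ratio: {ratio:.2f}x ({1 - ratio:.0%} lower)")
```

With 1.35V against 1.5V the ratio comes out to about 0.81, i.e. roughly 19% less switching power at equal capacitance and frequency, which helps explain how bandwidth can rise without a matching rise in power.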

GDDR6 is currently readily available in speeds up to 14Gbps, and the standard allows for faster speeds as well. Samsung is already talking about doing 18Gbps memory, and if it ends up as long-lived as its predecessor, GDDR6 will undoubtedly go faster than that still. Meanwhile it will be interesting to see where the line between GDDR6 and HBM2 falls in the next year or two; GDDR6’s speed somewhat undermines the advantages of HBM2, but the latter memory technology recently saw its own bandwidth and capacity boost, so it may still be used as a high-end option, especially in space-constrained products such as socketed accelerators.
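Peak bandwidth follows directly from the per-pin data rate and the width of the memory bus. This minimal sketch assumes a hypothetical 256-bit bus (a width the article does not specify) and compares 8Gbps GDDR5 with the 14Gbps and 18Gbps GDDR6 speeds mentioned above; it reports theoretical peaks only, not achievable throughput.

```python
def peak_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s: per-pin rate x pin count / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8

BUS_WIDTH_BITS = 256  # hypothetical bus width, chosen for illustration only

for label, rate in (("GDDR5", 8.0), ("GDDR6", 14.0), ("GDDR6", 18.0)):
    gbs = peak_bandwidth_gbs(rate, BUS_WIDTH_BITS)
    print(f"{label} @ {rate:g}Gbps, {BUS_WIDTH_BITS}-bit: {gbs:g} GB/s")
```

On that hypothetical bus, the three cases work out to 256, 448, and 576 GB/s respectively.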

35 Comments

Znaak - Thursday, December 27, 2018

I got to play around with Battlefield V on a 2080Ti this Christmas, on a 1440p screen just like I have at home. And while it certainly had the looks when I stood around admiring the scenery, I didn't even notice the improvement when the action was on. So for my own 1440p system I will keep my trusty 1080Ti, which delivers plenty of FPS. I can't justify spending €1500 for an almost unnoticeable increase in rendering quality and a slight drop in framerates. (Or the same rendering quality with an increase in framerate to above what my monitor can handle anyway.)

What I really want is for AMD to release Navi with double-precision compute; 1:2 or even 1:8 would make me happy. The game performance of the current generation is more than sufficient for me, and I won't be gaming at 4K anyway; it really makes no sense in action games. Double-precision compute performance, otoh, will make the professional in me very happy.

ToTTenTranz - Thursday, December 27, 2018

If rumors of a consumer version of Vega 20 come true, you may get a high-end card with DP (Vega2 Frontier Edition?) much sooner than when Navi hits the shelves.

HollyDOL - Thursday, December 27, 2018

To be completely frank, any visual quality in fast-paced games is kind of wasted. You won't notice it or focus on it while sprinting around, and given the scene complexity you'd perform best if the graphics were old-school vector without textures. Otoh, slower-paced games will be able to get a lot of improvement.

Either way, with RT we should be able to gradually increase visual quality with less complex code, so I'm keeping my fingers crossed for RT. We've been waiting way too long for a realistic model, patching things in the meantime with more and more obscure workarounds.

Tech-fan - Thursday, December 27, 2018

How did it feel to be milked by NVIDIA? Did you fall for the "Just Buy" out of Ryan's keyboard? 😉🤣

Znaak - Friday, December 28, 2018

Maybe you should reread my post, because you look silly now ;) I built a gaming PC for a friend over Christmas. He doesn't feel comfortable building it himself and mainly listened to my advice on components, except for the GPU, which gave me the chance to experience what most reviewers have experienced: that feeling of being underwhelmed by the new nVidia cards.

Gastec - Tuesday, February 12, 2019

"Building" computers with the likes of a 1080Ti followed by a 2080Ti. These are people who upgrade to the latest and greatest each generation, and they are obviously wealthy. It's pointless to mock rich people.

FreckledTrout - Thursday, December 27, 2018

My old GTX 1070 plays BFV on ultra at 1440p at 65 FPS on average. Works perfectly for me.

Znaak - Friday, December 28, 2018

Exactly. With a 1080Ti it rarely even drops under 75fps at 1440p Ultra settings, which is great because my monitor has a 75Hz refresh rate.

piiman - Sunday, December 30, 2018

But you get no Rayz

piiman - Sunday, December 30, 2018

"I didn't even notice the improvement when the action was on." This is true for any level of detail; in most cases once the action starts you aren't staring at the scenery anyway. lol. Frankly, even low settings are pretty damn good these days.