The Crucial P1 1TB SSD Review: The Other Consumer QLC SSD

by Billy Tallis on November 8, 2018 9:00 AM EST

Power Management Features

Real-world client storage workloads leave SSDs idle most of the time, so the active power measurements presented earlier in this review only account for a small part of what determines a drive's suitability for battery-powered use. Especially under light use, the power efficiency of an SSD is determined mostly by how well it can save power when idle.

For many NVMe SSDs, the closely related matter of thermal management can also be important. M.2 SSDs can concentrate a lot of power in a very small space. They may also be used in locations with high ambient temperatures and poor cooling, such as tucked under a GPU on a desktop motherboard, or in a poorly-ventilated notebook.

Crucial P1 NVMe Power and Thermal Management Features

Controller: Silicon Motion SM2263
Firmware: P3CR010

| NVMe Version | Feature | Status |
|---|---|---|
| 1.0 | Number of operational (active) power states | 3 |
| 1.1 | Number of non-operational (idle) power states | 2 |
| 1.1 | Autonomous Power State Transition (APST) | Supported |
| 1.2 | Warning Temperature | 70 °C |
| 1.2 | Critical Temperature | 80 °C |
| 1.3 | Host Controlled Thermal Management | Supported |
| 1.3 | Non-Operational Power State Permissive Mode | Not Supported |

The Crucial P1 includes a fairly typical feature set for a consumer NVMe SSD, with two idle states that should both be quick to get in and out of. The three different active power states probably make little difference in practice, because even in our synthetic benchmarks the P1 seldom draws more than 3-4W.

Crucial P1 NVMe Power States

Controller: Silicon Motion SM2263
Firmware: P3CR010

| Power State | Maximum Power | Active/Idle | Entry Latency | Exit Latency |
|---|---|---|---|---|
| PS 0 | 9 W | Active | - | - |
| PS 1 | 4.6 W | Active | - | - |
| PS 2 | 3.8 W | Active | - | - |
| PS 3 | 50 mW | Idle | 1 ms | 1 ms |
| PS 4 | 4 mW | Idle | 6 ms | 8 ms |

Note that the above tables reflect only the information provided by the drive to the OS. The power and latency numbers are often very conservative estimates, but they are what the OS uses to determine which idle states to use and how long to wait before dropping to a deeper idle state.
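As a rough illustration of how an OS might turn those advertised latencies into idle timeouts, here is a minimal sketch. It assumes a simple rule: wait some multiple of a state's round-trip (entry plus exit) latency before dropping into it. The multiplier of 50 is an assumption chosen to resemble the kind of heuristic the Linux NVMe driver is understood to use, and the `IdleState` and `idle_timeouts` names are hypothetical, not part of any real driver API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdleState:
    name: str
    max_power_mw: float
    entry_latency_ms: float
    exit_latency_ms: float


# Idle states exactly as the Crucial P1 advertises them to the OS.
P1_IDLE_STATES = [
    IdleState("PS 3", 50, 1, 1),
    IdleState("PS 4", 4, 6, 8),
]


def idle_timeouts(states, multiplier=50):
    """Map each idle state to the idle time (in ms) to wait before entering it.

    Assumed rule: timeout = multiplier * (entry latency + exit latency).
    The default multiplier is an illustrative assumption, not a spec value.
    """
    return {
        s.name: multiplier * (s.entry_latency_ms + s.exit_latency_ms)
        for s in states
    }


for name, timeout_ms in idle_timeouts(P1_IDLE_STATES).items():
    print(f"{name}: enter after {timeout_ms} ms of idle time")
```

Under this assumed rule the drive would drop to PS 3 after 100 ms of idle time and to PS 4 after 700 ms. A real OS may use a different multiplier or a fixed policy entirely.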

Idle Power Measurement

SATA SSDs are tested with SATA link power management disabled to measure their active idle power draw, and with it enabled for the deeper idle power consumption score and the idle wake-up latency test. Our testbed, like any ordinary desktop system, cannot trigger the deepest DevSleep idle state.

Idle power management for NVMe SSDs is far more complicated than for SATA SSDs. NVMe SSDs can support several different idle power states, and through the Autonomous Power State Transition (APST) feature the operating system can set a drive's policy for when to drop down to a lower power state. There is typically a tradeoff in that lower-power states take longer to enter and wake up from, so the choice of which power states to use may differ between desktops and notebooks.
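The tradeoff can be sketched as a simple filter: given a latency budget, pick the lowest-power idle state whose advertised round-trip latency still fits. The two budgets below (5 ms for a latency-sensitive desktop, 100 ms for a battery-focused notebook) are hypothetical values chosen for illustration, and `deepest_state` is a made-up helper rather than any OS's actual policy code.

```python
# Advertised P1 idle states: (name, max power in mW, entry + exit latency in ms)
IDLE_STATES = [
    ("PS 3", 50, 1 + 1),
    ("PS 4", 4, 6 + 8),
]


def deepest_state(states, latency_budget_ms):
    """Return the name of the lowest-power state that fits the budget, or None."""
    allowed = [s for s in states if s[2] <= latency_budget_ms]
    if not allowed:
        return None
    return min(allowed, key=lambda s: s[1])[0]


# Hypothetical budgets: a tight one for a desktop, a loose one for a notebook.
print("desktop :", deepest_state(IDLE_STATES, latency_budget_ms=5))
print("notebook:", deepest_state(IDLE_STATES, latency_budget_ms=100))
```

With these assumed budgets the desktop policy stops at PS 3, while the notebook policy is allowed to go all the way down to PS 4.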

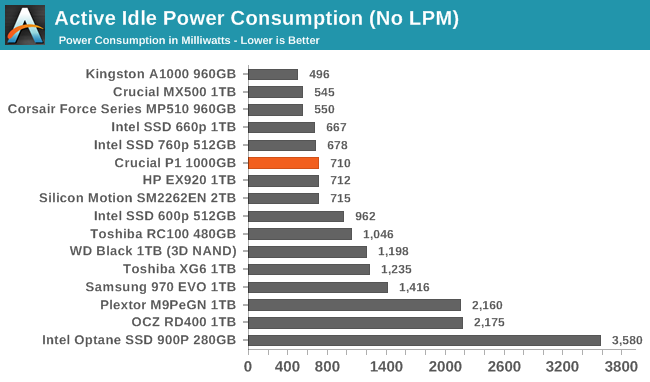

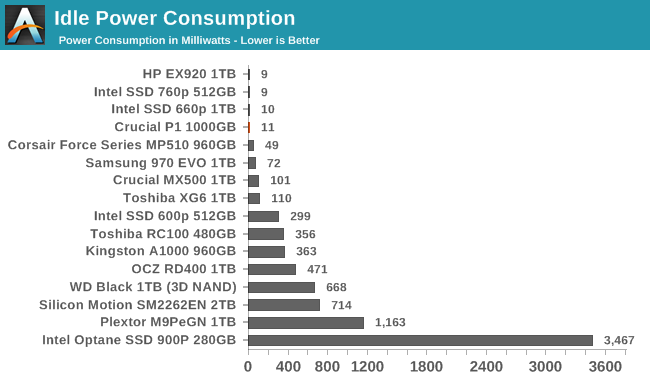

We report two idle power measurements. Active idle is representative of a typical desktop, where none of the advanced PCIe link or NVMe power saving features are enabled and the drive is immediately ready to process new commands. The idle power consumption metric is measured with PCIe Active State Power Management L1.2 state enabled and NVMe APST enabled if supported.

The idle power consumption numbers from the Crucial P1 match the pattern seen with other recent Silicon Motion platforms. The active idle draw is a bit higher for the P1 than the 660p due to the latter having less DRAM, but both do very well when put to sleep.

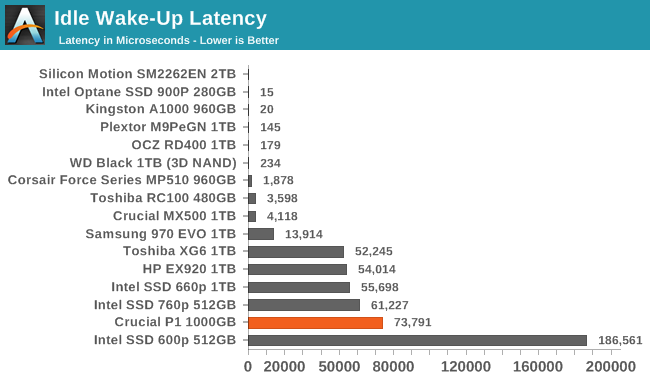

The wake-up latency of over 73ms for the Crucial P1 is fairly high, and definitely much worse than what the drive advertises to the operating system. This could lead to some responsiveness problems if the OS is misled into choosing an overly-aggressive power management strategy.
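To put the mismatch in numbers, the short sketch below compares the measured wake-up latency with what the drive advertises. It assumes the measurement corresponds to waking from PS 4, the deepest idle state, and it uses a hypothetical 25 ms latency budget to show how a policy based on advertised figures could admit a state that the measured behavior would rule out.

```python
ADVERTISED_EXIT_MS = 8        # PS 4 exit latency reported by the drive
ADVERTISED_ROUND_TRIP_MS = 6 + 8  # PS 4 entry + exit latency reported by the drive
MEASURED_WAKE_MS = 73         # lower bound: the measured figure is "over 73ms"
BUDGET_MS = 25                # hypothetical OS latency budget (assumption)


def underestimate_factor(advertised_ms, measured_ms):
    """How many times larger the measured latency is than the advertised one."""
    return measured_ms / advertised_ms


def fits_budget(latency_ms, budget_ms):
    """True if a latency figure is within the allowed budget."""
    return latency_ms <= budget_ms


factor = underestimate_factor(ADVERTISED_EXIT_MS, MEASURED_WAKE_MS)
print(f"Measured wake-up is at least {factor:.1f}x the advertised exit latency")
print("Advertised figures fit budget:", fits_budget(ADVERTISED_ROUND_TRIP_MS, BUDGET_MS))
print("Measured wake-up fits budget: ", fits_budget(MEASURED_WAKE_MS, BUDGET_MS))
```

Under these assumptions the OS would accept PS 4 on paper even though the real wake-up cost is roughly nine times what the drive claims.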

66 Comments


DigitalFreak - Thursday, November 8, 2018

At this rate, by the time they get to H(ex)LC you'll only be able to write 1GB per day to your drive or risk having it fail.

PeachNCream - Thursday, November 8, 2018

Please don't give them any ideas! The last thing we need is NAND that generously handles a few dozen P/E cycles before dying. We've already gone from millions of P/E cycles to a few hundred in the last 15 years and data retention has dropped from over a decade to under six months. Sure you can get a lot more capacity for the price, but NAND needs to be replaced with something more durable sooner rather than later. (And no, I'm not advocating for Optane either, just something that lasts longer and has room for density improvements - don't care what that something is.)

MrCommunistGen - Thursday, November 8, 2018

I was expecting the extra DRAM to provide a more meaningful advantage over the Intel 660p... I guess it makes sense that Intel left it off to save on BOM.

Ratman6161 - Thursday, November 8, 2018

This could be a very good standard desktop drive if 1) the price is right and 2) you can accept that the 1 TB drive is really only good for up to 900 GB. You would just partition the drive such that there is 100 GB free (or make sure you always just keep that much space free) so you always have the maximum SLC cache available. For the price to be right, it has to be lower. Taking the prices from the article, the 1 TB P1 is only $8 cheaper than a 970 EVO. Now if they could get the price down to the same territory as the current MX 500 they might have something.

Billy Tallis - Thursday, November 8, 2018

Leaving 10% of the drive unpartitioned won't be enough to get the maximum size SLC cache, because 1GB of SLC cache requires 4GB of QLC to be used as SLC. However, 10% manual overprovisioning would definitely reduce the already small chances of overflowing the SLC cache.

mczak - Thursday, November 8, 2018

On that note, wouldn't it actually make sense to use a MLC cache instead of a SLC cache for these SSDs using QLC flash (and by MLC of course I mean using 2 bits per cell)? I'd assume you should still be able to get very decent write speeds with that, and it would effectively only need half as much flash for the same cache size.

Billy Tallis - Thursday, November 8, 2018

Cache size isn't really a big enough problem for a 2bpc MLC write cache to be worthwhile. Using SLC for the write cache has several advantages: highest performance/lowest latency, single-pass reads and writes (important for Crucial's power loss immunity features), and your SLC cache can use flash blocks that are too worn out to still reliably store multiple bits per cell. A slower write cache with twice the capacity would only make sense if consumer workloads regularly overflowed the existing write cache. Almost all of the instances where our benchmarks overflow SLC caches are a consequence of our tests giving the drive less idle time than real-world usage, rather than being tests representing use cases where the cache would be expected to overflow even in the real world.

idri - Thursday, November 8, 2018

Why don't you guys include the Samsung 970 PRO 1TB in your charts for comparison? It's one of the most sought after SSDs on the market for HEDT systems and for sure it would be useful to have your tests results for this one too. Thanks.

Billy Tallis - Thursday, November 8, 2018

A.) Samsung didn't send me a 970 PRO. B.) The 970 PRO is pretty far outside the range of what could be considered competition for an entry-level NVMe SSD. It's a drive you buy for bragging rights, not for real-world performance benefits. The Optane SSD is in that same category, and I don't think the graphs for this kind of review need to be cluttered up with too many of those.

PeachNCream - Thursday, November 8, 2018

Not to be obtuse, but by price the 970 PRO is well within the range of competition for the P1 given that the 1TB 970 retails for $228 on Amazon right now and the MSRP for the 1TB P1 is $220. Buyers looking for a product will most certainly consider the $8 difference and factor that into their decision to move up from an entry-level product to a "bragging rights" option given the insignificant difference in cost. Your first point is valid. I would have stopped there since it's reasonable to say, "Physically impossible, don't have one there pal."