The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST - Posted in

- GPUs

- Raytrace

- GeForce

- NVIDIA

- DirectX Raytracing

- Turing

- GeForce RTX

Meet The New Future of Gaming: Different Than The Old One

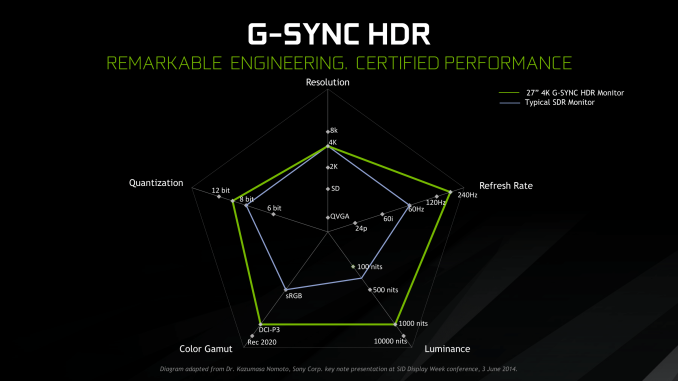

Up until last month, NVIDIA had been pushing a different, more conventional future for gaming and video cards, perhaps best exemplified by their recent launch of 27-inch 4K G-Sync HDR monitors, courtesy of Asus and Acer. The specifications and display represented, and still represent, the aspirational capabilities of PC gaming graphics: 4K resolution, 144 Hz refresh rate with G-Sync variable refresh, and high-quality HDR. The future was maxing out graphics settings on a game with high visual fidelity, enabling HDR, and rendering at 4K with triple-digit average framerates on a large screen. That target was not achievable with current hardware, or at least certainly not with single-GPU cards. In the past, multi-GPU configurations were a stronger option provided that stuttering was not an issue, but recent years have seen both AMD and NVIDIA take a step back from CrossFireX and SLI, respectively.

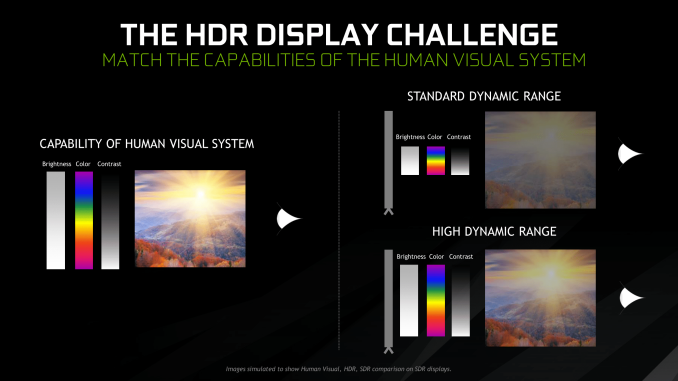

Particularly with HDR, NVIDIA pitched a qualitative rather than quantitative enhancement to the gaming experience. Faster framerates and higher resolutions were better-known quantities, easily demoed and with more intuitive benefits: higher resolution means more room for detail, and higher, more even framerates mean smoother gameplay and video (though in the past 30fps was perceived as cinematic, and 1080p still remains stubbornly popular today). Variable refresh rate technology soon followed, resolving the screen-tearing/V-Sync input lag dilemma, though again it took time to catch on to where it is now: nigh mandatory for a higher-end gaming monitor.

For gaming displays, HDR was substantively different from adding graphical details or allowing smoother gameplay and playback, because it brought a new dimension to gaming: ‘more possible colors’ and ‘brighter whites and darker blacks’. Because HDR capability required support from the entire graphical chain, as well as a high-quality HDR monitor and HDR content to take full advantage of it, it was harder to showcase. Added to the other aspects of high-end gaming graphics, and pending the further development of VR, this was the future on the horizon for GPUs.

But today NVIDIA is switching gears, going after the fundamental way computer graphics are modelled in games. In the more realistic rendering processes, light can be emulated as rays emitted from their respective sources, but computing even a subset of those rays and their interactions (reflection, refraction, etc.) in a bounded space is so intensive that real time rendering was impossible. Instead, to get the performance needed to render in real time, rasterization essentially boils 3D objects down to 2D representations to simplify the computations, significantly faking the behavior of light.
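
To put that intensity in rough numbers, here is a back-of-the-envelope sketch that counts how many rays a naive tracer would need per second. It is an illustration only, not NVIDIA's method: the samples-per-pixel and bounce counts are assumptions picked for the example, and real renderers also cast shadow rays, terminate paths early, and (as with Turing) trace only select effects and then denoise.

```python
# Back-of-the-envelope ray budget for real time ray tracing.
# ASSUMPTIONS (illustrative, not from the review): each sample casts one
# primary ray plus one secondary ray per bounce; shadow rays and early
# path termination are ignored.

def rays_per_second(width: int, height: int, fps: int,
                    samples_per_pixel: int = 1, bounces: int = 0) -> int:
    """Return the number of rays traced per second under the assumptions above."""
    rays_per_sample = 1 + bounces  # primary ray + secondary rays
    return width * height * fps * samples_per_pixel * rays_per_sample


if __name__ == "__main__":
    resolutions = {"1080p": (1920, 1080), "4K": (3840, 2160)}
    for label, (w, h) in resolutions.items():
        primary = rays_per_second(w, h, 60)
        heavier = rays_per_second(w, h, 60, samples_per_pixel=4, bounces=2)
        print(f"{label} @ 60fps: {primary:,} primary rays/s; "
              f"{heavier:,} rays/s with 4 spp and 2 bounces")
```

At 4K and 60fps, a single primary ray per pixel already works out to roughly half a billion rays per second before any reflections or refractions are traced, which is why keeping rasterization for most of the frame is attractive.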

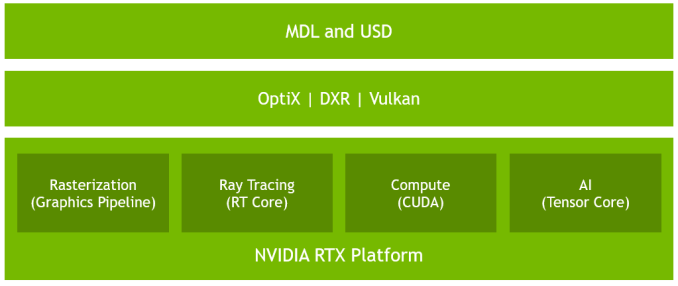

It’s on real time ray tracing that NVIDIA is staking its claim with GeForce RTX and Turing’s RT Cores. Covered more in-depth in our architecture article, NVIDIA’s real time ray tracing implementation takes all the shortcuts it can get, incorporating select real time ray tracing effects with significant denoising but keeping rasterization for everything else. Unfortunately, this hybrid rendering isn’t orthogonal to the previous goals, since it competes with them for the same GPU performance. Now the ultimate experience would be hybrid rendered 4K with HDR support at high, steady, and variable framerates, even though GPUs didn’t have enough performance to reach that point under traditional rasterization alone.

There’s still a performance cost incurred with real time ray tracing effects, except right now only NVIDIA and developers have a clear idea of what it is. What we can say is that utilizing real time ray tracing effects in games may require sacrificing some or all of high resolution, ultra high framerates, and HDR. HDR is limited by game support more than anything else. But the first two arguably have minimum standards when it comes to modern high-end gaming on PC: anything under 1080p is completely unpalatable, and anything under 30fps, or more realistically 45 to 60fps, hurts playability. Variable refresh rate can mitigate the latter, and framedrops are temporary, but low resolution is forever.
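
Those framerate thresholds map directly onto per-frame time budgets, and any ray tracing work has to fit inside the same budget as everything else. A minimal sketch of that arithmetic follows; the resolution table and function names are illustrative choices, not part of any real API.

```python
# Frame-time budgets for common framerate targets, plus the pixel-count
# ratio between resolutions. Ray tracing effects, rasterization, and
# post-processing all have to share the same per-frame budget.

RESOLUTIONS = {"1080p": (1920, 1080), "4K": (3840, 2160)}  # illustrative table


def frame_budget_ms(fps: float) -> float:
    """Milliseconds available to render one frame at the given framerate."""
    return 1000.0 / fps


def pixel_ratio(a: str, b: str) -> float:
    """How many times more pixels resolution `a` has than resolution `b`."""
    (wa, ha), (wb, hb) = RESOLUTIONS[a], RESOLUTIONS[b]
    return (wa * ha) / (wb * hb)


if __name__ == "__main__":
    for fps in (30, 45, 60, 144):
        print(f"{fps:>3} fps -> {frame_budget_ms(fps):.1f} ms per frame")
    print(f"4K has {pixel_ratio('4K', '1080p'):.0f}x the pixels of 1080p")
```

At 60fps there are about 16.7 ms per frame, and at 144 Hz about 6.9 ms, while moving from 1080p to 4K quadruples the number of pixels that must be shaded within that window.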

Ultimately, real time ray tracing support needs to be implemented by developers via a supporting API like DXR, and many have been working hard on doing so, but currently there is no public timeline of application support for real time ray tracing, Tensor Core accelerated AI features, and Turing advanced shading. The list of games with support for Turing features, collectively called the RTX platform, will be available and updated on NVIDIA's site.

337 Comments

milkod2001 - Thursday, September 20, 2018

NV is messing with us. Even with no competition from AMD, those price hikes at such low performance gains are laughable. This generation of new GPUs seems like just a stopgap before NV has something more serious to show next year.

willis936 - Thursday, September 20, 2018

No, they seem like they will be exactly the same as the 1000 series: they are what they are, you pay what they ask, and they will be the only decent option they offer for the next two years.

Maybe if Radeon ever gets their shit together the landscape might look different in 2-3 years, but trust me: for now, expect more of the same.

milkod2001 - Thursday, September 20, 2018

Yeah, we are pretty much getting into an Intel vs AMD scenario, where Intel dominated for years and brought customers overpriced products with very slow performance upgrades. There is hope AMD will at least try to do something about it.

yhselp - Thursday, September 20, 2018

The temperature and noise results are shocking. The results are much closer to what you'd expect from a blower, rather than an open-air cooler. Previous gen OEM solutions do much better than this. What's the reason for this?

milkod2001 - Thursday, September 20, 2018

Chips are much bigger than previous gen.

iwod - Thursday, September 20, 2018

I think we need DLSS and Hybrid Ray Tracing to judge whether it is worth it. At the moment, we could have nearly double the performance of the 1080Ti if we simply had a 7xxmm2 Die of it.

I think the idea Nvidia had is that we have reached the plateau of Graphic Gaming. Imagine what you could do with a 7nm 7xxm2 Die of 2080Ti? Move the 4K Ultra High Quality frame rate from ~60 to 100? That is in 2019; in 2022 at 3nm, double that frame rate from 100 to 200?

The industry as a whole needs to figure out how to extract even more Graphics Quality with fewer transistors and simpler software, while at the same time making 3D design modelling easier. The graphics assets from gaming are now worth 100s to millions. Just the assets, not engine programming, network, 3D interaction etc., nothing to do with code. Just the Graphics. And Hybrid Ray tracing is an attempt to bring higher quality graphics without the ever-increasing cost of engine and graphics designers simulating those effects.

What is interesting is that we will have 8 Core 5Ghz CPU and 7nm GPU next year.

Chawitsch - Thursday, September 20, 2018

Given how much die space is dedicated to the new features, software support will definitely be the key to these cards' success. Otherwise their price is just too high for what they offer today. Buying these cards now is somewhat of a gamble, but nVidia does have excellent relations with developers, so support should come. As someone who would like to have a capable GPU for 100+ FPS gaming at 1440p, especially one that is future proof, I would much rather take my chances with these new cards.

To me the question is this: would it really be worth focusing even more on 4k gaming, when it is still a fairly niche market segment due to monitor prices (especially for ones with low latency for gaming)? Arguably these high end cards are niche too, but when we can already have 4k@60 FPS with maxed graphics settings, other considerations become more important. At any given resolution and feature level, pure performance becomes meaningless after a certain point, at least for gaming. Reaching 100 FPS at 4k definitely has merit in my opinion, but by the time really good 4k monitors take over we'll get there, even with the path nVidia took.

Regarding graphics quality and transistor count, ray tracing should be a win here, if not now then certainly in the future. There are diminishing returns with rasterization as you approach more realistic scenes, and ray tracing makes you jump through fewer hoops if you want to create a correct looking scene.

MadManMark - Thursday, September 20, 2018

"I think the idea Nvidia had is that we have reached the plateau of Graphic Gaming. Imagine what you could do with a 7nm 7xxm2 Die of 2080Ti?"

Yes, but that is probably why they stuck with 12nmFF actually. Note the die size, plus each card has its own GPU, rather than a binned selection from the same GPU (kudos to Nate for also ruminating briefly on this in the text). This means maximizing yield is particularly important, and so begs for a mature, efficient process. TSMC achieved great things with their current 7nm process, no knock on it, but it is still UV-based, and it's long been documented that there are yield challenges with that. IMO Nvidia will wait to hitch their wagons to TSMC's next process (expected next year), EUV-based 7nm+, which is expected to mitigate a lot of these yield concerns.

In other words it will be very interesting to see what the 2180 Ti looks like next year -- yes, I built a lot of assumptions into that sentence ;)

eddman - Thursday, September 20, 2018

Come on, the naming is already set; 1080, 2080, 3080. What the hell is a "2180"?

P.S. OCD

Lolimaster - Saturday, September 22, 2018

That works if you expect graphics to be stagnant, tons of mini effects and polygon count will chunk a current 1080ti to 10fps in 2021.