Assessing Cavium's ThunderX2: The Arm Server Dream Realized At Last

by Johan De Gelas on May 23, 2018 9:00 AM EST

Memory Subsystem: Bandwidth

Measuring the full bandwidth potential of a system with John McCalpin's Stream bandwidth benchmark is getting increasingly difficult on the latest CPUs, as core and memory channel counts have continued to grow. As you can see from the results below, it is not easy to measure bandwidth. The results vary wildly depending on the settings you choose.

Memory: STREAM Bandwidth

| System and binary | Compiler & OS settings | Result |
| --- | --- | --- |
| Cavium ThunderX2, GCC 7.2 binary | -O2 -mcmodel=large -fopenmp -DVERBOSE -fno-PIC; OMP_PROC_BIND=spread | 241 GB/s |
| Cavium ThunderX2, GCC 7.2 binary | -Ofast -fopenmp -static; OMP_PROC_BIND=spread | 157 GB/s |
| Cavium ThunderX2, GCC 7.2 binary | OMP_PROC_BIND not configured | 118 GB/s |
| Intel, ICC binary | -fast -qopenmp -parallel; KMP_AFFINITY=verbose,scatter | 183 GB/s |
| Intel, GCC binary | -Ofast -fopenmp -static; OMP_PROC_BIND=spread | 151 GB/s |
| Intel, GCC binary | -Ofast -fopenmp -static; OMP_PROC_BIND not configured | 150 GB/s |

Theoretically, the ThunderX2 has 33% more bandwidth available than an Intel Xeon, as the SoC has eight memory channels compared to Intel's six. These high bandwidth numbers can only be achieved under very specific conditions and require quite a bit of tuning to avoid reaching out to remote memory. In particular, we have to ensure that threads don't migrate from one socket to the other.
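The 33% figure follows directly from the channel counts. As a rough sketch of the arithmetic, assuming both platforms run DDR4-2666 (as listed in the latency table), a standard 64-bit (8-byte) DDR4 channel, and dual-socket systems, the theoretical peaks can be estimated as follows. The function name and parameters are illustrative only.

```python
def peak_bandwidth_gbs(channels, mega_transfers_per_s, bus_bytes=8, sockets=1):
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes).

    Assumes every channel transfers bus_bytes per transfer at the rated speed;
    real sustained bandwidth is always lower.
    """
    return channels * mega_transfers_per_s * 1e6 * bus_bytes * sockets / 1e9


# Assumption: DDR4-2666 on both systems, two sockets each.
thunderx2 = peak_bandwidth_gbs(channels=8, mega_transfers_per_s=2666, sockets=2)
xeon = peak_bandwidth_gbs(channels=6, mega_transfers_per_s=2666, sockets=2)
advantage_pct = (thunderx2 / xeon - 1) * 100

print(f"ThunderX2 peak: {thunderx2:.1f} GB/s")
print(f"Xeon peak:      {xeon:.1f} GB/s")
print(f"Advantage:      {advantage_pct:.0f}%")
```

Because the memory speed and bus width cancel out, the ratio depends only on the channel count: 8 / 6 is roughly 1.33.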

We first tried to achieve the best results on both architectures. In Intel's case, the ICC compiler always produced better results with some low-level optimizations inside the STREAM loops. For the ThunderX2, we followed Cavium's instructions. Strictly speaking, the results are therefore not comparable, but they should give you an idea of what kind of bandwidth these CPUs can achieve at their respective peaks. To be fair to Intel, with ideal settings (AVX-512) you should be able to achieve 200 GB/s.

Nevertheless, it is clear that the ThunderX2 system can deliver between 15% and 28% more bandwidth to its CPU cores. This works out to 235 GB/sec, or about 120 GB/sec per socket, which in turn is about 3 times more than what the original ThunderX was capable of.
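For readers unfamiliar with how STREAM turns a timed loop into a GB/s figure, the sketch below shows the accounting for its Triad kernel, a[i] = b[i] + scalar * c[i]: two arrays are read and one is written, so each element counts as 24 bytes with 8-byte doubles. This is a single-threaded illustration in Python, not the benchmark itself, and the function names are made up for this example. Interpreter overhead dominates the timing, so the printed number says nothing about the hardware.

```python
import time
from array import array


def triad(n, scalar=3.0):
    """Run one STREAM-style Triad pass and return the result and elapsed time."""
    b = array("d", [1.0]) * n
    c = array("d", [2.0]) * n
    a = array("d", [0.0]) * n
    start = time.perf_counter()
    for i in range(n):
        a[i] = b[i] + scalar * c[i]
    elapsed = time.perf_counter() - start
    return a, elapsed


def triad_bytes(n, word_bytes=8):
    """Bytes counted for Triad: two arrays read plus one array written."""
    return 3 * word_bytes * n


n = 200_000
a, elapsed = triad(n)
gbs = triad_bytes(n) / elapsed / 1e9
print(f"Triad over {n} elements: {gbs:.3f} GB/s (interpreter-bound)")
```

The real benchmark runs the same kind of loop in compiled code across all cores with OpenMP, which is why thread placement settings such as OMP_PROC_BIND change the result so much.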

Memory Subsystem: Latency

While bandwidth measurements are only relevant to a small part of the server market, almost every application is heavily impacted by the latency of the memory subsystem. To that end, we used LMBench to measure cache and memory latency. The numbers we looked at were "Random load latency stride=16 Bytes". Note that we're expressing the L3 cache and DRAM latency in nanoseconds since we don't have accurate L3-cache clockspeed values.

Memory: LMBench Latency

| Mem Hierarchy | Cavium ThunderX DDR4-2133 | Cavium ThunderX2 DDR4-2666 | Intel Skylake 8176 DDR4-2666 |
| --- | --- | --- | --- |
| L1-cache (cycles) | 3 | 4 | 4 |
| L2-cache (cycles) | 40/80 (*) | 8-9 | 12 |
| L3-cache 4-8 MB (ns) | N/A | 27-30 ns | 24-29 ns |
| Memory 384-512 (ns) | 103/206 (*) | 156-157 ns | 89-91 ns |

The L2-cache of the ThunderX2 is accessed with very little latency, and with a single thread running, the L3-cache is competitive with Intel's complex L3 cache. Once we hit the DRAM, however, Intel offers significantly lower latency.

Memory Subsystem: TinyMemBench

To get a deeper understanding of the respective architectures, we also ran the open source TinyMemBench benchmark. The source code was compiled with GCC 7.2 and the optimization level was set to "-O3". The benchmark's testing strategy is described rather well in its manual:

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

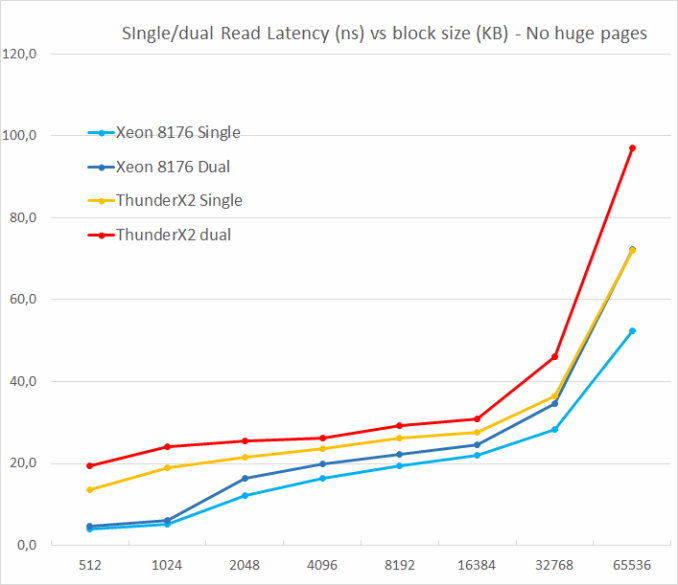

We tested with single and dual random read (no huge pages), as we wanted to see how the memory system coped with multiple read requests.
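To make the single versus dual random read distinction concrete, here is a minimal pointer-chasing sketch. It is not TinyMemBench's code; it only illustrates the general technique such latency tests rely on. Each load depends on the previous one, so a single chain exposes the full miss latency, while two independent chains give the core a chance to overlap two misses. The helper names are hypothetical, and in Python the interpreter overhead hides the real memory latency, so treat it as a description of the access pattern rather than a measurement tool.

```python
import random


def random_cycle(n, seed=0):
    """Sattolo's algorithm: a random permutation forming one cycle of length n.

    Following nxt[p] repeatedly visits every slot exactly once before
    returning to the start, which defeats simple stride prefetching.
    """
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # 0 <= j < i, never i itself
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase_single(nxt, steps):
    """One dependent chain: each load must finish before the next can start."""
    p = 0
    for _ in range(steps):
        p = nxt[p]
    return p


def chase_dual(nxt_a, nxt_b, steps):
    """Two independent chains: the two loads per step can be in flight together."""
    p, q = 0, 0
    for _ in range(steps):
        p = nxt_a[p]
        q = nxt_b[q]
    return p, q


n = 1 << 12
chain_a = random_cycle(n, seed=1)
chain_b = random_cycle(n, seed=2)
print(chase_single(chain_a, n), chase_dual(chain_a, chain_b, n))
```

On a core with a blocking cache, the dual chase would take roughly twice as long per step as the single chase. On a core that supports multiple outstanding misses, the extra cost is much smaller.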

One of the major weaknesses of the original ThunderX was that it did not support multiple outstanding misses. Memory level parallelism is an important feature for any high-performance modern CPU core: it lets cache misses overlap so that they do not starve the wide back end. A non-blocking cache is thus a key feature for wide cores.

The ThunderX2 does not suffer from that problem at all, thanks to its non-blocking cache. Just like the Skylake core in the Xeon 8176, a second read causes the overall latency to increase by only 15-30%, and not 100%. According to TinyMemBench, the Skylake core has tangibly better latencies. The datapoint at 512 KB is of course easy to explain: the Skylake core is still fetching from its fast L2, while the ThunderX2 core has to access its L3. But the numbers at 1 and 2 MB indicate that Intel's prefetchers offer a serious advantage, as the latency is an average of the L2 and L3 cache latencies. Around 8 to 16 MB, the latency numbers are close, but once we go beyond the L3 (64 MB), Intel's Skylake offers lower memory latencies.


Eris_Floralia - Wednesday, May 23, 2018

The L2$ for SKX should be 1MB (256+768KiB), 16-way.

Ryan Smith - Wednesday, May 23, 2018

Right you are. Thanks!

danjme - Wednesday, May 23, 2018

Mental.

Duncan Macdonald - Wednesday, May 23, 2018

The CPU may be much cheaper than the equivalent Intel CPU - however on the price of a complete server there would be almost no difference as the vast majority of the price of a server is in other items (RAM, storage, network, software etc). To take a significant share, the performance needs to be better than Intel CPUs on both a per thread and a per socket basis. Potential users will look at this CPU - see that it is not faster than Intel on a per thread basis and is also not X86-64 compatible and turn away with a shrug. A price difference of under 5% for a complete server is not enough to justify the risks of going from x86-64 to ARM.

BurntMyBacon - Thursday, May 24, 2018

Perhaps you are correct and the lack of per thread performance will not allow Cavium to take a "significant" share of the market from Intel. However, at this point, getting even a small amount of market penetration in the server market is a significant achievement for an ARM vendor. This processor doesn't need to take a "significant" share from Intel to be successful. It just needs to establish a solid foothold. Given the data, I think it has a good chance of succeeding in that.

The bigger question in my mind is how Intel will respond. They already have the ability to make a many lite core accelerator as demonstrated by the Xeon Phi line. Will they bring this tech to their CPU lineup, create a new accelerator based on this tech to handle applications that use many light threads, create a new many small core CPU based on Goldmont Plus (or Tremont), or will they consider the ARM threat insignificant enough to ignore.

boeush - Wednesday, May 23, 2018

"(*) EPYC and Xeon E5 V4 are older results, run on Kernel 4.8 and a slightly older Java 1.8.0_131 instead of 1.8.0_161. Though we expect that the results would be very similar on kernel 4.13 and Java 1.8.0_161"

What about Spectre/Meltdown mitigation patches? Were they in effect for 'older' results?

boeush - Wednesday, May 23, 2018

To elaborate: if those numbers really are from July 2017, then they don't reflect true performance in a server context any longer (servers are where Spectre/Meltdown patches would be applied most.). Since the performance impact of Spectre/Meltdown is greatest on speculative execution and memory loads/prefetching, I'd guess those super-aggressive memory subsystem performance numbers, as well as single thread IPC advantages that Intel's CPUs claim in your benchmarks, are not really entirely applicable any longer.

HStewart - Wednesday, May 23, 2018

Spectre has been proved to effect other CPU's than Intel and even effects ARM and AMD. Image on this article states that this CPU supports Fully Out of Order execution. So with my understanding of Spectre that this CPU also has issues.

To be honest I not sure how much the whole Spectre/Meltdown stuff is in this real world. It probably cause more harm in the computer industry than help.

Manch - Thursday, May 24, 2018

Commentor: Blah Blah Blah Spectre?

HStewart: Shill Shill Shill must defend Intel by any means...

lmcd - Thursday, May 24, 2018

Commentor: reasonable position taken

Manch: *banned for unreasonable, offensive comments*