Assessing Cavium's ThunderX2: The Arm Server Dream Realized At Last

by Johan De Gelas on May 23, 2018 9:00 AM EST

Posted in: CPUs, Arm, Enterprise, SoCs, Enterprise CPUs, ARMv8, Cavium, ThunderX, ThunderX2

Memory Subsystem: Bandwidth

Measuring the full bandwidth potential of a system with John McCalpin's STREAM bandwidth benchmark is getting increasingly difficult on the latest CPUs, as core and memory channel counts have continued to grow. As you can see from the results below, it is not easy to measure bandwidth. The results vary wildly depending on the settings you choose.
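To make clear what a STREAM figure represents, here is a minimal sketch of how a triad-style kernel turns a timing into GB/s. It is not the STREAM source: the array length `N` and the value of `SCALAR` are arbitrary choices for illustration, and in Python the interpreter overhead dominates, so the number printed says nothing about the hardware. The part that carries over is the accounting: each iteration reads two 8-byte values and writes one, so 24 bytes are counted per element.

```python
from array import array
import time

N = 200_000      # illustrative array length, far smaller than a real run
SCALAR = 3.0     # arbitrary multiplier for the triad kernel

# Three arrays of 8-byte doubles.
a = array("d", [0.0]) * N
b = array("d", [1.0]) * N
c = array("d", [2.0]) * N

start = time.perf_counter()
for i in range(N):
    a[i] = b[i] + SCALAR * c[i]   # triad: two loads and one store per element
elapsed = time.perf_counter() - start

# Bytes counted per iteration: read b[i], read c[i], write a[i].
bytes_moved = 3 * a.itemsize * N
print(f"Triad: {bytes_moved / elapsed / 1e9:.3f} GB/s (interpreter-bound)")
```

The real benchmark runs the same kind of loop as compiled code across many threads, which is why compiler flags and thread placement have such a large effect on the result.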

Memory: STREAM Bandwidth

| System and binary | Compiler & OS settings | Result |
| --- | --- | --- |
| Cavium ThunderX2 Gcc 7.2 binary | -O2 -mcmodel=large -fopenmp -DVERBOSE -fno-PIC OMP_PROC_BIND=spread | 241 GB/s |
| Cavium ThunderX2 Gcc 7.2 binary | -Ofast -fopenmp -static OMP_PROC_BIND=spread | 157 GB/s |
| Cavium ThunderX2 Gcc 7.2 binary | OMP_PROC_BIND not configured | 118 GB/s |
| Intel ICC Binary | -fast -qopenmp -parallel KMP_AFFINITY=verbose,scatter | 183 GB/s |
| Intel gcc Binary | -Ofast -fopenmp -static OMP_PROC_BIND=spread | 151 GB/s |
| Intel gcc Binary | -Ofast -fopenmp -static OMP_PROC_BIND not configured | 150 GB/s |

Theoretically, the ThunderX2 has 33% more bandwidth available than an Intel Xeon, as the SoC has eight memory channels compared to Intel's six. These high bandwidth numbers can only be achieved under very specific conditions and require quite a bit of tuning to avoid reaching out to remote memory. In particular, we have to ensure that threads don't migrate from one socket to the other.
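The 33% figure follows directly from the channel counts. As a rough worked example, assuming both systems run DDR4-2666 (as in the latency table in this article) and that each DDR4 channel has a 64-bit data bus, the per-socket peak can be computed as below. The helper name `peak_bandwidth_gbs` is made up for this sketch.

```python
BYTES_PER_TRANSFER = 8  # a DDR4 channel has a 64-bit (8-byte) data bus


def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int) -> float:
    """Theoretical peak bandwidth of one socket in GB/s (1 GB = 10**9 bytes)."""
    return channels * mega_transfers_per_s * 1e6 * BYTES_PER_TRANSFER / 1e9


# Assumption: DDR4-2666 on every channel of both systems.
thunderx2 = peak_bandwidth_gbs(channels=8, mega_transfers_per_s=2666)
xeon = peak_bandwidth_gbs(channels=6, mega_transfers_per_s=2666)

print(f"ThunderX2: {thunderx2:.1f} GB/s per socket")   # 170.6
print(f"Xeon:      {xeon:.1f} GB/s per socket")        # 128.0
print(f"Advantage: {(thunderx2 / xeon - 1) * 100:.0f}%")  # 33
```

Measured STREAM results will land below these peaks, since the theoretical number ignores refresh, bus turnarounds and any traffic to remote memory.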

We first tried to achieve the best results on both architectures. In Intel's case, the ICC compiler always produced better results by applying some low-level optimizations inside the STREAM loops. In Cavium's case, we followed Cavium's instructions. So strictly speaking these results are not comparable, but they should give you an idea of what kind of bandwidth these CPUs can achieve at their respective peaks. To be fair to Intel, with ideal settings (AVX-512) you should be able to achieve 200 GB/s.

Nevertheless, it is clear that the ThunderX2 system can deliver between 15% and 28% more bandwidth to its CPU cores. This works out to 235 GB/sec, or about 120 GB/sec per socket, which in turn is about 3 times more than what the original ThunderX was capable of.

Memory Subsystem: Latency

While bandwidth measurements are only relevant to a small part of the server market, almost every application is heavily impacted by the latency of the memory subsystem. To that end, we used LMBench to measure cache and memory latency. The numbers we looked at were "Random load latency stride=16 Bytes". Note that we're expressing the L3 cache and DRAM latency in nanoseconds since we don't have accurate L3-cache clockspeed values.

Memory: LMBench Latency

| Mem Hierarchy | Cavium ThunderX DDR4-2133 | Cavium ThunderX2 DDR4-2666 | Intel Skylake 8176 DDR4-2666 |
| --- | --- | --- | --- |
| L1-cache (cycles) | 3 | 4 | 4 |
| L2-cache (cycles) | 40/80 (*) | 8-9 | 12 |
| L3-cache 4-8 MB (ns) | N/A | 27-30 ns | 24-29 ns |
| Memory 384-512 MB (ns) | 103/206 (*) | 156-157 ns | 89-91 ns |

The L2-cache of the ThunderX2 is accessed with very little latency, and with a single thread running, the L3-cache is competitive with Intel's complex L3 cache. Once we hit the DRAM however, Intel offers significantly lower latency.
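Random load latency tests of this kind are generally built as a pointer chase: every load address depends on the result of the previous load, so the core cannot overlap the accesses and the time per step approaches the latency of whichever level holds the buffer. The sketch below illustrates the idea only and is not LMBench's code. The names `build_chain` and `chase` are invented for this example, and in Python the interpreter overhead swamps the actual memory latency, so the printed figure is not a hardware measurement.

```python
from __future__ import annotations

import random
import time


def build_chain(n: int, seed: int = 0) -> list[int]:
    """Return a random permutation forming one single cycle (Sattolo's algorithm).

    Following chain[i] repeatedly visits all n slots before returning to the
    start, so no short loop can stay resident in a small cache.
    """
    rng = random.Random(seed)
    chain = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j is strictly below i, which guarantees one cycle
        chain[i], chain[j] = chain[j], chain[i]
    return chain


def chase(chain: list[int], steps: int) -> tuple[int, float]:
    """Follow the chain for `steps` dependent loads; return (last index, ns per step)."""
    idx = 0
    start = time.perf_counter()
    for _ in range(steps):
        idx = chain[idx]  # the next address depends on this load's result
    elapsed = time.perf_counter() - start
    return idx, elapsed / steps * 1e9


chain = build_chain(100_000)
_, ns_per_step = chase(chain, 100_000)
print(f"{ns_per_step:.1f} ns per dependent load (interpreter-bound)")
```

A compiled version of the same loop, run over buffers of increasing size, is what produces the step pattern of L1, L2, L3 and DRAM latencies shown in the table.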

Memory Subsystem: TinyMemBench

To get a deeper understanding of the respective architectures, we also ran the open source TinyMemBench benchmark. The source code was compiled with GCC 7.2 and the optimization level was set to "-O3". The benchmark's testing strategy is described rather well in its manual:

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

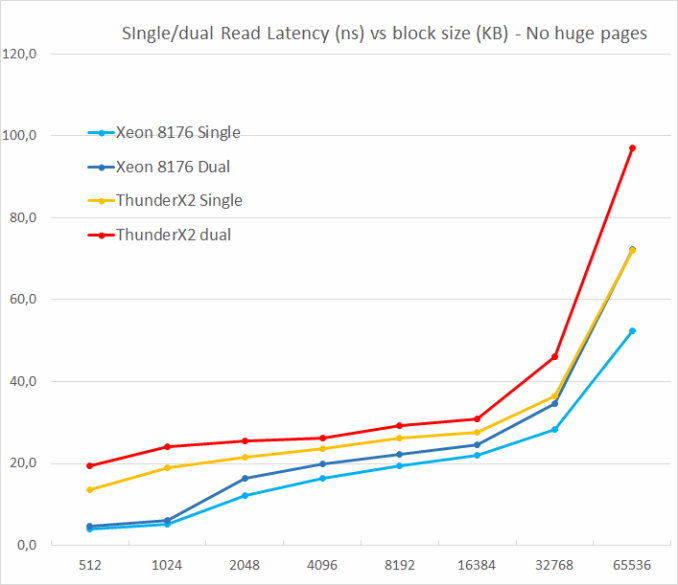

We tested with single and dual random read (no huge pages), as we wanted to see how the memory system coped with multiple read requests.

One of the major weaknesses of the original ThunderX was that it did not support multiple outstanding misses. Memory level parallelism is an important feature for any high-performance modern CPU core: it keeps cache misses from starving the wide back end. A non-blocking cache is thus a key feature for wide cores.

The ThunderX2 does not suffer from that problem at all, thanks to its non-blocking cache. Just like the Skylake core in the Xeon 8176, a second read causes the overall latency to increase by only 15-30%, and not 100%. According to TinyMemBench, the Skylake core has tangibly better latencies. The datapoint at 512 KB is of course easy to explain: the Skylake core is still fetching from its fast L2, while the ThunderX2 core has to access its L3. But the numbers at 1 and 2 MB indicate that Intel's prefetchers offer a serious advantage, as the latency there is an average of the L2 and L3-cache latencies. Around 8 to 16 MB, the latency numbers are close, but once we go beyond the L3 (64 MB), Intel's Skylake offers lower memory latencies.
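The gap between a 100% and a 15-30% increase can be expressed with a toy model. The `overlap` parameter below is an assumption made for illustration and is not something TinyMemBench reports; likewise the 100 ns single-read latency is a hypothetical round number, not a measured value.

```python
def dual_read_latency(single_ns: float, overlap: float) -> float:
    """Time until two independent misses have both completed.

    overlap = 0.0 models a blocking cache (the misses are serialised);
    overlap = 1.0 models perfect overlap (the second miss is free).
    """
    if not 0.0 <= overlap <= 1.0:
        raise ValueError("overlap must be between 0 and 1")
    return single_ns * (2.0 - overlap)


SINGLE_NS = 100.0  # hypothetical single-read latency, not a measured value

for overlap in (0.0, 0.70, 0.85):
    dual = dual_read_latency(SINGLE_NS, overlap)
    increase = (dual / SINGLE_NS - 1.0) * 100.0
    print(f"overlap {overlap:.2f}: {dual:.0f} ns (+{increase:.0f}%)")
```

In this model a blocking cache corresponds to zero overlap and doubles the time, while a 15-30% increase corresponds to the second miss being hidden behind the first for roughly 70-85% of its duration.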

97 Comments

Gunbuster - Wednesday, May 23, 2018 - link

Because it's hard to explain the critical line of business software or database is having some unknown edge case issue because you thought look at me I'm so smart and saved 1% of the project cost using unproven low penetration hardware.

daanno2 - Wednesday, May 23, 2018 - link

I'm guessing you've never dealt with expensive enterprise software before. They are mostly licensed per-core, so getting the absolute best performance per core, even if the CPU is 2-3x more expensive, is worth it. At the end of the day, the CPUs might be <5% of the total cost.

SirPerro - Wednesday, May 23, 2018 - link

You can swallow a big risk if the benefit is 75% of the cost. Hey, it's definitely worth the try.

If your hardware makes up for 5% of the cost, saving a 3% of the total budget is not worth the risk of migration.

FunBunny2 - Thursday, May 24, 2018 - link

"You can swallow a big risk if the benefit is 75% of the cost. Hey, it's definitely worth the try."

the EOL of today's machines, the amortization schedules must be draconian. only if a 'different' server pays off in dozens of months, not years, will it have chance. to the extent that enterprise software is a C/C++ and *nix codebase, porting won't be onerous. but, I'm willing to guess, even Oracle code isn't all that parallel, so throwing a truckload of teeny cpu at it won't necessarily work.

name99 - Thursday, May 24, 2018 - link

The bigger problem here is the massive uncertainty around the meaning of the word "server" and thus the target for these new ARM CPUs.

Some people seem to think "server" means primarily boxes that run SAP or ORACLE, but I think it's clear that the ARM ecosystem has little interest in that, at least right now.

What's of much more interest is racks on racks of CPUs running commodity (LAMP) or homegrown software, ie data warehouses and HPC. I'm not even sure the Java benchmarks being run are of much interest to this market. The things that matter are the sorts of things Cloudflare was measuring when they tested Centriq -- memcached, nginx, transforming one type of data into another (compression/decompression, encrypt/decrypt, transcode,...) at massive throughput.

That's where I'd expect to see the big sales of the ARM "server" cores -- to Cloudflare, Baidu, Google, and so on.

Also now that Marvell is in the game, will be interesting to see the extent to which they pull this downward, into their traditional sorts of markets like infrastructure network and storage control (eg to go into network appliances and NAS boxes).

Ed469546 - Wednesday, June 13, 2018 - link

Some of the commercial software you pay per core. Intel had the best single threaded performance meaning lower license costs.

Interesting question is how the Thunderx2 cores are counted in this case: one core can run 4 threads.

andrewaggb - Wednesday, May 23, 2018 - link

I wonder what workloads they are targeting? High throughput with poor single threaded results is somewhat limiting.

peevee - Wednesday, May 23, 2018 - link

Web app servers. VM servers. Hadoop/Spark nodes. All benefit more from having more threads running in parallel instead of each request waiting or switching contexts.

If you are concerned about single-thread performance on 256-thread server (as 2-CPU server with this CPU will provide) AT ALL, you choose outrageously wrong hardware for the task to begin with. Go buy a 2-core i3. Practically the only test in this article which matters is Critical jOPS (assuming the used quality of service metric was configured realistically).

GeekyMcGeekface - Friday, May 25, 2018 - link

I’m building a cluster now with a few hundred Raspberry Pi’s because scale up is expensive and stupid. By distributing across a pool of clusters, I can handle far more memory bandwidth and compute. Consider 100 Raspberry PIs have 400 64-bit cores and 100GB of RAM. Total cost $3500 + power, mounting and switches.

Running three clusters of those with Kubernetes, Couchbase and Azure Functions provides 1200 64-bit cores, about 100GB of extremely high performance storage, incredible failover and a map-reduce environment to die for.

Add some 64GB MicroSD cards and an object storage system to the cluster and there’s 12TB of cold storage (4TB when made redundant).

Pay a service fee to some sweatshop in the Eastern Block to do the labor intensive bits and you can build a massively parallel, almost impossible to crash, CI/CD friendly, multi-tenant, infinitely scalable PaaS... for less than the cost of the RAM for a single one of the servers here.

The only expensive bits in the design are the Netscalers.

Oh... and the power foot print is about the same as one of these servers.

I honestly have no idea what I would use a server like these in a new design for.

jospoortvliet - Wednesday, May 30, 2018 - link

single-core performance with your pi's is considerably lower, as is inter-core bandwidth. If your tasks require little inter-process communication you're good but with highly interdependent compute it won't perform well. But for specific tasks, yes, it might be very cost effective.